## Screenshot: Task List for Python Debugging Challenge

### Overview

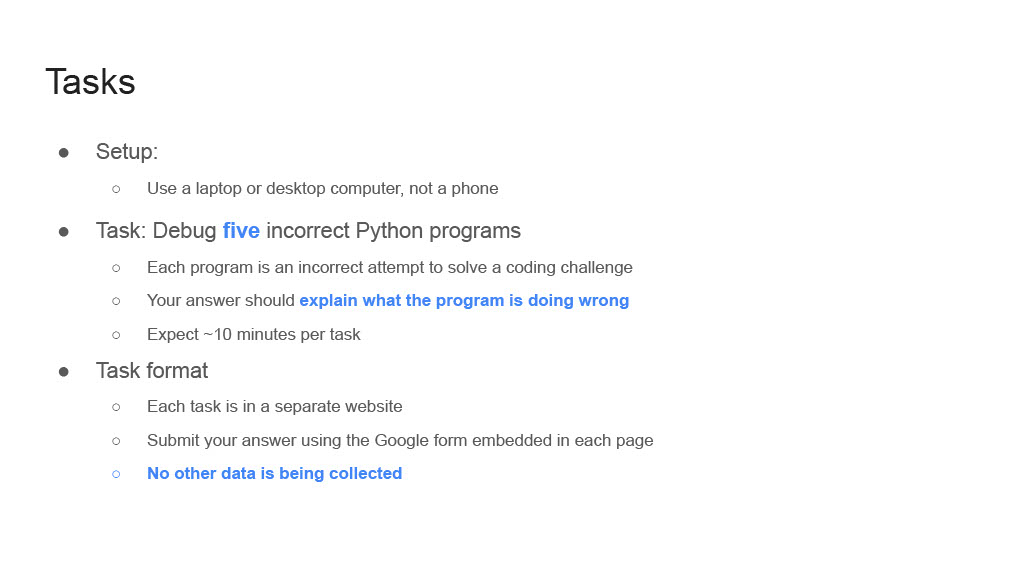

The image displays a structured task list for a Python debugging challenge. It outlines setup requirements, task objectives, and submission guidelines. The text is laid out as hierarchical bullet points, with bold and blue text emphasizing specific instructions.

### Components

- **Headings**:

  - "Tasks" (main title)

  - "Setup"

  - "Task"

  - "Task format"

- **Subpoints**:

  - Circular bullet points (`○`) for nested instructions

  - Bold text for key terms (e.g., "Debug five incorrect Python programs")

  - Blue hyperlinks for critical actions (e.g., "explain what the program is doing wrong")

### Detailed Analysis

1. **Setup**:

   - Requires a laptop or desktop computer (explicitly excludes phones).

   - No additional technical specifications provided.

2. **Task**:

   - Objective: Debug **five** incorrect Python programs.

   - Each program represents an incorrect attempt to solve a coding challenge.

   - Answers must **explain what the program is doing wrong** (hyperlinked text).

   - Time expectation: ~10 minutes per task.

3. **Task format**:

   - Each task is hosted on a **separate website**.

   - Answers must be submitted via a **Google form embedded in each page**.

   - Explicit statement: "**No other data is being collected**" (hyperlinked).

### Key Observations

- **Emphasis on Explanation**: The task prioritizes understanding over rote correction, requiring users to articulate errors.

- **Time Expectation**: The ~10 minutes per task is presented as an expectation rather than a hard limit; across five tasks it amounts to roughly 50 minutes in total.

- **Data Privacy Note**: The explicit mention of no additional data collection may address user concerns about privacy or compliance.

### Interpretation

This task list appears to be part of a structured assessment, study, or training module on Python debugging. Requiring participants to explain errors, rather than just fix them, suggests the goal is to gauge how well they understand each bug. Answers are submitted through a Google form embedded in each task page, and the statement that no other data is being collected indicates that only the form responses are recorded. The laptop-or-desktop requirement likely reflects the practical difficulty of reading and reasoning about code on a phone screen. The ~10-minute figure per task reads as an estimate; the screenshot does not indicate whether it is enforced.
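
To make the "explain what the program is doing wrong" requirement concrete, the following is a minimal, hypothetical sketch of what one task and its answer might look like. None of the five programs is visible in the screenshot, so the function names (`buggy_max`, `fixed_max`), the bug, and the wording of the explanation are all invented for illustration.

```python
# Hypothetical example only: the actual challenge programs are not shown
# in the screenshot. This illustrates the kind of answer the task asks for.

def buggy_max(values):
    """Incorrect attempt at returning the largest value in a non-empty list."""
    largest = 0  # Bug: assumes at least one value is >= 0.
    for v in values:
        if v > largest:
            largest = v
    return largest


def fixed_max(values):
    """Corrected version: start from the first element, not from 0."""
    largest = values[0]
    for v in values[1:]:
        if v > largest:
            largest = v
    return largest


# An answer in the spirit of the task explains the error, not just the fix.
EXPLANATION = (
    "The program initializes the running maximum to 0, so for a list of "
    "only negative numbers no element is ever greater than it and the "
    "function returns 0, a value that is not in the list."
)

if __name__ == "__main__":
    sample = [-3, -1, -7]
    print(buggy_max(sample))  # 0 (wrong: 0 is not in the list)
    print(fixed_max(sample))  # -1
    print(EXPLANATION)
```

The explanation names the faulty assumption and the input that exposes it, which is what distinguishes it from simply submitting corrected code.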