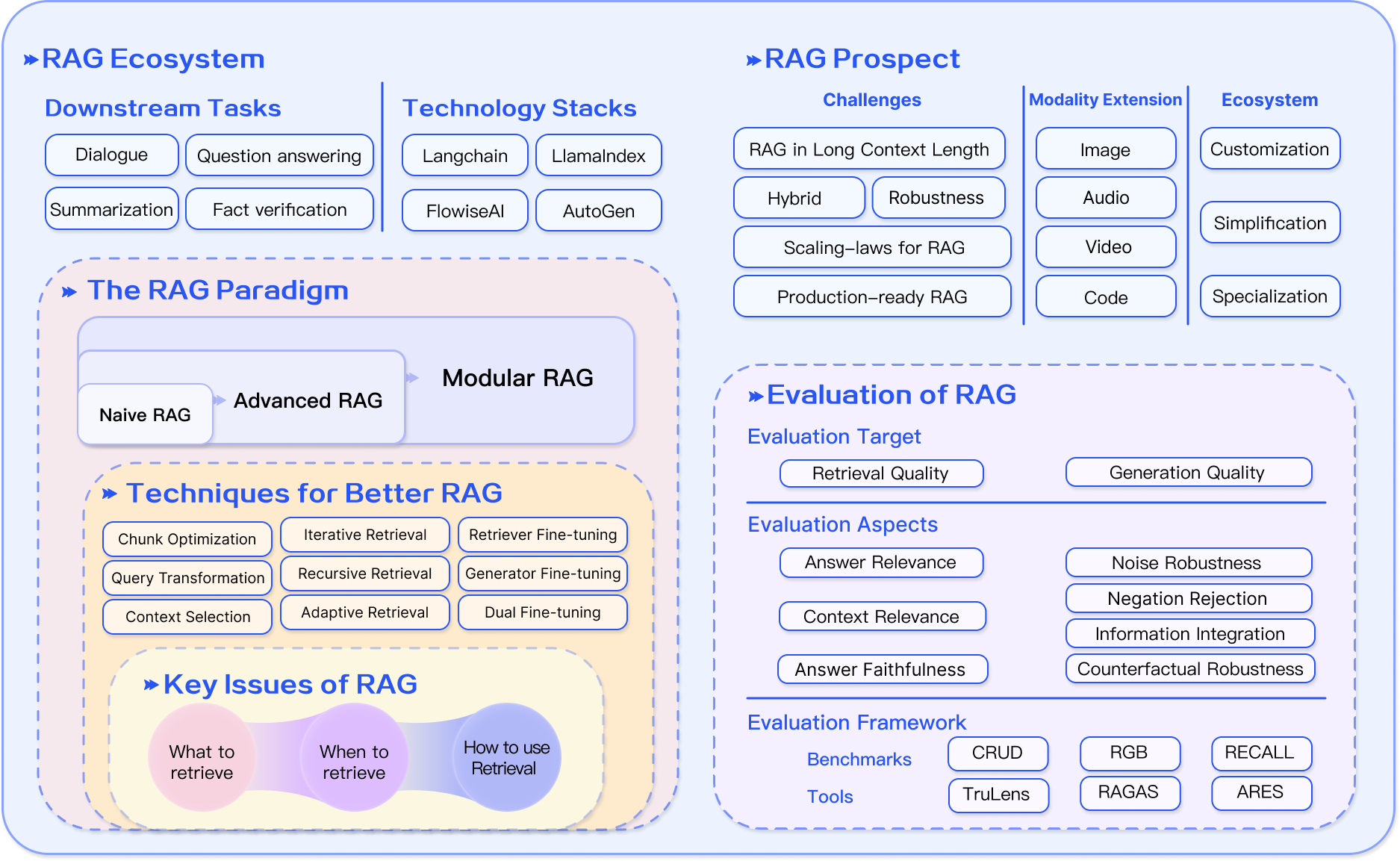

# Technical Document Extraction: RAG (Retrieval-Augmented Generation) Overview

This document transcribes the content of the infographic above, which summarizes the Retrieval-Augmented Generation (RAG) ecosystem, paradigm, prospects, and evaluation.

---

## 1. RAG Ecosystem (Top Left Section)

This section categorizes the practical applications and the software infrastructure supporting RAG.

### Downstream Tasks

* **Dialogue**: Interactive conversational agents.

* **Question answering**: Providing direct answers to user queries.

* **Summarization**: Condensing long documents into shorter versions.

* **Fact verification**: Validating the truthfulness of claims against a knowledge base.

### Technology Stacks

* **LangChain**: Framework for developing applications powered by language models.

* **LlamaIndex**: Data framework for LLM applications to connect custom data sources.

* **FlowiseAI**: Low-code tool for building customized LLM flows.

* **AutoGen**: Framework for enabling LLM workflows using multiple agents.

---

## 2. The RAG Paradigm (Middle Left Section)

This nested section describes the evolution and technical components of RAG systems.

### Evolution of RAG

The diagram shows a progression (indicated by arrows) within a "Modular RAG" container:

1. **Naive RAG**: The foundational approach.

2. **Advanced RAG**: An evolution of the naive approach with more sophisticated processing.

3. **Modular RAG**: The overarching framework that encompasses the previous two, suggesting a plug-and-play architecture.
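To make the "Naive RAG" stage concrete, the following is a minimal sketch of an index, retrieve, and prompt-building loop. The infographic does not specify any implementation: the bag-of-words cosine scoring, the function names (`build_index`, `retrieve`, `build_prompt`), and the toy corpus are all assumptions made for illustration. A real system would typically use an embedding model for retrieval and send the final prompt to an LLM, which is omitted here.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase and split on non-alphanumeric characters."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(docs):
    """Indexing step: store a term-frequency vector per document."""
    return [(doc, Counter(tokenize(doc))) for doc in docs]


def cosine(a, b):
    """Cosine similarity between two sparse term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(index, query, k=2):
    """Retrieval step: return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def build_prompt(query, contexts):
    """Generation step, input side: place retrieved context into a prompt.

    A real pipeline would pass this prompt to an LLM; that call is omitted.
    """
    joined = "\n".join(f"- {c}" for c in contexts)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{joined}\n"
        f"Question: {query}"
    )


# Hypothetical toy corpus, for illustration only.
docs = [
    "RAG combines a retriever with a generator.",
    "Chunking splits documents into smaller passages.",
    "Bananas are rich in potassium.",
]
index = build_index(docs)
question = "What does a retriever do in RAG?"
top = retrieve(index, question, k=1)
prompt = build_prompt(question, top)
```

Under this reading, Advanced RAG adds processing before and after the `retrieve` call, and Modular RAG treats each function as a replaceable component.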

### Techniques for Better RAG

A list of specific technical optimizations:

* **Chunk Optimization**: Refining how data is split for indexing.

* **Query Transformation**: Modifying user queries to improve retrieval.

* **Context Selection**: Filtering retrieved information for relevance.

* **Iterative Retrieval**: Performing multiple rounds of retrieval.

* **Recursive Retrieval**: Drilling down into data structures for better context.

* **Adaptive Retrieval**: Dynamically adjusting retrieval strategies.

* **Retriever Fine-tuning**: Training the retrieval model on specific data.

* **Generator Fine-tuning**: Training the LLM to better utilize retrieved context.

* **Dual Fine-tuning**: Optimizing both the retriever and the generator simultaneously.
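As one illustration of the first technique, chunk optimization, the sketch below splits text into fixed-size word windows that overlap, so that a sentence cut at a boundary still appears intact in at least one chunk. The infographic names the technique but not a method: the word-based window, the `chunk_words` name, and the default sizes are assumptions, and production systems often split on tokens, sentences, or document structure instead.

```python
def chunk_words(text, chunk_size=50, overlap=10):
    """Split text into word-based chunks; consecutive chunks share `overlap` words."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks


# Hypothetical 12-word sample: w0 w1 ... w11
sample = " ".join(f"w{i}" for i in range(12))
chunks = chunk_words(sample, chunk_size=5, overlap=2)
```

Tuning `chunk_size` and `overlap` trades retrieval precision (small chunks) against context completeness (large chunks), which is the kind of refinement the term refers to.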

### Key Issues of RAG

Represented as three interconnected nodes:

1. **What to retrieve**: Identifying the correct data sources and segments.

2. **When to retrieve**: Determining the optimal timing for retrieval in a workflow.

3. **How to use Retrieval**: Integrating the retrieved information into the generation process.
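The three questions can be read as three decision points in code. The sketch below is one hypothetical way to wire them together: `should_retrieve` answers "when" with a simple confidence threshold on a draft answer's token probabilities, the `retriever` callable answers "what", and the returned context list is what a later generation step would use. The threshold heuristic, all names, and the sample values are assumptions for illustration, not something the infographic prescribes.

```python
def should_retrieve(token_probs, threshold=0.6):
    """WHEN: retrieve if any drafted token falls below the confidence threshold."""
    return any(p < threshold for p in token_probs)


def plan_answer(query, draft, token_probs, retriever, threshold=0.6):
    """Keep the draft when the model looks confident; otherwise fetch context.

    `retriever` is any callable mapping a query string to a list of passages
    (WHAT to retrieve). The returned context is meant to be inserted into the
    prompt of a follow-up generation call (HOW to use it), omitted here.
    """
    if not should_retrieve(token_probs, threshold):
        return {"retrieved": False, "context": [], "draft": draft}
    return {"retrieved": True, "context": retriever(query), "draft": draft}


def fake_retriever(query):
    """Hypothetical stand-in for a real retriever."""
    return [f"passage about: {query}"]


confident = plan_answer("2 + 2?", "4", [0.99, 0.97], fake_retriever)
uncertain = plan_answer("Who won in 1987?", "Maybe X", [0.9, 0.3], fake_retriever)
```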

---

## 3. RAG Prospect (Top Right Section)

This section outlines the future directions and current hurdles in the field.

### Challenges

* **RAG in Long Context Length**: Handling very large input windows.

* **Hybrid**: Combining RAG with other approaches, such as fine-tuning.

* **Robustness**: Ensuring consistent performance across various inputs.

* **Scaling-laws for RAG**: Understanding how performance scales with data and model size.

* **Production-ready RAG**: Moving from experimental setups to stable, scalable deployments.

### Modality Extension

Extending RAG beyond text to include:

* **Image**

* **Audio**

* **Video**

* **Code**

### Ecosystem (Future Trends)

* **Customization**: Tailoring RAG to specific user needs.

* **Simplification**: Making RAG systems easier to build and deploy.

* **Specialization**: Developing RAG for niche domains or specific industries.

---

## 4. Evaluation of RAG (Bottom Right Section)

This section details how RAG systems are measured and validated.

### Evaluation Target

* **Retrieval Quality**: How well the system finds relevant information.

* **Generation Quality**: How well the system produces the final output.
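Retrieval quality is often quantified with standard information-retrieval metrics. The infographic does not name specific metrics, so the choice of hit rate at k and mean reciprocal rank (MRR) below is an assumption, as are the function names and the toy document IDs.

```python
def hit_rate_at_k(ranked_ids, relevant_ids, k):
    """1.0 if any relevant document appears in the top k results, else 0.0."""
    return 1.0 if any(doc_id in relevant_ids for doc_id in ranked_ids[:k]) else 0.0


def reciprocal_rank(ranked_ids, relevant_ids):
    """1 / rank of the first relevant document, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(ranked_ids, start=1):
        if doc_id in relevant_ids:
            return 1.0 / rank
    return 0.0


def mean(values):
    """Average over queries; 0.0 for an empty evaluation set."""
    return sum(values) / len(values) if values else 0.0


# Hypothetical evaluation set: (ranked retrieval result, set of relevant IDs).
runs = [
    (["d3", "d1", "d7"], {"d1"}),  # first relevant document at rank 2
    (["d2", "d5", "d9"], {"d2"}),  # first relevant document at rank 1
    (["d4", "d6", "d8"], {"d0"}),  # no relevant document retrieved
]
mrr = mean([reciprocal_rank(ranked, rel) for ranked, rel in runs])
hit_at_1 = mean([hit_rate_at_k(ranked, rel, 1) for ranked, rel in runs])
hit_at_3 = mean([hit_rate_at_k(ranked, rel, 3) for ranked, rel in runs])
```

Generation quality usually needs a different kind of measurement, such as human or model-based judgments of the final answer, and is not covered by this sketch.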

### Evaluation Aspects

* **Answer Relevance**: Does the answer address the query?

* **Context Relevance**: Is the retrieved context actually useful?

* **Answer Faithfulness**: Is the answer grounded in the retrieved context (avoiding hallucinations)?

* **Noise Robustness**: Can the system handle irrelevant information in the context?

* **Negative Rejection**: Can the system decline to answer when the retrieved context does not contain the required information?

* **Information Integration**: Can the system synthesize information from multiple sources?

* **Counterfactual Robustness**: Can the system recognize and handle false or misleading information in the retrieved context?
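Some of these aspects can be approximated with simple counting once a test set is built for them. As a rough sketch of the rejection aspect (whether the system declines to answer when the context lacks the information), the code below computes the share of responses that contain a refusal phrase. It assumes every response comes from a test case whose retrieved context deliberately omits the answer; the marker phrases, function names, and sample responses are hypothetical, and string matching is only a crude proxy for a human or model-based judgment.

```python
# Hypothetical refusal phrases; a real evaluation would define its own.
REFUSAL_MARKERS = ("i don't know", "cannot answer", "insufficient information")


def is_refusal(response):
    """True if the response contains any refusal marker (case-insensitive)."""
    text = response.lower()
    return any(marker in text for marker in REFUSAL_MARKERS)


def rejection_rate(responses):
    """Share of responses that decline to answer.

    Assumes the answer was absent from the context for every response,
    so a refusal is the desired behavior in each case.
    """
    if not responses:
        return 0.0
    return sum(is_refusal(r) for r in responses) / len(responses)


# Hypothetical model outputs on unanswerable test cases.
responses = [
    "I don't know based on the provided documents.",
    "The capital is Paris.",
    "I cannot answer: insufficient information in the context.",
    "It was released in 2019.",
]
rate = rejection_rate(responses)
```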

### Evaluation Framework

Categorized into Benchmarks and Tools:

* **Benchmarks**:

  * **CRUD**

  * **RGB**

  * **RECALL**

* **Tools**:

  * **TruLens**

  * **RAGAS**

  * **ARES**