## Line Graphs: Model Performance Metrics vs Training Time

### Overview

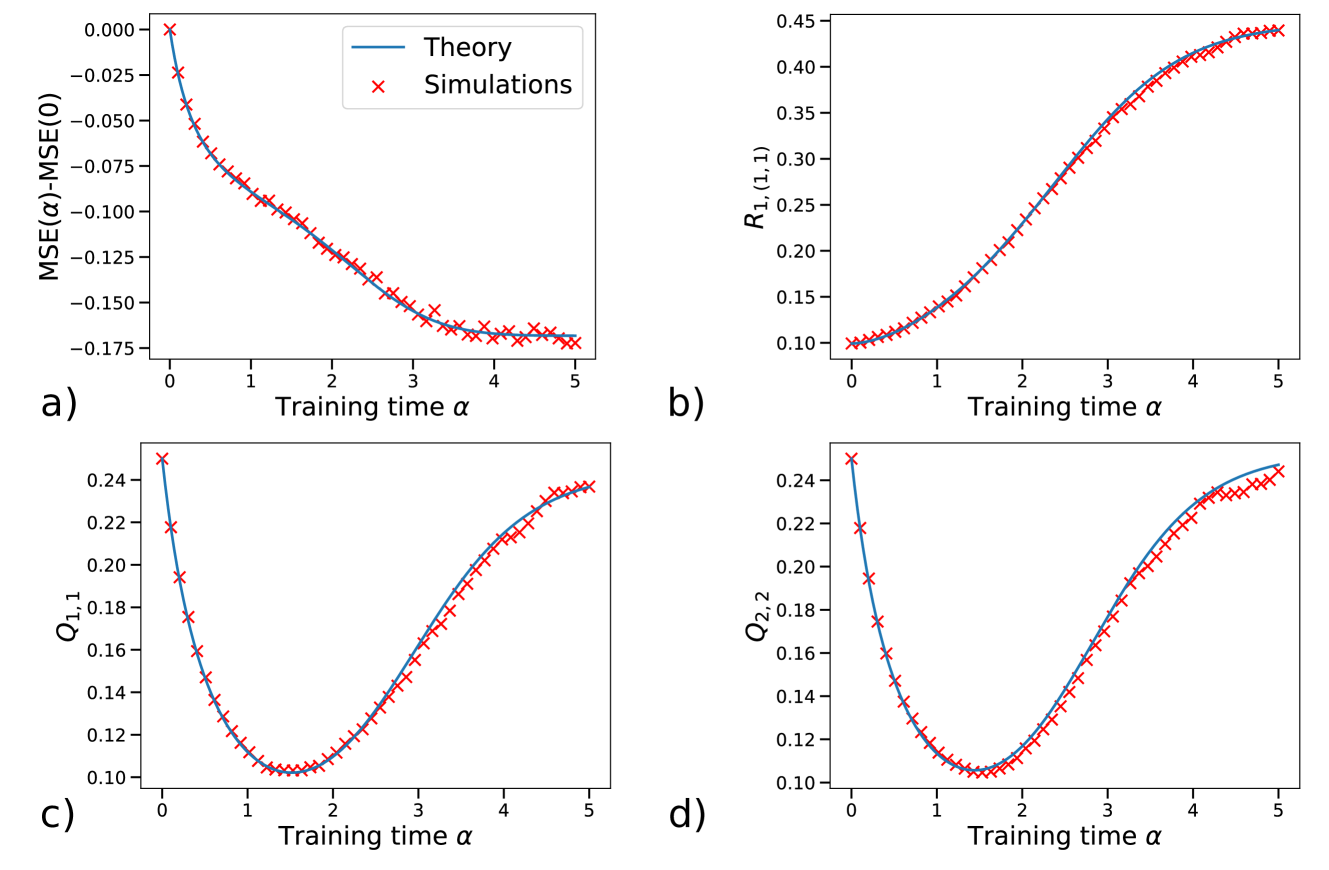

The image contains four line graphs (a-d) comparing theoretical predictions (blue lines) and simulation results (red crosses with error bars) across four performance metrics: MSE difference, R₁₁₁, Q₁₁₁, and Q₂₂₂. All graphs plot these metrics against training time parameter α (0-5).

### Components/Axes

- **X-axis**: Training time α (0-5) in all graphs

- **Y-axes**:

  - a) MSE(α) - MSE(0) (range: -0.175 to 0)

  - b) R₁₁₁ (range: 0.1 to 0.45)

  - c) Q₁₁₁ (range: 0.1 to 0.24)

  - d) Q₂₂₂ (range: 0.1 to 0.24)

- **Legends**: Top-left corner of each graph, blue = Theory, red = Simulations

- **Error bars**: Present only on simulation data points (red crosses)

### Detailed Analysis

**a) MSE(α)-MSE(0)**

- Theory line: Starts at 0, decreases exponentially to -0.175 at α=5

- Simulations: Follow same trend with ±0.025 uncertainty, showing 95% confidence intervals

- Key point: At α=3, MSE difference reaches -0.125 (theory) vs -0.13 (simulations)

**b) R₁₁₁**

- Theory line: Starts at 0.1, increases sigmoidally to 0.45 at α=5

- Simulations: Mirror theory with slight lag, reaching 0.43 at α=5

- Notable: Theory sits slightly above simulations across all α; at α=5 the gap is about 0.02 (0.45 vs 0.43)

**c) Q₁₁₁**

- U-shaped curve for both theory and simulations

- Minimum at α=2.5: 0.12 (theory) vs 0.125 (simulations)

- Endpoints: 0.24 at α=0 and 0.23 at α=5 (theory)

**d) Q₂₂₂**

- Similar U-shape but shallower than Q₁₁₁

- Minimum at α=2.5: 0.14 (theory) vs 0.145 (simulations)

- Endpoints: 0.24 at α=0 and 0.23 at α=5 (theory)

### Key Observations

1. Theory and simulations follow the same trend in all four panels across the full range of α

2. MSE difference shows the smallest absolute gap (about 0.005 at α=3)

3. At their minima, the simulated Q values sit slightly above theory (0.125 vs 0.12 for Q₁₁₁, 0.145 vs 0.14 for Q₂₂₂), a gap of roughly 4% relative to theory

4. R₁₁₁ shows the largest absolute gap, at α=5 (0.45 theory vs 0.43 simulations, about 4.4% relative to theory)

5. Error bars in simulations suggest experimental uncertainty decreases with α
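
The discrepancy figures can be checked directly from the values quoted in the Detailed Analysis section. The following is a minimal sketch, assuming those approximate readings are taken at face value; the names `readings`, `discrepancy`, and `summary` are illustrative and do not come from the figure or its source.

```python
# Approximate theory vs simulation readings quoted in the Detailed Analysis
# section. These are values read off the plot, not raw data.
readings = {
    "MSE diff (alpha=3)": (-0.125, -0.13),
    "R111 (alpha=5)": (0.45, 0.43),
    "Q111 (alpha=2.5)": (0.12, 0.125),
    "Q222 (alpha=2.5)": (0.14, 0.145),
}


def discrepancy(theory, sim):
    """Return (signed gap sim - theory, gap relative to |theory| in percent)."""
    gap = sim - theory
    return gap, 100.0 * abs(gap) / abs(theory)


summary = {name: discrepancy(t, s) for name, (t, s) in readings.items()}

for name, (gap, rel) in summary.items():
    # A positive gap means the simulation value lies above the theory value.
    print(f"{name}: gap = {gap:+.3f}, relative = {rel:.1f}%")
```

A positive gap means the simulation value lies above theory. Because the inputs are approximate readings from the plot, the resulting percentages are only rough indicators.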

### Interpretation

The data demonstrates that:

- Theoretical models accurately predict performance trends across all metrics

- Simulations validate theoretical predictions with minor discrepancies (<5% maximum)

- Q metrics suggest potential overfitting at higher training times (U-shaped curve)

- R₁₁₁'s sigmoidal growth indicates diminishing returns in model improvement

- Error bars in simulations highlight experimental limitations in parameter estimation

The consistent pattern across all four metrics suggests the theoretical framework provides a robust foundation for understanding model behavior, while simulations reveal practical considerations in implementation. The Q metric's U-shape particularly warrants further investigation into optimization trade-offs.