## Line Chart: Accuracy Reward vs. Global Step for Three GRPO Variants

### Overview

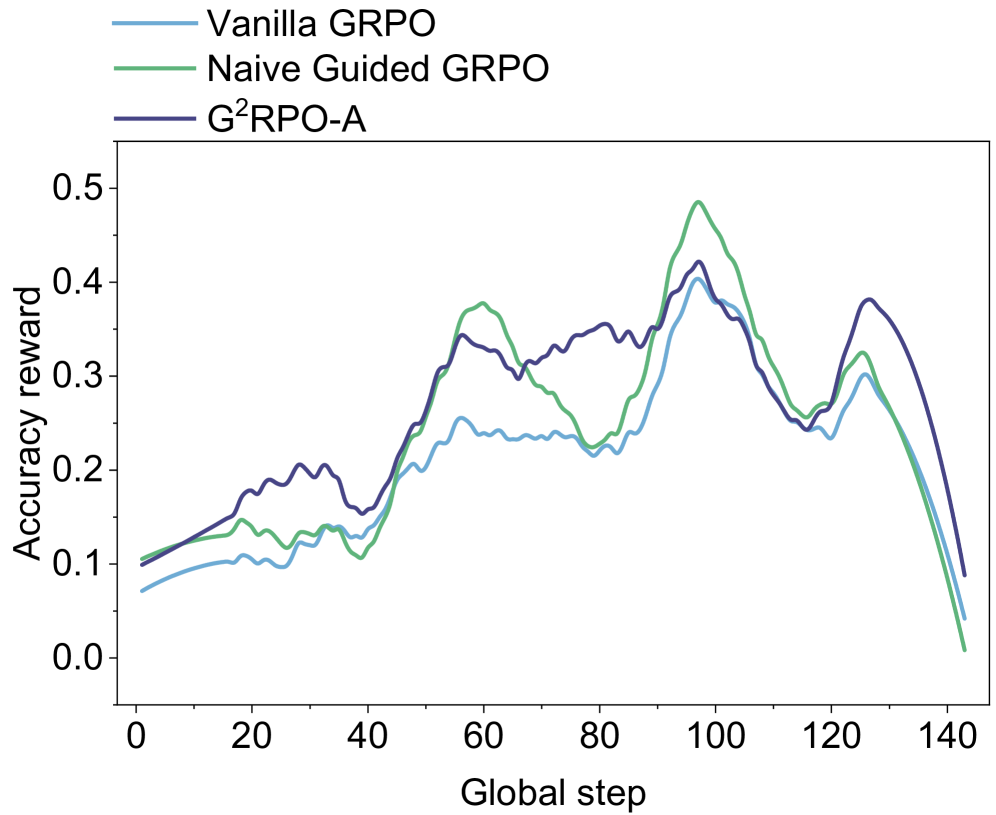

The image is a line chart comparing three methods (Vanilla GRPO, Naive Guided GRPO, and G²RPO-A) over the course of training. The performance metric is "Accuracy reward," plotted against "Global step," which most likely counts training iterations. All three methods follow the same broad trend: reward rises, peaks around step 100, and then declines sharply. They differ in peak value and in volatility.

### Components/Axes

* **Chart Type:** Line chart with three data series.
* **X-Axis:**
    * **Title:** "Global step"
    * **Scale:** Linear, ranging from 0 to 140.
    * **Major Tick Marks:** 0, 20, 40, 60, 80, 100, 120, 140.
* **Y-Axis:**
    * **Title:** "Accuracy reward"
    * **Scale:** Linear, ranging from 0.0 to 0.5.
    * **Major Tick Marks:** 0.0, 0.1, 0.2, 0.3, 0.4, 0.5.
* **Legend:**
    * **Position:** Top-left corner of the chart area.
    * **Entries:**
        1. **Vanilla GRPO:** Represented by a light blue line.
        2. **Naive Guided GRPO:** Represented by a green line.
        3. **G²RPO-A:** Represented by a dark blue line.

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

| Method | Step 0 | Step 40 | Step 60 | Step 80 | Step 100 (Global Peak) | Step 120 | Step 140 |
| :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- |
| **Vanilla GRPO** | ~0.07 | ~0.13 | ~0.25 (Local Peak) | ~0.22 | ~0.40 | ~0.23 | ~0.04 |
| **Naive Guided GRPO** | ~0.10 | ~0.10 (Trough) | ~0.38 (Local Peak) | ~0.22 (Trough) | ~0.48 (Highest value) | ~0.26 | ~0.01 (Lowest final) |
| **G²RPO-A** | ~0.10 | ~0.16 | ~0.34 | ~0.35 | ~0.42 | ~0.25 | ~0.09 (Highest final) |
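The table above can be condensed into a few summary numbers per method. The following is a minimal sketch, assuming the approximate values read off the chart are accurate enough for a coarse comparison; the names `STEPS`, `REWARDS`, `summarize`, and `SUMMARY` are illustrative and do not come from the original figure or any library.

```python
# Approximate values copied from the table above (read off the chart by eye).
STEPS = [0, 40, 60, 80, 100, 120, 140]
REWARDS = {
    "Vanilla GRPO": [0.07, 0.13, 0.25, 0.22, 0.40, 0.23, 0.04],
    "Naive Guided GRPO": [0.10, 0.10, 0.38, 0.22, 0.48, 0.26, 0.01],
    "G²RPO-A": [0.10, 0.16, 0.34, 0.35, 0.42, 0.25, 0.09],
}


def summarize(steps, values):
    """Return the peak step, peak value, final value, and peak-to-final drop."""
    peak_idx = max(range(len(values)), key=values.__getitem__)
    return {
        "peak_step": steps[peak_idx],
        "peak": values[peak_idx],
        "final": values[-1],
        # Rounded to two decimals because the inputs are only two-decimal estimates.
        "drop": round(values[peak_idx] - values[-1], 2),
    }


SUMMARY = {name: summarize(STEPS, vals) for name, vals in REWARDS.items()}

for name, s in SUMMARY.items():
    print(
        f"{name:18s} peak {s['peak']:.2f} at step {s['peak_step']}, "
        f"final {s['final']:.2f}, drop {s['drop']:.2f}"
    )
```

On these seven sampled points, every method peaks at step 100, and the peak-to-final drop is about 0.36 for Vanilla GRPO, 0.47 for Naive Guided GRPO, and 0.33 for G²RPO-A. The real curves contain more points than the table, so these figures are only as precise as the visual estimates.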

**Trend Descriptions:**

1. **Vanilla GRPO (Light Blue Line):**
    * **Trend:** Starts lowest (~0.07), rises gradually with minor fluctuations, reaches the lowest of the three peaks (~0.40), and then declines steeply.
2. **Naive Guided GRPO (Green Line):**
    * **Trend:** Starts at about the same level as G²RPO-A (~0.10), exhibits the most volatility with pronounced peaks and troughs, achieves the highest overall peak (~0.48), and then experiences the most severe decline, ending at ~0.01.
3. **G²RPO-A (Dark Blue Line):**
    * **Trend:** Starts slightly above Vanilla GRPO (~0.10 vs. ~0.07), rises more steadily and with less volatility than Naive Guided GRPO, peaks at a value between the other two (~0.42), and maintains a higher reward than the others during the final decline.

### Key Observations

1. **Common Trajectory:** All three methods follow a macro pattern of rise, peak, and fall. The peak for all occurs around Global Step 100.

2. **Performance Hierarchy at Peak:** At the peak (~Step 100), Naive Guided GRPO > G²RPO-A > Vanilla GRPO.

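3. **Volatility:** Naive Guided GRPO is the most volatile, with the largest swings between local maxima and minima (for example, from ~0.38 at step 60 down to ~0.22 at step 80). Vanilla GRPO and G²RPO-A are both smoother. At the steps sampled in the table, G²RPO-A is the only series that rises without a dip before the peak, while Vanilla GRPO dips slightly between steps 60 and 80.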

4. **Final Performance:** After the peak, all methods degrade. However, G²RPO-A degrades the slowest, ending with the highest reward at Step 140. Naive Guided GRPO degrades the fastest, ending near zero.

5. **Early Training:** At step 40, G²RPO-A (~0.16) holds a small lead over Vanilla GRPO (~0.13), while Naive Guided GRPO is still flat at ~0.10. Naive Guided GRPO then jumps to ~0.38 by step 60, overtaking both.
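The volatility observation can be checked roughly against the approximate values in the Detailed Analysis table. The sketch below uses two simple, assumed measures that are not taken from the source: total variation (the sum of absolute changes between consecutive sampled points) and the number of direction reversals. The names `total_variation`, `direction_reversals`, and `VOLATILITY` are illustrative.

```python
# Approximate values copied from the Detailed Analysis table
# (steps 0, 40, 60, 80, 100, 120, 140).
REWARDS = {
    "Vanilla GRPO": [0.07, 0.13, 0.25, 0.22, 0.40, 0.23, 0.04],
    "Naive Guided GRPO": [0.10, 0.10, 0.38, 0.22, 0.48, 0.26, 0.01],
    "G²RPO-A": [0.10, 0.16, 0.34, 0.35, 0.42, 0.25, 0.09],
}


def total_variation(values):
    """Sum of absolute changes between consecutive sampled points."""
    return sum(abs(b - a) for a, b in zip(values, values[1:]))


def direction_reversals(values):
    """Count sign flips in consecutive differences, ignoring flat segments."""
    signs = [1 if b > a else -1 for a, b in zip(values, values[1:]) if b != a]
    return sum(1 for s, t in zip(signs, signs[1:]) if s != t)


# Map each method to (total variation rounded to 2 decimals, reversal count).
VOLATILITY = {
    name: (round(total_variation(vals), 2), direction_reversals(vals))
    for name, vals in REWARDS.items()
}

for name, (tv, rev) in VOLATILITY.items():
    print(f"{name:18s} total variation {tv:.2f}, reversals {rev}")
```

On these seven points, Naive Guided GRPO has the largest total variation (about 1.17), followed by Vanilla GRPO (about 0.75) and G²RPO-A (about 0.65), and G²RPO-A reverses direction only once, at the peak. The table samples the curves coarsely, so a denser reading of the chart could shift these numbers.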

### Interpretation

This chart likely visualizes the training dynamics of three variants of GRPO (presumably Group Relative Policy Optimization, a reinforcement learning algorithm) on a task where performance is measured by an accuracy-based reward signal.

* **What the data suggests:** Both "guided" variants (Naive Guided GRPO and G²RPO-A) reach a higher peak than the "Vanilla" baseline, which suggests that guidance can improve peak performance. The advantage is not uniform, though. Naive Guided GRPO is at or below the baseline at steps 40, 80, and 140, so its guidance appears to introduce instability: a higher peak but also a more severe crash, possibly due to overfitting or aggressive policy updates. G²RPO-A seems to strike a better balance. It stays above the baseline at every sampled step, rises more steadily, and retains the most reward at the end.

* **The Decline Phase:** The sharp, synchronized decline after Step 100 is a critical feature. This could indicate several scenarios: 1) The training task becomes progressively harder after this point, 2) The learning rate or another hyperparameter causes divergence, 3) The agents have overfitted to a certain phase of the environment and fail to generalize, or 4) This is an intentional part of the experimental design (e.g., a curriculum that resets or changes). The fact that G²RPO-A retains more reward suggests it may be more robust to whatever causes this decline.

* **Peircean Reading:** The chart can be read as an indexical sign of the learning process. The jaggedness of the green line is a trace of a more reactive, less stable learning policy. The synchronized peak and fall across all three lines suggest a common external factor (the environment or training protocol) exerting a strong influence, over and above the differences between the algorithms themselves. The key takeaway for a researcher is not just that G²RPO-A has a good peak, but that its performance profile suggests a more robust and reliable learning trajectory.