## Diagram: Taxonomy of LLMs Meet KGs

### Overview

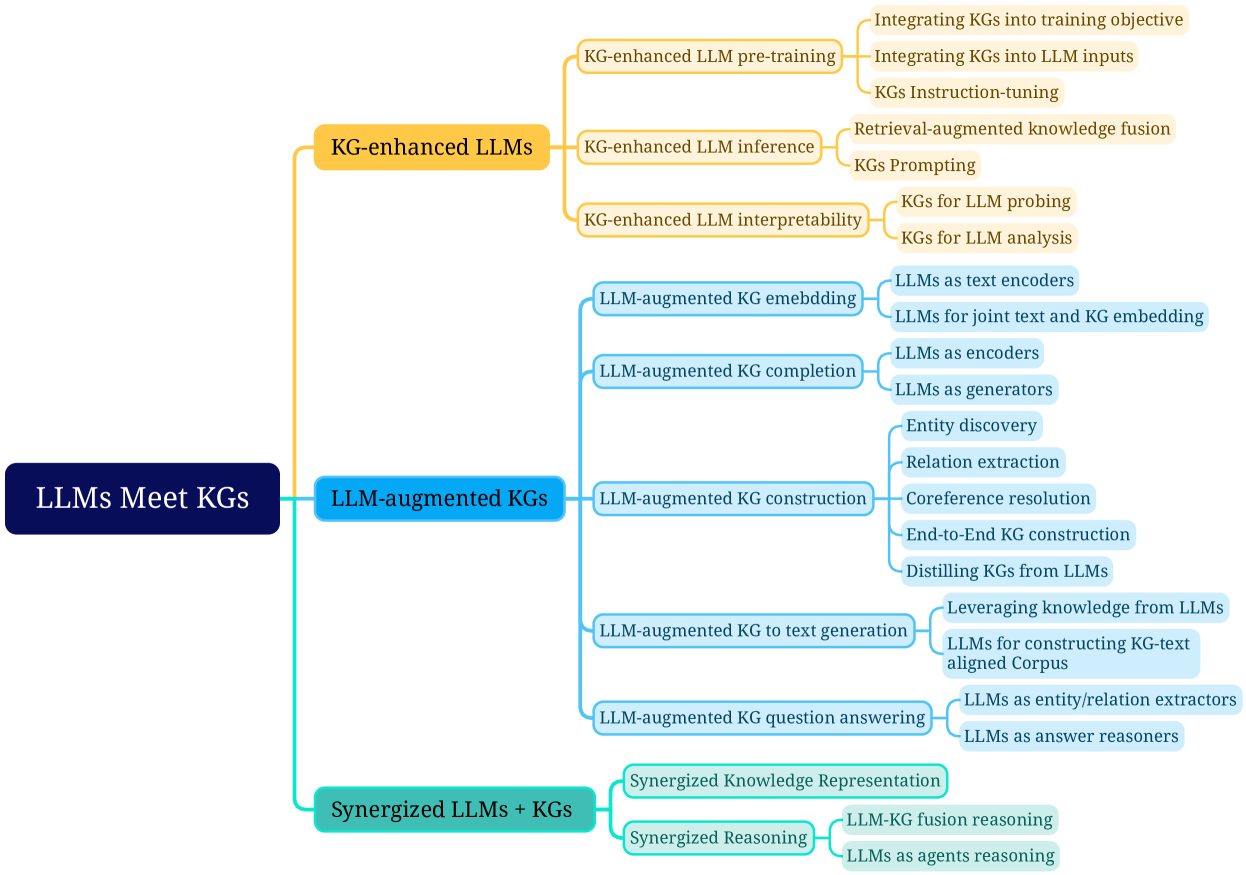

This image is a hierarchical tree diagram (mind map) illustrating the taxonomy of research areas at the intersection of Large Language Models (LLMs) and Knowledge Graphs (KGs). The central theme is "LLMs Meet KGs," which branches into three primary categories, each further subdivided into specific research directions and applications. The diagram uses color-coding to distinguish the main branches.

### Components/Axes

* **Central Node (Root):** "LLMs Meet KGs" (Dark blue box, left side).

* **Primary Branches (Level 1):**

  1. **KG-enhanced LLMs** (Yellow box, top branch).

  2. **LLM-augmented KGs** (Light blue box, middle branch).

  3. **Synergized LLMs + KGs** (Teal box, bottom branch).

* **Secondary Branches (Level 2):** Each primary branch splits into 2-5 sub-categories, represented by lighter-colored boxes connected by lines.

* **Tertiary Branches (Level 3):** Some secondary branches further split into specific techniques or tasks, shown in the lightest-colored boxes.

* **Spatial Layout:** The diagram flows from left (root) to right (leaves). There is no separate legend; the color-coding of the boxes and connecting lines groups related concepts.

### Detailed Analysis

The diagram systematically breaks down the field into three main paradigms:

**1. KG-enhanced LLMs (Yellow Branch):** Focuses on using Knowledge Graphs to improve LLMs.

* **KG-enhanced LLM pre-training:**

  * Integrating KGs into training objective

  * Integrating KGs into LLM inputs

  * KGs Instruction-tuning

* **KG-enhanced LLM inference:**

  * Retrieval-augmented knowledge fusion

  * KGs Prompting

* **KG-enhanced LLM interpretability:**

  * KGs for LLM probing

  * KGs for LLM analysis
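A common ingredient of the two inference-time techniques above, retrieval-augmented knowledge fusion and KG prompting, is selecting relevant triples and serializing them into the model's input. The following is a minimal Python sketch under stated assumptions: the toy KG, `retrieve_triples`, and `build_kg_prompt` are hypothetical, exact string matching stands in for a real entity linker and retriever, and the prompt template is illustrative rather than taken from any specific method.

```python
from typing import List, Tuple

# A triple is (head, relation, tail).
Triple = Tuple[str, str, str]

# Hypothetical toy knowledge graph used only for illustration.
TOY_KG: List[Triple] = [
    ("Marie Curie", "field", "Physics"),
    ("Marie Curie", "field", "Chemistry"),
    ("Marie Curie", "award", "Nobel Prize in Physics"),
    ("Pierre Curie", "spouse", "Marie Curie"),
    ("Albert Einstein", "field", "Physics"),
]


def retrieve_triples(question: str, kg: List[Triple]) -> List[Triple]:
    """Return triples whose head or tail entity appears verbatim in the question.

    Exact substring matching is a stand-in for real entity linking and retrieval.
    """
    return [t for t in kg if t[0] in question or t[2] in question]


def build_kg_prompt(question: str, kg: List[Triple]) -> str:
    """Linearize the retrieved triples as text and place them before the question."""
    facts = retrieve_triples(question, kg)
    lines = [f"({h}, {r}, {t})" for h, r, t in facts]
    context = "\n".join(lines) if lines else "(no matching facts)"
    return (
        "Answer using the facts below.\n"
        f"Facts:\n{context}\n"
        f"Question: {question}\n"
        "Answer:"
    )


print(build_kg_prompt("Which fields did Marie Curie work in?", TOY_KG))
```

The resulting string would then be passed to an LLM; the Einstein triple is excluded because neither of its entities is mentioned in the question.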

**2. LLM-augmented KGs (Light Blue Branch):** Focuses on using LLMs to improve Knowledge Graph tasks.

* **LLM-augmented KG embedding** (spelled "emebedding" in the source image):

  * LLMs as text encoders

  * LLMs for joint text and KG embedding

* **LLM-augmented KG completion:**

  * LLMs as encoders

  * LLMs as generators

* **LLM-augmented KG construction:**

  * Entity discovery

  * Relation extraction

  * Coreference resolution

  * End-to-End KG construction

  * Distilling KGs from LLMs

* **LLM-augmented KG to text generation:**

  * Leveraging knowledge from LLMs

  * LLMs for constructing KG-text aligned Corpus

* **LLM-augmented KG question answering:**

  * LLMs as entity/relation extractors

  * LLMs as answer reasoners
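Under "LLMs as generators," KG completion can be framed as text generation: the incomplete triple is phrased as a prompt, and the model's output is mapped back onto KG entities. A minimal Python sketch, assuming a pluggable `generate` callable in place of a real model (the hypothetical `fake_llm` stub returns canned text) and exact string matching against a known-entity set as the grounding step; `completion_prompt` and `complete_triple` are illustrative names, not an established API.

```python
from typing import Callable, List, Set


def completion_prompt(head: str, relation: str) -> str:
    """Phrase the incomplete triple (head, relation, ?) as a text query."""
    return (
        f"Complete the fact: ({head}, {relation}, ?)\n"
        "List candidate tail entities, one per line, most likely first."
    )


def complete_triple(
    head: str,
    relation: str,
    generate: Callable[[str], str],
    known_entities: Set[str],
) -> List[str]:
    """Generate tail candidates and keep those that match existing KG entities.

    The order in which the model emits candidates is used as the ranking.
    """
    raw = generate(completion_prompt(head, relation))
    ranked: List[str] = []
    for line in raw.splitlines():
        candidate = line.strip()
        # Exact-match grounding: drop entities absent from the KG and duplicates.
        if candidate in known_entities and candidate not in ranked:
            ranked.append(candidate)
    return ranked


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; ignores the prompt."""
    return "Warsaw\nParis\nAtlantis\nWarsaw\n"


entities = {"Warsaw", "Paris", "Berlin"}
print(complete_triple("Marie Curie", "place of birth", fake_llm, entities))
# → ['Warsaw', 'Paris']
```

Filtering against the known-entity set discards generated entities that do not exist in the KG (here "Atlantis"); a real system would likely need fuzzier entity linking than exact matching.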

**3. Synergized LLMs + KGs (Teal Branch):** Focuses on the mutual integration and co-evolution of both technologies.

* **Synergized Knowledge Representation**

* **Synergized Reasoning:**

  * LLM-KG fusion reasoning

  * LLMs as agents reasoning
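Because the taxonomy is a three-level tree, it can be encoded directly as a nested data structure, which makes the relative size of each branch easy to check. A small Python sketch, with labels transcribed from the diagram (using the corrected spelling "embedding"); `TAXONOMY` and `count_nodes` are illustrative names.

```python
from typing import Dict, List, Tuple

# Level 1 -> Level 2 -> list of Level 3 labels, transcribed from the diagram.
TAXONOMY: Dict[str, Dict[str, List[str]]] = {
    "KG-enhanced LLMs": {
        "KG-enhanced LLM pre-training": [
            "Integrating KGs into training objective",
            "Integrating KGs into LLM inputs",
            "KGs Instruction-tuning",
        ],
        "KG-enhanced LLM inference": [
            "Retrieval-augmented knowledge fusion",
            "KGs Prompting",
        ],
        "KG-enhanced LLM interpretability": [
            "KGs for LLM probing",
            "KGs for LLM analysis",
        ],
    },
    "LLM-augmented KGs": {
        "LLM-augmented KG embedding": [
            "LLMs as text encoders",
            "LLMs for joint text and KG embedding",
        ],
        "LLM-augmented KG completion": ["LLMs as encoders", "LLMs as generators"],
        "LLM-augmented KG construction": [
            "Entity discovery",
            "Relation extraction",
            "Coreference resolution",
            "End-to-End KG construction",
            "Distilling KGs from LLMs",
        ],
        "LLM-augmented KG to text generation": [
            "Leveraging knowledge from LLMs",
            "LLMs for constructing KG-text aligned Corpus",
        ],
        "LLM-augmented KG question answering": [
            "LLMs as entity/relation extractors",
            "LLMs as answer reasoners",
        ],
    },
    "Synergized LLMs + KGs": {
        "Synergized Knowledge Representation": [],  # no level-3 children
        "Synergized Reasoning": [
            "LLM-KG fusion reasoning",
            "LLMs as agents reasoning",
        ],
    },
}


def count_nodes(taxonomy: Dict[str, Dict[str, List[str]]]) -> Dict[str, Tuple[int, int]]:
    """Map each primary branch to (number of level-2 nodes, number of level-3 nodes)."""
    return {
        branch: (len(subs), sum(len(leaves) for leaves in subs.values()))
        for branch, subs in taxonomy.items()
    }


for branch, (n_level2, n_level3) in count_nodes(TAXONOMY).items():
    print(f"{branch}: {n_level2} level-2, {n_level3} level-3 nodes")
# KG-enhanced LLMs: 3 level-2, 7 level-3 nodes
# LLM-augmented KGs: 5 level-2, 13 level-3 nodes
# Synergized LLMs + KGs: 2 level-2, 2 level-3 nodes
```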

### Key Observations

* **Structured Taxonomy:** The diagram presents a clear, three-pronged classification of the research landscape.

* **Directionality of Enhancement:** Each of the first two branches is one-directional: the first uses KGs to improve LLMs, the second uses LLMs to improve KGs. The third branch proposes a bidirectional, synergistic relationship.

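* **Granularity:** The "LLM-augmented KGs" branch is the most detailed, with five secondary and thirteen tertiary nodes (compared with three and seven for "KG-enhanced LLMs" and two and two for "Synergized LLMs + KGs"), suggesting a wide range of established or emerging tasks in this area.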

* **Typographical Error:** The term "emebedding" under the light blue branch is a misspelling of "embedding."

### Interpretation

This diagram serves as a conceptual map of how two AI technologies, LLMs (parametric, generative knowledge) and KGs (structured, symbolic knowledge), can be combined. Rather than treating the combination as a simple "A + B", it distinguishes three research philosophies:

1. **KG-enhanced LLMs** represents the "knowledge injection" paradigm, aiming to ground LLMs in factual, structured knowledge to improve accuracy, reduce hallucinations, and enhance interpretability.

2. **LLM-augmented KGs** represents the "automation and scaling" paradigm, leveraging the linguistic and reasoning prowess of LLMs to build, complete, and query knowledge graphs more efficiently.

3. **Synergized LLMs + KGs** represents the "unified intelligence" paradigm, envisioning a future where the two forms of knowledge are deeply integrated for more robust reasoning and representation, potentially leading to systems that combine the flexibility of neural networks with the precision of symbolic AI.

The taxonomy highlights that the field is not monolithic but consists of complementary approaches targeting different stages of the LLM and KG lifecycles (LLM pre-training and inference, KG construction and completion, joint reasoning). The detailed breakdown under "LLM-augmented KGs" suggests this is a particularly active area of applied research.