## Diagram: AI System Interaction with Human via Data Physicalizing Interfaces

### Overview

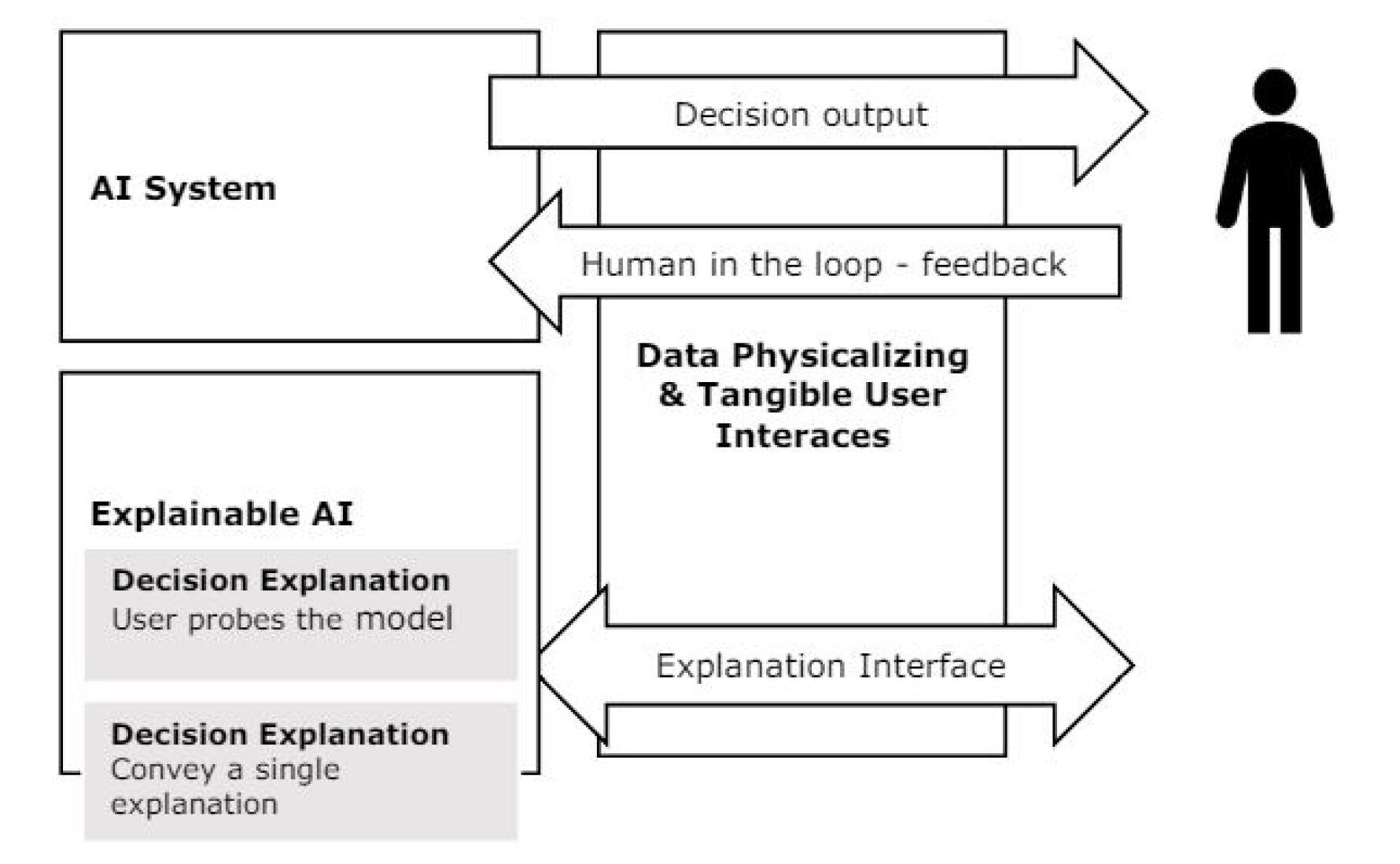

This diagram illustrates a conceptual model of how an AI system interacts with a human user through a mediating layer called "Data Physicalizing & Tangible User Interfaces." The model emphasizes two primary interaction pathways: one for decision output and feedback, and another for explainability. The diagram is a black-and-white line drawing with text labels and directional arrows.

### Components

The diagram is composed of several labeled rectangular boxes and directional arrows, organized in a left-to-right flow.

**Left Column (System Components):**

1. **Top Box:** Labeled "AI System".

2. **Bottom Box:** Labeled "Explainable AI". This box contains two nested, gray-shaded sub-boxes:

* Upper sub-box: "Decision Explanation" with subtext "User probes the model".

* Lower sub-box: "Decision Explanation" with subtext "Convey a single explanation".

**Center Column (Interface Layer):**

* A single large box spanning the vertical space between the two left boxes. It is labeled: "Data Physicalizing & Tangible User Interfaces". (Note: "Interfaces" is misspelled as "Interaces" in the image).

**Right Side (User):**

* A simple, black silhouette icon of a standing human figure.

**Arrows (Interaction Flows):**

1. **Top Arrow:** Points from the "AI System" box to the human icon. It is labeled "Decision output".

2. **Middle Arrow:** Points from the human icon back to the "AI System" box. It is labeled "Human in the loop - feedback".

3. **Bottom Arrow:** A double-headed arrow connecting the "Explainable AI" box and the human icon, passing through the central interface box. It is labeled "Explanation Interface".
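
The components and arrows listed above can be written down as a small directed graph, which makes the flows easy to query. This is a minimal sketch, not part of the source figure: the `Arrow` class and the helper functions are hypothetical, and the node name "Human" is an assumed label for the unlabeled silhouette icon. All other strings are copied from the diagram labels.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Arrow:
    """One arrow in the diagram (hypothetical helper type)."""
    source: str
    target: str
    label: str
    bidirectional: bool = False


# Box labels from the diagram; "Human" is an assumed name for the icon.
NODES = [
    "AI System",
    "Explainable AI",
    "Data Physicalizing & Tangible User Interfaces",
    "Human",
]

ARROWS = [
    Arrow("AI System", "Human", "Decision output"),
    Arrow("Human", "AI System", "Human in the loop - feedback"),
    Arrow("Explainable AI", "Human", "Explanation Interface", bidirectional=True),
]


def directed_edges(arrows):
    """Expand each double-headed arrow into two one-way edges."""
    edges = []
    for arrow in arrows:
        edges.append((arrow.source, arrow.target, arrow.label))
        if arrow.bidirectional:
            edges.append((arrow.target, arrow.source, arrow.label))
    return edges


def inputs_to(node, arrows):
    """Return the sorted names of components that send something to `node`."""
    return sorted({src for src, dst, _ in directed_edges(arrows) if dst == node})
```

For example, `inputs_to("Human", ARROWS)` lists both system-side boxes, while `inputs_to("AI System", ARROWS)` lists only the human, matching the feedback arrow.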

### Detailed Analysis

The diagram defines a structured interaction loop with distinct pathways:

* **Primary Decision Loop:** The "AI System" generates a "Decision output" that is presented to the human user. The user then provides "Human in the loop - feedback" which flows back to the AI System. This entire exchange is mediated by the "Data Physicalizing & Tangible User Interfaces" layer.

* **Explainability Loop:** A separate, bidirectional channel exists for explainability. The "Explainable AI" component connects to the user via an "Explanation Interface". The two sub-components within "Explainable AI" suggest different modes of explanation: one where the user actively investigates ("User probes the model") and another where the system delivers one explanation of its decision ("Convey a single explanation").
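
The two loops described above can be illustrated with a toy simulation. This is only a sketch under stated assumptions: the diagram specifies no implementation, so every class, method, and the threshold rule below is hypothetical. Only the three channel names recorded in `trace` come from the arrow labels.

```python
class AISystem:
    """Toy stand-in for the "AI System" box (hypothetical threshold rule)."""

    def __init__(self, threshold=0.5):
        self.threshold = threshold
        self.feedback_log = []  # (score, human_label) pairs kept for later refinement

    def decide(self, score):
        return "accept" if score >= self.threshold else "reject"

    def receive_feedback(self, score, human_label):
        self.feedback_log.append((score, human_label))


class ExplainableAI:
    """Toy stand-in for the "Explainable AI" box and its two modes."""

    def __init__(self, system):
        self.system = system

    def single_explanation(self, score):
        # System-driven mode: convey a single explanation.
        decision = self.system.decide(score)
        return f"score {score} vs threshold {self.system.threshold} -> {decision}"

    def probe(self, scores):
        # User-driven mode: the user tries several inputs and compares outcomes.
        return {s: self.system.decide(s) for s in scores}


class TangibleInterface:
    """Mediating layer: every exchange passes through it and is recorded."""

    def __init__(self, system, xai):
        self.system = system
        self.xai = xai
        self.trace = []  # channel labels, in the order they were used

    def present_decision(self, score):
        self.trace.append("Decision output")
        return self.system.decide(score)

    def send_feedback(self, score, human_label):
        self.trace.append("Human in the loop - feedback")
        self.system.receive_feedback(score, human_label)

    def explain(self, score):
        self.trace.append("Explanation Interface")
        return self.xai.single_explanation(score)

    def probe(self, scores):
        self.trace.append("Explanation Interface")
        return self.xai.probe(scores)
```

In this sketch the human never calls `AISystem` or `ExplainableAI` directly. Routing every call through `TangibleInterface` mirrors the diagram's placement of the interface box between the system-side components and the user.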

### Key Observations

1. **Central Mediating Layer:** The "Data Physicalizing & Tangible User Interfaces" box is positioned as the essential conduit for *all* interactions between the AI systems (both standard and explainable) and the human user. This suggests the interface's physical or tangible nature is critical to the interaction model.

2. **Dual Explainability Functions:** The "Explainable AI" box explicitly contains two distinct functions for generating explanations, highlighting that explainability is not a single process but can be either user-driven or system-driven.

3. **Directional Flow Clarity:** The arrows clearly demarcate the direction of information flow: output from AI to human, feedback from human to AI, and a bidirectional exchange for explanations.

4. **Spatial Grouping:** The "AI System" and "Explainable AI" are grouped on the left as system-side components, while the human is isolated on the right as both recipient and actor. The interface layer sits between them, bridging the gap both visually and conceptually.

### Interpretation

This diagram presents a framework for human-AI collaboration that prioritizes two key principles: **tangibility** and **explainability**.

* **The Role of Tangibility:** By placing "Data Physicalizing & Tangible User Interfaces" at the center, the model argues that for effective human-AI teaming, data and AI decisions should not remain abstract digital signals. They need to be rendered into physical or tangible forms that humans can intuitively understand and manipulate. This could involve using objects, gestures, or physical controls to represent data and AI states.

* **The Necessity of Explainable AI (XAI):** The dedicated "Explainable AI" component with its own interface underscores that trust and effective collaboration require more than just decisions; they require understanding. The model accommodates both reactive explanations (when a user questions a decision) and proactive explanations (when the system volunteers its reasoning).

* **The Human-in-the-Loop Paradigm:** The explicit "Human in the loop - feedback" arrow indicates that this is not a fully autonomous system. The human is an active participant whose feedback is presumably intended to refine or correct the AI system's future outputs, creating a continuous improvement cycle.

In essence, the diagram advocates for a design philosophy where AI systems are built not just to perform tasks, but to communicate and collaborate with humans through intuitive, physical interaction modalities, supported by robust mechanisms for explanation.