## Diagram: AI System with Explainable AI and Human Interaction

### Overview

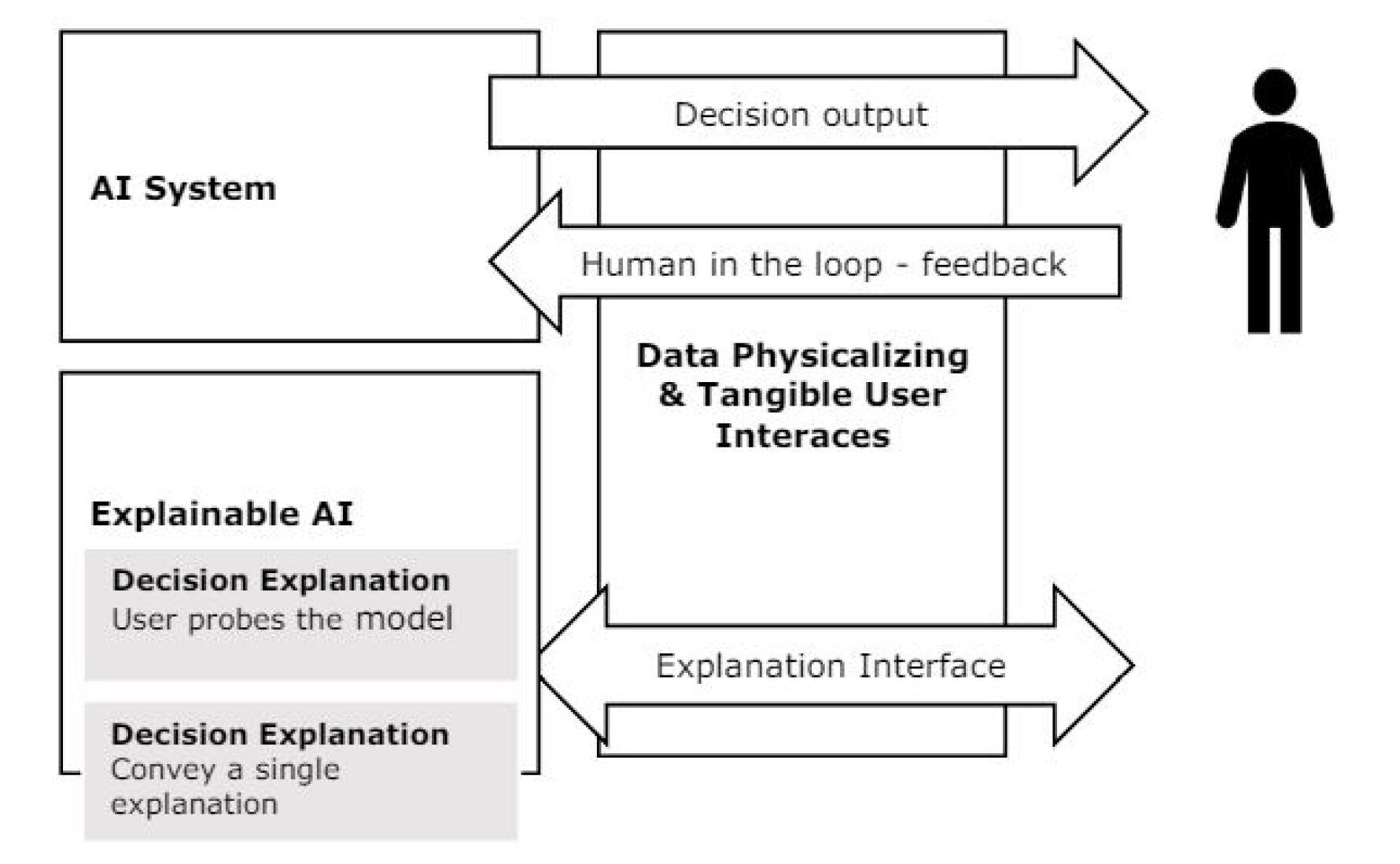

The image is a diagram illustrating the interaction between an AI System, Explainable AI (XAI), a human user, and a component labeled "Data Physicalizing & Tangible User Interfaces". It depicts a cyclical flow of information and feedback. The diagram uses boxes and arrows to represent components and their relationships.

### Components

The diagram consists of the following components:

* **AI System:** A large rectangular box labeled "AI System" positioned on the left side of the diagram.

* **Explainable AI:** A smaller rectangular box labeled "Explainable AI" positioned below and slightly to the left of the "Data Physicalizing & Tangible User Interfaces" box.

* **Data Physicalizing & Tangible User Interfaces:** A large rectangular box positioned in the center of the diagram.

* **Human User:** A stick figure representing a human user, positioned on the right side of the diagram.

* **Decision Output:** A rectangular box labeled "Decision output" connected to the "AI System" and pointing towards the "Human User".

* **Human in the loop - feedback:** A rectangular box labeled "Human in the loop - feedback" connecting the "Human User" to the "Data Physicalizing & Tangible User Interfaces" box.

* **Explanation Interface:** A rectangular box labeled "Explanation Interface" connecting the "Data Physicalizing & Tangible User Interfaces" box to the "Explainable AI" box.

* **Decision Explanation User probes the model:** A rectangular box within the "Explainable AI" box, labeled "Decision Explanation User probes the model".

* **Decision Explanation Convey a single explanation:** A rectangular box within the "Explainable AI" box, labeled "Decision Explanation Convey a single explanation".

### Detailed Analysis

The diagram illustrates the following flow:

1. The "AI System" generates a "Decision output" which is directed towards the "Human User".

2. The "Human User" provides "Human in the loop - feedback" to the "Data Physicalizing & Tangible User Interfaces" component.

3. The "Data Physicalizing & Tangible User Interfaces" component communicates via an "Explanation Interface" to the "Explainable AI" component.

4. The "Explainable AI" component has two internal functions: "Decision Explanation User probes the model" and "Decision Explanation Convey a single explanation".

5. The "Explainable AI" component sends information back to the "AI System", completing the cycle.

The arrows indicate a bidirectional flow of information between the "AI System" and the "Data Physicalizing & Tangible User Interfaces", and between the "Explainable AI" and the "Data Physicalizing & Tangible User Interfaces". The arrow from the "AI System" to the "Human User" is unidirectional.
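The flow described above can be sketched as a small directed graph, which makes the stated properties (the feedback cycle, the two bidirectional links, the one-way decision output) easy to check. This is only an illustration transcribed from the textual description, not something taken from the diagram's source. The constant names, the helper functions `build_graph`, `is_bidirectional`, and `has_path`, and the choice to label both directions of the Explainable AI link as "Explanation Interface" are assumptions made for the example.

```python
from collections import defaultdict

# Short names for the four main components (hypothetical identifiers).
AI = "AI System"
XAI = "Explainable AI"
TUI = "Data Physicalizing & Tangible User Interfaces"
USER = "Human User"

# (source, target, label) triples transcribed from the description.
# A label of None means the description names no label for that arrow.
EDGES = [
    (AI, USER, "Decision output"),
    (USER, TUI, "Human in the loop - feedback"),
    (TUI, XAI, "Explanation Interface"),
    (XAI, TUI, "Explanation Interface"),  # assumed: same label both ways
    (XAI, AI, None),
    (AI, TUI, None),
    (TUI, AI, None),
]


def build_graph(edges):
    """Build an adjacency map {source: set of targets} from edge triples."""
    graph = defaultdict(set)
    for src, dst, _label in edges:
        graph[src].add(dst)
    return graph


def is_bidirectional(graph, a, b):
    """True if there is an edge a -> b and an edge b -> a."""
    return b in graph[a] and a in graph[b]


def has_path(graph, start, goal):
    """Depth-first search: is goal reachable from start via at least one edge?"""
    stack = list(graph[start])
    seen = set()
    while stack:
        node = stack.pop()
        if node == goal:
            return True
        if node in seen:
            continue
        seen.add(node)
        stack.extend(graph[node])
    return False


graph = build_graph(EDGES)
```

With this representation, `has_path(graph, AI, AI)` checks that a decision can travel through the human user and the explanation components back to the AI system, and `is_bidirectional(graph, AI, USER)` checks that the decision output link is one-way.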

### Key Observations

The diagram places explainability at the center of the system. The "Explainable AI" component and the "Explanation Interface" indicate that AI decisions are meant to be transparent and understandable to human users. The "Human in the loop - feedback" component shows human input flowing back into the system, where it can be used to refine the AI system. The "Data Physicalizing & Tangible User Interfaces" component indicates that data is meant to be made more accessible and understandable through physical or tangible interaction.

### Interpretation

This diagram illustrates a human-centered approach to AI development: AI systems should not operate as "black boxes" but should be explainable and responsive to human feedback. The "Data Physicalizing & Tangible User Interfaces" component points to an interest in novel ways of interacting with and understanding AI-generated data. The cyclical flow of information suggests an iterative process in which human feedback refines the AI system's decision-making, which in turn implies an emphasis on trust and collaboration between humans and AI systems. The diagram contains no numerical data or specific measurements; it is a conceptual representation of a system architecture.