## Diagram: Human-AI Interaction with Explainable AI Components

### Overview

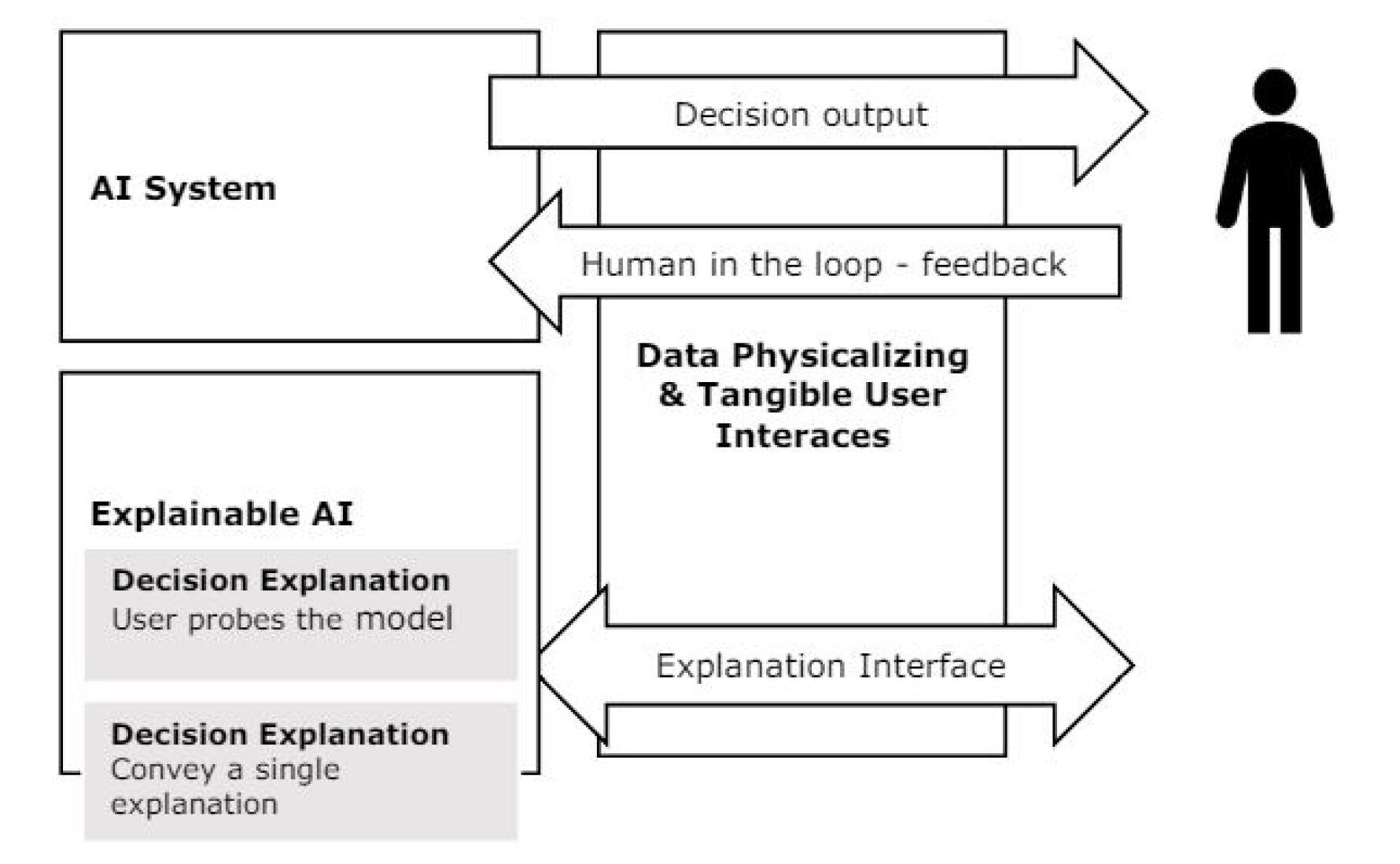

This diagram illustrates a human-AI interaction framework that emphasizes explainability and user feedback. It shows how an AI system, explainable AI components, and a human user communicate in both directions through tangible interfaces. The flows comprise decision outputs, user probing of the model, and iterative feedback loops.

### Components

1. **AI System** (Top-left box)

   - Contains "AI System" label

   - Outputs "Decision output" via rightward arrow

   - Receives "Human in the loop - feedback" via leftward arrow

2. **Explainable AI** (Bottom-left box)

   - Contains two stacked gray boxes:

     - **Decision Explanation**: "User probes the model"

     - **Decision Explanation**: "Convey a single explanation"

3. **Data Physicalizing & Tangible User Interfaces** (Central vertical box)

   - Contains bidirectional arrows:

     - Left arrow labeled "Explanation Interface"

     - Right arrow labeled "Human in the loop - feedback"

4. **Human Figure** (Far right)

   - Simple black silhouette representing the user

### Flow Direction

- Primary flow: AI System → Decision output → Human

- Secondary flows:

  - Human feedback → AI System

  - Explainable AI components ↔ Data Physicalizing interfaces
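The flows listed above can be read as a small directed graph. The following is a minimal sketch of that reading, not part of the diagram itself: the node names (`ai_system`, `human`, `explainable_ai`, `tangible_interface`) are shorthand invented for this example, and attaching the "Explanation Interface" label to the edges between the Explainable AI box and the tangible interface is an assumption based on the arrow labels described earlier.

```python
from collections import defaultdict

# Hypothetical edge list transcribed from the described flows.
# Each entry is (source, target, label); node names are illustrative shorthand.
EDGES = [
    ("ai_system", "human", "Decision output"),
    ("human", "ai_system", "Human in the loop - feedback"),
    # The diagram shows a two-way link here; the label is an assumption.
    ("explainable_ai", "tangible_interface", "Explanation Interface"),
    ("tangible_interface", "explainable_ai", "Explanation Interface"),
]


def build_adjacency(edges):
    """Map each source node to the set of nodes it points to."""
    adjacency = defaultdict(set)
    for source, target, _label in edges:
        adjacency[source].add(target)
    return adjacency


def is_bidirectional(adjacency, a, b):
    """Return True when there is an edge a -> b and an edge b -> a."""
    return b in adjacency.get(a, set()) and a in adjacency.get(b, set())


ADJACENCY = build_adjacency(EDGES)
```

Calling `is_bidirectional(ADJACENCY, "ai_system", "human")` checks that the decision output and the feedback arrow together form a loop, which is the property the diagram emphasizes.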

### Key Observations

1. **Bidirectional Communication**: The system emphasizes continuous feedback between AI and human users

2. **Explainability Layers**: Two distinct explanation mechanisms are shown:

   - Proactive probing capability ("User probes the model")

   - Simplified explanation delivery ("Convey a single explanation")

3. **Tangible Interface Role**: Acts as a bridge between abstract AI decisions and physical user interaction

4. **Cyclical Improvement**: Feedback loops suggest iterative refinement of AI decisions
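To make the difference between the two explanation mechanisms and the feedback loop concrete, here is a toy sketch. Everything in it is hypothetical: the diagram does not specify a model, so `ToyModel`, its feature names, its weights, and the threshold-shifting feedback rule are assumptions chosen only to keep the example small.

```python
from dataclasses import dataclass, field


@dataclass
class ToyModel:
    """Hypothetical stand-in for the 'AI System' box: a weighted-sum scorer."""

    weights: dict = field(default_factory=lambda: {"income": 0.6, "debt": -0.4})
    threshold: float = 0.0

    def score(self, features):
        return sum(self.weights[name] * value for name, value in features.items())

    def decide(self, features):
        """Decision output: accept when the score reaches the threshold."""
        return self.score(features) >= self.threshold

    def single_explanation(self, features):
        """'Convey a single explanation': name the most influential feature."""
        contributions = {n: self.weights[n] * v for n, v in features.items()}
        return max(contributions, key=lambda n: abs(contributions[n]))

    def probe(self, features, name, new_value):
        """'User probes the model': what-if query with one feature changed."""
        changed = dict(features)
        changed[name] = new_value
        return self.decide(changed)

    def apply_feedback(self, features, correct_decision, step=0.1):
        """Human-in-the-loop feedback: shift the threshold on disagreement."""
        if self.decide(features) != correct_decision:
            self.threshold += -step if correct_decision else step
```

In this sketch, `single_explanation` pushes one summary to the user, `probe` lets the user ask their own question, and `apply_feedback` closes the loop by changing how later decisions are made. A real system would likely use a more principled update than a fixed threshold step.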

### Interpretation

This diagram demonstrates a human-centered AI design philosophy where:

- **Transparency** is maintained through explainable components

- **User Agency** is preserved via probing capabilities

- **System Adaptability** is enabled through feedback loops

- **Tangibility** transforms abstract AI outputs into actionable user interfaces

The separation of explanation mechanisms suggests a dual approach to AI interpretability: users can either probe the model themselves for a deeper investigation or receive a single, simplified explanation. The central position of "Data Physicalizing & Tangible User Interfaces" implies a focus on making AI decisions perceptible through physical interfaces, while the probing path keeps closer inspection of the model available to users who want it.