
## Diagram: Graph Construction and Retrieval/Answer Pipeline

### Overview

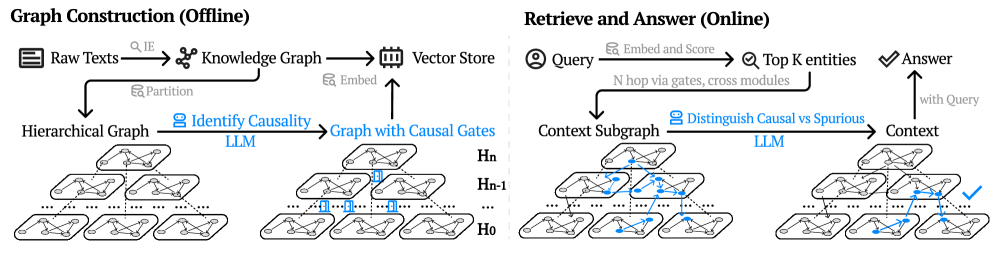

The diagram shows a two-stage pipeline: "Graph Construction (Offline)" and "Retrieve and Answer (Online)". The left side builds a knowledge graph from raw text; the right side queries that graph to produce an answer. Both sides use Large Language Models (LLMs) and hierarchical graph structures.

### Components/Axes

The diagram is divided into two main sections, labeled "Graph Construction (Offline)" and "Retrieve and Answer (Online)". Within each section, there are several components connected by arrows indicating the flow of information. Key components include: Raw Texts, Knowledge Graph, Vector Store, Hierarchical Graph, LLM, Graph with Causal Gates, Context Subgraph, Query, Top K entities, Answer, and Context. The hierarchical graphs are labeled H₀, H₋₁, and Hₙ.

### Detailed Analysis or Content Details

**Graph Construction (Offline):**

1. **Raw Texts** are processed with a "Q,IE" (Query, Information Extraction) step to create a **Knowledge Graph**.

2. The Knowledge Graph is then embedded and stored in a **Vector Store**.

3. The Raw Texts are also partitioned to form a **Hierarchical Graph**.

4. An **LLM** is used to "Identify Causality" within the Hierarchical Graph, resulting in a **Graph with Causal Gates**.

5. The embeddings held in the Vector Store are connected to the Graph with Causal Gates.
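The offline steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the system's implementation: the triples, the character-histogram `embed` function, the `module_of` partitioning rule, and the `is_causal` predicate (standing in for the LLM's "Identify Causality" step) are all hypothetical and do not come from the diagram.

```python
from collections import defaultdict

# Hypothetical triples standing in for the output of the extraction step.
TRIPLES = [
    ("rain", "causes", "wet_road"),
    ("wet_road", "causes", "accident"),
    ("umbrella", "co_occurs_with", "rain"),
]

def build_knowledge_graph(triples):
    """Adjacency list: head entity -> list of (relation, tail entity)."""
    graph = defaultdict(list)
    for head, relation, tail in triples:
        graph[head].append((relation, tail))
        graph.setdefault(tail, [])  # make sure tail-only entities are present
    return dict(graph)

def embed(text, dim=8):
    """Toy deterministic embedding (assumption): an L2-normalised
    histogram of character codes. A real system would use a learned model."""
    vec = [0.0] * dim
    for ch in text:
        vec[ord(ch) % dim] += 1.0
    norm = sum(v * v for v in vec) ** 0.5
    return [v / norm for v in vec] if norm else vec

def build_vector_store(graph):
    """Map every entity in the graph to its embedding."""
    return {entity: embed(entity) for entity in graph}

def partition(graph, module_of):
    """Group entities into modules, giving one level of a hierarchy."""
    modules = defaultdict(set)
    for entity in graph:
        modules[module_of(entity)].add(entity)
    return dict(modules)

def identify_causal_gates(graph, is_causal):
    """Stand-in for the LLM step: keep the edges the predicate judges causal."""
    return {
        (head, tail)
        for head, edges in graph.items()
        for relation, tail in edges
        if is_causal(head, relation, tail)
    }

kg = build_knowledge_graph(TRIPLES)
store = build_vector_store(kg)
modules = partition(kg, lambda entity: entity[0])  # hypothetical rule: first letter
gates = identify_causal_gates(kg, lambda h, r, t: r == "causes")
```

Here `gates` holds only the two `causes` edges; the `co_occurs_with` edge stays in the knowledge graph but is not marked as a gate.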

**Retrieve and Answer (Online):**

1. A **Query** is embedded and scored, leading to the identification of **Top K entities**.

2. These entities are processed through "N hop via gates, cross modules" to generate a **Context Subgraph**.

3. An **LLM** is used to "Distinguish Causal vs Spurious" relationships within the Context Subgraph.

4. This process generates **Context**, which is then used to provide an **Answer** to the original query. A checkmark indicates a successful answer.
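The online steps above can be sketched similarly. Again, this is only an illustration under assumptions: the two-dimensional vectors in `STORE`, the edge list, the cosine scoring, and the `is_causal` predicate (a placeholder for the LLM's "Distinguish Causal vs Spurious" step) are hypothetical, and the diagram does not specify how hops, gates, or modules are actually handled.

```python
import math

# Hypothetical pre-computed entity embeddings and labelled edges.
STORE = {
    "rain": [1.0, 0.0],
    "wet_road": [0.8, 0.6],
    "umbrella": [0.0, 1.0],
}
EDGES = [
    ("rain", "causes", "wet_road"),
    ("wet_road", "causes", "accident"),
    ("rain", "co_occurs_with", "umbrella"),
]

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def top_k_entities(query_vec, store, k):
    """Rank stored entities by similarity to the query and keep the best k."""
    ranked = sorted(store, key=lambda e: cosine(query_vec, store[e]), reverse=True)
    return ranked[:k]

def expand_n_hops(seeds, edges, n_hops):
    """Collect the edges reachable within n_hops of the seed entities."""
    visited, frontier, subgraph = set(seeds), set(seeds), []
    for _ in range(n_hops):
        next_frontier = set()
        for edge in edges:
            head, _relation, tail = edge
            if head in frontier and edge not in subgraph:
                subgraph.append(edge)
                if tail not in visited:
                    visited.add(tail)
                    next_frontier.add(tail)
        frontier = next_frontier
    return subgraph

def keep_causal(subgraph, is_causal):
    """Stand-in for the LLM filter: drop edges judged spurious."""
    return [edge for edge in subgraph if is_causal(*edge)]

seeds = top_k_entities([1.0, 0.1], STORE, k=1)
subgraph = expand_n_hops(seeds, EDGES, n_hops=2)
context = keep_causal(subgraph, lambda h, r, t: r == "causes")
```

The resulting `context` would then be serialised into the prompt from which the answer is generated; that final LLM call is omitted here.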

The hierarchical graphs (H₀, H₋₁, Hₙ) visually represent layers of abstraction. H₀ appears to be the lowest level, with the most detailed connections, while Hₙ represents the highest level of abstraction. The graphs are composed of nodes (circles) and edges (lines connecting the nodes). Some edges are highlighted in blue, potentially indicating causal relationships.
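One common way to obtain such layers is to collapse groups of nodes into single nodes at the next level up. The sketch below shows that idea only; the diagram does not say how its levels are derived, and the node names, the `ASSIGN` cluster mapping, and the `coarsen` helper are hypothetical.

```python
def coarsen(edges, cluster_of):
    """Collapse nodes into clusters and keep one edge per pair of distinct clusters."""
    coarse = set()
    for u, v in edges:
        cu, cv = cluster_of[u], cluster_of[v]
        if cu != cv:  # edges inside a cluster disappear at the coarser level
            coarse.add((cu, cv))
    return coarse

# Hypothetical detailed level and a hand-written cluster assignment.
H0 = {("a", "b"), ("b", "c"), ("c", "d"), ("d", "e")}
ASSIGN = {"a": "A", "b": "A", "c": "B", "d": "B", "e": "C"}

H1 = coarsen(H0, ASSIGN)
```

Applying `coarsen` repeatedly with successive assignments would yield progressively more abstract levels with fewer nodes and edges.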

### Key Observations

* The pipeline is clearly divided into offline and online stages, suggesting a pre-processing step (graph construction) followed by a real-time query/answer process.

* LLMs are used in both stages, highlighting their importance in both knowledge extraction and reasoning.

* The use of hierarchical graphs and causal gates suggests an attempt to model complex relationships and avoid spurious correlations.

* The "Vector Store" component indicates the use of vector embeddings for efficient similarity search.

* The checkmark on the right side indicates a successful answer retrieval.

### Interpretation

This diagram illustrates a two-phase approach to knowledge representation and question answering. The offline graph construction phase builds a structured knowledge base that captures causal relationships; the online retrieval phase queries that knowledge base to answer questions. LLMs appear in both phases, which suggests the system relies on them for natural-language understanding and for reasoning over the graph.

The hierarchical graph structure provides several levels of abstraction, potentially allowing the system to answer both broad and specific questions. The causal gates suggest an attempt to mitigate spurious correlations, a common challenge in knowledge-graph-based systems. Taken together, the architecture appears aimed at reliable question answering in domains where causal reasoning matters.

The diagram contains no quantitative data; it shows only the conceptual flow of information. Further details would be needed to understand the system's implementation and performance.