## Flowchart Diagram: Knowledge Graph Construction and Query Processing Pipeline

### Overview

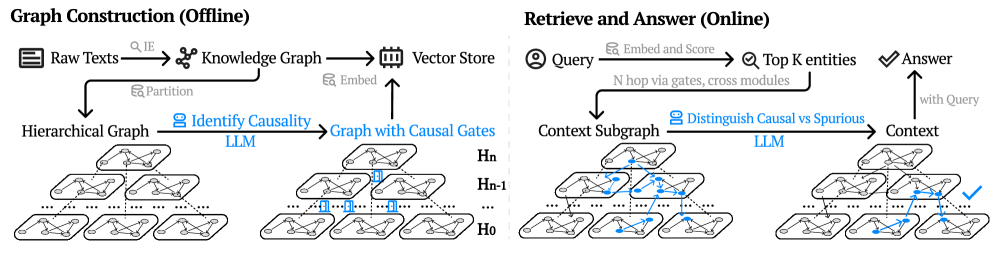

The diagram illustrates a two-phase system for knowledge graph construction and query processing. The left side depicts an offline "Graph Construction" pipeline, while the right side shows an online "Retrieve and Answer" workflow. Both phases involve hierarchical graph structures, causal reasoning, and large language model (LLM) integration.

### Components

**Left Section (Graph Construction - Offline):**

1. **Input**: Raw Texts → Knowledge Graph (via information extraction, IE) → Vector Store

2. **Processing Steps**:

   - Partition → Hierarchical Graph

   - Embed → Identify Causality (LLM) → Graph with Causal Gates

3. **Output**: Multi-layered hierarchical graph (H₀ to Hₙ) with causal gates
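One way to picture the offline output is as a small data structure: a stack of layers, each grouping nodes into modules, plus a list of gates. The sketch below is only an illustrative reading of the diagram. The class names (`Layer`, `CausalGate`, `HierarchicalGraph`), the example entities, and the idea that a gate is a directed link whose endpoints may sit in different modules are all assumptions, not details shown in the figure.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Layer:
    """One level H_i of the hierarchy (hypothetical representation)."""
    level: int
    # module id -> ids of the members grouped into that module
    modules: dict[str, set[str]] = field(default_factory=dict)


@dataclass(frozen=True)
class CausalGate:
    """A directed link judged causal (assumed meaning of a 'gate')."""
    source: str
    target: str


@dataclass
class HierarchicalGraph:
    layers: list[Layer] = field(default_factory=list)
    gates: list[CausalGate] = field(default_factory=list)

    def module_of(self, node: str, level: int = 0) -> Optional[str]:
        """Return the id of the module containing `node` at `level`, if any."""
        for module_id, members in self.layers[level].modules.items():
            if node in members:
                return module_id
        return None

    def cross_module_gates(self, level: int = 0) -> list[CausalGate]:
        """Gates whose endpoints fall in different modules at `level`."""
        return [
            g for g in self.gates
            if self.module_of(g.source, level) != self.module_of(g.target, level)
        ]


# Toy example: H0 groups entities into modules, H1 groups those modules.
graph = HierarchicalGraph(
    layers=[
        Layer(0, {"health": {"smoking", "cancer", "cholera"},
                  "weather": {"rain", "flood"}}),
        Layer(1, {"root": {"health", "weather"}}),
    ],
    gates=[CausalGate("smoking", "cancer"), CausalGate("flood", "cholera")],
)
```

In this toy graph only the `flood → cholera` gate crosses a module boundary, which is the kind of link the online phase would need in order to move between modules.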

**Right Section (Retrieve and Answer - Online):**

1. **Input**: Query → Embed and Score

2. **Processing Steps**:

   - Top K entities → N-hop via gates, cross modules

   - Distinguish Causal vs Spurious (LLM) → Context

3. **Output**: Answer with Query

**Visual Elements**:

- **Colors**:

  - Blue: LLM components (Identify Causality, Distinguish Causal vs Spurious)

  - Green: Causal gates

- **Arrows**: Indicate data flow direction

- **Hierarchical Structure**: Stacked graph layers (H₀ to Hₙ) in both sections

### Detailed Analysis

**Offline Phase**:

1. Raw texts are processed through Information Extraction (IE) to create a Knowledge Graph.

2. The Knowledge Graph is embedded and stored in a Vector Store.

3. The graph is partitioned to build a Hierarchical Graph, with layers labeled H₀ to Hₙ.

4. Causal relationships are identified using an LLM, resulting in a Graph with Causal Gates.
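The offline steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the method behind the diagram: connected components stand in for whatever partitioning algorithm the system actually uses, and `keyword_judge` is a hypothetical placeholder for the LLM call that identifies causality. The triples are made-up examples.

```python
from collections import defaultdict
from typing import Callable

Triple = tuple[str, str, str]  # (head, relation, tail), as produced by IE


def partition(triples: list[Triple]) -> list[set[str]]:
    """Group entities into modules using connected components.

    A stand-in for the 'Partition' step; a real system would likely
    use a community-detection algorithm instead.
    """
    adjacency: dict[str, set[str]] = defaultdict(set)
    for head, _, tail in triples:
        adjacency[head].add(tail)
        adjacency[tail].add(head)

    modules: list[set[str]] = []
    seen: set[str] = set()
    for start in sorted(adjacency):  # sorted for a deterministic order
        if start in seen:
            continue
        component: set[str] = set()
        stack = [start]
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(adjacency[node] - component)
        seen |= component
        modules.append(component)
    return modules


def build_causal_gates(
    triples: list[Triple],
    is_causal: Callable[[str, str, str], bool],
) -> list[tuple[str, str]]:
    """Keep the (head, tail) pairs that the judge marks as causal."""
    return [(h, t) for h, r, t in triples if is_causal(h, r, t)]


def keyword_judge(head: str, relation: str, tail: str) -> bool:
    """Hypothetical placeholder for the LLM causality check."""
    return relation in {"causes", "leads_to"}


triples = [
    ("smoking", "causes", "cancer"),
    ("cancer", "treated_by", "chemo"),
    ("rain", "leads_to", "flood"),
]
modules = partition(triples)
gates = build_causal_gates(triples, keyword_judge)
```

Passing the judge in as a callable keeps the sketch runnable without a model; swapping in a real LLM call would not change the surrounding code.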

**Online Phase**:

1. Queries are embedded and scored against the vector store.

2. The top-K scoring entities are selected, then expanded by N-hop traversal through causal gates and across modules.

3. An LLM distinguishes between causal and spurious relationships to refine the context.

4. The final answer is generated from the query together with the refined context.
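The online steps above can be sketched as a scoring pass followed by a bounded graph expansion and a filter. Everything here is an assumption made for illustration: cosine similarity as the scoring function, breadth-first search as the N-hop traversal, undirected gate edges, and the `is_causal` callable standing in for the LLM that separates causal from spurious candidates. Answer generation is left out.

```python
import math
from collections import deque
from typing import Callable


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query_vec: list[float], store: dict[str, list[float]], k: int) -> list[str]:
    """Return the k entity ids whose embeddings score highest against the query."""
    ranked = sorted(store, key=lambda e: cosine(query_vec, store[e]), reverse=True)
    return ranked[:k]


def n_hop(seeds: list[str], edges: list[tuple[str, str]], n: int) -> set[str]:
    """Breadth-first expansion: every node within n hops of any seed."""
    adjacency: dict[str, set[str]] = {}
    for source, target in edges:
        adjacency.setdefault(source, set()).add(target)
        adjacency.setdefault(target, set()).add(source)

    distance = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        node = queue.popleft()
        if distance[node] == n:
            continue  # hop budget exhausted along this path
        for neighbor in adjacency.get(node, ()):
            if neighbor not in distance:
                distance[neighbor] = distance[node] + 1
                queue.append(neighbor)
    return set(distance)


def retrieve_context(
    query_vec: list[float],
    store: dict[str, list[float]],
    gate_edges: list[tuple[str, str]],
    k: int,
    n: int,
    is_causal: Callable[[str], bool],
) -> list[str]:
    """Score, expand through gates, then drop candidates judged spurious."""
    seeds = top_k(query_vec, store, k)
    candidates = n_hop(seeds, gate_edges, n)
    return sorted(e for e in candidates if is_causal(e))


store = {"smoking": [1.0, 0.0], "cancer": [0.9, 0.1], "rain": [0.0, 1.0]}
edges = [("smoking", "cancer"), ("cancer", "chemo"), ("rain", "flood")]
context = retrieve_context([1.0, 0.0], store, edges, k=1, n=2,
                           is_causal=lambda e: e != "chemo")
```

With `k=1` the only seed is `smoking`; two hops reach `cancer` and `chemo`, and the placeholder judge then discards `chemo`.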

### Key Observations

1. **Modular Design**: The system separates offline graph construction from online query processing.

2. **Causal Reasoning**: Explicit emphasis on identifying and leveraging causal relationships throughout the pipeline.

3. **LLM Integration**: An LLM is used at two points: causality identification (offline) and causal-versus-spurious filtering (online).

4. **Hierarchical Navigation**: Query processing involves multi-layered graph traversal (N-hop) through causal gates.

5. **Contextualization**: The answer is generated from the query plus the LLM-filtered context, rather than from the raw retrieval results alone.

### Interpretation

The diagram suggests the following design intent:

- The offline phase focuses on building a causally aware graph structure optimized for efficient querying.

- The online phase leverages this preprocessed structure to enable context-aware question answering.

- Using an LLM for both causality identification and causal-versus-spurious filtering suggests an attempt to keep spurious associations out of the answer context, which may in turn reduce hallucination risk.

- The hierarchical graph structure with causal gates implies a design for handling complex, multi-relational data while maintaining query efficiency.

The system appears to shift the heavier work (information extraction, partitioning, and causality identification) to the offline phase, leaving scoring, traversal, and a single LLM filtering step for query time. This split potentially favors real-time performance while preserving causal reasoning quality.