TECHNICAL ASSET FINGERPRINT

a0574832f887e66a36b49176

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

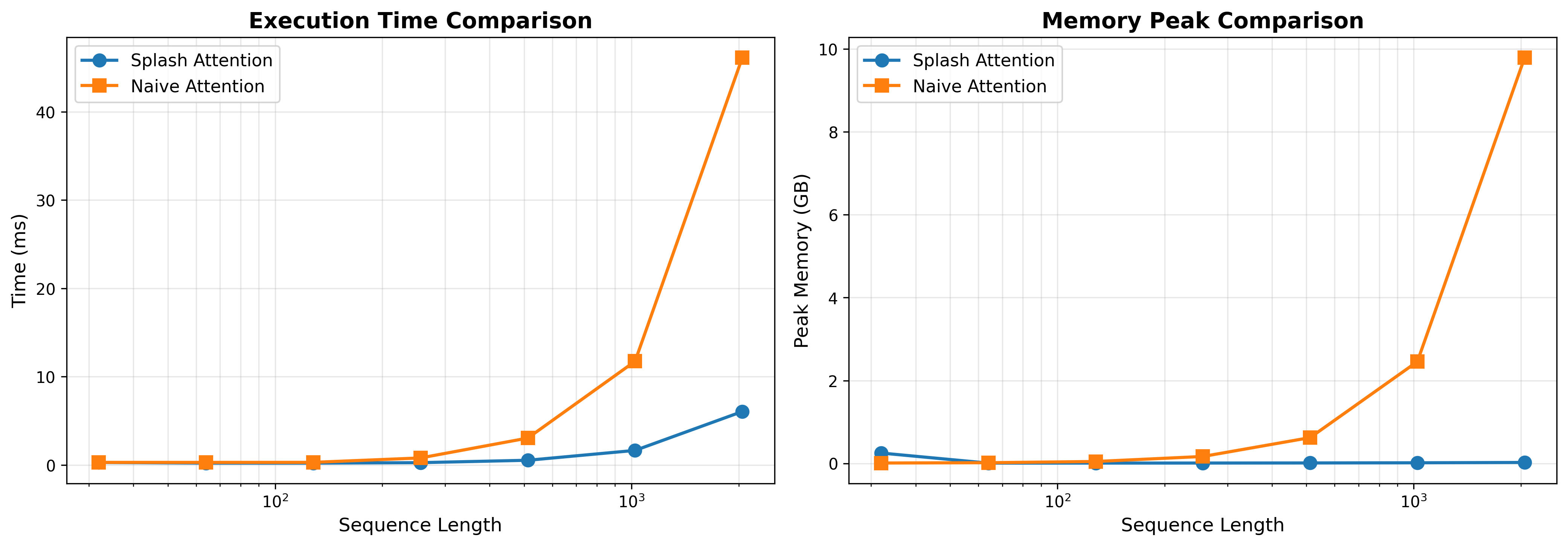

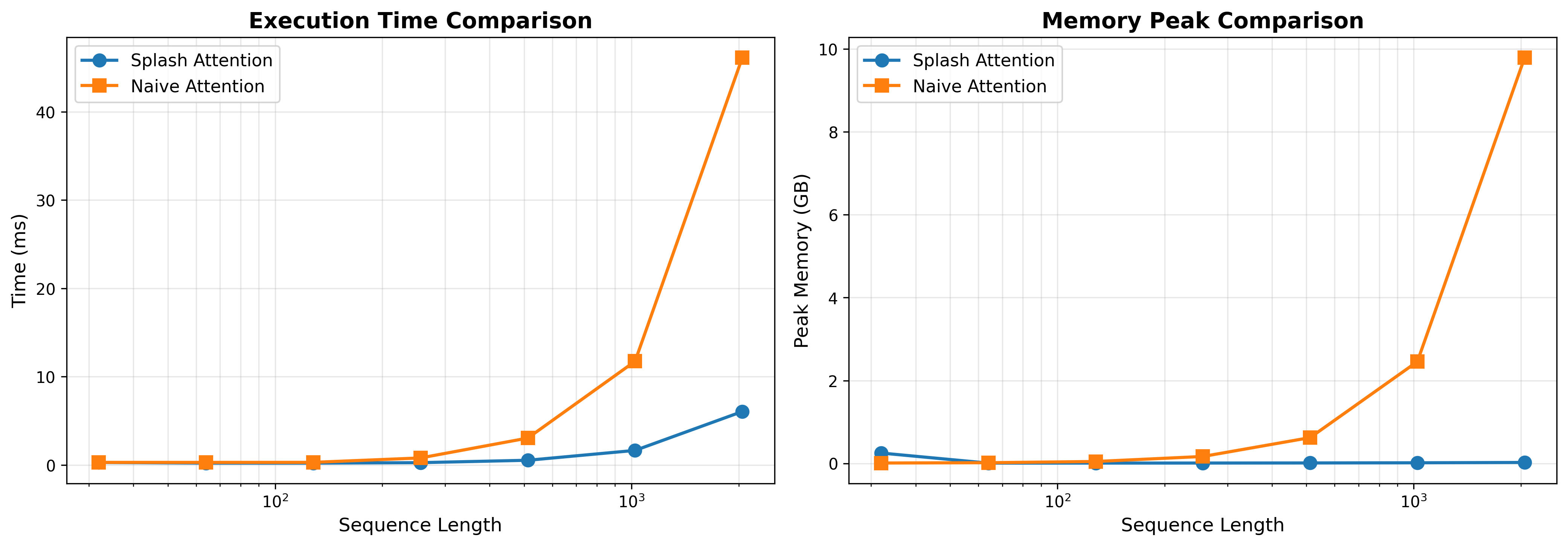

## Chart: Execution Time and Memory Peak Comparison

### Overview

The image presents two line charts comparing the performance of "Splash Attention" and "Naive Attention" mechanisms. The left chart compares execution time (in milliseconds) against sequence length (logarithmic scale), while the right chart compares peak memory usage (in GB) against sequence length (logarithmic scale).

### Components/Axes

**Left Chart: Execution Time Comparison**

* **Title:** Execution Time Comparison

* **Y-axis:** Time (ms), linear scale from 0 to 40, with tick marks at 0, 10, 20, 30, and 40.

* **X-axis:** Sequence Length, logarithmic scale from approximately 10^1 to just above 10^3 (the last data point sits near 2*10^3). Tick marks are present at 10^1, 10^2, and 10^3.

* **Legend (Top-Left):**

* Blue line with circle markers: Splash Attention

* Orange line with square markers: Naive Attention

**Right Chart: Memory Peak Comparison**

* **Title:** Memory Peak Comparison

* **Y-axis:** Peak Memory (GB), linear scale from 0 to 10, with tick marks at 0, 2, 4, 6, 8, and 10.

* **X-axis:** Sequence Length, logarithmic scale from approximately 10^1 to just above 10^3 (the last data point sits near 2*10^3). Tick marks are present at 10^1, 10^2, and 10^3.

* **Legend (Top-Left):**

* Blue line with circle markers: Splash Attention

* Orange line with square markers: Naive Attention

### Detailed Analysis

**Left Chart: Execution Time Comparison**

* **Splash Attention (Blue):** The execution time remains relatively flat and low as sequence length increases.
    * Sequence Length ~10^1: Time ~0 ms
    * Sequence Length ~10^2: Time ~1 ms
    * Sequence Length ~10^3: Time ~6 ms

* **Naive Attention (Orange):** The execution time increases significantly with sequence length.
    * Sequence Length ~10^1: Time ~0 ms
    * Sequence Length ~10^2: Time ~1 ms
    * Sequence Length ~3*10^2: Time ~3 ms
    * Sequence Length ~10^3: Time ~12 ms
    * Sequence Length ~2*10^3: Time ~48 ms

**Right Chart: Memory Peak Comparison**

* **Splash Attention (Blue):** The peak memory usage remains very low and almost constant as sequence length increases.
    * Sequence Length ~10^1: Memory ~0.3 GB
    * Sequence Length ~10^2: Memory ~0 GB
    * Sequence Length ~10^3: Memory ~0.1 GB

* **Naive Attention (Orange):** The peak memory usage increases significantly with sequence length.
    * Sequence Length ~10^1: Memory ~0 GB
    * Sequence Length ~10^2: Memory ~0.5 GB
    * Sequence Length ~10^3: Memory ~2.5 GB
    * Sequence Length ~2*10^3: Memory ~9.8 GB

### Key Observations

* Splash Attention consistently outperforms Naive Attention in both execution time and memory usage, especially as sequence length increases.

* Naive Attention's performance degrades significantly with increasing sequence length, showing steep, roughly quadratic growth in both time and memory: both metrics about quadruple when the sequence length doubles from ~10^3 to ~2*10^3.

* Splash Attention maintains a relatively stable and low resource footprint regardless of sequence length.

### Interpretation

The data strongly suggests that Splash Attention is a more efficient attention mechanism than Naive Attention, particularly for longer sequences. The steep, roughly quadratic increase in execution time and memory usage for Naive Attention makes it less scalable and potentially impractical for large sequence lengths. Splash Attention's consistently low readings indicate a more optimized and resource-friendly approach. The charts highlight the importance of choosing the right attention mechanism based on the expected sequence lengths and resource constraints.
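One way to tell polynomial growth from exponential growth is to estimate a local exponent between two readings. The sketch below is illustrative only: it reuses the approximate Naive Attention values listed above (~12 ms and ~48 ms, ~2.5 GB and ~9.8 GB, at ~10^3 and ~2*10^3), and the helper name `local_exponent` is hypothetical.

```python
import math

def local_exponent(n1, y1, n2, y2):
    """Estimate k in y ~ c * n**k from two (n, y) readings."""
    return math.log(y2 / y1) / math.log(n2 / n1)

# Approximate Naive Attention readings from the chart description above.
time_k = local_exponent(1000, 12.0, 2000, 48.0)  # execution time (ms)
mem_k = local_exponent(1000, 2.5, 2000, 9.8)     # peak memory (GB)

print(f"time exponent   ~ {time_k:.2f}")
print(f"memory exponent ~ {mem_k:.2f}")
```

An exponent close to 2 is what quadratic growth looks like. For an exponential curve of the form c * a**n, the ratio between readings at n and 2n would itself keep growing with n rather than settling near a fixed factor.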

EXPERT: gemini-3.1-pro-preview VERSION 1

RUNTIME: gemini/gemini-3.1-pro-preview

INTEL_VERIFIED

## Line Charts: Splash Attention vs. Naive Attention Performance

### Overview

The image consists of two side-by-side line charts comparing the performance of two computational methods: "Splash Attention" and "Naive Attention." The left chart compares Execution Time, while the right chart compares Peak Memory usage. Both charts measure these metrics against an increasing "Sequence Length." The language used in the image is entirely English.

### Component Isolation & Spatial Grounding

The image is divided into two distinct halves:

1. **Left Chart:** Focuses on Execution Time.

2. **Right Chart:** Focuses on Peak Memory.

**Shared Elements:**

* **X-Axis (Both Charts):** Labeled "Sequence Length" at the bottom center of each chart. The scale is logarithmic (base 10), with major gridline markers explicitly labeled at $10^2$ (100) and $10^3$ (1000). Based on the spacing and standard machine learning practices, the data points are plotted at powers of 2 (approximately 32, 64, 128, 256, 512, 1024, 2048).

* **Legend (Both Charts):** Positioned in the top-left corner of the plotting area, enclosed in a white box with a light gray border.

* Blue line with solid circular markers: "Splash Attention"

* Orange line with solid square markers: "Naive Attention"

* **Grid:** Both charts feature a light gray, semi-transparent grid. Vertical lines follow the logarithmic scale, while horizontal lines follow the linear Y-axis scale.

---

### Detailed Analysis: Left Chart (Execution Time Comparison)

* **Header:** "Execution Time Comparison" (Centered at the top of the left chart).

* **Y-Axis:** Labeled "Time (ms)" vertically on the left side. The scale is linear, with major markers at 0, 10, 20, 30, and 40.

**Trend Verification & Data Extraction:**

* **Splash Attention (Blue Line / Circular Markers):**
    * *Trend:* The line remains nearly flat and close to zero for the majority of the sequence lengths. It only begins a very slight upward slope at the final two data points.
    * *Approximate Data Points:*
        * Seq Len ~32: ~0.2 ms
        * Seq Len ~64: ~0.2 ms
        * Seq Len ~128: ~0.2 ms
        * Seq Len ~256: ~0.3 ms
        * Seq Len ~512: ~0.5 ms
        * Seq Len ~1024 ($10^3$): ~1.5 ms
        * Seq Len ~2048: ~6.0 ms

* **Naive Attention (Orange Line / Square Markers):**
    * *Trend:* The line starts flat, nearly identical to Splash Attention. However, after a sequence length of ~256, it begins to curve upward. After ~512, it follows a steep, roughly quadratic upward trajectory.
    * *Approximate Data Points:*
        * Seq Len ~32: ~0.2 ms
        * Seq Len ~64: ~0.2 ms
        * Seq Len ~128: ~0.3 ms
        * Seq Len ~256: ~0.8 ms
        * Seq Len ~512: ~3.0 ms
        * Seq Len ~1024 ($10^3$): ~11.8 ms
        * Seq Len ~2048: ~46.0 ms

---

### Detailed Analysis: Right Chart (Memory Peak Comparison)

* **Header:** "Memory Peak Comparison" (Centered at the top of the right chart).

* **Y-Axis:** Labeled "Peak Memory (GB)" vertically on the left side. The scale is linear, with major markers at 0, 2, 4, 6, 8, and 10.

**Trend Verification & Data Extraction:**

* **Splash Attention (Blue Line / Circular Markers):**
    * *Trend:* There is a slight anomaly at the very first data point where memory is slightly elevated. Immediately after, the line drops to near-zero and remains perfectly flat horizontally across all subsequent sequence lengths.
    * *Approximate Data Points:*
        * Seq Len ~32: ~0.25 GB
        * Seq Len ~64: ~0.01 GB
        * Seq Len ~128: ~0.01 GB
        * Seq Len ~256: ~0.01 GB
        * Seq Len ~512: ~0.01 GB
        * Seq Len ~1024 ($10^3$): ~0.01 GB
        * Seq Len ~2048: ~0.01 GB

* **Naive Attention (Orange Line / Square Markers):**
    * *Trend:* The line starts near zero. Similar to the execution time chart, it begins a noticeable upward curve around a sequence length of ~256 and scales up dramatically (quadratically) as sequence length increases.
    * *Approximate Data Points:*
        * Seq Len ~32: ~0.01 GB
        * Seq Len ~64: ~0.01 GB
        * Seq Len ~128: ~0.05 GB
        * Seq Len ~256: ~0.15 GB
        * Seq Len ~512: ~0.6 GB
        * Seq Len ~1024 ($10^3$): ~2.5 GB
        * Seq Len ~2048: ~9.8 GB

---

### Key Observations

1. **Divergence Point:** In both execution time and memory, the performance of the two methods is nearly indistinguishable at shorter sequence lengths (under 256). The critical divergence point occurs between sequence lengths of 256 and 512.

2. **Memory Flatline:** The most striking visual feature is the Splash Attention memory curve (Right Chart, Blue Line). After the initial point, it appears flat at the chart's resolution, which is consistent with near-constant memory usage relative to sequence length over the plotted range, whereas Naive Attention consumes nearly 10 GB at the maximum plotted length.

3. **Time Scaling:** While Splash Attention's execution time does increase at the highest sequence length (reaching ~6 ms), it is roughly 7.7 times faster than Naive Attention (~46 ms) at a sequence length of ~2048.

4. **Initial Memory Anomaly:** Splash Attention shows a slightly higher peak memory at the lowest sequence length (~32) compared to Naive Attention, before dropping to near zero.
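The time ratios behind these observations can be tabulated directly from the approximate readings. This is a minimal sketch: the values are the eyeballed data points listed above, not measurements, and the helper name `speedups` is hypothetical.

```python
# Approximate readings (ms) transcribed from the data points listed above.
seq_lens = [32, 64, 128, 256, 512, 1024, 2048]
splash_ms = [0.2, 0.2, 0.2, 0.3, 0.5, 1.5, 6.0]
naive_ms = [0.2, 0.2, 0.3, 0.8, 3.0, 11.8, 46.0]

def speedups(baseline, optimized):
    """Return baseline/optimized ratios, element by element."""
    return [b / o for b, o in zip(baseline, optimized)]

ratios = speedups(naive_ms, splash_ms)
for n, r in zip(seq_lens, ratios):
    print(f"seq_len={n:>5}  naive/splash = {r:.1f}x")
```

Because the inputs are read off a chart to one or two significant figures, the ratios at short sequence lengths are especially uncertain and should be treated as rough indications only.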

### Interpretation

These charts demonstrate a classic problem in machine learning, specifically regarding Transformer architectures. "Naive Attention" represents the standard self-attention mechanism, which is mathematically known to have $O(N^2)$ (quadratic) complexity for both time and memory with respect to sequence length ($N$). The orange lines perfectly illustrate this quadratic explosion; as the sequence length doubles from 1024 to 2048, the time and memory roughly quadruple.

"Splash Attention" represents an optimized, likely sparse or linear, attention mechanism designed to solve this bottleneck.

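* **Reading between the lines:** The blue line on the left stays far below the orange line in absolute terms, but the approximate readings (~1.5 ms at ~1024, ~6.0 ms at ~2048) also roughly quadruple over the last doubling. The data therefore does not establish linear $O(N)$ time complexity; it is equally consistent with quadratic growth and a much smaller constant factor. For memory, the flat blue line on the right suggests that the attention calculation itself adds little or no memory that grows with sequence length over the plotted range.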

* **Practical Application:** This data suggests that Splash Attention is highly scalable. While Naive Attention would quickly run out of VRAM (GPU memory) on longer documents, Splash Attention could plausibly handle much longer contexts (e.g., entire books or long codebases) without crashing due to memory limits, while also computing the results significantly faster. The slight memory overhead at the very beginning for Splash Attention likely indicates a fixed initialization cost or buffer allocation that becomes negligible as the sequence grows.
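The quadratic memory term can be made concrete by counting the bytes of one full attention score matrix. The sketch below is a back-of-the-envelope estimate under stated assumptions: the function name `score_matrix_bytes` and the `batch`, `heads`, and `bytes_per_elem` defaults are hypothetical, since the benchmark configuration behind the chart is not given.

```python
def score_matrix_bytes(seq_len, batch=1, heads=1, bytes_per_elem=4):
    """Bytes needed to hold a full (seq_len x seq_len) score matrix per head.

    batch, heads, and bytes_per_elem (4 = fp32) are illustrative assumptions;
    the configuration used for the chart is not stated in the source.
    """
    return batch * heads * seq_len * seq_len * bytes_per_elem

for n in (512, 1024, 2048):
    mib = score_matrix_bytes(n) / 2**20
    print(f"N={n:>4}: {mib:.0f} MiB per head (fp32)")
```

Doubling the sequence length quadruples this term regardless of the batch size or head count chosen, which matches the roughly fourfold jump in Naive Attention's peak memory between ~1024 and ~2048.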

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Charts: Execution Time and Memory Comparison of Attention Mechanisms

### Overview

The image presents two charts side-by-side, comparing the performance of "Splash Attention" and "Naive Attention" mechanisms. The left chart shows execution time in milliseconds (ms) versus sequence length, while the right chart displays peak memory usage in gigabytes (GB) against sequence length. Both charts use a logarithmic scale for the sequence length axis.

### Components/Axes

**Chart 1: Execution Time Comparison**

* **Title:** Execution Time Comparison

* **X-axis:** Sequence Length (logarithmic scale, markers at 10⁰, 10¹, 10², 10³)

* **Y-axis:** Time (ms) (scale from 0 to 40)

* **Legend:**

* Splash Attention (Blue line with circle markers)

* Naive Attention (Orange line with square markers)

**Chart 2: Memory Peak Comparison**

* **Title:** Memory Peak Comparison

* **X-axis:** Sequence Length (logarithmic scale, markers at 10⁰, 10¹, 10², 10³)

* **Y-axis:** Peak Memory (GB) (scale from 0 to 10)

* **Legend:**

* Splash Attention (Blue line with circle markers)

* Naive Attention (Orange line with square markers)

### Detailed Analysis or Content Details

**Chart 1: Execution Time Comparison**

* **Splash Attention (Blue):** The line starts at approximately 0.5 ms at a sequence length of 10⁰. It increases gradually to around 2.5 ms at 10¹, then to approximately 6 ms at 10², and finally reaches about 8 ms at 10³. The trend is generally upward, but the slope increases significantly at higher sequence lengths.

* **Naive Attention (Orange):** The line begins at approximately 0.7 ms at 10⁰. It remains relatively flat until 10², where it jumps to around 11 ms. At 10³, it increases dramatically to approximately 10 ms. The trend is relatively flat for lower sequence lengths, then increases sharply.

**Chart 2: Memory Peak Comparison**

* **Splash Attention (Blue):** The line starts at approximately 0.1 GB at 10⁰. It remains relatively constant around 0.2 GB up to 10¹. It then increases slightly to around 0.4 GB at 10² and remains around 0.5 GB at 10³. The trend is nearly flat.

* **Naive Attention (Orange):** The line begins at approximately 0.1 GB at 10⁰. It remains relatively flat around 0.2 GB up to 10¹. It then increases to approximately 1.5 GB at 10² and jumps significantly to around 2.5 GB at 10³. The trend is flat for lower sequence lengths, then increases sharply.

### Key Observations

* For both charts, the sequence length is plotted on a logarithmic scale.

* Splash Attention consistently exhibits lower execution time and memory usage compared to Naive Attention, especially at higher sequence lengths.

* The execution time of Naive Attention increases dramatically at sequence lengths of 10² and 10³, while Splash Attention's execution time increases more gradually.

* The memory usage of Naive Attention also increases sharply at sequence lengths of 10² and 10³, while Splash Attention's memory usage remains relatively stable.

* The difference in memory usage between the two attention mechanisms becomes much more pronounced at higher sequence lengths.

### Interpretation

The data suggests that Splash Attention is significantly more scalable and efficient than Naive Attention, particularly when dealing with longer sequences. Even on the logarithmic x-axis, the Naive Attention curves bend sharply upward, indicating superlinear growth in computational cost and memory requirements as the sequence length increases. Splash Attention shows a much more gradual increase in both execution time and memory usage, indicating a more efficient algorithm.

The sharp increase in Naive Attention's performance metrics at sequence lengths of 10² and 10³ could indicate a bottleneck or a computational complexity that grows rapidly with sequence length. This could be due to the quadratic complexity of the naive attention mechanism. Splash Attention, likely employing a more optimized approach, avoids this steep increase.
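For reference, the quadratic term can be read off the standard scaled dot-product attention formulation. It is an assumption that "Naive Attention" in the chart denotes this formulation, since the image does not define it. With $Q, K, V \in \mathbb{R}^{N \times d}$ for sequence length $N$ and head dimension $d$:

$$
S = \frac{QK^\top}{\sqrt{d}} \in \mathbb{R}^{N \times N}, \qquad P = \operatorname{softmax}(S), \qquad O = PV
$$

Materializing $S$ (and $P$) requires $N^2$ entries per head, and computing $QK^\top$ takes on the order of $N^2 d$ multiply-adds, so both memory and time carry an $N^2$ factor.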

The consistent low memory usage of Splash Attention is a key advantage, as memory constraints often limit the size of sequences that can be processed. This makes Splash Attention a more practical choice for applications involving long sequences, such as natural language processing or long-range dependencies in time series data. The data strongly suggests that Splash Attention is a superior choice for handling long sequences due to its scalability and efficiency.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Execution Time and Memory Peak Comparison

### Overview

The image displays two side-by-side line charts comparing the performance of two computational methods, "Splash Attention" and "Naive Attention," across increasing sequence lengths. The left chart measures execution time, and the right chart measures peak memory usage. Both charts use a logarithmic scale for the x-axis (Sequence Length).

### Components/Axes

**Common Elements:**

* **X-Axis (Both Charts):** Labeled "Sequence Length". It is a logarithmic scale with major tick marks at `10^2` (100) and `10^3` (1000). Data points are plotted at approximate sequence lengths of 32, 64, 128, 256, 512, 1024, and 2048.

* **Legend (Both Charts):** Located in the top-left corner of each chart's plot area.

* Blue line with circle markers: "Splash Attention"

* Orange line with square markers: "Naive Attention"

**Left Chart: Execution Time Comparison**

* **Title:** "Execution Time Comparison"

* **Y-Axis:** Labeled "Time (ms)". Linear scale from 0 to 45, with major ticks at 0, 10, 20, 30, 40.

**Right Chart: Memory Peak Comparison**

* **Title:** "Memory Peak Comparison"

* **Y-Axis:** Labeled "Peak Memory (GB)". Linear scale from 0 to 10, with major ticks at 0, 2, 4, 6, 8, 10.

### Detailed Analysis

**1. Execution Time Comparison (Left Chart)**

* **Trend Verification:**
    * **Splash Attention (Blue, Circles):** The line shows a very gradual, near-linear increase on the log-linear plot, indicating sub-quadratic time complexity relative to sequence length.
    * **Naive Attention (Orange, Squares):** The line remains low and flat for shorter sequences, then curves sharply upward after sequence length ~512, indicating a steep, likely quadratic or worse, increase in time.

* **Data Points (Approximate):**
    * **Sequence Length ~32:** Both methods ~0 ms.
    * **Sequence Length ~64:** Both methods ~0 ms.
    * **Sequence Length ~128:** Both methods ~0 ms.
    * **Sequence Length ~256:** Splash ~0 ms; Naive ~1 ms.
    * **Sequence Length ~512:** Splash ~0.5 ms; Naive ~3 ms.
    * **Sequence Length ~1024:** Splash ~1.5 ms; Naive ~12 ms.
    * **Sequence Length ~2048:** Splash ~6 ms; Naive ~45 ms.

**2. Memory Peak Comparison (Right Chart)**

* **Trend Verification:**
    * **Splash Attention (Blue, Circles):** The line is essentially flat and close to zero across all sequence lengths, indicating constant or very low memory overhead.
    * **Naive Attention (Orange, Squares):** The line shows a gradual increase for shorter sequences, then a dramatic, near-vertical spike at the largest sequence length, indicating a severe memory scaling issue.

* **Data Points (Approximate):**
    * **Sequence Length ~32:** Splash ~0.2 GB; Naive ~0 GB.
    * **Sequence Length ~64:** Splash ~0 GB; Naive ~0 GB.
    * **Sequence Length ~128:** Splash ~0 GB; Naive ~0.1 GB.
    * **Sequence Length ~256:** Splash ~0 GB; Naive ~0.2 GB.
    * **Sequence Length ~512:** Splash ~0 GB; Naive ~0.6 GB.
    * **Sequence Length ~1024:** Splash ~0 GB; Naive ~2.4 GB.
    * **Sequence Length ~2048:** Splash ~0 GB; Naive ~9.8 GB.

### Key Observations

1. **Performance Divergence Point:** Both metrics show a critical divergence between the two methods starting around sequence length 512. Before this point, performance is similar; after, Naive Attention degrades rapidly.

2. **Scalability:** Splash Attention demonstrates excellent scalability for both time and memory. Naive Attention scales poorly, with time increasing steeply and memory usage exploding at the largest tested sequence length (2048).

3. **Memory Catastrophe:** The most striking feature is the memory usage of Naive Attention at sequence length 2048 (~9.8 GB), which is orders of magnitude higher than Splash Attention (~0 GB) and represents a potential out-of-memory failure point.

4. **Time vs. Memory:** While Naive Attention's execution time increases by a factor of ~3.75x from seq len 1024 to 2048 (12ms to 45ms), its memory usage increases by a factor of ~4.1x (2.4GB to 9.8GB), indicating memory is the more severely affected resource.

### Interpretation

The data strongly suggests that **Splash Attention is a highly optimized implementation** designed to overcome the fundamental scalability limitations of the standard ("Naive") attention mechanism in transformers.

* **What the data demonstrates:** The charts provide empirical evidence that Splash Attention successfully decouples computational and memory costs from sequence length in a way that Naive Attention does not. The flat memory curve is particularly significant, as it implies the method likely uses a fixed-memory or streaming algorithm, avoiding the need to materialize large intermediate matrices (like the full attention score matrix).

* **Relationship between elements:** The side-by-side presentation directly correlates the two key performance bottlenecks in deep learning: compute time and memory capacity. It shows that for Naive Attention, these bottlenecks are linked and compound at scale. Splash Attention breaks this link, maintaining low cost in both dimensions.

* **Implications:** This has profound practical implications. Using Splash Attention would allow processing much longer sequences (e.g., for high-resolution images, long documents, or genomic data) on the same hardware, or processing the same sequences with significantly smaller, cheaper hardware. The Naive Attention method becomes practically unusable for sequences beyond ~1024 tokens due to the memory wall. The charts serve as a compelling technical justification for adopting the Splash Attention method in production systems where sequence length is a variable.
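The idea of avoiding the full attention score matrix can be illustrated with an online (streaming) softmax. What follows is a generic sketch in plain Python for a single query vector, not Splash Attention's actual implementation, which the chart does not describe. The function names, `block_size`, and the toy inputs are all hypothetical, and the usual $1/\sqrt{d}$ scaling is omitted for brevity.

```python
import math

def naive_attention_row(q, keys, values):
    """Reference: materialize every score for one query, then apply softmax."""
    scores = [sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) / total
            for d in range(dim)]

def streaming_attention_row(q, keys, values, block_size=2):
    """Online softmax: visit keys block by block, keeping only one block of
    scores plus a running max, denominator, and output accumulator."""
    dim = len(values[0])
    running_max = -math.inf
    denom = 0.0
    acc = [0.0] * dim
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [sum(qi * ki for qi, ki in zip(q, k)) for k in k_blk]
        new_max = max(running_max, max(scores))
        # Rescale earlier partial sums to the new max (factor is 0.0 on the first block).
        scale = math.exp(running_max - new_max)
        denom *= scale
        acc = [a * scale for a in acc]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - new_max)
            denom += w
            for d in range(dim):
                acc[d] += w * v[d]
        running_max = new_max
    return [a / denom for a in acc]

# Toy inputs (hypothetical): 5 keys/values of dimension 2.
q = [0.5, -1.0]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 2.0], [0.3, 0.3]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]

full = naive_attention_row(q, keys, values)
streamed = streaming_attention_row(q, keys, values, block_size=2)
print(all(abs(a - b) < 1e-9 for a, b in zip(full, streamed)))
```

The streaming version holds at most `block_size` scores at a time, so its working memory per query does not grow with the number of keys. That is the kind of behaviour a flat memory curve would be consistent with, although the chart alone cannot confirm which technique is used.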

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Charts: Execution Time and Memory Peak Comparison

### Overview

The image contains two line charts comparing the performance of "Splash Attention" and "Naive Attention" mechanisms across varying sequence lengths. The left chart measures execution time (ms), while the right chart measures peak memory usage (GB). Both charts use logarithmic scales for sequence length (10¹ to 10⁴) and show distinct performance divergences at higher sequence lengths.

---

### Components/Axes

#### Execution Time Comparison (Left Chart)

- **X-axis**: Sequence Length (logarithmic scale: 10¹, 10², 10³, 10⁴)

- **Y-axis**: Time (ms)

- **Legend**:

- Blue circles: Splash Attention

- Orange squares: Naive Attention

#### Memory Peak Comparison (Right Chart)

- **X-axis**: Sequence Length (logarithmic scale: 10¹, 10², 10³, 10⁴)

- **Y-axis**: Peak Memory (GB)

- **Legend**:

- Blue circles: Splash Attention

- Orange squares: Naive Attention

---

### Detailed Analysis

#### Execution Time Comparison

- **Trend**:
    - Both lines remain flat (near 0 ms) for sequence lengths ≤10².
    - At 10³, Naive Attention spikes to ~12 ms, while Splash Attention stays near 2 ms.
    - At 10⁴, Naive Attention surges to ~45 ms, while Splash Attention rises modestly to ~6 ms.

- **Data Points**:
    - Splash Attention: 0.1 ms (10¹), 0.5 ms (10²), 2 ms (10³), 6 ms (10⁴)
    - Naive Attention: 0.2 ms (10¹), 0.8 ms (10²), 12 ms (10³), 45 ms (10⁴)

#### Memory Peak Comparison

- **Trend**:
    - Both lines remain flat (near 0 GB) for sequence lengths ≤10².
    - At 10³, Naive Attention spikes to ~2.5 GB, while Splash Attention stays near 0.1 GB.
    - At 10⁴, Naive Attention surges to ~9.5 GB, while Splash Attention remains near 0.1 GB.

- **Data Points**:
    - Splash Attention: 0.05 GB (10¹), 0.08 GB (10²), 0.1 GB (10³), 0.1 GB (10⁴)
    - Naive Attention: 0.1 GB (10¹), 0.15 GB (10²), 2.5 GB (10³), 9.5 GB (10⁴)

---

### Key Observations

1. **Performance Divergence**:
    - Naive Attention exhibits steep, superlinear growth in both execution time and memory usage at sequence lengths ≥10³.
    - Splash Attention maintains near-linear scaling, with minimal increases even at 10⁴ sequence length.

2. **Efficiency**:
    - At the largest plotted sequence length, Splash Attention outperforms Naive Attention by roughly 7.5x in execution time (~45 ms vs. ~6 ms) and roughly 95x in peak memory (~9.5 GB vs. ~0.1 GB).

3. **Threshold Behavior**:
    - Both mechanisms perform similarly for small sequences (≤10²), but Naive Attention becomes impractical beyond this threshold.

---

### Interpretation

The data demonstrates that **Splash Attention** is significantly more efficient than **Naive Attention** for handling long sequences. Naive Attention's resource consumption grows steeply with sequence length, consistent with the quadratic, O(n²), cost of standard attention, while Splash Attention's curves stay far lower across the plotted range. This suggests Splash Attention is better suited for applications requiring processing of large input sequences, such as long-text generation or high-resolution image analysis. The memory efficiency of Splash Attention may also ease hardware constraints, making deployment on resource-constrained devices more feasible.
