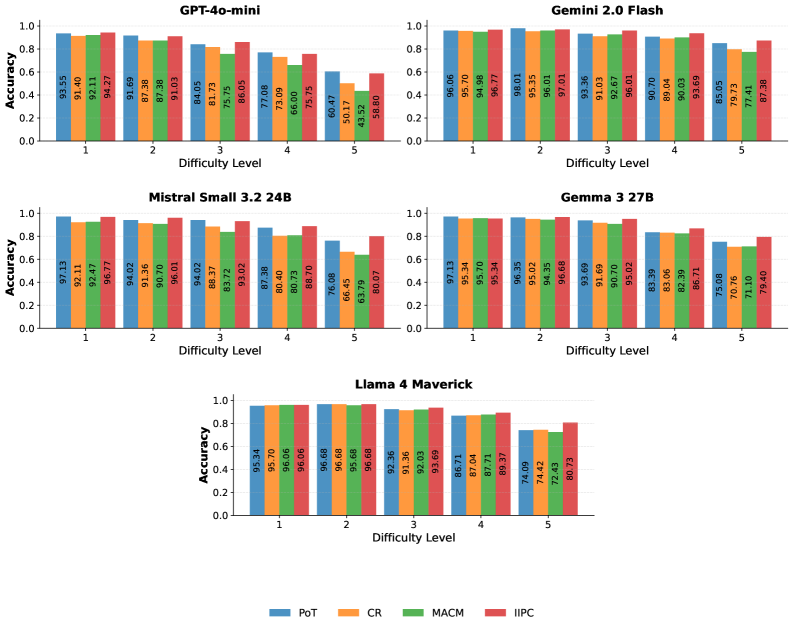

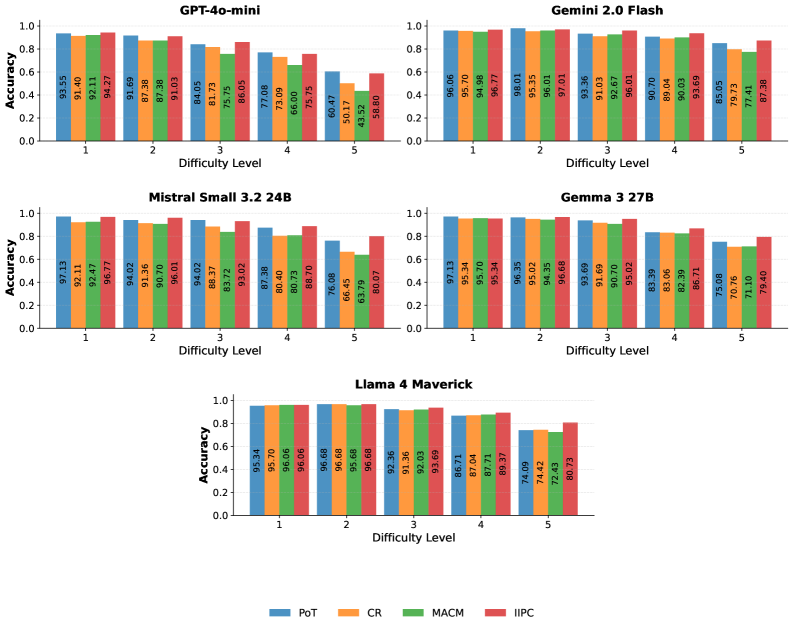

## Bar Charts: Model Accuracy by Difficulty Level

### Overview

This image shows five grouped bar charts, each giving the accuracy of one language model at five difficulty levels. The charts are arranged in a grid, with a legend at the bottom identifying the colors of the four methods compared within each group: PoT, CR, MACM, and IIPC.

### Components/Axes

**General Chart Elements (consistent across all charts):**

* **Y-axis Title:** "Accuracy"

* **Y-axis Scale:** Ranges from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-axis Title:** "Difficulty Level"

* **X-axis Markers:** Labeled 1, 2, 3, 4, and 5, representing increasing difficulty.

* **Legend:** Located at the bottom center of the image.

  * **PoT:** Blue bars

  * **CR:** Orange bars

  * **MACM:** Green bars

  * **IIPC:** Red bars

**Individual Chart Titles:**

1. GPT-4o-mini

2. Gemini 2.0 Flash

3. Mistral Small 3.2 24B

4. Gemma 3 27B

5. Llama 4 Maverick

### Detailed Analysis

**1. GPT-4o-mini**

* **Trend:** Accuracy generally decreases as difficulty level increases for all four methods. The one exception is IIPC, which rises slightly from level 2 (84.05%) to level 3 (86.05%).

Accuracy (%) by difficulty level, each value ±0.5:

| Difficulty level | PoT | CR | MACM | IIPC |
|---|---|---|---|---|
| 1 | 93.55 | 91.40 | 92.11 | 94.27 |
| 2 | 91.69 | 87.38 | 91.03 | 84.05 |
| 3 | 81.73 | 75.75 | 77.08 | 86.05 |
| 4 | 73.09 | 66.00 | 75.75 | 60.47 |
| 5 | 50.17 | 43.52 | 58.80 | 53.57 |

**2. Gemini 2.0 Flash**

* **Trend:** Accuracy remains very high and relatively stable across difficulty levels 1-4, with a noticeable drop at difficulty level 5.

Accuracy (%) by difficulty level, each value ±0.5:

| Difficulty level | PoT | CR | MACM | IIPC |
|---|---|---|---|---|
| 1 | 96.06 | 95.70 | 94.98 | 96.77 |
| 2 | 98.01 | 95.35 | 96.01 | 97.01 |
| 3 | 93.36 | 92.67 | 91.03 | 96.01 |
| 4 | 90.70 | 89.04 | 90.03 | 93.69 |
| 5 | 85.05 | 79.73 | 77.41 | 87.38 |

**3. Mistral Small 3.2 24B**

* **Trend:** Accuracy generally decreases as difficulty level increases, with a more pronounced drop from level 4 to 5.

Accuracy (%) by difficulty level, each value ±0.5:

| Difficulty level | PoT | CR | MACM | IIPC |
|---|---|---|---|---|
| 1 | 97.13 | 92.11 | 92.47 | 96.77 |
| 2 | 94.02 | 91.36 | 90.70 | 96.01 |
| 3 | 94.02 | 88.37 | 83.72 | 93.02 |
| 4 | 87.38 | 80.40 | 80.73 | 88.70 |
| 5 | 76.08 | 66.45 | 63.79 | 80.07 |

**4. Gemma 3 27B**

* **Trend:** Accuracy is high and relatively stable for difficulty levels 1-3, followed by a significant drop at levels 4 and 5.

Accuracy (%) by difficulty level, each value ±0.5:

| Difficulty level | PoT | CR | MACM | IIPC |
|---|---|---|---|---|
| 1 | 97.13 | 95.34 | 95.70 | 95.34 |
| 2 | 96.35 | 95.02 | 94.35 | 96.68 |
| 3 | 93.69 | 91.69 | 90.70 | 95.02 |
| 4 | 83.39 | 83.06 | 82.39 | 86.71 |
| 5 | 75.08 | 70.76 | 71.10 | 79.40 |

**5. Llama 4 Maverick**

* **Trend:** Accuracy is high and relatively stable for difficulty levels 1-3, then drops sharply at level 4. No results are visible for level 5.

Accuracy (%) by difficulty level, each value ±0.5:

| Difficulty level | PoT | CR | MACM | IIPC |
|---|---|---|---|---|
| 1 | 95.34 | 95.70 | 96.06 | 96.68 |
| 2 | 95.68 | 95.68 | 92.36 | 91.36 |
| 3 | 93.69 | 86.71 | 87.04 | 87.71 |
| 4 | 74.09 | 72.42 | 74.42 | 80.73 |

No bars are visible at difficulty level 5 for Llama 4 Maverick; the panel appears to end at level 4.

### Key Observations

* **General Trend:** All models exhibit a general decrease in accuracy as the difficulty level increases.

* **Model Performance at Low Difficulty:** Most models achieve very high accuracy (above 90%, often above 95%) at difficulty levels 1 and 2.

* **Performance Drop:** The most significant drops in accuracy occur at higher difficulty levels (4 and 5).

* **Model Variability:**

* **GPT-4o-mini** declines throughout the range rather than only at the top end, and its level-5 accuracies (43.52% to 58.80%) are the lowest of any model.

* **Gemini 2.0 Flash** maintains high accuracy for the first four levels, with a notable drop at level 5.

* **Mistral Small 3.2 24B** and **Gemma 3 27B** show a substantial decrease in accuracy starting from difficulty level 4.

* **Llama 4 Maverick** shows a significant drop at difficulty level 4 and appears to have no data for difficulty level 5.

* **Method Performance:** Within each model and difficulty level, the four methods (PoT, CR, MACM, IIPC) differ in accuracy. At difficulty level 1, IIPC is highest for three of the five models (GPT-4o-mini, Gemini 2.0 Flash, Llama 4 Maverick), while PoT leads for Mistral Small 3.2 24B and Gemma 3 27B. At the hardest level each panel shows, IIPC is highest for four of the five models; for GPT-4o-mini, MACM leads at level 5 (58.80%).
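The per-method comparisons above can be checked directly against the transcribed numbers. The Python sketch below is illustrative only: it assumes the values transcribed above are exact (ignoring the ±0.5 reading uncertainty), and the names `LEVEL_1`, `HARDEST`, `leader`, and `summarize` are hypothetical. It reports which method has the highest accuracy at level 1 and at the hardest level each panel shows (level 5, or level 4 for Llama 4 Maverick).

```python
# Hypothetical transcription of the chart values (percent), in legend order.
METHODS = ("PoT", "CR", "MACM", "IIPC")

# Accuracy at difficulty level 1 for each model.
LEVEL_1 = {
    "GPT-4o-mini": (93.55, 91.40, 92.11, 94.27),
    "Gemini 2.0 Flash": (96.06, 95.70, 94.98, 96.77),
    "Mistral Small 3.2 24B": (97.13, 92.11, 92.47, 96.77),
    "Gemma 3 27B": (97.13, 95.34, 95.70, 95.34),
    "Llama 4 Maverick": (95.34, 95.70, 96.06, 96.68),
}

# (hardest visible level, accuracies at that level); Llama stops at level 4.
HARDEST = {
    "GPT-4o-mini": (5, (50.17, 43.52, 58.80, 53.57)),
    "Gemini 2.0 Flash": (5, (85.05, 79.73, 77.41, 87.38)),
    "Mistral Small 3.2 24B": (5, (76.08, 66.45, 63.79, 80.07)),
    "Gemma 3 27B": (5, (75.08, 70.76, 71.10, 79.40)),
    "Llama 4 Maverick": (4, (74.09, 72.42, 74.42, 80.73)),
}


def leader(scores):
    """Return the method with the highest accuracy (first one wins a tie)."""
    best = max(range(len(METHODS)), key=lambda i: scores[i])
    return METHODS[best]


def summarize():
    """Map each model to (level-1 leader, hardest level, hardest-level leader)."""
    rows = {}
    for model, level_1_scores in LEVEL_1.items():
        level, hard_scores = HARDEST[model]
        rows[model] = (leader(level_1_scores), level, leader(hard_scores))
    return rows


if __name__ == "__main__":
    for model, (lead_1, level, lead_hard) in summarize().items():
        print(f"{model}: level 1 -> {lead_1}; level {level} -> {lead_hard}")
```

Run as a script, it prints one line per model; on these values IIPC leads at the hardest level for every model except GPT-4o-mini, where MACM leads.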

### Interpretation

The bar charts show how each language model's accuracy degrades as task complexity (difficulty level) increases. This is expected behavior for AI models: harder tasks demand more sophisticated reasoning, knowledge, and generalization.

The data suggest that Gemini 2.0 Flash is the most robust of the five models, staying at or above 89% accuracy for every method through level 4. Gemma 3 27B and Mistral Small 3.2 24B are comparably strong at low difficulty but drop sharply at levels 4 and 5, which may indicate limits in their ability to handle the hardest problems. GPT-4o-mini is sensitive to difficulty from the outset and declines more continuously. Because Llama 4 Maverick has no visible level-5 results, it can be compared with the other models only up to level 4.
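Because Llama 4 Maverick has no visible level-5 bars, a like-for-like comparison of all five models has to stop at level 4. The following is a minimal Python sketch, assuming the transcribed values are exact and using an unweighted mean over the four methods as a single robustness summary (an illustrative choice, not something the chart itself reports); `LEVEL_4` and `rank_by_mean` are hypothetical names.

```python
from statistics import mean

# Hypothetical transcription of level-4 accuracies (percent), in legend order
# PoT, CR, MACM, IIPC. Level 4 is the hardest level all five panels report.
LEVEL_4 = {
    "GPT-4o-mini": (73.09, 66.00, 75.75, 60.47),
    "Gemini 2.0 Flash": (90.70, 89.04, 90.03, 93.69),
    "Mistral Small 3.2 24B": (87.38, 80.40, 80.73, 88.70),
    "Gemma 3 27B": (83.39, 83.06, 82.39, 86.71),
    "Llama 4 Maverick": (74.09, 72.42, 74.42, 80.73),
}


def rank_by_mean(table):
    """Sort models by unweighted mean accuracy across methods, best first."""
    means = {model: mean(scores) for model, scores in table.items()}
    return sorted(means.items(), key=lambda kv: kv[1], reverse=True)


for model, avg in rank_by_mean(LEVEL_4):
    print(f"{model:<22} {avg:6.2f}%")
```

On these values, Gemini 2.0 Flash ranks first and GPT-4o-mini last at level 4, with Mistral Small 3.2 24B and Gemma 3 27B close together in second and third place.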

The differences among PoT, CR, MACM, and IIPC suggest that these methods respond differently to harder problems; explaining why would require knowing how each method works, which the chart does not show. Overall, the charts give a clear visual comparison of how well each model holds up as task difficulty increases. This is useful for matching models to tasks of varying complexity and for identifying where each model needs improvement.