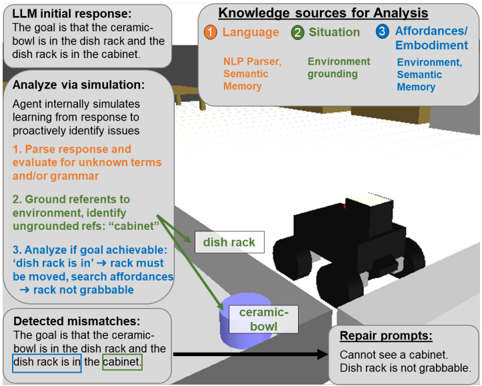

## Diagram: AI Agent Reasoning Process for Goal Achievement

### Overview

This image is a technical diagram illustrating the internal reasoning process of an AI agent tasked with achieving a specific goal in a simulated environment. The diagram is divided into two main sections: a flowchart on the left detailing the agent's analytical steps, and a 3D scene visualization on the right showing the physical context of the goal. The overall purpose is to demonstrate how the agent simulates, analyzes, and identifies issues with a proposed plan before execution.

### Components/Axes

The diagram is not a chart with axes but a process flow diagram with labeled components.

**1. Flowchart Components (Left Side):**

* **LLM initial response:** A box at the top stating the agent's initial goal.

* **Knowledge sources for Analysis:** A legend/key box at the top right listing three categories of knowledge used:

    1. **Language:** NLP Parser, Semantic Memory

    2. **Situation:** Environment grounding

    3. **Affordances/Embodiment:** Environment, Semantic Memory

* **Analyze via simulation:** A large box outlining a three-step internal simulation process.

* **Detected mismatches:** A box highlighting a specific problem found during analysis.

* **Repair prompts:** A box listing corrective feedback generated from the detected mismatch.

**2. 3D Scene Components (Right Side):**

* A rendered scene showing a kitchen-like environment.

* **Labeled Objects:**

    * `dish rack` (a grey, rectangular object on a counter)

    * `ceramic bowl` (a blue, circular object on the floor)

* **Spatial Relationships:** The dish rack is on a counter. The ceramic bowl is on the floor, below and to the left of the dish rack. A cabinet is implied but not visually rendered.
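The scene described above can be written down as a small object table, which makes the missing cabinet explicit. This is a hypothetical sketch: the `SceneObject` class, its field names, and the `is_perceived` helper are assumptions for illustration. Only the object names, their supporting surfaces, and the fact that the dish rack is not grabbable come from the diagram.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneObject:
    name: str
    on: str          # supporting surface, as described in the scene
    grabbable: bool  # affordance flag (assumed representation)


# Objects labeled in the rendered scene. No cabinet entry exists
# because no cabinet is rendered.
SCENE = {
    obj.name: obj
    for obj in (
        SceneObject("dish rack", on="counter", grabbable=False),
        # Assumption: the bowl is grabbable; the diagram does not say.
        SceneObject("ceramic bowl", on="floor", grabbable=True),
    )
}


def is_perceived(name: str) -> bool:
    """Return True if an object with this name exists in the scene table."""
    return name in SCENE
```

With this table, `is_perceived("cabinet")` is false, which is the condition the diagram calls an ungrounded reference.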

**3. Connective Elements:**

* **Arrows:** Black arrows indicate the flow of information and process steps, connecting the boxes in a logical sequence from the initial response, through analysis, to mismatch detection and repair prompts.

* **Green Lines:** Two green lines connect the text "cabinet" in the "Analyze via simulation" box to the 3D scene, indicating the agent is attempting to ground this textual reference in the visual environment.

### Detailed Analysis

**Textual Content Transcription:**

* **LLM initial response:** "The goal is that the ceramic bowl is in the dish rack and the dish rack is in the cabinet."

* **Knowledge sources for Analysis:**

    1. Language: NLP Parser, Semantic Memory

    2. Situation: Environment grounding

    3. Affordances/Embodiment: Environment, Semantic Memory

* **Analyze via simulation:** "Agent internally simulates learning from response to proactively identify issues."

    * "1. Parse response and evaluate for unknown terms and/or grammar"

    * "2. Ground refers to environment, identify ungrounded refs: 'cabinet'"

    * "3. Analyze if goal achievable: 'dish rack is in' -> rack must be moved, search affordances -> rack not grabbable"

* **Detected mismatches:** "The goal is that the ceramic bowl is in the dish rack and the dish rack is in the cabinet." (The phrases "dish rack is in" and "cabinet" are highlighted with green boxes).

* **Repair prompts:** "Cannot see a cabinet. Dish rack is not grabbable."

**Process Flow:**

1. The agent receives a goal from an LLM.

2. It accesses three knowledge sources (Language, Situation, Affordances) to analyze the goal.

3. It runs an internal simulation:

    * **Step 1 (Parse):** Checks the language of the goal.

    * **Step 2 (Ground):** Attempts to link words to the environment. It identifies "cabinet" as an **ungrounded reference**: the word exists in the goal but has no corresponding object in the perceived 3D scene.

    * **Step 3 (Analyze Achievability):** Breaks down the goal's requirements. It deduces that for the "dish rack is in" condition to be true, the rack must be moved. It then checks the environment's affordances (what actions are possible) and finds the rack is **not grabbable**.

4. Based on the simulation, it detects **mismatches** between the goal's requirements and the environment's state/capabilities.

5. It generates **repair prompts** (feedback) stating the specific failures: the missing cabinet and the non-grabbable rack.
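The process flow above can be sketched as a three-step check that produces repair prompts. This is a minimal illustration, not the agent's actual implementation: the `PERCEIVED` and `KNOWN_TERMS` tables stand in for environment grounding and semantic memory, the clause-splitting parser only handles sentences of the form shown in the diagram, and the "Unknown term" wording is invented here. The bowl being grabbable is also an assumption.

```python
import re

# Stand-in for perception: object name -> set of affordances.
PERCEIVED = {
    "ceramic bowl": {"grabbable"},  # assumption: the bowl can be grasped
    "dish rack": set(),             # from the diagram: rack not grabbable
}
# Stand-in for semantic memory: terms the agent understands.
KNOWN_TERMS = {"ceramic bowl", "dish rack", "cabinet"}

GOAL = ("The goal is that the ceramic bowl is in the dish rack "
        "and the dish rack is in the cabinet.")


def parse(goal):
    """Step 1: split the goal into (item, container) relations."""
    text = goal.strip().rstrip(".").lower()
    prefix = "the goal is that "
    if text.startswith(prefix):
        text = text[len(prefix):]
    relations = []
    for clause in text.split(" and "):
        match = re.fullmatch(r"the (.+) is in the (.+)", clause)
        if match:
            relations.append((match.group(1), match.group(2)))
    return relations


def analyze(goal):
    """Run parse, ground, and achievability checks; return repair prompts."""
    relations = parse(goal)
    terms = []
    for pair in relations:
        for term in pair:
            if term not in terms:
                terms.append(term)
    prompts = []
    # Step 1: flag terms missing from semantic memory.
    prompts += [f"Unknown term: {t}." for t in terms if t not in KNOWN_TERMS]
    # Step 2: flag known terms with no perceived object (ungrounded refs).
    prompts += [f"Cannot see a {t}." for t in terms
                if t in KNOWN_TERMS and t not in PERCEIVED]
    # Step 3: an item that must end up "in" something has to be moved,
    # so a perceived item needs the "grabbable" affordance.
    for item, _container in relations:
        if item in PERCEIVED and "grabbable" not in PERCEIVED[item]:
            prompts.append(f"{item.capitalize()} is not grabbable.")
    return prompts


print(analyze(GOAL))
```

Under these assumptions the sketch yields the two repair prompts shown in the diagram, in the same order.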

### Key Observations

* **Proactive Error Detection:** The core theme is the agent's ability to identify plan failures *before* attempting execution, through simulation.

* **Grounding Failure:** A primary issue is the "ungrounded reference" to "cabinet." The agent's perceptual system does not detect a cabinet in the scene, making that part of the goal impossible to verify or achieve.

* **Affordance Constraint:** A second, independent failure is identified: the dish rack lacks the "grabbable" affordance, meaning the agent cannot physically manipulate it to place it inside a cabinet (even if one were present).

* **Spatial Layout:** The legend ("Knowledge sources") is positioned in the top-right corner. The main analytical flowchart occupies the left ~60% of the image. The 3D scene is on the right, providing the visual context that the analysis is based upon.
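The grounding failure and the affordance constraint noted above are independent, and a pair of counterfactual scenes makes this easy to check. The sketch below is hypothetical: `check_goal` and the scene dictionaries are illustrative names, and the two counterfactual scenes are not part of the diagram.

```python
def check_goal(perceived, item, container):
    """Return repair prompts for the goal 'item is in container'.

    perceived maps each object name to its set of affordance names.
    """
    prompts = []
    if container not in perceived:
        prompts.append(f"Cannot see a {container}.")
    if item in perceived and "grabbable" not in perceived[item]:
        prompts.append(f"{item.capitalize()} is not grabbable.")
    return prompts


# Scene as described in the diagram (bowl affordance is an assumption).
scene = {"dish rack": set(), "ceramic bowl": {"grabbable"}}
# Counterfactual 1: a cabinet is visible, the rack is unchanged.
with_cabinet = {**scene, "cabinet": set()}
# Counterfactual 2: the rack is grabbable, still no cabinet.
with_grabbable_rack = {**scene, "dish rack": {"grabbable"}}

for name, s in [("original", scene),
                ("with cabinet", with_cabinet),
                ("grabbable rack", with_grabbable_rack)]:
    print(name, check_goal(s, "dish rack", "cabinet"))
```

Fixing either condition alone removes only the corresponding prompt, so the goal stays unachievable until both are addressed.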

### Interpretation

This diagram illustrates an approach to AI planning and embodied reasoning in which the agent does not simply accept an instruction. Instead, it follows an investigative process in the spirit of **Peircean inquiry**: it treats the goal as a hypothesis, gathers evidence (from language, perception, and knowledge of its capabilities), and tests the hypothesis against the environment through simulation.

The diagram suggests that for an AI to successfully interact with a physical (or simulated) world, it requires tight integration between:

1. **Semantic Understanding** (parsing the goal).

2. **Perceptual Grounding** (linking words to objects in the world).

3. **Physical Reasoning** (understanding which actions are possible, i.e., affordances).

The "detected mismatches" are not errors in the agent's logic but accurate diagnoses of a goal that is impossible or ill-posed given the current environmental state. The "repair prompts" are the critical output: they turn an internal analysis into actionable feedback that could be used to revise the goal, modify the environment, or update the agent's knowledge. The diagram argues that this kind of proactive, simulation-based analysis is essential for robust and safe AI agents operating in complex, realistic settings.
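One way the repair prompts could feed back into goal revision is a bounded analyze-and-revise loop. The diagram does not show such a loop, so this is only a sketch under stated assumptions: `refine_goal`, `toy_analyze`, and `toy_revise` are hypothetical names, and `toy_revise` is a hard-coded stand-in for an LLM call.

```python
from typing import Callable, List, Tuple

GOAL = ("The goal is that the ceramic bowl is in the dish rack "
        "and the dish rack is in the cabinet.")


def refine_goal(goal: str,
                analyze: Callable[[str], List[str]],
                revise: Callable[[str, List[str]], str],
                max_rounds: int = 3) -> Tuple[str, bool]:
    """Alternate analysis and revision until no repair prompts remain.

    Returns the final goal and whether it passed analysis.
    """
    for _ in range(max_rounds):
        prompts = analyze(goal)
        if not prompts:
            return goal, True
        goal = revise(goal, prompts)
    return goal, not analyze(goal)


def toy_analyze(goal: str) -> List[str]:
    """String-matching stand-in for the simulation-based analysis."""
    prompts = []
    if "cabinet" in goal:
        prompts.append("Cannot see a cabinet.")
    if "dish rack is in" in goal:
        prompts.append("Dish rack is not grabbable.")
    return prompts


def toy_revise(goal: str, prompts: List[str]) -> str:
    """Stand-in for an LLM: drop the clause that moves the dish rack."""
    return goal.split(" and the dish rack is in")[0].rstrip(".") + "."


final_goal, ok = refine_goal(GOAL, toy_analyze, toy_revise)
print(ok, final_goal)
```

The `max_rounds` bound is a design choice for the sketch: it keeps the loop from running indefinitely if the reviser never produces a goal that passes analysis.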
