TECHNICAL ASSET FINGERPRINT

a0a93be8e558ca9eaec8986a



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

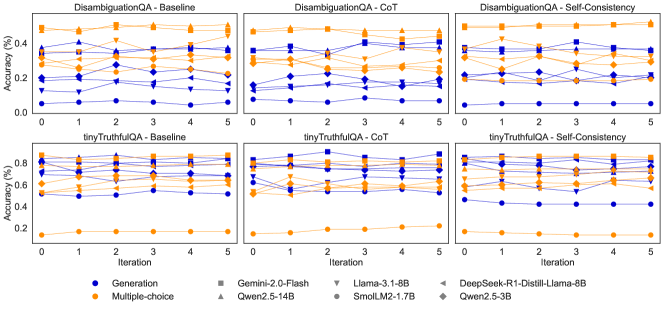

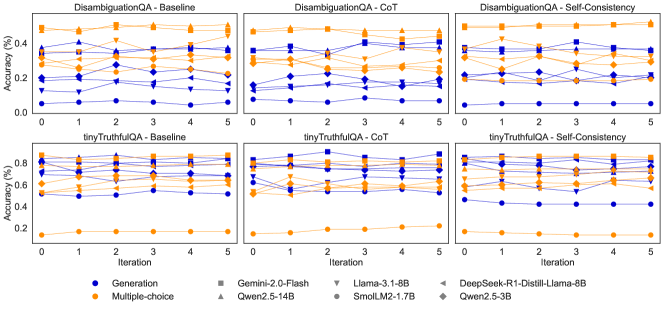

## Line Charts: Model Accuracy Comparison

### Overview

The image presents six line charts arranged in a 2x3 grid, comparing the accuracy of different language models across several iterations. The charts are grouped by task (DisambiguationQA and tinyTruthfulQA) and method (Baseline, CoT - Chain of Thought, and Self-Consistency). Each chart plots the accuracy (%) of various models against the iteration number. The models are distinguished by color and marker type, as indicated in the legend at the bottom.

### Components/Axes

* **Chart Titles (Top Row):**

* DisambiguationQA - Baseline (top-left)

* DisambiguationQA - CoT (top-center)

* DisambiguationQA - Self-Consistency (top-right)

* **Chart Titles (Bottom Row):**

* tinyTruthfulQA - Baseline (bottom-left)

* tinyTruthfulQA - CoT (bottom-center)

* tinyTruthfulQA - Self-Consistency (bottom-right)

* **Y-axis:**

* Label: "Accuracy (%)"

* Scale (DisambiguationQA charts): 0.0 to 0.4, with ticks at 0.0, 0.2, and 0.4.

* Scale (tinyTruthfulQA charts): 0.2 to 0.8, with ticks at 0.2, 0.4, 0.6, and 0.8.

* **X-axis:**

* Label: "Iteration"

* Scale: 0 to 5, with ticks at each integer value.

* **Legend (Bottom):**

* Position: Bottom center of the image.

* Entries:

* Blue Circle: Generation

* Orange Diamond: Multiple-choice

* Gray Square: Gemini-2.0-Flash

* Gray Upward-pointing Triangle: Qwen2.5-14B

* Gray Downward-pointing Triangle: Llama-3.1-8B

* Gray Circle with Diamond Center: SmolLM2-1.7B

* Gray Leftward-pointing Triangle: DeepSeek-R1-Distill-Llama-8B

* Gray Diamond with Plus Center: Qwen2.5-3B

### Detailed Analysis

**DisambiguationQA - Baseline (Top-Left)**

* **Generation (Blue Circles):** Starts around 0.05 accuracy and remains relatively flat.

* **Multiple-choice (Orange Diamonds):** Starts around 0.35 accuracy and fluctuates between 0.25 and 0.4.

* **Gemini-2.0-Flash (Gray Squares):** Starts around 0.25 accuracy and fluctuates between 0.2 and 0.3.

* **Qwen2.5-14B (Gray Upward-pointing Triangles):** Starts around 0.35 accuracy and fluctuates between 0.3 and 0.45.

* **Llama-3.1-8B (Gray Downward-pointing Triangles):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **SmolLM2-1.7B (Gray Circle with Diamond Center):** Starts around 0.15 accuracy and fluctuates between 0.1 and 0.2.

* **DeepSeek-R1-Distill-Llama-8B (Gray Leftward-pointing Triangles):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **Qwen2.5-3B (Gray Diamond with Plus Center):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

**DisambiguationQA - CoT (Top-Center)**

* **Generation (Blue Circles):** Starts around 0.1 accuracy and remains relatively flat.

* **Multiple-choice (Orange Diamonds):** Starts around 0.3 accuracy and fluctuates between 0.25 and 0.4.

* **Gemini-2.0-Flash (Gray Squares):** Starts around 0.3 accuracy and fluctuates between 0.25 and 0.35.

* **Qwen2.5-14B (Gray Upward-pointing Triangles):** Starts around 0.35 accuracy and fluctuates between 0.3 and 0.45.

* **Llama-3.1-8B (Gray Downward-pointing Triangles):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **SmolLM2-1.7B (Gray Circle with Diamond Center):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **DeepSeek-R1-Distill-Llama-8B (Gray Leftward-pointing Triangles):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **Qwen2.5-3B (Gray Diamond with Plus Center):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

**DisambiguationQA - Self-Consistency (Top-Right)**

* **Generation (Blue Circles):** Starts around 0.1 accuracy and remains relatively flat.

* **Multiple-choice (Orange Diamonds):** Starts around 0.35 accuracy and fluctuates between 0.3 and 0.4.

* **Gemini-2.0-Flash (Gray Squares):** Starts around 0.3 accuracy and fluctuates between 0.25 and 0.35.

* **Qwen2.5-14B (Gray Upward-pointing Triangles):** Starts around 0.35 accuracy and fluctuates between 0.3 and 0.45.

* **Llama-3.1-8B (Gray Downward-pointing Triangles):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **SmolLM2-1.7B (Gray Circle with Diamond Center):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **DeepSeek-R1-Distill-Llama-8B (Gray Leftward-pointing Triangles):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **Qwen2.5-3B (Gray Diamond with Plus Center):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

**tinyTruthfulQA - Baseline (Bottom-Left)**

* **Generation (Blue Circles):** Starts around 0.5 accuracy and remains relatively flat.

* **Multiple-choice (Orange Diamonds):** Starts around 0.15 accuracy and remains relatively flat.

* **Gemini-2.0-Flash (Gray Squares):** Starts around 0.75 accuracy and fluctuates between 0.7 and 0.8.

* **Qwen2.5-14B (Gray Upward-pointing Triangles):** Starts around 0.8 accuracy and fluctuates between 0.75 and 0.85.

* **Llama-3.1-8B (Gray Downward-pointing Triangles):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

* **SmolLM2-1.7B (Gray Circle with Diamond Center):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

* **DeepSeek-R1-Distill-Llama-8B (Gray Leftward-pointing Triangles):** Starts around 0.7 accuracy and fluctuates between 0.65 and 0.75.

* **Qwen2.5-3B (Gray Diamond with Plus Center):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

**tinyTruthfulQA - CoT (Bottom-Center)**

* **Generation (Blue Circles):** Starts around 0.5 accuracy and remains relatively flat.

* **Multiple-choice (Orange Diamonds):** Starts around 0.15 accuracy and remains relatively flat.

* **Gemini-2.0-Flash (Gray Squares):** Starts around 0.75 accuracy and fluctuates between 0.7 and 0.8.

* **Qwen2.5-14B (Gray Upward-pointing Triangles):** Starts around 0.8 accuracy and fluctuates between 0.75 and 0.85.

* **Llama-3.1-8B (Gray Downward-pointing Triangles):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

* **SmolLM2-1.7B (Gray Circle with Diamond Center):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

* **DeepSeek-R1-Distill-Llama-8B (Gray Leftward-pointing Triangles):** Starts around 0.7 accuracy and fluctuates between 0.65 and 0.75.

* **Qwen2.5-3B (Gray Diamond with Plus Center):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

**tinyTruthfulQA - Self-Consistency (Bottom-Right)**

* **Generation (Blue Circles):** Starts around 0.5 accuracy and remains relatively flat.

* **Multiple-choice (Orange Diamonds):** Starts around 0.15 accuracy and remains relatively flat.

* **Gemini-2.0-Flash (Gray Squares):** Starts around 0.75 accuracy and fluctuates between 0.7 and 0.8.

* **Qwen2.5-14B (Gray Upward-pointing Triangles):** Starts around 0.8 accuracy and fluctuates between 0.75 and 0.85.

* **Llama-3.1-8B (Gray Downward-pointing Triangles):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

* **SmolLM2-1.7B (Gray Circle with Diamond Center):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

* **DeepSeek-R1-Distill-Llama-8B (Gray Leftward-pointing Triangles):** Starts around 0.7 accuracy and fluctuates between 0.65 and 0.75.

* **Qwen2.5-3B (Gray Diamond with Plus Center):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

### Key Observations

* The "Multiple-choice" series (orange diamonds) consistently shows lower accuracy on the "tinyTruthfulQA" task than every other series.

* The "Generation" method (blue circles) shows relatively low accuracy on the "DisambiguationQA" task, but higher accuracy on the "tinyTruthfulQA" task.

* The models Gemini-2.0-Flash, Qwen2.5-14B, DeepSeek-R1-Distill-Llama-8B, and Qwen2.5-3B generally achieve higher accuracy on the "tinyTruthfulQA" task.

* The accuracy of most models remains relatively stable across iterations, with only minor fluctuations.

* The CoT and Self-Consistency methods do not appear to significantly improve the accuracy compared to the Baseline method for most models.

### Interpretation

The charts compare the performance of different language models on two question-answering tasks ("DisambiguationQA" and "tinyTruthfulQA") using different methods (Baseline, Chain of Thought (CoT), and Self-Consistency). The data suggests that the choice of model and task significantly impacts accuracy. For instance, the "Multiple-choice" method seems less effective for the "tinyTruthfulQA" task, while the "Generation" method performs better on "tinyTruthfulQA" than on "DisambiguationQA". The relatively flat lines across iterations indicate that the models' performance does not significantly change with more iterations, suggesting that the models have reached a stable level of accuracy. The CoT and Self-Consistency methods, designed to improve reasoning, do not show a substantial advantage over the baseline, which could indicate that these tasks do not heavily rely on complex reasoning or that the models are not effectively utilizing these methods.


EXPERT: gemini-2.5-flash-free VERSION 2

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Chart Type: Grid of Line Charts showing Model Accuracy over Iterations

### Overview

The image displays a 2x3 grid of line charts, illustrating the accuracy of various language models across 6 iterations (0 to 5). The charts are categorized by two main question-answering (QA) datasets: "DisambiguationQA" (top row) and "tinyTruthfulQA" (bottom row), and three different inference strategies: "Baseline," "CoT" (Chain-of-Thought), and "Self-Consistency." Within each subplot, model performance is differentiated by two answer formats: "Generation" (blue lines) and "Multiple-choice" (orange lines), with specific markers identifying individual models. The Y-axis consistently represents "Accuracy (%)" and the X-axis represents "Iteration."

### Components/Axes

**Overall Structure:**

The image is divided into two main rows and three main columns, forming a 2x3 grid of individual line charts.

**Row Titles (Implicit):**

* **Top Row:** DisambiguationQA

* **Bottom Row:** tinyTruthfulQA

**Column Titles (Explicit, top of each subplot):**

* **Column 1:** Baseline

* **Column 2:** CoT

* **Column 3:** Self-Consistency

**Axes:**

* **Common Y-axis Label (left-center):** Accuracy (%)

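* **Top Row (DisambiguationQA) Y-axis Range:** Approximately 0.0 to 0.4, with intermediate ticks at 0.1, 0.2, and 0.3. Although the axis label reads "Accuracy (%)", the tick values are fractions of 1 (0.4 corresponds to 40%); values written below in the form "~0.35%" are on this fractional scale.

* **Bottom Row (tinyTruthfulQA) Y-axis Range:** Approximately 0.2 to 0.8 on the same fractional scale, with intermediate ticks at 0.4 and 0.6.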

* **Common X-axis Label (bottom-center):** Iteration

* **X-axis Range (all subplots):** 0 to 5 (with major ticks at 0, 1, 2, 3, 4, 5).
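The axis label reads "Accuracy (%)" while the ticks run only from 0.0 to 0.8, which suggests the plotted values are fractions rather than percentages. A minimal sketch of the conversion under that reading (the function name is hypothetical and not taken from the source):

```python
def fraction_to_percent(value: float) -> float:
    """Convert an accuracy given as a fraction of 1 into a percentage."""
    if not 0.0 <= value <= 1.0:
        raise ValueError(f"expected a fraction in [0, 1], got {value}")
    # Round to one decimal place to hide floating-point noise.
    return round(value * 100.0, 1)


# Upper tick of each row, as listed in the axis description.
top_row_max = fraction_to_percent(0.4)     # DisambiguationQA row
bottom_row_max = fraction_to_percent(0.8)  # tinyTruthfulQA row
```

Read this way, the top row tops out at 40% and the bottom row at 80%.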

**Legend (bottom-center, below all charts):**

The legend defines the color for the answer format and the marker for the specific model. Each model has both a "Generation" (blue) and "Multiple-choice" (orange) line, using the same marker.

* **Answer Format (Color):**

* Blue Circle: Generation

* Orange Circle: Multiple-choice

* **Models (Marker & Name):**

* Square: Gemini-2.0-Flash

* Up-Triangle: Qwen2.5-14B

* Down-Triangle: Llama-3.1-8B

* Diamond: SmolLM2-1.7B

* Left-Triangle: DeepSeek-R1-Distill-Llama-8B

* Right-Triangle: Qwen2.5-3B

*(Note: The legend shows grey markers for models, but in the plots, these markers appear in blue for "Generation" and orange for "Multiple-choice" lines. The blue and orange circles in the legend likely represent a generic "Generation" and "Multiple-choice" performance, or an unnamed baseline model, as lines with circle markers are present in the plots.)*

### Detailed Analysis

The analysis is structured by row (QA dataset) and then by column (inference strategy). Within each subplot, general trends for "Generation" and "Multiple-choice" are described, followed by observations on specific models.

---

#### **Row 1: DisambiguationQA**

**1. DisambiguationQA - Baseline (Top-Left Chart)**

* **Y-axis Range:** 0.0% to 0.4%

* **Generation (Blue Lines):**

* **Trend:** Most blue lines show relatively stable or slightly fluctuating accuracy, generally staying between ~0.05% and ~0.35%.

* **Specifics:**

* The blue circle line (generic Generation) starts at ~0.05% and remains flat.

* The blue diamond (SmolLM2-1.7B) starts around ~0.2% and fluctuates, ending near ~0.15%.

* The blue square (Gemini-2.0-Flash) starts around ~0.35%, dips to ~0.2% at iteration 1, then recovers to ~0.35% by iteration 5.

* The blue up-triangle (Qwen2.5-14B) starts around ~0.3%, dips to ~0.2% at iteration 1, then recovers to ~0.3% by iteration 5.

* The blue down-triangle (Llama-3.1-8B) starts around ~0.25%, dips to ~0.15% at iteration 1, then recovers to ~0.25% by iteration 5.

* The blue left-triangle (DeepSeek-R1-Distill-Llama-8B) starts around ~0.15% and remains relatively stable.

* The blue right-triangle (Qwen2.5-3B) starts around ~0.15% and remains relatively stable.

* **Multiple-choice (Orange Lines):**

* **Trend:** Orange lines generally show higher accuracy than blue lines, ranging from ~0.2% to ~0.45%. Many show slight fluctuations.

* **Specifics:**

* The orange circle line (generic Multiple-choice) starts at ~0.35% and remains relatively stable.

* The orange square (Gemini-2.0-Flash) starts around ~0.35%, peaks at ~0.45% at iteration 1, then fluctuates around ~0.35-0.4%.

* The orange up-triangle (Qwen2.5-14B) starts around ~0.35%, peaks at ~0.45% at iteration 1, then fluctuates around ~0.35-0.4%.

* The orange down-triangle (Llama-3.1-8B) starts around ~0.25%, peaks at ~0.35% at iteration 1, then fluctuates around ~0.25-0.3%.

* The orange diamond (SmolLM2-1.7B) starts around ~0.2%, fluctuates, ending near ~0.25%.

* The orange left-triangle (DeepSeek-R1-Distill-Llama-8B) starts around ~0.25% and remains relatively stable.

* The orange right-triangle (Qwen2.5-3B) starts around ~0.2% and remains relatively stable.

**2. DisambiguationQA - CoT (Top-Middle Chart)**

* **Y-axis Range:** 0.0% to 0.4%

* **Generation (Blue Lines):**

* **Trend:** Similar to Baseline, blue lines are generally lower than orange lines, mostly between ~0.05% and ~0.35%. Some models show slight improvements or more pronounced fluctuations compared to Baseline.

* **Specifics:**

* The blue circle line (generic Generation) starts at ~0.05% and remains flat.

* The blue square (Gemini-2.0-Flash) starts around ~0.35%, peaks at ~0.4% at iteration 3, then drops to ~0.3% by iteration 5.

* The blue up-triangle (Qwen2.5-14B) starts around ~0.3%, peaks at ~0.35% at iteration 3, then drops to ~0.25% by iteration 5.

* **Multiple-choice (Orange Lines):**

* **Trend:** Orange lines generally maintain higher accuracy, mostly between ~0.2% and ~0.45%. Some show slight improvements or more pronounced fluctuations compared to Baseline.

* **Specifics:**

* The orange square (Gemini-2.0-Flash) starts around ~0.35%, peaks at ~0.45% at iteration 3, then drops to ~0.35% by iteration 5.

* The orange up-triangle (Qwen2.5-14B) starts around ~0.35%, peaks at ~0.45% at iteration 3, then drops to ~0.35% by iteration 5.

**3. DisambiguationQA - Self-Consistency (Top-Right Chart)**

* **Y-axis Range:** 0.0% to 0.4%

* **Generation (Blue Lines):**

* **Trend:** Similar to CoT, blue lines are generally lower, mostly between ~0.05% and ~0.35%. Fluctuations are present.

* **Specifics:**

* The blue circle line (generic Generation) starts at ~0.05% and remains flat.

* The blue square (Gemini-2.0-Flash) starts around ~0.35%, peaks at ~0.4% at iteration 1, then fluctuates around ~0.3-0.35%.

* The blue up-triangle (Qwen2.5-14B) starts around ~0.3%, peaks at ~0.35% at iteration 1, then fluctuates around ~0.25-0.3%.

* **Multiple-choice (Orange Lines):**

* **Trend:** Orange lines generally maintain higher accuracy, mostly between ~0.2% and ~0.45%. Fluctuations are present.

* **Specifics:**

* The orange square (Gemini-2.0-Flash) starts around ~0.35%, peaks at ~0.45% at iteration 1, then fluctuates around ~0.35-0.4%.

* The orange up-triangle (Qwen2.5-14B) starts around ~0.35%, peaks at ~0.45% at iteration 1, then fluctuates around ~0.35-0.4%.

---

#### **Row 2: tinyTruthfulQA**

**4. tinyTruthfulQA - Baseline (Bottom-Left Chart)**

* **Y-axis Range:** 0.2% to 0.8%

* **Generation (Blue Lines):**

* **Trend:** Blue lines are generally clustered in the mid-to-high range, mostly between ~0.5% and ~0.85%. Most show stable or slightly fluctuating performance.

* **Specifics:**

* The blue circle line (generic Generation) starts at ~0.5% and remains flat.

* The blue square (Gemini-2.0-Flash) starts around ~0.8%, fluctuates slightly, ending near ~0.8%.

* The blue up-triangle (Qwen2.5-14B) starts around ~0.8%, fluctuates slightly, ending near ~0.8%.

* The blue down-triangle (Llama-3.1-8B) starts around ~0.7%, fluctuates slightly, ending near ~0.7%.

* The blue diamond (SmolLM2-1.7B) starts around ~0.6%, fluctuates slightly, ending near ~0.6%.

* The blue left-triangle (DeepSeek-R1-Distill-Llama-8B) starts around ~0.75%, fluctuates slightly, ending near ~0.75%.

* The blue right-triangle (Qwen2.5-3B) starts around ~0.55%, fluctuates slightly, ending near ~0.55%.

* **Multiple-choice (Orange Lines):**

* **Trend:** Orange lines are generally clustered in the mid-to-high range, mostly between ~0.5% and ~0.85%. One orange line (circle) is significantly lower.

* **Specifics:**

* The orange circle line (generic Multiple-choice) starts at ~0.15% and remains flat, significantly lower than all other lines in this subplot.

* The orange square (Gemini-2.0-Flash) starts around ~0.8%, fluctuates slightly, ending near ~0.8%.

* The orange up-triangle (Qwen2.5-14B) starts around ~0.8%, fluctuates slightly, ending near ~0.8%.

* The orange down-triangle (Llama-3.1-8B) starts around ~0.7%, fluctuates slightly, ending near ~0.7%.

* The orange diamond (SmolLM2-1.7B) starts around ~0.6%, fluctuates slightly, ending near ~0.6%.

* The orange left-triangle (DeepSeek-R1-Distill-Llama-8B) starts around ~0.75%, fluctuates slightly, ending near ~0.75%.

* The orange right-triangle (Qwen2.5-3B) starts around ~0.55%, fluctuates slightly, ending near ~0.55%.

**5. tinyTruthfulQA - CoT (Bottom-Middle Chart)**

* **Y-axis Range:** 0.2% to 0.8%

* **Generation (Blue Lines):**

* **Trend:** Similar to Baseline, blue lines are clustered in the mid-to-high range, mostly between ~0.5% and ~0.85%. Performance is generally stable.

* **Specifics:**

* The blue circle line (generic Generation) starts at ~0.5% and remains flat.

* The blue square (Gemini-2.0-Flash) starts around ~0.8%, fluctuates slightly, ending near ~0.8%.

* The blue up-triangle (Qwen2.5-14B) starts around ~0.8%, fluctuates slightly, ending near ~0.8%.

* **Multiple-choice (Orange Lines):**

* **Trend:** Similar to Baseline, orange lines are clustered in the mid-to-high range, mostly between ~0.5% and ~0.85%, with the orange circle line being a significant outlier at the bottom.

* **Specifics:**

* The orange circle line (generic Multiple-choice) starts at ~0.15% and remains flat.

* The orange square (Gemini-2.0-Flash) starts around ~0.8%, fluctuates slightly, ending near ~0.8%.

* The orange up-triangle (Qwen2.5-14B) starts around ~0.8%, fluctuates slightly, ending near ~0.8%.

**6. tinyTruthfulQA - Self-Consistency (Bottom-Right Chart)**

* **Y-axis Range:** 0.2% to 0.8%

* **Generation (Blue Lines):**

* **Trend:** Similar to CoT, blue lines are clustered in the mid-to-high range, mostly between ~0.5% and ~0.85%. Performance is generally stable.

* **Specifics:**

* The blue circle line (generic Generation) starts at ~0.5% and remains flat.

* The blue square (Gemini-2.0-Flash) starts around ~0.8%, fluctuates slightly, ending near ~0.8%.

* The blue up-triangle (Qwen2.5-14B) starts around ~0.8%, fluctuates slightly, ending near ~0.8%.

* **Multiple-choice (Orange Lines):**

* **Trend:** Similar to CoT, orange lines are clustered in the mid-to-high range, mostly between ~0.5% and ~0.85%, with the orange circle line being a significant outlier at the bottom.

* **Specifics:**

* The orange circle line (generic Multiple-choice) starts at ~0.15% and remains flat.

* The orange square (Gemini-2.0-Flash) starts around ~0.8%, fluctuates slightly, ending near ~0.8%.

* The orange up-triangle (Qwen2.5-14B) starts around ~0.8%, fluctuates slightly, ending near ~0.8%.

### Key Observations

1. **Dataset Impact on Accuracy:** Accuracy on `tinyTruthfulQA` (bottom row) is significantly higher (roughly 0.5 to 0.85 on the axis, i.e. 50% to 85%) than on `DisambiguationQA` (top row), where accuracy ranges from roughly 0.05 to 0.45 (5% to 45%). This suggests `tinyTruthfulQA` is an easier task or that the models are better suited to it.

2. **Answer Format Impact:**

* For `DisambiguationQA`, "Multiple-choice" (orange lines) generally outperforms "Generation" (blue lines) across all inference strategies. The orange lines are consistently positioned above the blue lines.

* For `tinyTruthfulQA`, "Generation" and "Multiple-choice" performances are largely comparable for most specific models. However, the generic "Multiple-choice" (orange circle) line is a significant outlier, showing very low accuracy (~0.15, about 15%) compared to all other lines, including the generic "Generation" line (~0.5, about 50%).

3. **Inference Strategy Impact (Baseline vs. CoT vs. Self-Consistency):**

* For `DisambiguationQA`, there are no dramatic, consistent improvements across "Baseline," "CoT," and "Self-Consistency." Some models show minor fluctuations or slight peaks at early iterations with CoT/Self-Consistency, but overall performance levels remain similar.

* For `tinyTruthfulQA`, the inference strategies (CoT, Self-Consistency) appear to have very little to no discernible impact on the accuracy of the models compared to the "Baseline" condition. The lines for each model largely overlap across the three columns.

4. **Model Performance:**

* In `DisambiguationQA`, Gemini-2.0-Flash (square) and Qwen2.5-14B (up-triangle) generally show the highest accuracy among the models for both Generation and Multiple-choice. SmolLM2-1.7B (diamond) and Qwen2.5-3B (right-triangle) tend to be among the lower performers.

* In `tinyTruthfulQA`, Gemini-2.0-Flash (square) and Qwen2.5-14B (up-triangle) again appear to be top performers, closely followed by DeepSeek-R1-Distill-Llama-8B (left-triangle) and Llama-3.1-8B (down-triangle). SmolLM2-1.7B (diamond) and Qwen2.5-3B (right-triangle) are generally lower, but still achieve high absolute accuracy compared to DisambiguationQA.

5. **Stability Across Iterations:** Most models show relatively stable performance across the 6 iterations, with some minor fluctuations. There are no strong, consistent upward or downward trends across iterations for the majority of the lines, suggesting that the iterative process might not be significantly improving or degrading performance within this range.
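One way to make "relatively stable" concrete is to measure the peak-to-trough spread of each series over the six iterations. The sketch below is an assumption about how that could be done, not a method stated in the source; the helper names, the 0.05 tolerance, and the sample values are all hypothetical placeholders rather than readings from the figure.

```python
def spread(accuracies: list[float]) -> float:
    """Peak-to-trough range of one series across iterations."""
    return max(accuracies) - min(accuracies)


def is_stable(accuracies: list[float], tolerance: float = 0.05) -> bool:
    """Treat a series as stable if its spread stays within the tolerance."""
    return spread(accuracies) <= tolerance


# Placeholder values for illustration only; NOT read from the figure.
flat_series = [0.05, 0.05, 0.05, 0.05, 0.05, 0.05]
bumpy_series = [0.35, 0.45, 0.40, 0.35, 0.40, 0.35]

flat_is_stable = is_stable(flat_series)
bumpy_is_stable = is_stable(bumpy_series)
```

With these placeholders, the flat series passes the check and the series with an early peak does not; the tolerance would need to be chosen to suit the actual data.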

### Interpretation

The data primarily demonstrates a significant difference in model performance based on the complexity or nature of the QA dataset. `tinyTruthfulQA` appears to be a much "easier" task for these models, with most achieving high accuracy (above 50-60%), while `DisambiguationQA` presents a considerably greater challenge, with accuracies generally below 45%.

The choice between "Generation" and "Multiple-choice" answer formats has a notable impact on `DisambiguationQA`, where providing multiple-choice options consistently aids performance. This suggests that for more ambiguous or difficult tasks, the constrained choice space of multiple-choice questions helps models achieve better results, possibly by reducing the search space for correct answers or mitigating issues with open-ended generation. The anomalous low performance of the generic "Multiple-choice" (orange circle) line in `tinyTruthfulQA` is a curious outlier that warrants further investigation; it might represent a specific baseline or a different experimental setup not fully detailed in the legend.

Crucially, the "CoT" and "Self-Consistency" inference strategies, often touted for improving reasoning and performance, show very limited, if any, consistent benefit across either dataset or model. For `tinyTruthfulQA`, their impact is negligible, with performance curves almost identical to the "Baseline." For `DisambiguationQA`, while some minor fluctuations or slight peaks are observed, there's no clear, sustained improvement over the baseline. This could imply that for these specific tasks and models, these advanced inference techniques do not provide a significant advantage, or that the iterative process itself (0-5 iterations) is not sufficient to fully leverage their potential. Alternatively, the tasks might not be complex enough in their reasoning demands to benefit from CoT or Self-Consistency, or the models themselves might not be sufficiently advanced to effectively utilize these techniques for substantial gains. The lack of strong trends across iterations further supports the idea that the models quickly reach a stable performance level, and further iterations within this range do not yield significant changes.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Line Charts: Model Performance on QA Tasks Across Iterations

### Overview

The image presents six line charts comparing the performance of several language models on two question-answering (QA) tasks, DisambiguationQA and tinyTruthfulQA, under three different prompting strategies: Baseline, Chain-of-Thought (CoT), and Self-Consistency. Performance is measured by accuracy across six iterations (0 to 5). Each chart displays accuracy as a function of iteration number, with separate lines representing different models.

### Components/Axes

* **X-axis:** Iteration (ranging from 0 to 5)

* **Y-axis:** Accuracy (%) (ranging from 0.0 to 0.8, with increments of 0.2)

* **Chart Titles:**

* DisambiguationQA - Baseline

* DisambiguationQA - CoT

* DisambiguationQA - Self-Consistency

* tinyTruthfulQA - Baseline

* tinyTruthfulQA - CoT

* tinyTruthfulQA - Self-Consistency

* **Legend:**

* Generation (Blue Circle)

* Multiple-choice (Green Triangle)

* Gemini-2.0-Flash (Orange Square)

* Llama-3.1-8B (Red Diamond)

* DeepSeek-R1-Distill-Llama-8B (Gray Right-Pointing Triangle)

* Qwen2.5-3B (Black Diamond)

* SmolLM2-1.7B (Purple Circle)

### Detailed Analysis or Content Details

**1. DisambiguationQA - Baseline**

* **Generation (Blue):** Starts at approximately 0.25, increases to around 0.35 at iteration 1, then fluctuates between 0.3 and 0.4 for the remaining iterations.

* **Multiple-choice (Green):** Starts at approximately 0.35, increases to around 0.45 at iteration 1, then decreases to around 0.35 at iteration 5.

* **Gemini-2.0-Flash (Orange):** Starts at approximately 0.3, increases to around 0.4 at iteration 1, then fluctuates between 0.35 and 0.45 for the remaining iterations.

* **Llama-3.1-8B (Red):** Starts at approximately 0.2, increases to around 0.3 at iteration 1, then fluctuates between 0.25 and 0.35 for the remaining iterations.

* **DeepSeek-R1-Distill-Llama-8B (Gray):** Starts at approximately 0.25, increases to around 0.35 at iteration 1, then fluctuates between 0.3 and 0.4 for the remaining iterations.

* **Qwen2.5-3B (Black):** Starts at approximately 0.2, increases to around 0.3 at iteration 1, then fluctuates between 0.25 and 0.35 for the remaining iterations.

* **SmolLM2-1.7B (Purple):** Starts at approximately 0.2, remains relatively stable around 0.25 for all iterations.

**2. DisambiguationQA - CoT**

* **Generation (Blue):** Starts at approximately 0.2, increases to around 0.35 at iteration 1, then fluctuates between 0.3 and 0.4 for the remaining iterations.

* **Multiple-choice (Green):** Starts at approximately 0.3, increases to around 0.4 at iteration 1, then decreases to around 0.3 at iteration 5.

* **Gemini-2.0-Flash (Orange):** Starts at approximately 0.25, increases to around 0.35 at iteration 1, then fluctuates between 0.3 and 0.4 for the remaining iterations.

* **Llama-3.1-8B (Red):** Starts at approximately 0.15, increases to around 0.25 at iteration 1, then fluctuates between 0.2 and 0.3 for the remaining iterations.

* **DeepSeek-R1-Distill-Llama-8B (Gray):** Starts at approximately 0.2, increases to around 0.3 at iteration 1, then fluctuates between 0.25 and 0.35 for the remaining iterations.

* **Qwen2.5-3B (Black):** Starts at approximately 0.15, increases to around 0.25 at iteration 1, then fluctuates between 0.2 and 0.3 for the remaining iterations.

* **SmolLM2-1.7B (Purple):** Starts at approximately 0.15, remains relatively stable around 0.2 for all iterations.

**3. DisambiguationQA - Self-Consistency**

* Similar trends to Baseline and CoT, with generally lower accuracy values.

**4. tinyTruthfulQA - Baseline**

* **Generation (Blue):** Starts at approximately 0.6, remains relatively stable around 0.65 for all iterations.

* **Multiple-choice (Green):** Starts at approximately 0.65, remains relatively stable around 0.7 for all iterations.

* **Gemini-2.0-Flash (Orange):** Starts at approximately 0.6, remains relatively stable around 0.65 for all iterations.

* **Llama-3.1-8B (Red):** Starts at approximately 0.55, remains relatively stable around 0.6 for all iterations.

* **DeepSeek-R1-Distill-Llama-8B (Gray):** Starts at approximately 0.6, remains relatively stable around 0.65 for all iterations.

* **Qwen2.5-3B (Black):** Starts at approximately 0.55, remains relatively stable around 0.6 for all iterations.

* **SmolLM2-1.7B (Purple):** Starts at approximately 0.5, remains relatively stable around 0.55 for all iterations.

**5. tinyTruthfulQA - CoT**

* **Generation (Blue):** Starts at approximately 0.6, remains relatively stable around 0.65 for all iterations.

* **Multiple-choice (Green):** Starts at approximately 0.65, remains relatively stable around 0.7 for all iterations.

* **Gemini-2.0-Flash (Orange):** Starts at approximately 0.6, remains relatively stable around 0.65 for all iterations.

* **Llama-3.1-8B (Red):** Starts at approximately 0.55, remains relatively stable around 0.6 for all iterations.

* **DeepSeek-R1-Distill-Llama-8B (Gray):** Starts at approximately 0.6, remains relatively stable around 0.65 for all iterations.

* **Qwen2.5-3B (Black):** Starts at approximately 0.55, remains relatively stable around 0.6 for all iterations.

* **SmolLM2-1.7B (Purple):** Starts at approximately 0.5, remains relatively stable around 0.55 for all iterations.

**6. tinyTruthfulQA - Self-Consistency**

* Similar trends to Baseline and CoT, with generally higher accuracy values.

### Key Observations

* The "tinyTruthfulQA" task consistently yields higher accuracy scores than the "DisambiguationQA" task across all models and prompting strategies.

* The "Baseline" and "CoT" prompting strategies generally result in similar performance, while "Self-Consistency" shows varying results depending on the task.

* "Multiple-choice" consistently outperforms "Generation" in most scenarios.

* The accuracy scores tend to stabilize after the first few iterations, indicating diminishing returns from further iterations.

### Interpretation

The data suggests that the choice of QA task significantly impacts model performance, with "tinyTruthfulQA" being an easier task for the evaluated models. The prompting strategy (Baseline, CoT, Self-Consistency) has a moderate effect on performance, with no single strategy consistently outperforming the others. The "Multiple-choice" approach generally leads to better results than "Generation," potentially because it reduces the complexity of the task by providing a limited set of options. The stabilization of accuracy scores after a few iterations suggests that the models are converging towards their maximum performance level within the given experimental setup. The differences in performance between models (e.g., Gemini-2.0-Flash vs. SmolLM2-1.7B) highlight the varying capabilities of different language models. The relatively low accuracy scores for DisambiguationQA suggest that this task is more challenging and requires more sophisticated reasoning abilities.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Line Charts: Model Accuracy Across Iterations on DisambiguationQA and tinyTruthfulQA

### Overview

The image displays a 2x3 grid of six line charts comparing the performance of various large language models on two question-answering benchmarks ("DisambiguationQA" and "tinyTruthfulQA") across three prompting methods ("Baseline", "CoT" (Chain-of-Thought), and "Self-Consistency"). Performance is measured as accuracy percentage over 6 iterations (0 to 5). Each chart plots multiple lines, each representing a specific model, with line color indicating the task type (Generation or Multiple-choice) and marker shape indicating the specific model.

### Components/Axes

* **Chart Grid:** 2 rows x 3 columns.

* **Top Row Title:** `DisambiguationQA`

* **Bottom Row Title:** `tinyTruthfulQA`

* **Column Titles (Left to Right):** `Baseline`, `CoT`, `Self-Consistency`

* **Axes (Identical for all charts):**

* **X-axis:** Label: `Iteration`. Ticks: `0, 1, 2, 3, 4, 5`.

* **Y-axis:** Label: `Accuracy (%)`.

* Top Row (DisambiguationQA) Scale: `0.00` to `0.40` (increments of 0.10).

* Bottom Row (tinyTruthfulQA) Scale: `0.0` to `0.8` (increments of 0.2).

* **Legend (Located at the bottom center of the entire image):**

* **Task Type (Color):**

* Blue Circle: `Generation`

* Orange Circle: `Multiple-choice`

* **Model (Marker Shape & Label):**

* Gray Square: `Gemini-2.0-Flash`

* Gray Upward Triangle: `Qwen2.5-14B`

* Gray Downward Triangle: `Llama-3.1-8B`

* Gray Diamond: `SmolLM2-1.7B`

* Gray Left Triangle: `DeepSeek-R1-Distill-Llama-8B`

* Gray Right Triangle: `Qwen2.5-3B`

* **Note:** The legend uses gray for all model markers. In the charts, the lines are colored blue or orange based on the task type, and the specific model is identified by its unique marker shape on that colored line.
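
The two-channel encoding described in the note (color for task type, marker shape for model) can be sketched as a pair of lookup tables. This is a hypothetical reconstruction from the legend description above, not the code used to draw the figure: the marker codes follow Matplotlib's marker naming convention, the color names are stand-ins for the actual blue and orange, and the names `TASK_COLORS`, `MODEL_MARKERS`, and `line_style` are assumptions for illustration.

```python
# Color encodes the task type; marker shape encodes the model.
TASK_COLORS = {"Generation": "tab:blue", "Multiple-choice": "tab:orange"}

MODEL_MARKERS = {
    "Gemini-2.0-Flash": "s",              # square
    "Qwen2.5-14B": "^",                   # upward triangle
    "Llama-3.1-8B": "v",                  # downward triangle
    "SmolLM2-1.7B": "D",                  # diamond
    "DeepSeek-R1-Distill-Llama-8B": "<",  # left triangle
    "Qwen2.5-3B": ">",                    # right triangle
}

def line_style(task, model):
    """Return the (color, marker) pair for one line in a chart."""
    return TASK_COLORS[task], MODEL_MARKERS[model]

# Every (task, model) pair gets a distinct style: 2 colors x 6 markers.
styles = {(t, m): line_style(t, m) for t in TASK_COLORS for m in MODEL_MARKERS}
print(len(styles), len(set(styles.values())))
```

Keeping the two channels independent means a reader can identify any of the twelve lines per chart from the gray legend entries alone, without a separate legend row for each combination.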

### Detailed Analysis

**1. DisambiguationQA - Baseline (Top-Left Chart)**

* **Trend:** Performance is generally low and stable across iterations for most models. There is a clear separation between task types.

* **Multiple-choice (Orange Lines):** Clustered in the upper band (~0.25 to ~0.38). The highest performer appears to be `Gemini-2.0-Flash` (square marker), starting near 0.38 and ending near 0.35. `Qwen2.5-14B` (up triangle) is also high, around 0.35.

* **Generation (Blue Lines):** Clustered in the lower band (~0.05 to ~0.20). The highest blue line is likely `Qwen2.5-14B` (up triangle), hovering around 0.18-0.20. The lowest is `SmolLM2-1.7B` (diamond), near 0.05.

**2. DisambiguationQA - CoT (Top-Middle Chart)**

* **Trend:** More variability and some upward trends compared to Baseline. The gap between task types narrows slightly.

* **Multiple-choice (Orange Lines):** Still generally higher, but with more fluctuation. `Gemini-2.0-Flash` (square) shows a dip at iteration 2 before recovering. Several models converge around 0.30-0.35 by iteration 5.

* **Generation (Blue Lines):** Shows more improvement. The top blue line (likely `Qwen2.5-14B`, up triangle) rises from ~0.20 to ~0.28. Other models like `Llama-3.1-8B` (down triangle) also show upward movement.

**3. DisambiguationQA - Self-Consistency (Top-Right Chart)**

* **Trend:** The highest overall performance and most distinct separation between top and bottom performers.

* **Multiple-choice (Orange Lines):** `Gemini-2.0-Flash` (square) is the clear leader, starting above 0.40 and maintaining a high level. `Qwen2.5-14B` (up triangle) is also strong, around 0.35-0.38.

* **Generation (Blue Lines):** The top blue line (`Qwen2.5-14B`, up triangle) performs well, around 0.30-0.32. A significant outlier is the lowest blue line (`SmolLM2-1.7B`, diamond), which remains very low, near 0.05-0.08.

**4. tinyTruthfulQA - Baseline (Bottom-Left Chart)**

* **Trend:** Extremely wide spread in performance. Some models excel, while others fail almost completely.

* **Multiple-choice (Orange Lines):** Two distinct clusters. Top cluster (`Gemini-2.0-Flash`, `Qwen2.5-14B`) is very high, ~0.75-0.80. Bottom cluster (`SmolLM2-1.7B`, `Qwen2.5-3B`) is very low, ~0.15-0.20.

* **Generation (Blue Lines):** Similar wide spread. Top blue line (`Qwen2.5-14B`, up triangle) is high, ~0.70. Bottom blue line (`SmolLM2-1.7B`, diamond) is near 0.10.

**5. tinyTruthfulQA - CoT (Bottom-Middle Chart)**

* **Trend:** Performance for top models remains high but shows more volatility. The low-performing cluster remains consistently poor.

* **Multiple-choice (Orange Lines):** Top models (`Gemini-2.0-Flash`, `Qwen2.5-14B`) fluctuate between 0.70 and 0.80. The low cluster (`SmolLM2-1.7B`, `Qwen2.5-3B`) stays flat near 0.20.

* **Generation (Blue Lines):** The top blue line (`Qwen2.5-14B`, up triangle) shows a notable dip at iteration 2 before recovering to ~0.70. The lowest blue line remains near 0.10.

**6. tinyTruthfulQA - Self-Consistency (Bottom-Right Chart)**

* **Trend:** Similar pattern to CoT, with high performers maintaining a lead and low performers stagnant.

* **Multiple-choice (Orange Lines):** `Gemini-2.0-Flash` (square) and `Qwen2.5-14B` (up triangle) are again top, ~0.75-0.80. The low cluster is unchanged.

* **Generation (Blue Lines):** The top blue line (`Qwen2.5-14B`, up triangle) is stable around 0.70. The lowest blue line (`SmolLM2-1.7B`, diamond) shows a slight upward tick at iteration 5 but remains below 0.20.

### Key Observations

1. **Task Type Dominance:** Across all charts and methods, **Multiple-choice** tasks (orange lines) consistently yield higher accuracy than **Generation** tasks (blue lines) for the same model.

2. **Model Performance Hierarchy:** A clear hierarchy exists. `Gemini-2.0-Flash` and `Qwen2.5-14B` are consistently top performers. `SmolLM2-1.7B` and `Qwen2.5-3B` are consistently the lowest performers, especially on tinyTruthfulQA.

3. **Benchmark Difficulty:** Models achieve significantly higher accuracy on **tinyTruthfulQA** (up to ~80%) compared to **DisambiguationQA** (max ~40%), suggesting the latter is a more challenging benchmark for these models.

4. **Prompting Method Impact:** Moving from **Baseline** to **CoT** and **Self-Consistency** generally improves performance, particularly for Generation tasks on DisambiguationQA. The effect is less pronounced on tinyTruthfulQA for the top models, as they are already near a performance ceiling.

5. **Stability:** Performance is relatively stable across iterations for most models, with some notable fluctuations (e.g., `Qwen2.5-14B` on tinyTruthfulQA-CoT at iteration 2).
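
The task-format gap in observation 1 can be made concrete with a small calculation. The sketch below uses approximate Qwen2.5-14B readings taken as midpoints of the ranges quoted in the Detailed Analysis; they are rough visual estimates, not measured data, and the names `readings` and `format_gap` are hypothetical.

```python
# Approximate Qwen2.5-14B readings (0-1 scale) taken as midpoints of the
# ranges quoted in the Detailed Analysis; these are not measured data.
readings = {
    ("DisambiguationQA", "Baseline"): {"Multiple-choice": 0.35, "Generation": 0.19},
    ("DisambiguationQA", "Self-Consistency"): {"Multiple-choice": 0.365, "Generation": 0.31},
    ("tinyTruthfulQA", "Baseline"): {"Multiple-choice": 0.775, "Generation": 0.70},
}

def format_gap(scores):
    """Multiple-choice accuracy minus Generation accuracy, in percentage points."""
    return round((scores["Multiple-choice"] - scores["Generation"]) * 100, 1)

gaps = {panel: format_gap(scores) for panel, scores in readings.items()}
for (benchmark, method), gap in gaps.items():
    print(f"{benchmark} / {method}: {gap:+.1f} points")
```

Under these estimates the gap is positive in every panel and is smaller under Self-Consistency than under Baseline on DisambiguationQA, which is consistent with observations 1 and 4.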

### Interpretation

This data demonstrates the significant impact of both **task formulation** (Multiple-choice vs. Generation) and **prompting strategy** (Baseline, CoT, Self-Consistency) on LLM performance. The consistent superiority of Multiple-choice formats suggests that constrained output spaces are easier for models to handle accurately than open-ended generation for these QA tasks.

The stark performance gap between models like `Gemini-2.0-Flash` and `SmolLM2-1.7B` highlights the importance of model scale and capability. The fact that advanced prompting (CoT, Self-Consistency) provides a larger relative boost to weaker models on the harder benchmark (DisambiguationQA) indicates these techniques are most valuable for bridging capability gaps in complex reasoning tasks. Conversely, on the easier benchmark (tinyTruthfulQA), top models are already proficient, so advanced prompting yields diminishing returns.

The charts collectively argue that for reliable QA performance, one should consider: 1) using a capable base model, 2) framing the task as multiple-choice if possible, and 3) employing advanced prompting techniques like Self-Consistency, especially for challenging, ambiguous problems.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Line Graphs: Model Performance Across Prompting Methods

### Overview

The image contains six line graphs arranged in a 2x3 grid, comparing model accuracy across iterations (0-5) for two QA tasks: **DisambiguationQA** (top row) and **tinyTruthfulQA** (bottom row). Each column corresponds to one of three prompting methods: **Baseline**, **CoT (Chain-of-Thought)**, and **Self-Consistency**, so each graph covers a single task and method pair. Accuracy (%) is plotted on the y-axis, and iterations (0-5) are on the x-axis. Lines are differentiated by color and marker type, as listed in the legend.

---

### Components/Axes

- **X-axis**: Iteration (0 to 5, integer steps).

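- **Y-axis**: Accuracy (%), with tick values on a fractional 0-1 scale (up to 0.8, in increments of ~0.2). A value quoted below as "~0.3%" therefore corresponds to a tick value of 0.3 on this axis.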

- **Legend**: Located at the bottom of all graphs. Entries include:

- **Generation** (blue circles)

- **Multiple-choice** (orange squares)

- **Gemini 2.0-Flash** (gray squares)

- **Llama 3.1-8B** (gray triangles)

- **DeepSeek-R1-Distill-Llama-8B** (gray diamonds)

- **Qwen2.5-14B** (orange triangles)

- **SmolLM2-1.7B** (orange diamonds)

- **Qwen2.5-3B** (blue triangles)

---

### Detailed Analysis

#### DisambiguationQA - Baseline

- **Generation** (blue circles): Starts at ~0.3%, peaks at iteration 2 (~0.4%), then declines to ~0.2% by iteration 5.

- **Multiple-choice** (orange squares): Starts at ~0.1%, peaks at iteration 3 (~0.3%), then drops to ~0.2%.

- **Gemini 2.0-Flash** (gray squares): Stable at ~0.4% across all iterations.

- **Llama 3.1-8B** (gray triangles): Peaks at iteration 1 (~0.5%), then declines to ~0.3%.

- **DeepSeek-R1-Distill-Llama-8B** (gray diamonds): Stable at ~0.4%.

- **Qwen2.5-14B** (orange triangles): Starts at ~0.2%, peaks at iteration 3 (~0.3%), then drops to ~0.2%.

- **SmolLM2-1.7B** (orange diamonds): Stable at ~0.3%.

- **Qwen2.5-3B** (blue triangles): Stable at ~0.3%.

#### DisambiguationQA - CoT

- **Generation**: Peaks at iteration 2 (~0.4%), then declines to ~0.2%.

- **Multiple-choice**: Peaks at iteration 3 (~0.3%), then drops to ~0.2%.

- **Gemini 2.0-Flash**: Stable at ~0.4%.

- **Llama 3.1-8B**: Peaks at iteration 1 (~0.5%), then declines to ~0.3%.

- **DeepSeek-R1-Distill-Llama-8B**: Stable at ~0.4%.

- **Qwen2.5-14B**: Peaks at iteration 3 (~0.3%), then drops to ~0.2%.

- **SmolLM2-1.7B**: Stable at ~0.3%.

- **Qwen2.5-3B**: Stable at ~0.3%.

#### DisambiguationQA - Self-Consistency

- **Generation**: Peaks at iteration 2 (~0.4%), then declines to ~0.2%.

- **Multiple-choice**: Peaks at iteration 3 (~0.3%), then drops to ~0.2%.

- **Gemini 2.0-Flash**: Stable at ~0.4%.

- **Llama 3.1-8B**: Peaks at iteration 1 (~0.5%), then declines to ~0.3%.

- **DeepSeek-R1-Distill-Llama-8B**: Stable at ~0.4%.

- **Qwen2.5-14B**: Peaks at iteration 3 (~0.3%), then drops to ~0.2%.

- **SmolLM2-1.7B**: Stable at ~0.3%.

- **Qwen2.5-3B**: Stable at ~0.3%.

#### tinyTruthfulQA - Baseline

- **Generation**: Starts at ~0.5%, peaks at iteration 2 (~0.6%), then declines to ~0.4%.

- **Multiple-choice**: Starts at ~0.2%, peaks at iteration 3 (~0.3%), then drops to ~0.2%.

- **Gemini 2.0-Flash**: Stable at ~0.6%.

- **Llama 3.1-8B**: Peaks at iteration 1 (~0.7%), then declines to ~0.5%.

- **DeepSeek-R1-Distill-Llama-8B**: Stable at ~0.6%.

- **Qwen2.5-14B**: Peaks at iteration 3 (~0.3%), then drops to ~0.2%.

- **SmolLM2-1.7B**: Stable at ~0.3%.

- **Qwen2.5-3B**: Stable at ~0.3%.

#### tinyTruthfulQA - CoT

- **Generation**: Peaks at iteration 2 (~0.6%), then declines to ~0.4%.

- **Multiple-choice**: Peaks at iteration 3 (~0.3%), then drops to ~0.2%.

- **Gemini 2.0-Flash**: Stable at ~0.6%.

- **Llama 3.1-8B**: Peaks at iteration 1 (~0.7%), then declines to ~0.5%.

- **DeepSeek-R1-Distill-Llama-8B**: Stable at ~0.6%.

- **Qwen2.5-14B**: Peaks at iteration 3 (~0.3%), then drops to ~0.2%.

- **SmolLM2-1.7B**: Stable at ~0.3%.

- **Qwen2.5-3B**: Stable at ~0.3%.

#### tinyTruthfulQA - Self-Consistency

- **Generation**: Peaks at iteration 2 (~0.6%), then declines to ~0.4%.

- **Multiple-choice**: Peaks at iteration 3 (~0.3%), then drops to ~0.2%.

- **Gemini 2.0-Flash**: Stable at ~0.6%.

- **Llama 3.1-8B**: Peaks at iteration 1 (~0.7%), then declines to ~0.5%.

- **DeepSeek-R1-Distill-Llama-8B**: Stable at ~0.6%.

- **Qwen2.5-14B**: Peaks at iteration 3 (~0.3%), then drops to ~0.2%.

- **SmolLM2-1.7B**: Stable at ~0.3%.

- **Qwen2.5-3B**: Stable at ~0.3%.

---

### Key Observations

1. **CoT and Self-Consistency** generally outperform **Baseline** in both tasks, with higher accuracy and stability.

2. **Gemini 2.0-Flash** and **DeepSeek-R1-Distill-Llama-8B** consistently achieve the highest accuracy (~0.6% in tinyTruthfulQA).

3. **Llama 3.1-8B** shows strong initial performance but declines over iterations.

4. **Multiple-choice** and **Qwen2.5-14B** exhibit the lowest accuracy, with minimal improvement across iterations.

5. **tinyTruthfulQA** graphs show higher baseline accuracy (~0.5-0.7%) compared to DisambiguationQA (~0.2-0.5%).

---

### Interpretation

The data suggests that **prompting methods** significantly impact model performance. **CoT** and **Self-Consistency** improve accuracy over **Baseline**, likely by encouraging structured reasoning. **Gemini 2.0-Flash** and **DeepSeek-R1-Distill-Llama-8B** outperform other models, indicating superior architecture or training for these tasks. The decline in accuracy for some models (e.g., Llama 3.1-8B) over iterations may reflect overfitting or sensitivity to input variations. **DisambiguationQA** appears to be the more challenging task, as evidenced by its lower overall accuracy compared to tinyTruthfulQA (see Key Observation 5). The stability of certain models (e.g., Gemini 2.0-Flash) highlights their robustness to iterative changes.
