EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Line Charts: Model Accuracy Comparison

### Overview

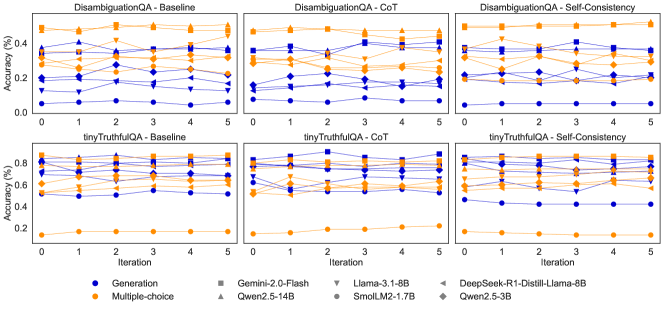

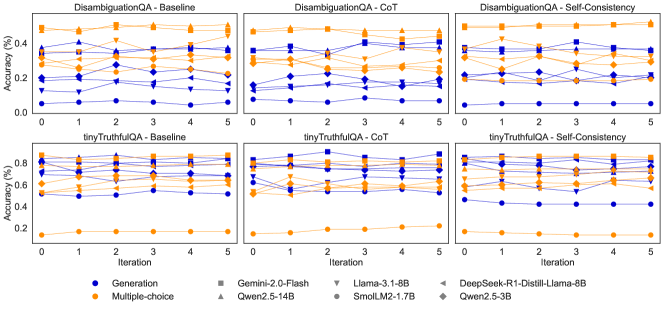

The image presents six line charts arranged in a 2x3 grid, each plotting accuracy against iteration number (0 to 5). Rows correspond to tasks (DisambiguationQA on top, tinyTruthfulQA on the bottom) and columns to methods (Baseline, Chain of Thought (CoT), and Self-Consistency). Series are distinguished by color and marker type, as indicated in the legend at the bottom.

### Components/Axes

* **Chart Titles (Top Row):**
    * DisambiguationQA - Baseline (top-left)
    * DisambiguationQA - CoT (top-center)
    * DisambiguationQA - Self-Consistency (top-right)

* **Chart Titles (Bottom Row):**
    * tinyTruthfulQA - Baseline (bottom-left)
    * tinyTruthfulQA - CoT (bottom-center)
    * tinyTruthfulQA - Self-Consistency (bottom-right)

* **Y-axis:**
    * Label: "Accuracy (%)". The tick values are fractions between 0 and 1, not percentages.
    * Scale (DisambiguationQA charts): 0.0 to 0.4, with ticks at 0.0, 0.2, and 0.4.
    * Scale (tinyTruthfulQA charts): 0.2 to 0.8, with ticks at 0.2, 0.4, 0.6, and 0.8.

* **X-axis:**
    * Label: "Iteration"
    * Scale: 0 to 5, with ticks at each integer value.

* **Legend (Bottom):**
    * Position: Bottom center of the image.
    * Entries:
        * Blue Circle: Generation
        * Orange Diamond: Multiple-choice
        * Gray Square: Gemini-2.0-Flash
        * Gray Upward-pointing Triangle: Qwen2.5-14B
        * Gray Downward-pointing Triangle: Llama-3.1-8B
        * Gray Circle with Diamond Center: SmolLM2-1.7B
        * Gray Leftward-pointing Triangle: DeepSeek-R1-Distill-Llama-8B
        * Gray Diamond with Plus Center: Qwen2.5-3B
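The layout described above can be captured as a small data structure, which may help when indexing panels programmatically. This is a minimal sketch: the names `TASKS`, `METHODS`, `LEGEND`, `panel_titles`, and `panel_position` are illustrative assumptions, not part of the original figure or any library.

```python
from itertools import product

# Panel grid as described above: rows are tasks, columns are methods.
TASKS = ["DisambiguationQA", "tinyTruthfulQA"]
METHODS = ["Baseline", "CoT", "Self-Consistency"]

# Legend entries as listed in the description: label -> (color, marker).
LEGEND = {
    "Generation": ("blue", "circle"),
    "Multiple-choice": ("orange", "diamond"),
    "Gemini-2.0-Flash": ("gray", "square"),
    "Qwen2.5-14B": ("gray", "upward-pointing triangle"),
    "Llama-3.1-8B": ("gray", "downward-pointing triangle"),
    "SmolLM2-1.7B": ("gray", "circle with diamond center"),
    "DeepSeek-R1-Distill-Llama-8B": ("gray", "leftward-pointing triangle"),
    "Qwen2.5-3B": ("gray", "diamond with plus center"),
}


def panel_titles():
    """Return the six panel titles in row-major order (top-left first)."""
    return [f"{task} - {method}" for task, method in product(TASKS, METHODS)]


def panel_position(title):
    """Map a panel title to its (row, column) index in the 2x3 grid."""
    task, method = title.split(" - ", 1)
    return TASKS.index(task), METHODS.index(method)
```

For example, `panel_position("tinyTruthfulQA - CoT")` yields the bottom-center cell, `(1, 1)`.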

### Detailed Analysis

**DisambiguationQA - Baseline (Top-Left)**

* **Generation (Blue Circles):** Starts around 0.05 accuracy and remains relatively flat.

* **Multiple-choice (Orange Diamonds):** Starts around 0.35 accuracy and fluctuates between 0.25 and 0.4.

* **Gemini-2.0-Flash (Gray Squares):** Starts around 0.25 accuracy and fluctuates between 0.2 and 0.3.

* **Qwen2.5-14B (Gray Upward-pointing Triangles):** Starts around 0.35 accuracy and fluctuates between 0.3 and 0.45.

* **Llama-3.1-8B (Gray Downward-pointing Triangles):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **SmolLM2-1.7B (Gray Circle with Diamond Center):** Starts around 0.15 accuracy and fluctuates between 0.1 and 0.2.

* **DeepSeek-R1-Distill-Llama-8B (Gray Leftward-pointing Triangles):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **Qwen2.5-3B (Gray Diamond with Plus Center):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

**DisambiguationQA - CoT (Top-Center)**

* **Generation (Blue Circles):** Starts around 0.1 accuracy and remains relatively flat.

* **Multiple-choice (Orange Diamonds):** Starts around 0.3 accuracy and fluctuates between 0.25 and 0.4.

* **Gemini-2.0-Flash (Gray Squares):** Starts around 0.3 accuracy and fluctuates between 0.25 and 0.35.

* **Qwen2.5-14B (Gray Upward-pointing Triangles):** Starts around 0.35 accuracy and fluctuates between 0.3 and 0.45.

* **Llama-3.1-8B (Gray Downward-pointing Triangles):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **SmolLM2-1.7B (Gray Circle with Diamond Center):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **DeepSeek-R1-Distill-Llama-8B (Gray Leftward-pointing Triangles):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **Qwen2.5-3B (Gray Diamond with Plus Center):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

**DisambiguationQA - Self-Consistency (Top-Right)**

* **Generation (Blue Circles):** Starts around 0.1 accuracy and remains relatively flat.

* **Multiple-choice (Orange Diamonds):** Starts around 0.35 accuracy and fluctuates between 0.3 and 0.4.

* **Gemini-2.0-Flash (Gray Squares):** Starts around 0.3 accuracy and fluctuates between 0.25 and 0.35.

* **Qwen2.5-14B (Gray Upward-pointing Triangles):** Starts around 0.35 accuracy and fluctuates between 0.3 and 0.45.

* **Llama-3.1-8B (Gray Downward-pointing Triangles):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **SmolLM2-1.7B (Gray Circle with Diamond Center):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **DeepSeek-R1-Distill-Llama-8B (Gray Leftward-pointing Triangles):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

* **Qwen2.5-3B (Gray Diamond with Plus Center):** Starts around 0.2 accuracy and fluctuates between 0.15 and 0.25.

**tinyTruthfulQA - Baseline (Bottom-Left)**

* **Generation (Blue Circles):** Starts around 0.5 accuracy and remains relatively flat.

* **Multiple-choice (Orange Diamonds):** Starts around 0.15 accuracy and remains relatively flat.

* **Gemini-2.0-Flash (Gray Squares):** Starts around 0.75 accuracy and fluctuates between 0.7 and 0.8.

* **Qwen2.5-14B (Gray Upward-pointing Triangles):** Starts around 0.8 accuracy and fluctuates between 0.75 and 0.85.

* **Llama-3.1-8B (Gray Downward-pointing Triangles):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

* **SmolLM2-1.7B (Gray Circle with Diamond Center):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

* **DeepSeek-R1-Distill-Llama-8B (Gray Leftward-pointing Triangles):** Starts around 0.7 accuracy and fluctuates between 0.65 and 0.75.

* **Qwen2.5-3B (Gray Diamond with Plus Center):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

**tinyTruthfulQA - CoT (Bottom-Center)**

* **Generation (Blue Circles):** Starts around 0.5 accuracy and remains relatively flat.

* **Multiple-choice (Orange Diamonds):** Starts around 0.15 accuracy and remains relatively flat.

* **Gemini-2.0-Flash (Gray Squares):** Starts around 0.75 accuracy and fluctuates between 0.7 and 0.8.

* **Qwen2.5-14B (Gray Upward-pointing Triangles):** Starts around 0.8 accuracy and fluctuates between 0.75 and 0.85.

* **Llama-3.1-8B (Gray Downward-pointing Triangles):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

* **SmolLM2-1.7B (Gray Circle with Diamond Center):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

* **DeepSeek-R1-Distill-Llama-8B (Gray Leftward-pointing Triangles):** Starts around 0.7 accuracy and fluctuates between 0.65 and 0.75.

* **Qwen2.5-3B (Gray Diamond with Plus Center):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

**tinyTruthfulQA - Self-Consistency (Bottom-Right)**

* **Generation (Blue Circles):** Starts around 0.5 accuracy and remains relatively flat.

* **Multiple-choice (Orange Diamonds):** Starts around 0.15 accuracy and remains relatively flat.

* **Gemini-2.0-Flash (Gray Squares):** Starts around 0.75 accuracy and fluctuates between 0.7 and 0.8.

* **Qwen2.5-14B (Gray Upward-pointing Triangles):** Starts around 0.8 accuracy and fluctuates between 0.75 and 0.85.

* **Llama-3.1-8B (Gray Downward-pointing Triangles):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

* **SmolLM2-1.7B (Gray Circle with Diamond Center):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.

* **DeepSeek-R1-Distill-Llama-8B (Gray Leftward-pointing Triangles):** Starts around 0.7 accuracy and fluctuates between 0.65 and 0.75.

* **Qwen2.5-3B (Gray Diamond with Plus Center):** Starts around 0.6 accuracy and fluctuates between 0.55 and 0.65.
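Because the readings above are visual estimates, it can be useful to compare them against the outermost y-axis ticks listed under Components/Axes. The sketch below transcribes the approximate bands for the two Baseline panels and lists the series whose band extends past those ticks. The helper names (`BANDS`, `TICK_RANGE`, `outside_ticks`, `to_percent`) are hypothetical, and a reading beyond the last tick may simply mean the axis extends slightly past it.

```python
# Approximate (low, high) accuracy bands transcribed from the description above.
# Series described as "relatively flat" are stored with low == high.
BANDS = {
    "DisambiguationQA - Baseline": {
        "Generation": (0.05, 0.05),
        "Multiple-choice": (0.25, 0.40),
        "Gemini-2.0-Flash": (0.20, 0.30),
        "Qwen2.5-14B": (0.30, 0.45),
        "Llama-3.1-8B": (0.15, 0.25),
        "SmolLM2-1.7B": (0.10, 0.20),
        "DeepSeek-R1-Distill-Llama-8B": (0.15, 0.25),
        "Qwen2.5-3B": (0.15, 0.25),
    },
    "tinyTruthfulQA - Baseline": {
        "Generation": (0.50, 0.50),
        "Multiple-choice": (0.15, 0.15),
        "Gemini-2.0-Flash": (0.70, 0.80),
        "Qwen2.5-14B": (0.75, 0.85),
        "Llama-3.1-8B": (0.55, 0.65),
        "SmolLM2-1.7B": (0.55, 0.65),
        "DeepSeek-R1-Distill-Llama-8B": (0.65, 0.75),
        "Qwen2.5-3B": (0.55, 0.65),
    },
}

# Outermost y-axis ticks listed under Components/Axes, keyed by task.
TICK_RANGE = {"DisambiguationQA": (0.0, 0.4), "tinyTruthfulQA": (0.2, 0.8)}


def outside_ticks(panel):
    """Return the series whose band extends past the outermost ticks."""
    task = panel.split(" - ", 1)[0]
    lo_tick, hi_tick = TICK_RANGE[task]
    return sorted(
        name
        for name, (lo, hi) in BANDS[panel].items()
        if lo < lo_tick or hi > hi_tick
    )


def to_percent(fraction):
    """Convert a fractional accuracy reading to a percentage."""
    return round(fraction * 100, 1)
```

Calling `outside_ticks("tinyTruthfulQA - Baseline")` flags the Multiple-choice and Qwen2.5-14B series, whose readings of about 0.15 and 0.85 lie just beyond the 0.2 and 0.8 ticks.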

### Key Observations

* On tinyTruthfulQA, the "Multiple-choice" series (orange diamonds) is the lowest in every panel, at about 0.15, well below every other series.

* The "Generation" series (blue circles) sits at about 0.05 to 0.1 on DisambiguationQA but at about 0.5 on tinyTruthfulQA.

* Every model series is higher on tinyTruthfulQA (about 0.55 to 0.85) than on DisambiguationQA (about 0.1 to 0.45). Qwen2.5-14B is the highest model series in every panel, followed by Gemini-2.0-Flash.

* Accuracy remains roughly stable across iterations: the described fluctuations stay within bands about 0.1 to 0.15 wide.

* The CoT and Self-Consistency methods do not noticeably improve on the Baseline method. The described starting values differ from Baseline by at most about 0.05 on DisambiguationQA and not at all on tinyTruthfulQA.
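The comparison between methods can be made concrete by subtracting the Baseline starting values from the CoT and Self-Consistency starting values given in the Detailed Analysis. The following is a minimal sketch over those approximate readings. The names `SERIES`, `START`, `deltas_vs_baseline`, and `max_abs_delta` are illustrative assumptions, and the numbers are visual estimates rather than measured results.

```python
SERIES = [
    "Generation", "Multiple-choice", "Gemini-2.0-Flash", "Qwen2.5-14B",
    "Llama-3.1-8B", "SmolLM2-1.7B", "DeepSeek-R1-Distill-Llama-8B", "Qwen2.5-3B",
]

# The description gives identical tinyTruthfulQA readings for all three methods.
TINY = [0.50, 0.15, 0.75, 0.80, 0.60, 0.60, 0.70, 0.60]

# Approximate starting accuracies, in SERIES order, from the Detailed Analysis.
START = {
    "DisambiguationQA": {
        "Baseline": [0.05, 0.35, 0.25, 0.35, 0.20, 0.15, 0.20, 0.20],
        "CoT": [0.10, 0.30, 0.30, 0.35, 0.20, 0.20, 0.20, 0.20],
        "Self-Consistency": [0.10, 0.35, 0.30, 0.35, 0.20, 0.20, 0.20, 0.20],
    },
    "tinyTruthfulQA": {
        "Baseline": TINY,
        "CoT": TINY,
        "Self-Consistency": TINY,
    },
}


def deltas_vs_baseline(task, method):
    """Per-series difference (method minus Baseline), rounded to 2 decimals."""
    base = START[task]["Baseline"]
    other = START[task][method]
    return {name: round(o - b, 2) for name, b, o in zip(SERIES, base, other)}


def max_abs_delta(task):
    """Largest absolute change from Baseline across both other methods."""
    return max(
        abs(delta)
        for method in ("CoT", "Self-Consistency")
        for delta in deltas_vs_baseline(task, method).values()
    )
```

Under these readings, `max_abs_delta("DisambiguationQA")` is 0.05 and `max_abs_delta("tinyTruthfulQA")` is 0.0, which is smaller than the 0.1 to 0.15 fluctuation bands described for individual series.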

### Interpretation

The charts compare several language models on two question-answering tasks (DisambiguationQA and tinyTruthfulQA) under three methods: Baseline, Chain of Thought (CoT), and Self-Consistency. Accuracy depends far more on the task and the model than on the method: every model series scores higher on tinyTruthfulQA than on DisambiguationQA. The "Multiple-choice" series is the weakest on tinyTruthfulQA, while the "Generation" series does better on tinyTruthfulQA than on DisambiguationQA. The nearly flat lines indicate that accuracy does not change materially over iterations 0 to 5, so additional iterations yield little gain. CoT and Self-Consistency, which are intended to improve reasoning, show no substantial advantage over Baseline. This could mean that the tasks do not depend heavily on multi-step reasoning, or that the models do not make effective use of these methods.
