## Diagram: Artificial Neural Network Architecture

### Overview

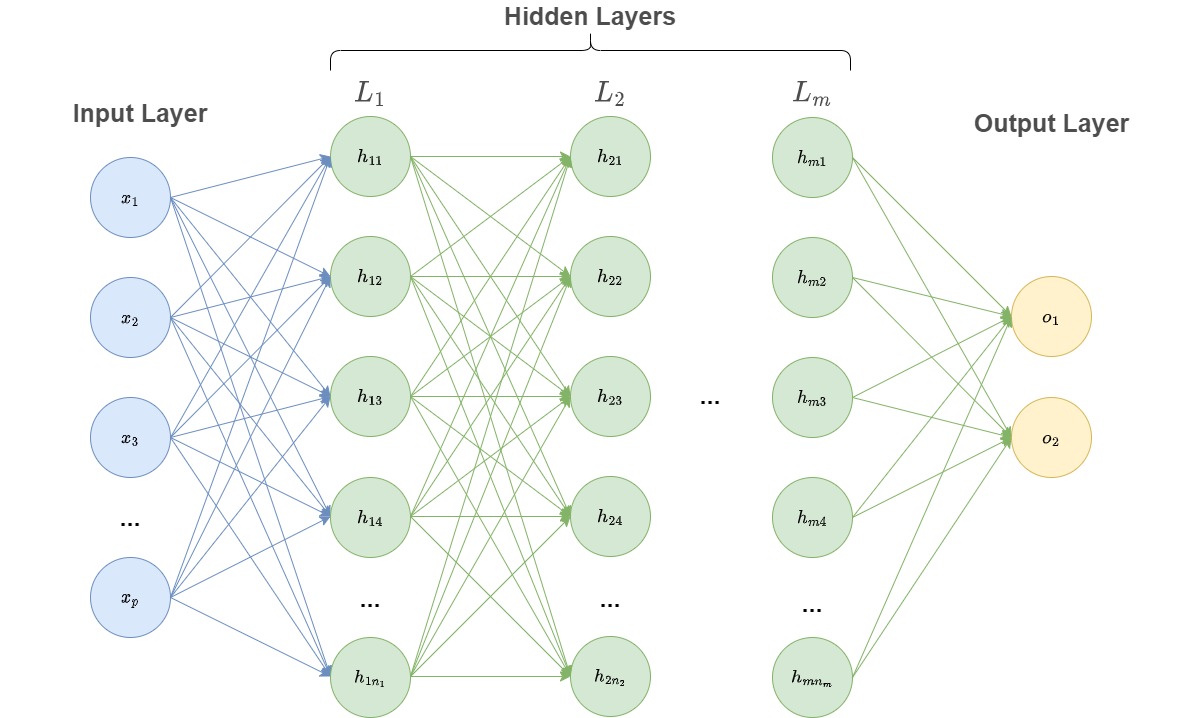

The image is a technical diagram illustrating the architecture of a feedforward artificial neural network (ANN), specifically a multi-layer perceptron (MLP). It visually represents the flow of data from an input layer, through one or more hidden layers, to an output layer. The diagram uses a standard node-and-edge notation where circles represent neurons (nodes) and lines represent weighted connections between them.

### Components

The diagram is organized into three primary vertical sections, labeled from left to right:

1. **Input Layer** (Leftmost section, light blue nodes):

   * Label: "Input Layer"

   * Nodes: Labeled `x₁`, `x₂`, `x₃`, followed by an ellipsis (`...`), and ending with `xₚ`. This indicates an input vector of `p` dimensions.

2. **Hidden Layers** (Central section, light green nodes):

   * Main Label: "Hidden Layers" (centered above the section).

   * The section is subdivided into multiple layers, each with its own label:

     * Layer 1: Labeled `L₁`. Contains nodes labeled `h₁₁`, `h₁₂`, `h₁₃`, `h₁₄`, followed by an ellipsis (`...`), and ending with `h₁ₙ₁`. This indicates the first hidden layer has `n₁` neurons.

     * Layer 2: Labeled `L₂`. Contains nodes labeled `h₂₁`, `h₂₂`, `h₂₃`, `h₂₄`, followed by an ellipsis (`...`), and ending with `h₂ₙ₂`. This indicates the second hidden layer has `n₂` neurons.

     * Intermediate Layers: An ellipsis (`...`) is placed between Layer 2 and the final hidden layer, indicating the potential for additional hidden layers.

     * Final Hidden Layer (m-th layer): Labeled `Lₘ`. Contains nodes labeled `hₘ₁`, `hₘ₂`, `hₘ₃`, `hₘ₄`, followed by an ellipsis (`...`), and ending with `hₘₙₘ`. This indicates the m-th hidden layer has `nₘ` neurons.

3. **Output Layer** (Rightmost section, light yellow nodes):

   * Label: "Output Layer"

   * Nodes: Labeled `o₁` and `o₂`. This indicates the network produces a 2-dimensional output vector.

**Connections (Edges):**

* **Input to Hidden (L₁):** Blue lines connect every input node (`x₁` through `xₚ`) to every node in the first hidden layer (`h₁₁` through `h₁ₙ₁`). This represents a fully connected layer.

* **Hidden to Hidden:** Green lines connect every node in one hidden layer to every node in the subsequent hidden layer (e.g., all nodes in `L₁` connect to all nodes in `L₂`). This pattern continues through all hidden layers.

* **Hidden (Lₘ) to Output:** Green lines connect every node in the final hidden layer (`hₘ₁` through `hₘₙₘ`) to every output node (`o₁` and `o₂`).
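Because every pair of adjacent layers is fully connected, each pair corresponds to one dense weight matrix. The following is a minimal Python sketch of that bookkeeping. The helper name `dense_shapes` and the example sizes are hypothetical: the diagram fixes only the output width of 2 and leaves `p` and the hidden widths symbolic.

```python
def dense_shapes(p, hidden_widths, n_out=2):
    """Return (fan_in, fan_out) for each fully connected layer pair.

    p:             input dimension (number of x nodes)
    hidden_widths: [n_1, ..., n_m], one width per hidden layer
    n_out:         output width; the diagram shows 2 output nodes
    """
    sizes = [p, *hidden_widths, n_out]
    # Pair each layer with its successor: (p, n_1), (n_1, n_2), ..., (n_m, n_out)
    return list(zip(sizes[:-1], sizes[1:]))


# Hypothetical example: p = 4 inputs, hidden layers of width 5 and 3.
shapes = dense_shapes(4, [5, 3])
print(shapes)  # [(4, 5), (5, 3), (3, 2)]
```

Each tuple is the number of sending and receiving nodes for one bundle of connection lines in the diagram, so `m` hidden layers always give `m + 1` such bundles.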

### Detailed Analysis

* **Network Topology:** The diagram depicts a fully connected, feedforward neural network. The flow of information is strictly from left (input) to right (output), with no cycles or backward connections.

* **Scalability Notation:** The use of ellipses (`...`) in the input layer, between hidden layers, and within each hidden layer is a critical notational element. It explicitly shows that the network is generalized:

  * The input dimension `p` can be any positive integer.

  * The number of hidden layers `m` can be any positive integer (including `m=1` for a single hidden layer).

  * The number of neurons `n₁`, `n₂`, ..., `nₘ` in each hidden layer can vary independently.

* **Node Labeling Convention:** The labeling follows a systematic pattern:

  * Input nodes: `xᵢ` where `i` is the feature index (1 to `p`).

  * Hidden nodes: `hₗₖ` where `l` is the layer index (1 to `m`) and `k` is the neuron index within that layer (1 to `nₗ`).

  * Output nodes: `oⱼ` where `j` is the output index (1 to 2 in this case).
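Under this indexing, the computation at each node can be written out explicitly. The diagram does not show weights, biases, or activation functions, so the symbols $w^{(l)}_{ki}$, $b^{(l)}_k$, and $\sigma$ below are assumed notation for a standard MLP, not labels taken from the figure:

$$
h_{l,k} = \sigma\!\Big(\sum_{i=1}^{n_{l-1}} w^{(l)}_{ki}\, h_{l-1,i} + b^{(l)}_k\Big),
\qquad l = 1,\dots,m,\quad k = 1,\dots,n_l,
$$

with the conventions $h_{0,i} = x_i$ and $n_0 = p$. Assuming a linear output layer (an output activation such as softmax could follow, depending on the task), the two outputs would be

$$
o_j = \sum_{k=1}^{n_m} w^{(m+1)}_{jk}\, h_{m,k} + b^{(m+1)}_j, \qquad j = 1, 2.
$$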

### Key Observations

1. **Fully Connected Layers:** Every possible connection between adjacent layers is drawn, emphasizing that this is a dense network where each neuron receives input from all neurons in the previous layer.

2. **Generalized Architecture:** The diagram is not a specific instance but a template for a class of neural networks. The variables (`p`, `m`, `n₁`, `n₂`, ..., `nₘ`) allow it to represent networks of vastly different sizes and complexities.

3. **Color Coding:** A consistent color scheme is used to differentiate layer types: light blue for input, light green for hidden, and light yellow for output. The connection lines also follow a scheme: blue for input-to-first-hidden, and green for all subsequent hidden-to-hidden and hidden-to-output connections.

4. **Output Dimension:** The output layer has exactly two nodes (`o₁`, `o₂`), which suggests a task with two outputs (e.g., two-class classification with one output neuron per class, or regression on two target variables).

### Interpretation

This diagram serves as a foundational schematic for understanding deep learning models. It visually communicates the core concepts of a multi-layer perceptron:

* **Hierarchical Feature Learning:** The multiple hidden layers (`L₁` to `Lₘ`) suggest the network's ability to learn increasingly abstract representations of the input data. Early layers (`L₁`) might learn simple features, while deeper layers (`Lₘ`) combine them into complex patterns.

* **Universal Approximation:** With at least one hidden layer and a suitable non-linear activation function (implied but not shown), a network of this form can, in theory, approximate any continuous function on a compact domain to arbitrary accuracy, given enough hidden neurons (`nₗ`). The ellipses indicate that `m` and `nₗ` are free parameters; increasing either raises the model's capacity.

* **Parameterization:** The diagram implicitly represents a large set of learnable parameters: one weight per drawn connection line, plus one bias per hidden and output neuron (biases are not visualized). The total number of parameters is determined by `p`, the hidden widths `n₁`, ..., `nₘ` (and hence `m`), and the output width (2 here).

* **Task Specification:** The fixed two-node output layer indicates that the network produces a two-value output, which frames the type of problem it is designed to solve. The symbolic input size `p` means the template can be instantiated for feature vectors of any length, although any single instantiated network has a fixed `p`.
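To make the parameterization point concrete, the parameter count can be computed from the layer widths alone: each fully connected layer pair contributes `fan_in * fan_out` weights plus `fan_out` biases. The sketch below assumes one bias per hidden and output neuron (as stated above) and uses a hypothetical helper name, `count_parameters`, with illustrative sizes that do not come from the diagram.

```python
def count_parameters(p, hidden_widths, n_out=2):
    """Count weights and biases of a fully connected MLP.

    Assumes one weight per connection and one bias per hidden/output neuron.
    """
    sizes = [p, *hidden_widths, n_out]
    total = 0
    for fan_in, fan_out in zip(sizes[:-1], sizes[1:]):
        total += fan_in * fan_out  # one weight per drawn connection line
        total += fan_out           # one bias per receiving neuron
    return total


# Hypothetical sizes: p = 4, n_1 = 5, n_2 = 3, two outputs.
# (4*5 + 5) + (5*3 + 3) + (3*2 + 2) = 25 + 18 + 8
print(count_parameters(4, [5, 3]))  # 51
```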

In essence, this image is a canonical representation of a deep neural network's "wiring diagram," abstracting away the specific mathematical operations (like weighted sums and activation functions) to focus on the architecture's topology and scalability.
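The operations the diagram abstracts away can be sketched in a few lines. The following is a minimal pure-Python forward pass, not a definitive implementation: the `tanh` activation, the small Gaussian weight initialization, the linear output layer, and the names `init_mlp`, `dense`, and `forward` are all assumptions made for illustration, since the figure specifies none of them.

```python
import math
import random


def init_mlp(p, hidden_widths, n_out=2, seed=0):
    """Create (weights, biases) for each fully connected layer pair."""
    rng = random.Random(seed)
    sizes = [p, *hidden_widths, n_out]
    params = []
    for fan_in, fan_out in zip(sizes[:-1], sizes[1:]):
        # One row of weights per receiving neuron (assumed Gaussian init).
        W = [[rng.gauss(0.0, 0.1) for _ in range(fan_in)] for _ in range(fan_out)]
        b = [0.0] * fan_out
        params.append((W, b))
    return params


def dense(W, b, h):
    """Weighted sum plus bias for every neuron in the receiving layer."""
    return [sum(w * v for w, v in zip(row, h)) + bias for row, bias in zip(W, b)]


def forward(params, x):
    """Propagate x left to right: hidden layers use tanh, output is linear."""
    h = list(x)
    for W, b in params[:-1]:
        h = [math.tanh(z) for z in dense(W, b, h)]
    W_out, b_out = params[-1]
    return dense(W_out, b_out, h)


# Hypothetical sizes: p = 4 inputs, hidden widths 5 and 3, two outputs.
params = init_mlp(p=4, hidden_widths=[5, 3])
o = forward(params, [0.5, -1.0, 2.0, 0.0])
print(len(o))  # 2
```

The loop in `forward` mirrors the strictly left-to-right flow in the diagram: each iteration consumes the previous layer's activations and produces the next layer's, with no feedback connections.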