## Diagram: Synergized Model Architecture for AI Applications

### Overview

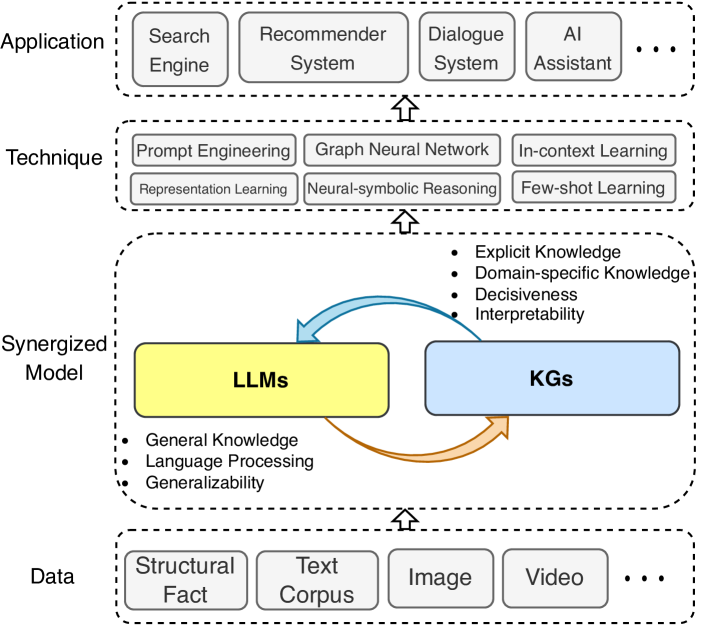

The diagram illustrates a layered framework for AI applications built around the integration of Large Language Models (LLMs) and Knowledge Graphs (KGs). Data from the bottom layer feeds a synergized LLM and KG model, which a set of techniques connects to the applications at the top. The diagram emphasizes the bidirectional interaction between LLMs and KGs and the complementary strengths each contributes.

### Components

1. **Application Layer** (Top):
   - Search Engine
   - Recommender System
   - Dialogue System
   - AI Assistant
   - *... (ellipsis indicates additional unspecified components)*

2. **Technique Layer** (Middle):
   - Prompt Engineering
   - Graph Neural Network
   - In-context Learning
   - Representation Learning
   - Neural-symbolic Reasoning
   - Few-shot Learning

3. **Synergized Model** (Central):
   - **LLMs** (Yellow box):
     - General Knowledge
     - Language Processing
     - Generalizability
   - **KGs** (Blue box):
     - Explicit Knowledge
     - Domain-specific Knowledge
     - Decisiveness
     - Interpretability
   - Arrows:
     - Orange bidirectional arrow between LLMs and KGs (mutual enhancement)
     - Blue arrow from the Data Layer to the Synergized Model (data input)

4. **Data Layer** (Bottom):
   - Structural Fact
   - Text Corpus
   - Image
   - Video
   - *... (ellipsis indicates additional data types)*
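One way to make the layer structure and arrows explicit is to encode the diagram as data. The sketch below is a minimal, hypothetical encoding: the layer and component labels are copied from the figure, while the `Edge` and `Architecture` classes and the `bidirectional_pairs` helper are illustrative assumptions, not part of the source.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Edge:
    """An arrow in the diagram (hypothetical representation)."""
    source: str
    target: str
    bidirectional: bool = False


@dataclass
class Architecture:
    """Layers mapped to their component labels, plus the arrows between them."""
    layers: dict[str, list[str]] = field(default_factory=dict)
    edges: list[Edge] = field(default_factory=list)

    def bidirectional_pairs(self) -> list[tuple[str, str]]:
        """Return (source, target) for every edge drawn with two arrowheads."""
        return [(e.source, e.target) for e in self.edges if e.bidirectional]


diagram = Architecture(
    layers={
        "Application": ["Search Engine", "Recommender System",
                        "Dialogue System", "AI Assistant"],
        "Technique": ["Prompt Engineering", "Graph Neural Network",
                      "In-context Learning", "Representation Learning",
                      "Neural-symbolic Reasoning", "Few-shot Learning"],
        "Synergized Model": ["LLMs", "KGs"],
        "Data": ["Structural Fact", "Text Corpus", "Image", "Video"],
    },
    edges=[
        Edge("LLMs", "KGs", bidirectional=True),  # orange arrow: mutual enhancement
        Edge("Data", "Synergized Model"),         # blue arrow: data input
    ],
)

print(diagram.bidirectional_pairs())  # [('LLMs', 'KGs')]
```

Edge endpoints here mix layer names ("Data") and component names ("LLMs") because the figure draws arrows at both levels; a fuller model might separate the two.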

### Detailed Analysis

- **LLMs** are presented as the source of general knowledge, with strengths in language processing and generalizability.

- **KGs** are highlighted for their explicit, domain-specific knowledge, along with decisiveness and interpretability.

- The bidirectional orange arrow between LLMs and KGs suggests iterative refinement: LLMs provide contextual understanding to KGs, while KGs ground LLMs in factual knowledge.

- Data types (Structural Fact, Text Corpus, Image, Video) feed into the model, implying multimodal input capabilities.

- Techniques like Prompt Engineering and Graph Neural Networks are positioned as enabling methods for the Synergized Model.
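The two directions of the orange arrow can be illustrated with a toy triple store. This is a minimal sketch under stated assumptions: the triples are made-up examples, `ground` treats any claim absent from the KG as unsupported (a closed-world simplification), and `extract_triples` is a hypothetical stub standing in for LLM-based relation extraction rather than a call to any real model or library.

```python
# Toy illustration of KG -> LLM grounding and LLM -> KG enrichment.
# All data and helper names are hypothetical.

Triple = tuple[str, str, str]

kg: set[Triple] = {
    ("Paris", "capital_of", "France"),
    ("France", "member_of", "European Union"),
}


def ground(claims: list[Triple], graph: set[Triple]) -> tuple[list[Triple], list[Triple]]:
    """KGs -> LLMs: split model-generated claims into KG-supported and unsupported."""
    supported = [c for c in claims if c in graph]
    unsupported = [c for c in claims if c not in graph]
    return supported, unsupported


def extract_triples(text: str) -> list[Triple]:
    """LLMs -> KGs: hypothetical stand-in for LLM-based relation extraction.

    Returns hard-coded output so the example runs without a model.
    """
    return [("Berlin", "capital_of", "Germany")] if "Berlin" in text else []


def enrich(graph: set[Triple], text: str) -> set[Triple]:
    """Return a new graph with the extracted triples added (input is not mutated)."""
    return graph | set(extract_triples(text))


claims = [("Paris", "capital_of", "France"), ("Paris", "capital_of", "Italy")]
ok, flagged = ground(claims, kg)
print(ok)       # [('Paris', 'capital_of', 'France')]
print(flagged)  # [('Paris', 'capital_of', 'Italy')]

kg2 = enrich(kg, "Berlin is the capital of Germany.")
print(len(kg2))  # 3
```

Because a fact missing from the KG may simply be unrecorded rather than false, a real system would more likely route flagged claims for further checking than reject them outright.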

### Key Observations

1. **Bidirectional Integration**: The orange arrow between LLMs and KGs is the only bidirectional connection, emphasizing their interdependent relationship.

2. **Data Flow**: Data flows upward from the Data Layer into the Synergized Model, and no arrow points back down, suggesting a unidirectional data pipeline.

3. **Technique Hierarchy**: Techniques are positioned above the Synergized Model, implying they are methodological frameworks rather than direct components.

4. **Application Diversity**: The Application Layer includes both traditional (Search Engine) and emerging (AI Assistant) use cases.

### Interpretation

This architecture demonstrates a knowledge-centric AI paradigm where:

1. **LLMs and KGs Complement Each Other**: LLMs handle linguistic generalization while KGs provide structured domain expertise, creating a hybrid system that mitigates individual limitations (e.g., hallucination in LLMs, rigidity in KGs).

2. **Data Diversity Matters**: The inclusion of multimodal data (text, images, video) suggests the model is designed for cross-modal understanding, though specific fusion mechanisms are not shown.

3. **Technique Specialization**: Different techniques serve as specialized tools for different parts of the model (e.g., Prompt Engineering for steering LLMs, Graph Neural Networks for encoding KG structure).

4. **Application Flexibility**: The ellipses in both the Application and Data Layers mark those lists as open-ended, implying the framework can extend to new use cases and data types.
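Item 1, together with the Prompt Engineering example in item 3, can be made concrete with one possible KG-augmented prompting step: retrieve facts about the entities in a question and place them in the prompt as in-context evidence. The diagram does not specify any such mechanism, so this is an assumption; `retrieve` and `build_prompt` are hypothetical helpers, entity linking is skipped by passing the query entities in directly, and no LLM is called.

```python
# Hypothetical retrieve-then-prompt sketch: KG facts mentioning the query
# entities are serialized into the prompt so an LLM can answer from
# explicit knowledge. No model is invoked here.

Triple = tuple[str, str, str]


def retrieve(graph: list[Triple], entities: set[str]) -> list[Triple]:
    """Return triples whose subject or object is one of the query entities."""
    return [t for t in graph if t[0] in entities or t[2] in entities]


def build_prompt(question: str, facts: list[Triple]) -> str:
    """Serialize facts as in-context evidence ahead of the question."""
    lines = [f"- {s} {r.replace('_', ' ')} {o}" for s, r, o in facts]
    return (
        "Answer using only the facts below. If they are insufficient, say so.\n"
        "Facts:\n" + "\n".join(lines) + f"\nQuestion: {question}\nAnswer:"
    )


graph = [
    ("Inception", "directed_by", "Christopher Nolan"),
    ("Inception", "released_in", "2010"),
    ("Interstellar", "directed_by", "Christopher Nolan"),
]
facts = retrieve(graph, {"Inception"})
prompt = build_prompt("Who directed Inception?", facts)
print(prompt)
```

The instruction to answer only from the listed facts is one common way to reduce hallucination; the LLM still handles phrasing and question understanding, while the KG supplies the facts.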

The diagram positions this synergized approach as a solution to the "knowledge gap" in current AI systems, where general language models lack domain specificity and structured knowledge systems lack contextual adaptability.