## Diagram: GPU-REASON and Intra-REASON Pipeline Architecture

### Overview

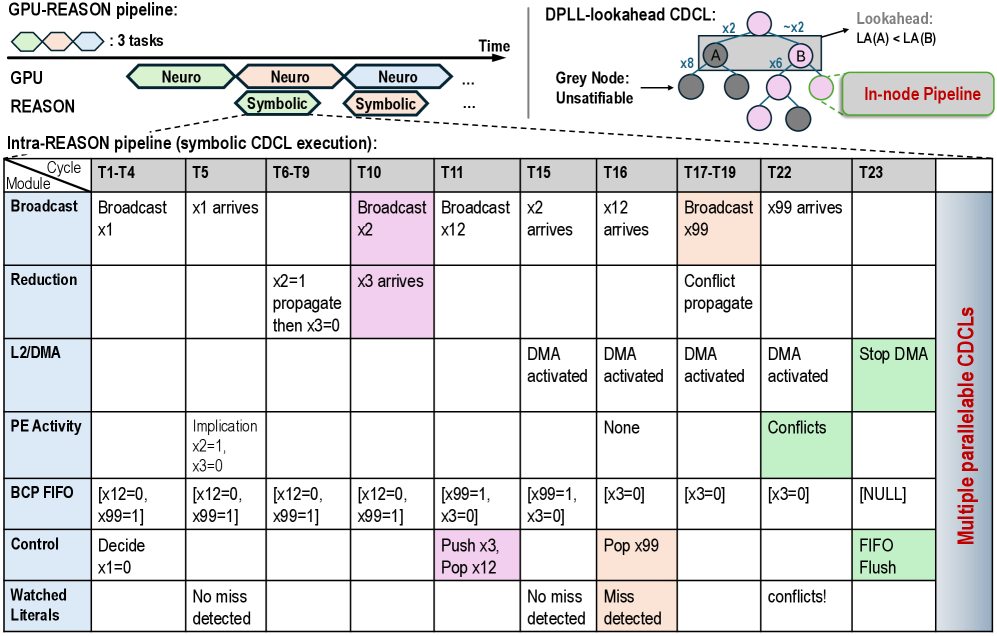

The image depicts two interconnected computational pipelines:

1. **GPU-REASON Pipeline**: A sequential task flow (Neuro → Neuro → Symbolic) over time, with a DPLL-lookahead CDCL component.

2. **Intra-REASON Pipeline**: A tabular representation of symbolic CDCL execution across time steps (T1-T23), detailing module interactions (Broadcast, Reduction, L2/DMA, etc.).

### Components/Axes

#### GPU-REASON Pipeline

- **Tasks**:

  - Neuro (green), Neuro (pink), Symbolic (blue) arranged sequentially over time.

- **DPLL-lookahead CDCL**:

  - Nodes A and B connected via edges labeled `x2`, `x6`, `x8`.

  - Lookahead condition: `LA(A) < LA(B)`.

  - Grey nodes labeled "Unsatisfiable."

- **In-node Pipeline**: Highlighted in green.

#### Intra-REASON Pipeline (Symbolic CDCL Execution)

- **Rows (Modules)**:

  - Broadcast, Reduction, L2/DMA, PE Activity, BCP FIFO, Control, Watched Literals.

- **Columns (Time Steps)**:

  - T1-T4, T5, T6-T9, T10, T11, T15, T16, T17-T19, T22, T23.

- **Color Coding**:

  - Pink: Broadcast/Reduction actions (e.g., "Broadcast x2" at T10).

  - Green: Conflict/Flush events (e.g., "Conflicts" at T22).

### Detailed Analysis

#### GPU-REASON Pipeline

- **Task Flow**:

  - The two Neuro tasks run first, followed by the Symbolic task; the time steps T1-T23 appear in the Intra-REASON table, which details the symbolic CDCL execution.

  - The DPLL-lookahead CDCL component compares lookahead values (`LA(A) < LA(B)`) to prioritize branches during decision-making.

#### Intra-REASON Pipeline

- **Key Actions**:

  - **Broadcast**: Propagates variable assignments (e.g., "Broadcast x1" at T1-T4, "Broadcast x99" at T17-T19).

  - **Reduction**: Carries propagation results (e.g., "x2=1 propagate then x3=0" at T6-T9).

  - **L2/DMA**: Activated at T15-T19, deactivated at T23.

  - **PE Activity**: Reports implications (e.g., "x2=1, x3=0" at T5) and conflicts (T22).

  - **BCP FIFO**: Holds queued assignments awaiting propagation (e.g., `[x12=0, x99=1]` at T1-T4).

  - **Control**: Issues decisions (e.g., "Decide x1=0" at T1-T4).

  - **Watched Literals**: Detects misses (e.g., "Miss detected" at T16).
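The interaction between the Control, Broadcast, PE Activity, and BCP FIFO rows can be sketched as a queue-driven unit-propagation loop. The following is a minimal Python sketch, not the hardware's actual algorithm: the clause set, the `bcp` function, and the signed-integer literal encoding are assumptions, chosen so that deciding `x1=0` implies `x2=1` and then `x3=0`, as in the table's labels.

```python
from collections import deque

def literal_value(lit, assignment):
    """Return True/False if the literal is assigned, else None."""
    value = assignment.get(abs(lit))
    if value is None:
        return None
    return value if lit > 0 else not value

def bcp(clauses, assignment, fifo):
    """Propagate queued assignments; return a conflicting clause or None.

    clauses: list of clauses, each a list of signed ints (3 is x3, -3 is NOT x3).
    assignment: dict var -> bool, updated in place.
    fifo: deque of (var, value) pairs that are already recorded in `assignment`.
    """
    while fifo:
        var, value = fifo.popleft()            # "broadcast" one assignment
        falsified = -var if value else var     # the literal this assignment makes false
        for clause in clauses:                 # in hardware, PEs would check in parallel
            if falsified not in clause:
                continue
            values = [literal_value(l, assignment) for l in clause]
            if any(v is True for v in values):
                continue                       # clause already satisfied
            unassigned = [l for l, v in zip(clause, values) if v is None]
            if not unassigned:
                fifo.clear()                   # conflict: flush pending work
                return clause
            if len(unassigned) == 1:           # unit clause: forced implication
                lit = unassigned[0]
                assignment[abs(lit)] = lit > 0
                fifo.append((abs(lit), lit > 0))
    return None

# Hypothetical clauses: (x1 OR x2) AND (NOT x2 OR NOT x3)
clauses = [[1, 2], [-2, -3]]
assignment = {1: False}                        # "Decide x1=0"
fifo = deque([(1, False)])
conflict = bcp(clauses, assignment, fifo)
print(conflict, assignment)  # None {1: False, 2: True, 3: False}
```

The sketch scans every clause on each broadcast for simplicity; a watched-literal scheme would restrict the scan to clauses that watch the falsified literal.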

#### DPLL-lookahead CDCL

- **Node Connections**:

  - Node A is connected to Node B via edges labeled `x2`, `x6`, `x8`.

  - The lookahead condition `LA(A) < LA(B)` is used to prioritize paths.
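A lookahead comparison of this kind can be illustrated with a small Python sketch. The figure does not define how `LA` is computed or which direction of the comparison is preferred, so everything here is an assumption: `lookahead_score` counts the clauses left unsatisfied after tentatively asserting a literal, a lower score is treated as better, and a branch that immediately empties a clause is treated as unsatisfiable (analogous to the grey nodes).

```python
def lookahead_score(clauses, lit):
    """Hypothetical LA score for tentatively asserting `lit`.

    Returns the number of clauses still unsatisfied, or None if the
    assertion falsifies a clause outright (an unsatisfiable branch).
    """
    remaining = 0
    for clause in clauses:
        if lit in clause:
            continue                          # clause satisfied by lit
        reduced = [l for l in clause if l != -lit]
        if not reduced:
            return None                       # empty clause: dead branch
        remaining += 1
    return remaining

def pick_branch(clauses, candidates):
    """Return the viable candidate literal with the lowest score, or None."""
    scored = [(lookahead_score(clauses, lit), lit) for lit in candidates]
    viable = [(score, lit) for score, lit in scored if score is not None]
    if not viable:
        return None
    return min(viable)[1]

# Hypothetical clause set over x2, x6, x8 (the edge labels in the figure).
clauses = [[2, 6], [-2, 8], [6, 8], [-6]]
best = pick_branch(clauses, [2, 6, 8])
print(best)  # 8
```

Here asserting `x6` is rejected because it falsifies the clause `(NOT x6)`, and `x8` is preferred over `x2` because it leaves two clauses unsatisfied instead of three.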

### Key Observations

1. **Temporal Progression**:

   - Early steps (T1-T4, T5) cover the initial decision, its broadcast, and the first implications; T6-T9 continue propagation, and T15-T19 involve L2/DMA activity.

   - Late steps (T22-T23) involve conflict detection and FIFO flushes.

2. **Color Significance**:

   - Pink highlights active Broadcast/Reduction operations.

   - Green marks conflict/flush events (e.g., "Conflicts" at T22).

3. **Unsatisfiable Nodes**: Grey nodes in the DPLL-lookahead CDCL mark branches of the search in which the formula cannot be satisfied.

### Interpretation

- **Pipeline Coordination**: The GPU-REASON pipeline orchestrates task sequencing, while the Intra-REASON pipeline manages symbolic CDCL execution with real-time conflict detection.

- **Lookahead Optimization**: The DPLL-lookahead CDCL compares lookahead values between nodes (`LA(A) < LA(B)`) to steer the search away from branches marked unsatisfiable, reducing wasted exploration.

- **Conflict Management**: The BCP FIFO and Control modules handle variable states, with flushes triggered by unresolved conflicts (e.g., T22).

- **Anomalies**: The "Miss detected" at T16 falls within the L2/DMA activity at T15-T19, which suggests that the missing watched-literal data may be fetched from L2 before propagation resumes.
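One possible reading of the "Miss detected" event is a small local store of watch lists that falls back to slower memory on a miss. The Python sketch below illustrates only that reading; the `WatchListCache` class, its FIFO eviction policy, and the dictionary standing in for L2 memory are all assumptions and are not taken from the figure.

```python
class WatchListCache:
    """Hypothetical local watch-list store backed by a slower memory."""

    def __init__(self, backing_store, capacity):
        self.backing_store = backing_store    # stand-in for L2 memory
        self.capacity = capacity              # assumed to be at least 1
        self.local = {}                       # literal -> list of clause ids
        self.misses = 0

    def lookup(self, lit):
        """Return the clause ids watching `lit`, fetching on a miss."""
        if lit in self.local:
            return self.local[lit]
        self.misses += 1                      # corresponds to "Miss detected"
        watch_list = self.backing_store.get(lit, [])   # stand-in for a DMA fetch
        if len(self.local) >= self.capacity:
            oldest = next(iter(self.local))   # evict the oldest entry (FIFO)
            del self.local[oldest]
        self.local[lit] = watch_list
        return watch_list

# Hypothetical contents: literal x2 is watched by clauses 0 and 1, NOT x3 by clause 1.
store = {2: [0, 1], -3: [1]}
cache = WatchListCache(store, capacity=1)
cache.lookup(2)      # miss, fetched from the backing store
cache.lookup(2)      # hit
cache.lookup(-3)     # miss, evicts the entry for x2
print(cache.misses)  # 2
```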

This architecture balances parallelism (via Broadcast/Reduction) with sequential decision-making (via DPLL-lookahead), a combination that matters for high-performance symbolic reasoning systems.