## Text Document: AI Response Template and Examples

### Overview

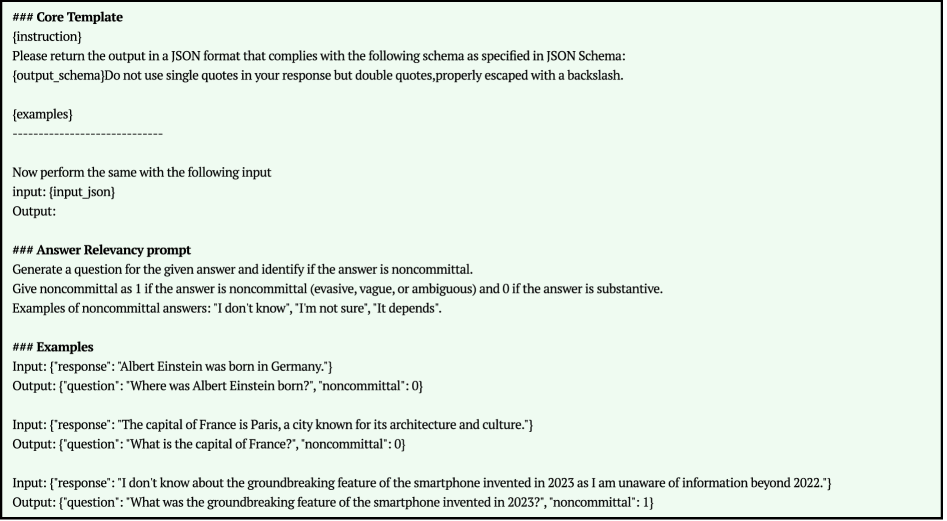

The image displays a text document on a light green background, outlining a template and examples for generating structured AI responses. It provides instructions for formatting output as JSON and includes a specific prompt for evaluating answer relevancy.

### Components

The document is structured into three main sections, each introduced by a `###` heading:

1. **Core Template**: Defines the structure for an AI instruction and expected JSON output.

2. **Answer Relevancy prompt**: Provides instructions for generating a question from a given answer and classifying that answer as noncommittal (1) or substantive (0).

3. **Examples**: Shows three input-output pairs demonstrating the application of the "Answer Relevancy prompt".

### Detailed Analysis

The text content is transcribed below, preserving the original structure and placeholders.

**Section 1: Core Template**

```

### Core Template

{instruction}

Please return the output in a JSON format that complies with the following schema as specified in JSON Schema:

{output_schema}Do not use single quotes in your response but double quotes,properly escaped with a backslash.

{examples}

--------------------------------------------

Now perform the same with the following input

input: {input_json}

Output:

```
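As a minimal sketch of how the four placeholders might be filled, the Python below renders the template with `str.format`. The function name `render_prompt`, its parameters, and the choice to serialize examples with `json.dumps` are illustrative assumptions; the document does not show the code that consumes this template.

```python
import json

# Template text as transcribed above (blank lines condensed).
# The four {placeholders} are filled in at render time.
CORE_TEMPLATE = (
    "{instruction}\n"
    "Please return the output in a JSON format that complies with the "
    "following schema as specified in JSON Schema:\n"
    "{output_schema}Do not use single quotes in your response but double "
    "quotes,properly escaped with a backslash.\n\n"
    "{examples}\n"
    "--------------------------------------------\n\n"
    "Now perform the same with the following input\n"
    "input: {input_json}\n"
    "Output:"
)


def render_prompt(instruction, output_schema, examples, input_data):
    """Fill the core template (hypothetical helper, not from the source).

    `examples` is a list of (input_dict, output_dict) pairs.
    """
    example_text = "\n\n".join(
        "Input: " + json.dumps(inp) + "\nOutput: " + json.dumps(out)
        for inp, out in examples
    )
    # Braces inside the substituted values are not re-parsed by str.format,
    # so JSON text can be passed in safely.
    return CORE_TEMPLATE.format(
        instruction=instruction,
        output_schema=json.dumps(output_schema),
        examples=example_text,
        input_json=json.dumps(input_data),
    )
```

Serializing every dynamic part with `json.dumps` keeps the rendered prompt consistent with the template's own double-quote rule.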

**Section 2: Answer Relevancy prompt**

```

### Answer Relevancy prompt

Generate a question for the given answer and identify if the answer is noncommittal.

Give noncommittal as 1 if the answer is noncommittal (evasive, vague, or ambiguous) and 0 if the answer is substantive.

Examples of noncommittal answers: "I don't know", "I'm not sure", "It depends".

```
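The prompt implies an output with two fields, a `question` string and a `noncommittal` flag of 0 or 1. The sketch below writes that down as a JSON Schema and checks a model reply against it by hand. The schema contents and the `parse_output` helper are assumptions: the document never shows what `{output_schema}` actually expands to.

```python
import json

# Hypothetical schema; the source does not show the real {output_schema}.
OUTPUT_SCHEMA = {
    "type": "object",
    "properties": {
        "question": {"type": "string"},
        "noncommittal": {"type": "integer", "enum": [0, 1]},
    },
    "required": ["question", "noncommittal"],
}


def parse_output(raw):
    """Parse a model reply and check the two expected fields.

    Returns the parsed dict, or None if the reply is not valid JSON
    or does not have the expected shape.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    question = data.get("question")
    flag = data.get("noncommittal")
    if not isinstance(question, str):
        return None
    # bool is a subclass of int in Python, so reject True/False explicitly.
    if isinstance(flag, bool) or not isinstance(flag, int) or flag not in (0, 1):
        return None
    return data
```

A reply written with single quotes fails at `json.loads`, which is one practical reason for the template's insistence on double quotes.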

**Section 3: Examples**

```

### Examples

Input: {'response': 'Albert Einstein was born in Germany.'}

Output: {'question': 'Where was Albert Einstein born?', 'noncommittal': 0}

Input: {'response': 'The capital of France is Paris, a city known for its architecture and culture.'}

Output: {'question': 'What is the capital of France?', 'noncommittal': 0}

Input: {'response': 'I don't know about the groundbreaking feature of the smartphone invented in 2023 as I am unaware of information beyond 2022.'}

Output: {'question': 'What was the groundbreaking feature of the smartphone invented in 2023?', 'noncommittal': 1}

```
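As transcribed, the examples use single quotes, and the third input contains an apostrophe inside a single-quoted string, so it cannot be parsed as written. One way to get unambiguous, JSON-compliant example text is to hold the pairs as Python objects and serialize them; the sketch below does that. The helper name `format_examples` is hypothetical.

```python
import json

# The three example pairs from the document, held as Python objects.
EXAMPLES = [
    (
        {"response": "Albert Einstein was born in Germany."},
        {"question": "Where was Albert Einstein born?", "noncommittal": 0},
    ),
    (
        {"response": "The capital of France is Paris, a city known for "
                     "its architecture and culture."},
        {"question": "What is the capital of France?", "noncommittal": 0},
    ),
    (
        {"response": "I don't know about the groundbreaking feature of the "
                     "smartphone invented in 2023 as I am unaware of "
                     "information beyond 2022."},
        {"question": "What was the groundbreaking feature of the "
                     "smartphone invented in 2023?", "noncommittal": 1},
    ),
]


def format_examples(pairs):
    """Render (input, output) pairs as double-quoted JSON lines."""
    return "\n\n".join(
        "Input: " + json.dumps(inp) + "\nOutput: " + json.dumps(out)
        for inp, out in pairs
    )


if __name__ == "__main__":
    print(format_examples(EXAMPLES))
```

Inside a double-quoted JSON string the apostrophe in "don't" needs no escaping, so the third example serializes cleanly.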

### Key Observations

* The document is a prompt template with a worked task, likely used to configure or test how an AI model produces structured responses.

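* It emphasizes strict JSON formatting, requiring double quotes and proper escaping. The transcribed examples themselves use single quotes (Python dictionary style), so they do not follow this rule as written.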

* The "Answer Relevancy prompt" introduces a binary classification task (0 or 1) based on the substantive or noncommittal nature of an answer.

* The examples clearly illustrate the expected transformation from an input `response` to an output containing a generated `question` and a `noncommittal` score.

* The third example demonstrates a noncommittal answer (score 1) where the response explicitly states a lack of knowledge due to a knowledge cutoff.

### Interpretation

This document reads as a prompt specification for an AI evaluation or training pipeline. Its primary purpose is to standardize how an AI system processes a given "answer" (response) to produce two outputs: a question that the answer addresses, and a binary flag indicating whether the original answer was evasive or substantive.

The knowledge cutoff reference ("unaware of information beyond 2022") in the third example is notable. It shows that a response declining to answer because of training data limitations is meant to be flagged as noncommittal (1), while factual, direct answers are flagged as substantive (0). The document does not state how the flag is used downstream; plausible uses include automated quality assurance, reinforcement learning from human feedback (RLHF), or benchmarking AI response reliability.