
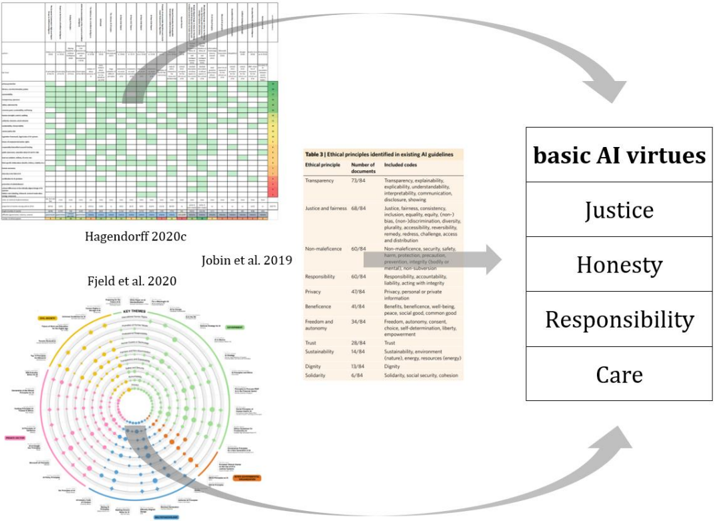

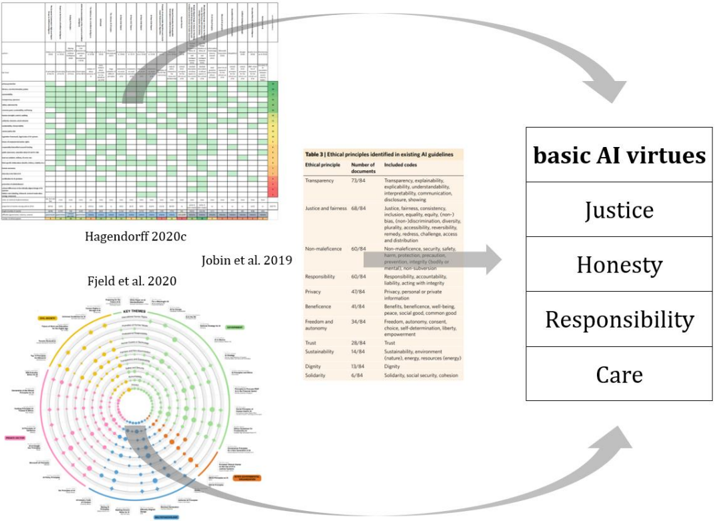

## Conceptual Diagram: Derivation of Basic AI Virtues from Existing Ethical Guidelines

### Overview

This image is a conceptual diagram illustrating the synthesis of "basic AI virtues" from three distinct analyses of existing AI ethical guidelines. It visually connects detailed research findings from Hagendorff (2020c), Jobin et al. (2019), and Fjeld et al. (2020) to a distilled set of core AI virtues. The diagram suggests that these virtues are derived from the most prominent and frequently identified ethical principles in the broader AI ethics landscape.

### Components/Axes

The image is composed of four main regions:

1. **Top-left**: A heatmap/matrix chart titled "Hagendorff 2020c".

2. **Middle-left**: A radial chart titled "Fjeld et al. 2020".

3. **Middle-right**: A data table titled "Table 3 | Ethical principles identified in existing AI guidelines" attributed to "Jobin et al. 2019".

4. **Far-right**: A stacked list of "basic AI virtues".

**1. Hagendorff 2020c Chart (Top-left)**

* **Type**: Heatmap/Matrix.

* **Rows (Vertical Axis)**: A list of ethical principles or concepts. From top to bottom, the most clearly discernible labels are:

  * Transparency
  * Accountability
  * Fairness
  * Non-discrimination
  * Privacy
  * Security
  * Safety
  * Human control
  * Human oversight
  * Beneficence
  * Sustainability
  * Dignity
  * Autonomy
  * Freedom
  * Trust
  * Solidarity
  * Well-being
  * Inclusiveness
  * Explainability
  * Interpretability
  * Robustness
  * Reliability
  * Responsibility
  * Non-maleficence
  * Justice
  * Human values
  * Human rights
  * Democracy
  * Rule of law
  * Proportionality
  * Data governance
  * Data quality
  * Data protection
  * Data security
  * Data privacy
  * Data access
  * Data sharing
  * Data ownership
  * Data portability
  * Data sovereignty
  * Data ethics
  * Data literacy
  * Data education
  * Data awareness
  * (Many more principles are listed below these, but are too blurry to transcribe accurately.)

* **Columns (Horizontal Axis)**: Represent 84 distinct AI guidelines/documents, labeled numerically from 1 to 84.

* **Color Scale (Right Edge)**: A vertical gradient from dark green at the top to red at the bottom, indicating the prevalence of each principle across the 84 documents.

  * Dark Green: High prevalence (principle frequently mentioned).
  * Light Green/White: Medium to low prevalence.
  * Red: Very low or no prevalence (principle rarely mentioned).

* **Summary Row (Bottom)**: Contains numerical values, likely counts or percentages, but too blurry to transcribe.

**2. Fjeld et al. 2020 Chart (Middle-left)**

* **Type**: Radial chart / Concentric circle diagram.

* **Central Label**: "KEY THEMES"

* **Concentric Rings**: Represent hierarchical levels of themes and sub-themes.

* **Colored Segments/Dots**:

  * **Innermost Ring (Main Themes)**:
    * Yellow segment: "Human Rights"
    * Green segment: "Fairness"
    * Light Blue segment: "Privacy"
    * Dark Blue segment: "Accountability"
    * Pink segment: "Transparency"
    * Orange segment: "Safety"
    * Red segment: "Sustainability"

  * **Outer Rings (Sub-themes/Concepts)**:
    * Under "Human Rights" (Yellow): Dignity, Autonomy, Freedom, Non-discrimination, Inclusiveness, Well-being, Solidarity.
    * Under "Fairness" (Green): Justice, Equity, Bias, Non-discrimination.
    * Under "Privacy" (Light Blue): Data protection, Data security, Data governance, Data quality, Data access, Data sharing, Data ownership, Data portability, Data sovereignty, Data ethics, Data literacy, Data education, Data awareness.
    * Under "Accountability" (Dark Blue): Responsibility, Oversight, Human control, Robustness, Reliability.
    * Under "Transparency" (Pink): Explainability, Interpretability, Communication, Disclosure.
    * Under "Safety" (Orange): Security, Non-maleficence, Beneficence.
    * Under "Sustainability" (Red): Environmental, Social, Economic.

* **Dot Size**: The size of the dots indicates the relative importance or frequency of the concept.

**3. Jobin et al. 2019 Table (Middle-right)**

* **Type**: Data Table.

* **Title**: "Table 3 | Ethical principles identified in existing AI guidelines"

* **Columns**:

  1. "Ethical principle"
  2. "Number of documents"
  3. "Included codes"

* **Content**:

  | Ethical principle | Number of documents | Included codes |
  |---|---|---|
  | Transparency | 73/84 | Transparency, explainability, interpretability, communication, disclosure, showing |
  | Justice and fairness | 66/84 | Justice, fairness, consistency, inclusion, equality, equity, (non-)bias, (non-)discrimination, diversity, plurality, accessibility, reversibility, remedy, redress, challenge, access and distribution |
  | Non-maleficence | 60/84 | Non-maleficence, security, safety, harm, protection, precaution, prevention, integrity (bodily or mental), non-subversion |
  | Responsibility | 60/84 | Responsibility, accountability, liability, acting with integrity |
  | Privacy | 43/84 | Privacy, personal or private information |
  | Beneficence | 41/84 | Benefits, beneficence, well-being, peace, social good, common-good |
  | Freedom and autonomy | 34/84 | Freedom, autonomy, consent, choice, self-determination, liberty, empowerment |
  | Trust | 26/84 | Trust |
  | Sustainability | 14/84 | Sustainability, environment (nature), energy, resources (energy) |
  | Dignity | 13/84 | Dignity |
  | Solidarity | 6/84 | Solidarity, social security, cohesion |

**4. Basic AI Virtues Stack (Far-right)**

* **Type**: Stacked list.

* **Title**: "basic AI virtues"

* **Content (from top to bottom)**:

  1. Justice
  2. Honesty
  3. Responsibility
  4. Care

**Connecting Arrows**:

* A large grey arrow originates from the upper-middle section of the Hagendorff 2020c chart (indicating highly prevalent principles) and points towards the "basic AI virtues" stack.

* A grey arrow originates from the "Non-maleficence" row in the Jobin et al. 2019 table and points towards the "basic AI virtues" stack.

* A grey arrow originates from a prominent dark blue dot labeled "Responsibility" within the "Accountability" segment of the Fjeld et al. 2020 chart and points towards the "basic AI virtues" stack.

### Detailed Analysis

**Hagendorff 2020c Chart:**

The heatmap visually represents the frequency of various ethical principles across 84 AI guidelines.

* **Trend**: Principles near the top of the chart, from Transparency down through Justice in the row list above, show high prevalence, indicated by numerous dark green cells across the columns.

* Moving down the list, prevalence generally decreases: light green, white, and eventually red cells become more common, particularly for "Data governance" and its related data-specific rows. This suggests that data-specific ethical considerations are addressed less uniformly across the surveyed documents than broader principles.

**Fjeld et al. 2020 Chart:**

This radial chart categorizes AI ethical themes and highlights specific concepts within them.

* **Key Themes**: The chart identifies seven main themes: Human Rights (yellow), Fairness (green), Privacy (light blue), Accountability (dark blue), Transparency (pink), Safety (orange), and Sustainability (red).

* **Prominent Concepts**: Within these themes, larger dots appear to signify more emphasized concepts. For example:

  * Under "Human Rights", "Dignity" and "Autonomy" appear prominent.
  * Under "Fairness", "Justice" and "Equity" are notable.
  * Under "Privacy", "Data protection" and "Data security" are significant.
  * Under "Accountability", "Responsibility" is a large, central dot.
  * Under "Transparency", "Explainability" and "Interpretability" are prominent.
  * Under "Safety", "Non-maleficence" and "Beneficence" are notable.

* The arrow specifically highlights "Responsibility" (dark blue dot) as a key input.

**Jobin et al. 2019 Table:**

This table reports how many of the 84 surveyed guidelines cite each ethical principle.

* **Top Principles by Frequency**:

  1. Transparency: 73 of 84 documents (approx. 87%)
  2. Justice and fairness: 66 of 84 documents (approx. 79%)
  3. Non-maleficence: 60 of 84 documents (approx. 71%)
  4. Responsibility: 60 of 84 documents (approx. 71%)

* **Less Frequent Principles**:

  * Privacy: 43/84 (approx. 51%)
  * Beneficence: 41/84 (approx. 49%)
  * Freedom and autonomy: 34/84 (approx. 40%)
  * Trust: 26/84 (approx. 31%)
  * Sustainability: 14/84 (approx. 17%)
  * Dignity: 13/84 (approx. 15%)
  * Solidarity: 6/84 (approx. 7%)

* The "Included codes" column provides a detailed breakdown of sub-concepts associated with each main ethical principle, offering a qualitative dimension to the quantitative frequency data.

* The arrow specifically highlights "Non-maleficence" as a key input.
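The approximate percentages above follow from dividing each document count by the 84 surveyed guidelines. The sketch below recomputes them from the counts as transcribed in this description. The helper names and the cutoff of 60 documents used to pick out the "top" principles are illustrative assumptions; that cutoff simply happens to reproduce the four principles listed as most frequent.

```python
# Recompute the approximate shares from the Table 3 counts as transcribed above.
TOTAL_DOCUMENTS = 84

# Document counts per principle (transcribed values; not independently verified).
COUNTS = {
    "Transparency": 73,
    "Justice and fairness": 66,
    "Non-maleficence": 60,
    "Responsibility": 60,
    "Privacy": 43,
    "Beneficence": 41,
    "Freedom and autonomy": 34,
    "Trust": 26,
    "Sustainability": 14,
    "Dignity": 13,
    "Solidarity": 6,
}


def share_percent(count, total=TOTAL_DOCUMENTS):
    """Share of surveyed documents citing a principle, rounded to a whole percent."""
    return round(100 * count / total)


def principles_at_least(min_count, counts=COUNTS):
    """Principles cited in at least `min_count` documents, most frequent first.

    sorted() is stable even with reverse=True, so ties keep the table's order.
    """
    ranked = sorted(counts.items(), key=lambda item: item[1], reverse=True)
    return [name for name, count in ranked if count >= min_count]


for name, count in sorted(COUNTS.items(), key=lambda item: item[1], reverse=True):
    print(f"{name:<22} {count:>2}/{TOTAL_DOCUMENTS}  ~{share_percent(count)}%")

# Hypothetical cutoff: 60 documents isolates the four most frequently cited principles.
print(principles_at_least(60))
```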

**Basic AI Virtues Stack:**

This component presents the final output of the synthesis process.

* The four identified "basic AI virtues" are: Justice, Honesty, Responsibility, and Care.

### Key Observations

* There is a clear consensus on certain ethical principles across the AI guidelines, with "Transparency," "Justice and fairness," "Non-maleficence," and "Responsibility" consistently appearing as the most frequently cited (Jobin et al. 2019).

* The Hagendorff 2020c heatmap visually reinforces this, showing a high density of green cells for these top-tier principles.

* The Fjeld et al. 2020 chart provides a thematic categorization, showing how these principles are grouped and which sub-concepts are most emphasized within each theme. "Responsibility" is highlighted as a significant concept within "Accountability."

* The "basic AI virtues" appear to distill these frequently occurring and emphasized principles. "Justice" and "Responsibility" are named directly in the source analyses and reappear in the virtues list. "Care" is plausibly a broader concept encompassing "Non-maleficence" (from Jobin et al.) and beneficence, while "Honesty" could be a reinterpretation or higher-level abstraction of "Transparency" and "Trust."

### Interpretation

This diagram summarizes a synthesis across three reviews of existing AI ethical guidelines, showing how such a review can lead to a concise set of fundamental "basic AI virtues." The arrows indicate that the "basic AI virtues" are derived from the findings of the three referenced works.

The process suggests a move from a broad, detailed landscape of ethical principles (Hagendorff 2020c, Fjeld et al. 2020) and their quantitative prevalence (Jobin et al. 2019) to a more abstract, foundational set of virtues.

* **Justice** is directly carried over, reflecting its high prevalence and importance in all source analyses.

* **Responsibility** is also directly carried over, highlighted by both Jobin et al. (60/84 documents) and Fjeld et al. (prominent dot under Accountability).

* **Honesty** appears to be a synthesized virtue, likely encompassing aspects of "Transparency" (most frequent principle at 73/84 in Jobin et al.), "Trust" (26/84), and related concepts like "Explainability" and "Interpretability" from the Fjeld et al. chart. Honesty implies truthfulness and openness, which are core to transparency and building trust.

* **Care** is a broader virtue that likely integrates "Non-maleficence" (60/84 in Jobin et al., prominent in Fjeld et al. under Safety) and "Beneficence" (41/84), emphasizing the proactive and protective aspects of AI development and deployment.
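The mapping proposed in the bullets above can be written down as a small lookup structure, which makes it easy to see which principles from Jobin et al. it covers. The sketch below encodes only this description's tentative reading of the diagram; it is not a mapping published by the diagram's authors, and the variable and function names are hypothetical.

```python
# Tentative virtue -> source-principle mapping, as read from the interpretation above.
# This is an assumption about the diagram, not the authors' published mapping.
VIRTUE_SOURCES = {
    "Justice": ["Justice and fairness"],
    "Honesty": ["Transparency", "Trust"],
    "Responsibility": ["Responsibility"],
    "Care": ["Non-maleficence", "Beneficence"],
}

# Principles from Jobin et al. (2019), Table 3, in descending order of document count.
JOBIN_PRINCIPLES = [
    "Transparency", "Justice and fairness", "Non-maleficence", "Responsibility",
    "Privacy", "Beneficence", "Freedom and autonomy", "Trust",
    "Sustainability", "Dignity", "Solidarity",
]


def principle_to_virtue(sources):
    """Invert the virtue -> principles mapping into principle -> virtue."""
    index = {}
    for virtue, principles in sources.items():
        for principle in principles:
            index[principle] = virtue
    return index


def unassigned(index, principles):
    """Principles that the tentative mapping does not attach to any virtue."""
    return [p for p in principles if p not in index]


index = principle_to_virtue(VIRTUE_SOURCES)
for principle in JOBIN_PRINCIPLES:
    print(f"{principle:<22} -> {index.get(principle, '(not assigned)')}")
```

Under this reading, each of the four most frequently cited principles maps to a virtue, while Privacy, Freedom and autonomy, Sustainability, Dignity, and Solidarity are not assigned by the interpretation above.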

The diagram argues that, despite the complexity and multitude of ethical principles discussed in AI guidelines, a core set of virtues can be identified, providing a simpler yet robust framework for ethical AI development. Such a distillation is meant to aid practical application and to foster a common understanding of fundamental ethical expectations for AI systems. The visual flow from detailed analysis to concise virtues reflects a process of abstraction and prioritization in AI ethics research.
