## Diagram: Trustworthy AI Framework

### Overview

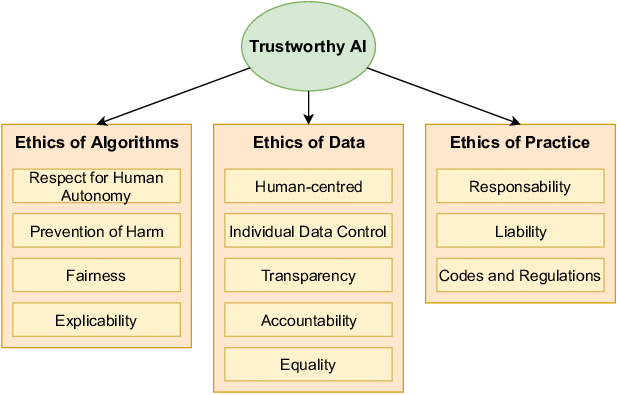

The image is a hierarchical concept diagram illustrating the components of "Trustworthy AI." It presents a top-down structure where a central concept branches into three primary ethical pillars, each containing specific principles or considerations. The diagram uses a clean, box-and-arrow layout with color-coding to distinguish levels of hierarchy.

### Components/Axes

The diagram has a clear hierarchical structure with three levels:

1. **Top-Level Concept (Central Node):**

   * **Label:** "Trustworthy AI"
   * **Shape & Color:** A light green oval.
   * **Position:** Top-center of the image.

2. **Primary Pillars (Second Level):**

   Three rectangular boxes with orange borders, positioned horizontally below the central oval. Each is connected to the central oval by a downward-pointing black arrow.

   * **Left Box Label:** "Ethics of Algorithms"
   * **Center Box Label:** "Ethics of Data"
   * **Right Box Label:** "Ethics of Practice"

3. **Sub-Principles (Third Level):**

   Within each primary pillar box, there are smaller, light-yellow rectangular boxes containing the specific principles. They are listed vertically.

### Detailed Analysis

**1. Ethics of Algorithms (Left Pillar)**

* Contains four sub-principles:
  * Respect for Human Autonomy
  * Prevention of Harm
  * Fairness
  * Explicability

**2. Ethics of Data (Center Pillar)**

* Contains five sub-principles:
  * Human-centred
  * Individual Data Control
  * Transparency
  * Accountability
  * Equality

**3. Ethics of Practice (Right Pillar)**

* Contains three sub-principles:
  * Responsability *(Note: This appears to be a spelling variant or typo for "Responsibility")*
  * Liability
  * Codes and Regulations

### Key Observations

* **Visual Balance:** The layout is symmetric around the central pillar, but the pillars are not equal in size: "Ethics of Data" in the center holds the most sub-principles (five), while "Ethics of Algorithms" on the left holds four and "Ethics of Practice" on the right holds three.

* **Visual Hierarchy:** The use of shape (oval vs. rectangles), color (green vs. orange/yellow), and connecting arrows clearly establishes "Trustworthy AI" as the overarching goal, with the three "Ethics of..." categories as its foundational components.

* **Content Scope:** The principles cover a broad spectrum, from high-level philosophical concepts (e.g., "Respect for Human Autonomy," "Fairness") to concrete legal and operational frameworks (e.g., "Liability," "Codes and Regulations").

* **Potential Anomaly:** The term "Responsability" in the "Ethics of Practice" pillar differs from the standard English spelling "Responsibility." It is most likely a typographical error, possibly influenced by the spelling of the word in languages such as French ("responsabilité") or Spanish ("responsabilidad").

### Interpretation

This diagram presents a comprehensive, structured framework for understanding what constitutes "Trustworthy AI." It argues that trustworthiness is not a single attribute but emerges from the interplay of three distinct yet interconnected ethical domains:

1. **Ethics of Algorithms:** Focuses on the design, behavior, and internal logic of the AI models themselves. Principles like "Explicability" and "Prevention of Harm" are directly tied to the technical system's operation.

2. **Ethics of Data:** Concerns the lifecycle of the information used to train and run AI systems. It emphasizes human agency ("Human-centred," "Individual Data Control"), openness and oversight ("Transparency," "Accountability"), and fairness ("Equality") in data handling.

3. **Ethics of Practice:** Addresses the real-world deployment, governance, and consequences of AI. This pillar moves from theory to implementation, covering legal frameworks ("Liability"), professional standards ("Codes and Regulations"), and operational duty ("Responsability").

The framework implies that a failure in any one pillar undermines the entire structure of trustworthy AI. For example, a fair algorithm (Ethics of Algorithms) trained on biased data (violating Ethics of Data) and deployed without clear responsibility or liability (violating Ethics of Practice) cannot be considered trustworthy. The diagram can serve as a checklist or map for developers, policymakers, and auditors to ensure that all critical ethical dimensions are considered throughout the AI lifecycle.