## Diagram: Neural Network Architecture with Vector Quantization

### Overview

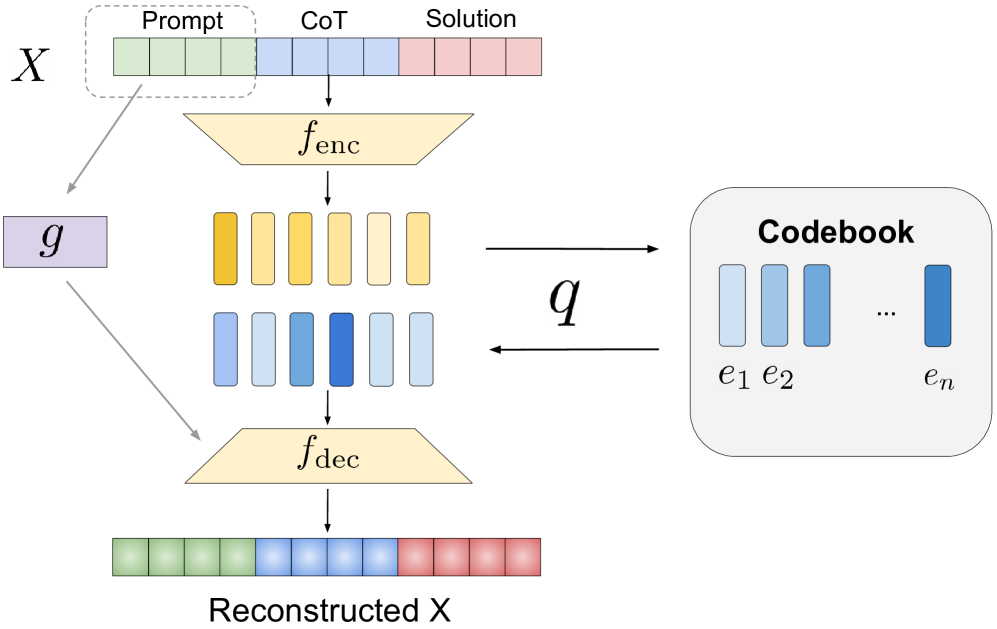

The image displays a technical diagram of a neural network architecture designed for sequence processing and reconstruction. It illustrates a flow from an input sequence `X` through encoding, quantization via a codebook, and decoding to produce a "Reconstructed X". The architecture incorporates a parallel processing path via function `g`.

### Components/Axes

The diagram is composed of several interconnected blocks and labels:

1. **Input Sequence (`X`)**: Located at the top-left. It is a segmented bar divided into three colored sections:

* **Prompt**: Green segments (leftmost).

* **CoT** (Chain-of-Thought): Blue segments (middle).

* **Solution**: Red segments (rightmost).

* A dashed box encloses the "Prompt" section, with an arrow pointing from it to a block labeled `g`.

2. **Encoder (`f_enc`)**: A yellow trapezoid (wider at top) positioned below the input sequence. It receives the full input sequence `X` as indicated by a downward arrow.

3. **Latent Representations**: Below the encoder are two rows of vertical bars representing encoded features:

* **Top Row**: Six yellow bars of varying shades.

* **Bottom Row**: Six blue bars of varying shades, with one bar (fourth from left) being a distinctly darker blue.

4. **Quantization (`q`)**: A bidirectional arrow labeled `q` connects the latent representations to the "Codebook". This indicates a quantization mapping process.

5. **Codebook**: A rounded rectangle on the right side. It contains:

* The title **"Codebook"**.

* A series of blue vertical bars labeled `e₁`, `e₂`, ..., `eₙ`, representing discrete code vectors or embeddings.

6. **Decoder (`f_dec`)**: A yellow trapezoid (wider at bottom) positioned below the latent representations. It receives the quantized latent features.

7. **Output Sequence ("Reconstructed X")**: A segmented bar at the bottom, mirroring the structure of the input `X`:

* Green segments (left).

* Blue segments (middle).

* Red segments (right).

* Labeled **"Reconstructed X"** below it.

8. **Parallel Function (`g`)**: A purple block on the left. It receives input from the "Prompt" section of `X` (via a gray arrow) and its output feeds into the decoder `f_dec` (via another gray arrow), bypassing the main encoder-quantization path.

### Detailed Analysis

The diagram depicts a specific data flow and transformation process:

* **Primary Encoding Path**: The entire input sequence `X` (Prompt + CoT + Solution) is processed by the encoder `f_enc` to produce continuous latent representations (the yellow and blue bars).

* **Quantization Process**: The continuous latent representations are mapped to discrete codes from the **Codebook** via the quantization function `q`. The bidirectional arrow suggests this involves finding the nearest codebook entry (`e₁` to `eₙ`) for each latent vector. The darker blue bar in the latent row likely represents a selected or quantized code.

* **Decoding and Reconstruction**: The quantized latent codes are fed into the decoder `f_dec`. The decoder also receives a direct signal from the input's "Prompt" section via function `g`. The decoder's output is the "Reconstructed X", which aims to replicate the original input's structure (Prompt, CoT, Solution).

* **Parallel Path (`g`)**: This path acts as a skip connection or auxiliary pathway. It gives the decoder access to the prompt (as transformed by `g`) without passing through the encoder or the codebook, potentially to preserve prompt details or stabilize training.
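The quantization step described above can be sketched in a few lines. This is a minimal illustration, assuming that `q` picks the nearest codebook entry by squared Euclidean distance; the diagram does not state the distance measure, and the function names `squared_distance` and `quantize` as well as all numeric values are hypothetical.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(latents, codebook):
    """Map each latent vector to its nearest codebook entry.

    Assumption: nearest means smallest squared Euclidean distance.
    Returns the chosen indices and the corresponding code vectors.
    """
    indices = [
        min(range(len(codebook)), key=lambda k: squared_distance(z, codebook[k]))
        for z in latents
    ]
    quantized = [codebook[k] for k in indices]
    return indices, quantized


# Hypothetical toy codebook e_1..e_3 and four encoder outputs (2-D for readability).
codebook = [[0.0, 0.0], [1.0, 1.0], [-1.0, 2.0]]
latents = [[0.1, -0.2], [0.9, 1.2], [-0.8, 1.7], [0.4, 0.4]]

indices, quantized = quantize(latents, codebook)
print(indices)    # [0, 1, 2, 0]
print(quantized)  # [[0.0, 0.0], [1.0, 1.0], [-1.0, 2.0], [0.0, 0.0]]
```

In this sketch the decoder would receive `quantized` (or just `indices`) rather than the continuous `latents`, which is what makes the representation discrete.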

### Key Observations

1. **Structured Input/Output**: The model explicitly handles sequences with a defined semantic structure (Prompt, Chain-of-Thought, Solution), suggesting it's designed for tasks like reasoning or step-by-step problem-solving.

2. **Vector Quantization (VQ) Core**: The central role of the **Codebook** and quantization `q` identifies this as a Vector Quantized (VQ) model, likely a VQ-VAE or similar, which learns discrete latent representations.

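3. **Dual Latent Representation**: The two rows of bars (yellow and blue) after encoding may represent different feature channels or a split in the latent space before quantization. Because the bottom row shares its blue color with the codebook entries, another plausible reading is that the yellow row shows the continuous encoder outputs and the blue row shows their quantized counterparts.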

4. **Mirrored Encoder/Decoder**: The encoder (`f_enc`) and decoder (`f_dec`) are depicted as trapezoids of opposite orientation, a common visual metaphor for compression (encoding) and reconstruction (decoding).

5. **Color Usage**: Colors mostly track data types: Green=Prompt, Blue=CoT, Red=Solution, Yellow=Encoder/Decoder blocks and the top row of latent bars. Blue is overloaded, since it also marks the bottom row of latent bars and the codebook entries.

### Interpretation

This diagram illustrates a **Vector-Quantized Encoder-Decoder architecture with a prompt-conditioned skip connection**.

* **Purpose**: The model is designed to learn a compressed, discrete representation (via the codebook) of structured sequences that involve a prompt, a reasoning chain (CoT), and a final solution. This is highly relevant for generative AI tasks requiring step-by-step reasoning, such as mathematical problem-solving or complex question answering.

* **Mechanism**: The encoder compresses the full sequence into a latent space. The quantization step restricts this representation to a finite set of codes (`e₁...eₙ`), which may make the latent space more compact and easier to manipulate; the diagram itself makes no efficiency claim. The decoder must then reconstruct the original sequence from these discrete codes.

* **Role of `g`**: The parallel function `g` acting on the prompt suggests a mechanism to prevent the loss of critical initial information during the compression-reconstruction cycle. It gives the decoder access to the original task specification (the prompt) outside the quantized path, which could be important for generating a coherent and correct solution. In effect, the decoder is conditioned on the prompt.

* **Significance**: This architecture combines discrete representation learning (VQ) with structured textual data. It suggests a model that can not only generate text but also represent the reasoning process in a compressed, discrete latent space. The reconstruction goal implies the model is trained in a self-supervised manner, as an autoencoder, to reproduce its input and learn useful representations in the process.
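The end-to-end flow read off the diagram can be written compactly as follows. This is a sketch rather than the authors' formulation: the symbols $z_t$, $k_t$, $T$, $X_{\text{prompt}}$, and $\hat{X}$ are introduced here for illustration, and the Euclidean nearest-neighbor rule for `q` is an assumption that the diagram does not confirm.

$$
\begin{aligned}
z_{1:T} &= f_{\text{enc}}(X), \\
q(z_t) &= e_{k_t}, \qquad k_t = \arg\min_{j \in \{1,\dots,n\}} \lVert z_t - e_j \rVert_2, \\
\hat{X} &= f_{\text{dec}}\big(q(z_1), \dots, q(z_T),\; g(X_{\text{prompt}})\big).
\end{aligned}
$$

Here $\hat{X}$ corresponds to "Reconstructed X", and the second argument of $f_{\text{dec}}$ is the prompt path that bypasses the encoder and the codebook.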