## Diagram: Sequence Processing Architecture with Codebook

### Overview

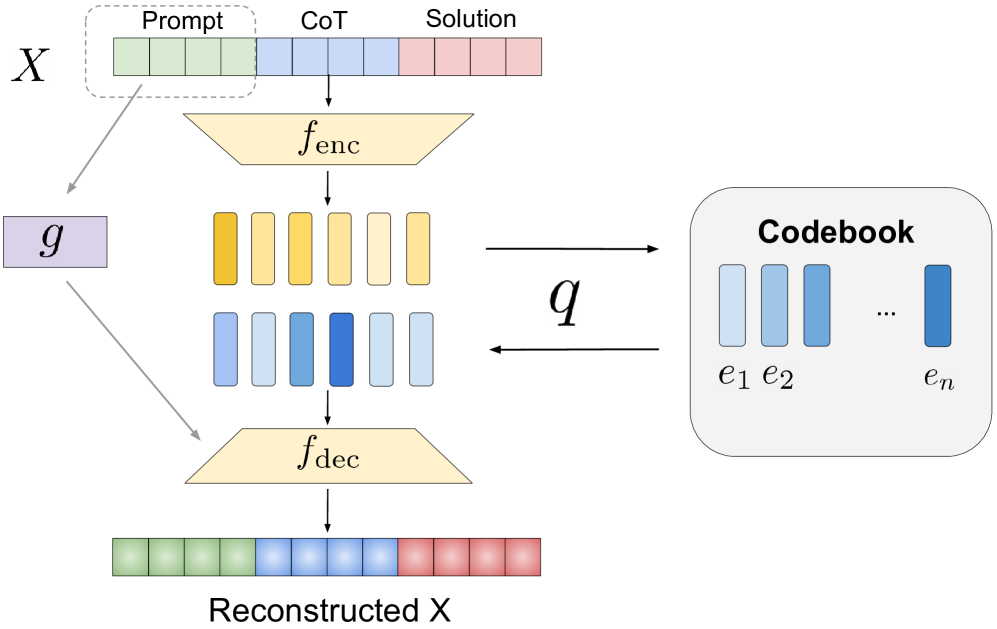

The diagram illustrates a sequence processing pipeline that combines encoding, decoding, and a codebook-based representation. The input sequence **X** consists of three segments: Prompt, CoT (chain of thought), and Solution. An encoder (**f_enc**) maps **X** to embeddings (**q**), and a decoder (**f_dec**) reconstructs **X** from them. A separate codebook panel shows the entries (**e₁, e₂, ..., eₙ**) associated with the encoder's output.

---

### Components/Axes

1. **Input Sequence (X)**
   - Divided into three colored blocks:
     - **Prompt** (green)
     - **CoT** (blue)
     - **Solution** (pink)

2. **Encoder (f_enc)**
   - Maps **X** to embeddings (**q**).
   - Drawn as two rows of colored blocks:
     - Top row: yellow and light yellow blocks. The diagram does not label them; they may represent attention weights or token importance.
     - Bottom row: blocks in a blue gradient, likely the embeddings **q**.

3. **Decoder (f_dec)**
   - Reconstructs **X** from embeddings (**q**).
   - Output matches the original **X** structure (green/blue/pink blocks).

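4. **Codebook**
   - Right-side panel labeled "Codebook" with vertical bars:
     - **e₁** (light blue)
     - **e₂** (medium blue)
     - **...**
     - **eₙ** (dark blue)
   - Represents the discrete embeddings used to express the output of **f_enc**. In codebook-based models these entries are typically learned jointly with the encoder rather than by the encoder alone.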
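
The four components above can be wired together in a few lines. The following is a minimal sketch, not the diagram's actual model: it assumes **X** is a list of numbers rather than tokens, that **f_enc** averages fixed-size chunks and snaps each average to the nearest entry of a scalar codebook, and that **q** holds the resulting codebook indices. `CODEBOOK`, `chunk`, and both function bodies are hypothetical.

```python
from typing import List, Sequence

# Hypothetical scalar codebook e_1..e_3; real codebooks hold learned vectors.
CODEBOOK: List[float] = [0.0, 0.5, 1.0]


def f_enc(x: Sequence[float], chunk: int = 2) -> List[int]:
    """Toy encoder: average each chunk, then return the nearest codebook index."""
    q = []
    for start in range(0, len(x), chunk):
        window = x[start:start + chunk]
        mean = sum(window) / len(window)
        q.append(min(range(len(CODEBOOK)), key=lambda k: abs(mean - CODEBOOK[k])))
    return q


def f_dec(q: Sequence[int], chunk: int = 2) -> List[float]:
    """Toy decoder: expand each code into `chunk` copies of its codebook value."""
    return [CODEBOOK[k] for k in q for _ in range(chunk)]


x = [0.0, 0.1, 0.9, 1.0, 0.4, 0.6]  # stand-in for the input sequence X
q = f_enc(x)       # discrete codes, one per chunk
x_hat = f_dec(q)   # lossy reconstruction with the same length as x
print(q, x_hat)
```

In this toy setup six input values are compressed into three codes, so `x_hat` only approximates `x`; a trained encoder and decoder would aim to make that gap small.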

---

### Detailed Analysis

- **Input Segmentation**: The original sequence **X** is partitioned into three distinct regions (Prompt, CoT, Solution), which suggests staged processing: a prompt, followed by intermediate chain-of-thought reasoning, followed by the final solution.

- **Encoder Functionality**:
  - **f_enc** maps **X** to a latent space (**q**), visualized as a blue gradient.
  - The top row of **f_enc** (yellow blocks) may represent intermediate attention mechanisms or token-level features.

- **Decoder Functionality**:
  - **f_dec** reconstructs **X** from **q**, maintaining the original segmentation.
  - The reconstruction is drawn with the same color-coded structure as the input, indicating that the decoder's target is the full original sequence.

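- **Codebook Structure**:
  - Entries (**e₁, e₂, ..., eₙ**) are shaded from light to dark blue. The shading may simply distinguish the entries, or it may indicate an ordering such as increasing complexity or frequency of use; the diagram does not say which.
  - The codebook acts as a discrete dictionary for the encoder’s output: each output is represented by one of the *n* entries, which is the basis of vector quantization and of compression schemes built on it.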
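
Using a codebook as a discrete dictionary usually comes down to a nearest-neighbor lookup. The sketch below assumes Euclidean distance and a hypothetical 2-D codebook with four entries; the diagram specifies neither the distance measure nor the embedding dimension, and `nearest_code` is an illustrative helper name.

```python
import math
from typing import List, Sequence, Tuple

Vector = Tuple[float, float]


def nearest_code(q: Sequence[float], codebook: Sequence[Vector]) -> int:
    """Return the index of the codebook entry closest to q (Euclidean distance)."""
    return min(range(len(codebook)), key=lambda k: math.dist(q, codebook[k]))


# Hypothetical codebook e_1..e_4; the values are illustrative only.
codebook: List[Vector] = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]

# Hypothetical continuous encoder outputs, one per latent position.
encoder_outputs: List[Vector] = [(0.1, -0.2), (0.9, 0.8), (0.2, 0.7)]

indices = [nearest_code(q, codebook) for q in encoder_outputs]
quantized = [codebook[k] for k in indices]
print(indices)  # [0, 3, 2]
```

Only the integer `indices` need to be stored or transmitted; the vectors in `quantized` can be recovered from the codebook at any time.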

---

### Key Observations

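1. **Reconstruction Fidelity**: The reconstructed **X** has the same segmentation as the original, implying that **f_dec** is meant to invert **f_enc**. The diagram shows this as the design goal; it does not report measured reconstruction accuracy.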

2. **Codebook Granularity**: The codebook’s progression from light to dark blue suggests a structured embedding space, potentially optimized for reconstruction accuracy.

3. **Attention/Token Importance**: The yellow blocks in **f_enc** may highlight tokens or regions critical to the encoding process.
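
One way to make the reconstruction-fidelity observation measurable is to compare the reconstruction with the input separately for each segment. This is a sketch only: the source reports no such metric, and `segment_accuracy`, the example tokens, and the segment spans are all hypothetical.

```python
from typing import Dict, List, Tuple


def segment_accuracy(x: List[str], x_hat: List[str],
                     spans: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Fraction of positions where x_hat matches x, reported per segment."""
    if len(x) != len(x_hat):
        raise ValueError("x and x_hat must have the same length")
    return {
        name: sum(a == b for a, b in zip(x[lo:hi], x_hat[lo:hi])) / (hi - lo)
        for name, (lo, hi) in spans.items()
    }


# Hypothetical token sequence split into the three segments of X.
prompt = ["What", "is", "2+3", "?"]
cot = ["2", "plus", "3", "equals", "5"]
solution = ["5"]
x = prompt + cot + solution
spans = {"prompt": (0, 4), "cot": (4, 9), "solution": (9, 10)}  # half-open ranges

# Hypothetical decoder output with one wrong CoT token.
x_hat = list(x)
x_hat[5] = "and"

acc = segment_accuracy(x, x_hat, spans)
print(acc)  # {'prompt': 1.0, 'cot': 0.8, 'solution': 1.0}
```

Reporting accuracy per segment shows whether errors concentrate in the CoT portion or in the final solution, which a single sequence-level score would hide.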

---

### Interpretation

This architecture resembles a **vector-quantized variational autoencoder (VQ-VAE)** or a **codebook-based transformer** used for sequence modeling. The explicit codebook implies discrete latent representations, which could improve interpretability or efficiency in tasks such as text generation or compression. The segmentation of **X** into Prompt, CoT, and Solution hints at a multi-stage reasoning process, in which the encoder captures contextual relationships and the decoder reconstructs the sequence with its segment structure intact. The codebook bridges continuous embeddings and discrete representations: the encoder's continuous outputs are expressed as entries drawn from a finite set.
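
If the model follows the standard vector-quantized autoencoder (VQ-VAE) recipe, which the diagram itself does not confirm, training would combine a reconstruction term with two terms that tie encoder outputs and codebook entries together. The following is a sketch under that assumption, where $q$ is the continuous encoder output at one latent position, $\mathrm{sg}[\cdot]$ is the stop-gradient operator, $\mathcal{L}_{\text{rec}}$ is a reconstruction loss, and $\beta$ is a commitment weight. All notation beyond **X**, **q**, **f_dec**, and the entries **e₁, ..., eₙ** is assumed here.

$$
k^{*} = \arg\min_{j \in \{1,\dots,n\}} \lVert q - e_j \rVert_2,
\qquad
\mathcal{L} = \mathcal{L}_{\text{rec}}\big(X,\, f_{\text{dec}}(e_{k^{*}})\big)
+ \lVert \mathrm{sg}[q] - e_{k^{*}} \rVert_2^2
+ \beta\, \lVert q - \mathrm{sg}[e_{k^{*}}] \rVert_2^2
$$

The second term moves the selected codebook entry toward the encoder output, and the third keeps the encoder output close to the entry it selected.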