
## Line Chart: Pass@k (%) Performance Comparison

### Overview

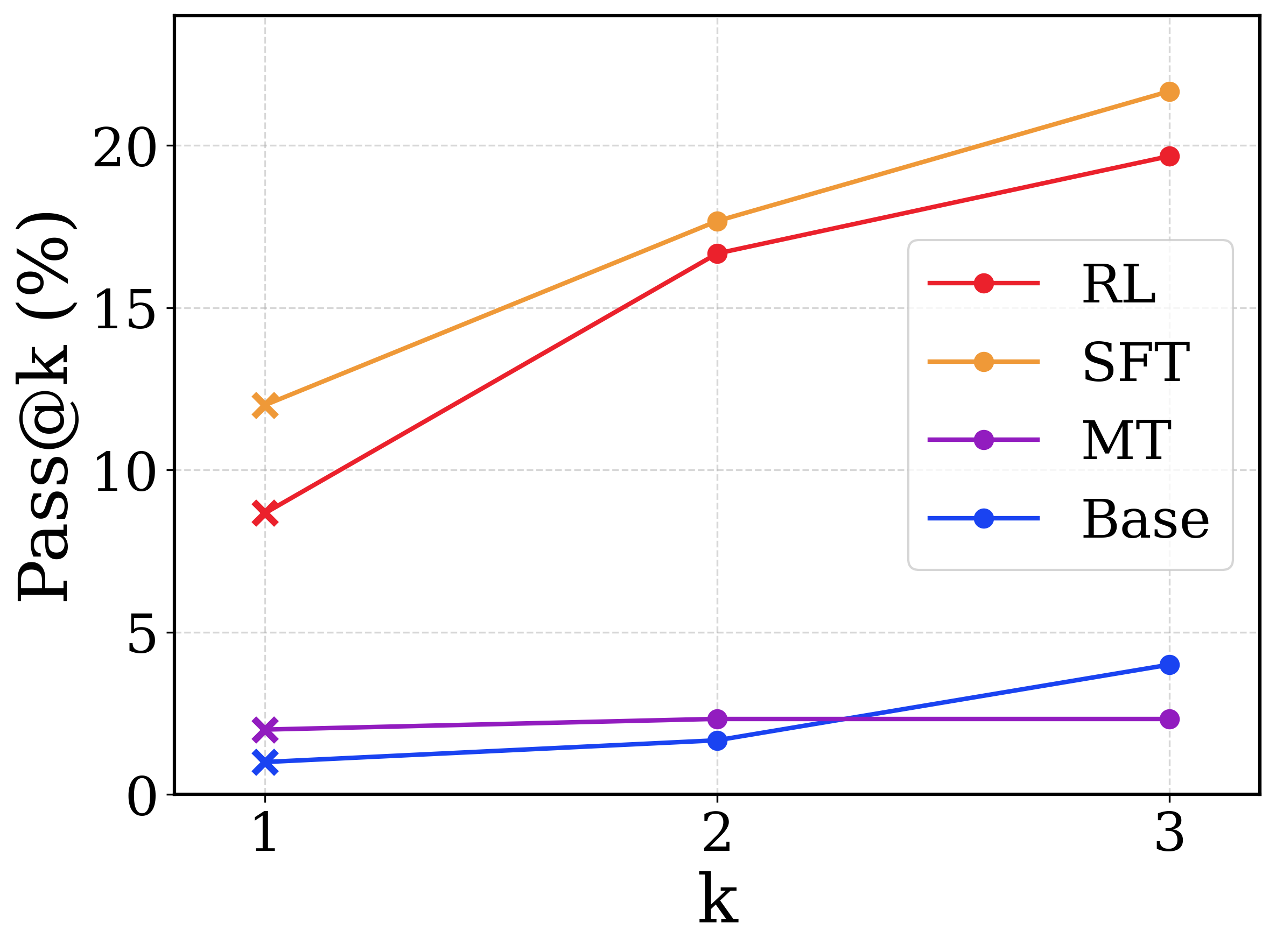

This line chart compares four models or methods (RL, SFT, MT, Base) at three values of `k` (1, 2, 3). The metric is "Pass@k (%)", which most likely denotes the percentage of problems for which at least one of `k` attempts or samples is correct. Pass@k rises with `k` for SFT, RL, and Base but stays nearly flat for MT, and the size of the gain differs widely across methods.
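The chart does not say how its Pass@k values were computed. As a point of reference, the sketch below shows the unbiased estimator commonly used in code-generation evaluation: draw `n` samples per problem, count the `c` correct ones, and compute the probability that a random size-`k` subset contains at least one correct sample. The function names and the sample counts are illustrative assumptions, not taken from the chart's source.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k for one problem from n samples, c of which are correct."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k incorrect samples: every size-k subset has a correct one.
        return 1.0
    # 1 minus the probability that all k drawn samples are incorrect.
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results, k: int) -> float:
    """Average pass@k over problems, in percent. results is a list of (n, c)."""
    return 100.0 * sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Hypothetical per-problem counts (n samples, c correct), for illustration only.
example_results = [(10, 3), (10, 0), (10, 10), (10, 1)]
for k in (1, 2, 3):
    print(f"pass@{k} = {mean_pass_at_k(example_results, k):.1f}%")
```

Under this estimator Pass@k can never decrease as `k` grows, which matches the non-decreasing shape of all four lines in the chart.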

### Components/Axes

* **X-Axis:** Labeled "k". It has three discrete, evenly spaced tick marks at values 1, 2, and 3.

* **Y-Axis:** Labeled "Pass@k (%)". The scale runs from 0 to just above 20, with major tick marks at 0, 5, 10, 15, and 20.

* **Legend:** Located in the center-right portion of the chart area. It contains four entries, each with a colored line and marker:

    * **RL:** Red line with circular markers.

    * **SFT:** Orange line with circular markers.

    * **MT:** Purple line with circular markers.

    * **Base:** Blue line with circular markers.

* **Grid:** A light gray, dashed grid is present in the background, aligned with the major y-axis ticks.

### Detailed Analysis

The chart plots four data series. Below is the extracted data for each series at k=1, 2, and 3. Values are approximate based on visual alignment with the y-axis.

**1. SFT (Orange Line)**

* **Trend:** Shows a strong, steady upward slope from k=1 to k=3.

* **Data Points:**

    * k=1: ~12.0%

    * k=2: ~17.5%

    * k=3: ~21.5%

**2. RL (Red Line)**

* **Trend:** Shows a strong upward slope, similar to SFT but starting from a lower point. The rise is markedly steeper between k=1 and k=2 (about 7.5 points) than between k=2 and k=3 (about 3.0 points).

* **Data Points:**

    * k=1: ~9.0%

    * k=2: ~16.5%

    * k=3: ~19.5%

**3. Base (Blue Line)**

* **Trend:** Shows a gentle upward slope. It starts very low and increases modestly with `k`.

* **Data Points:**

    * k=1: ~1.0%

    * k=2: ~1.8%

    * k=3: ~4.0%

**4. MT (Purple Line)**

* **Trend:** Nearly flat. Performance shows almost no change as `k` increases from 1 to 3.

* **Data Points:**

    * k=1: ~2.0%

    * k=2: ~2.2%

    * k=3: ~2.2%
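The extracted readings can be collected in one place and the slope descriptions checked numerically. The sketch below is a minimal illustration that uses only the approximate values listed above; the names `PASS_AT_K`, `step_gains`, and `GAINS` are introduced here for convenience.

```python
# Approximate values read off the chart (percent), indexed by k = 1, 2, 3.
PASS_AT_K = {
    "SFT": [12.0, 17.5, 21.5],
    "RL": [9.0, 16.5, 19.5],
    "Base": [1.0, 1.8, 4.0],
    "MT": [2.0, 2.2, 2.2],
}


def step_gains(values):
    """Return the percentage-point gain for each step k -> k+1."""
    return [round(b - a, 1) for a, b in zip(values, values[1:])]


GAINS = {name: step_gains(values) for name, values in PASS_AT_K.items()}

for name, gains in GAINS.items():
    print(f"{name:>4}: k=1->2 {gains[0]:+.1f} pts, k=2->3 {gains[1]:+.1f} pts")
```

Because the inputs are visual estimates, differences of a few tenths of a point (such as MT's gain) are within reading error.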

### Key Observations

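* **Performance Hierarchy:** SFT leads at every value of `k`, followed by RL. MT and Base trail far behind: MT is ahead of Base at k=1 and k=2, and Base is ahead of MT at k=3.

* **Greatest Improvement:** The RL method shows the largest absolute improvement, gaining about 10.5 points from k=1 to k=3 and more than doubling its Pass@1 score. In relative terms Base improves the most (roughly 4x, from ~1.0% to ~4.0%), though from a very low starting point.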

* **Stagnation:** The MT method's performance is effectively stagnant, showing negligible gain from increasing `k`.

* **Crossover:** The Base method, while starting the lowest, overtakes the MT method between k=2 and k=3.

* **Widening Gap:** The gap between the top two methods (SFT, RL) and the bottom two (MT, Base) is substantial and widens as `k` increases: RL leads the better of the bottom two by about 7 points at k=1 and by about 15.5 points at k=3.
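The rankings, gains, and gaps discussed in this list can be recomputed from the approximate readings. The sketch below is one way to do so; all names are illustrative, and the "gap" is defined here (as an assumption) as the weaker of SFT and RL minus the stronger of MT and Base.

```python
# Approximate values read off the chart (percent), indexed by k = 1, 2, 3.
PASS_AT_K = {
    "SFT": [12.0, 17.5, 21.5],
    "RL": [9.0, 16.5, 19.5],
    "Base": [1.0, 1.8, 4.0],
    "MT": [2.0, 2.2, 2.2],
}
KS = [1, 2, 3]

# Methods ordered from highest to lowest Pass@k at each k.
RANKINGS = {
    k: sorted(PASS_AT_K, key=lambda m: PASS_AT_K[m][i], reverse=True)
    for i, k in enumerate(KS)
}

# Change from k=1 to k=3, in percentage points and as a ratio.
ABS_GAIN = {m: round(v[-1] - v[0], 1) for m, v in PASS_AT_K.items()}
REL_GAIN = {m: round(v[-1] / v[0], 2) for m, v in PASS_AT_K.items()}

# Gap between the weaker top method and the stronger bottom method.
GAP = {
    k: round(
        min(PASS_AT_K["SFT"][i], PASS_AT_K["RL"][i])
        - max(PASS_AT_K["MT"][i], PASS_AT_K["Base"][i]),
        1,
    )
    for i, k in enumerate(KS)
}

for k in KS:
    print(f"k={k}: {' > '.join(RANKINGS[k])}, top-vs-bottom gap {GAP[k]} pts")
print("largest absolute gain:", max(ABS_GAIN, key=ABS_GAIN.get))
print("largest relative gain:", max(REL_GAIN, key=REL_GAIN.get))
```

With these readings, RL has the largest absolute gain and Base the largest ratio, so which method "improves most" depends on whether gains are measured in points or as a multiple of Pass@1.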

### Interpretation

This chart demonstrates the effectiveness of different training or sampling strategies (likely for a code generation or problem-solving task) when evaluated with the Pass@k metric.

* **SFT (Supervised Fine-Tuning) is the most effective strategy** shown, consistently achieving the highest pass rates. Its strong performance suggests that fine-tuning on high-quality demonstrations is highly beneficial.

* **RL (Reinforcement Learning) is also highly effective**, particularly as `k` increases. Its steep improvement curve indicates that RL-trained models benefit greatly from having multiple attempts, possibly because they can explore a more diverse solution space.

* **The Base model performs poorly at k=1 but shows some capacity to improve with more samples**, suggesting its initial generations are low quality but it has some latent capability that can be unlocked with repeated sampling.

* **The MT (likely "Multi-Task" or another baseline) model shows a critical failure mode**: its performance does not scale with `k`. This implies the model is either generating very similar, incorrect solutions each time or has a fundamental limitation that prevents it from benefiting from additional attempts.
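One way to make the "similar solutions each time" reading concrete is to compare each curve against two reference values. The sketch assumes, without confirmation from the chart, that the `k` attempts are independent samples, so a problem with per-sample success probability `p` has pass@k equal to `1 - (1 - p)^k`. Because that function is concave in `p`, a dataset with a fixed Pass@1 has its Pass@k bounded above by `1 - (1 - Pass@1)^k` (all problems equally hard) and below by Pass@1 itself (each problem either always or never solved). The helper names are illustrative.

```python
# Approximate values read off the chart (percent), indexed by k = 1, 2, 3.
PASS_AT_K = {
    "SFT": [12.0, 17.5, 21.5],
    "RL": [9.0, 16.5, 19.5],
    "Base": [1.0, 1.8, 4.0],
    "MT": [2.0, 2.2, 2.2],
}


def independent_ceiling(pass_at_1_pct: float, k: int) -> float:
    """Upper bound on pass@k (%) for independent samples with the given pass@1."""
    p = pass_at_1_pct / 100.0
    return 100.0 * (1.0 - (1.0 - p) ** k)


def bounds_table():
    """Return (method, k, floor, observed, ceiling) rows; floor is Pass@1."""
    rows = []
    for name, values in PASS_AT_K.items():
        floor = values[0]
        for k, observed in zip((1, 2, 3), values):
            rows.append((name, k, floor, observed, independent_ceiling(floor, k)))
    return rows


for name, k, floor, observed, ceiling in bounds_table():
    print(
        f"{name:>4} k={k}: floor {floor:5.2f}  "
        f"observed {observed:5.2f}  ceiling {ceiling:5.2f}"
    )
```

Under this assumption MT's ~2.2% at k=3 sits almost on its floor of ~2.0% and well below its ceiling of about 5.9%, which is what one would expect if the same small set of problems is solved on every attempt. The Base reading of ~4.0% at k=3 lies slightly above its ceiling of about 3.0%, which may reflect reading error on very small values or an evaluation protocol that is not independent sampling.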

The data suggest that, for tasks measured by Pass@k, SFT or RL training yields far higher pass rates than the Base or MT approaches, especially when the evaluation allows multiple attempts (k > 1). The widening gap at higher `k` values indicates that these training methods improve not only single-attempt accuracy but also the chance that at least one of a small number of attempts is correct.