# Technical Document Extraction

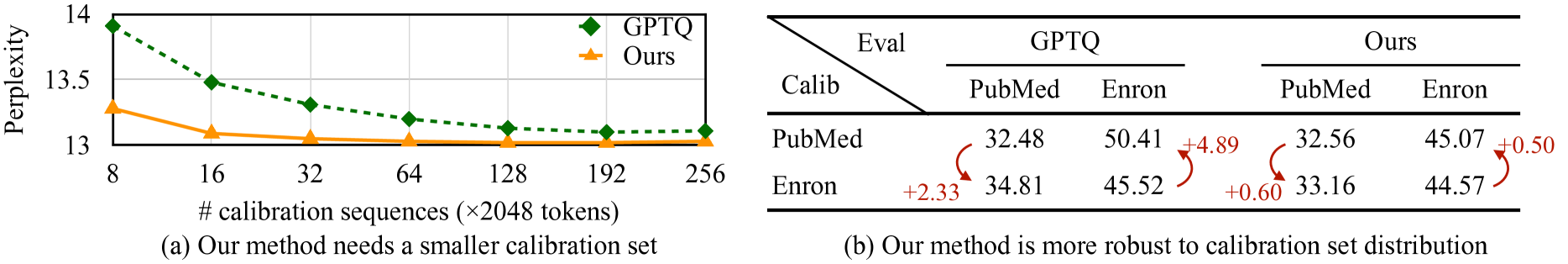

## Figure (a): Line Chart

### Title

- "Our method needs a smaller calibration set"

### Axes

- **X-axis**:

  - Label: `# calibration sequences (×2048 tokens)`

  - Values: 8, 16, 32, 64, 128, 192, 256

- **Y-axis**:

  - Label: `Perplexity`

  - Range: 13 to 14

### Legend

- **GPTQ**: Green diamonds (`◆`)

- **Ours**: Orange triangles (`▲`)

### Data Trends

- **GPTQ**:

  - Starts at 13.9 perplexity at 8 sequences

  - Decreases to ~13.1 by 256 sequences

- **Ours**:

  - Starts at 13.3 perplexity at 8 sequences

  - Decreases to ~13.1 by 256 sequences

- **Observation**: Perplexity decreases for both methods as the calibration set grows. "Ours" is clearly lower with small calibration sets (13.3 vs. 13.9 at 8 sequences), and the two methods converge to ~13.1 by 256 sequences.

---

## Figure (b): Table

### Title

- "Our method is more robust to calibration set distribution"

### Table Structure

| **Calib** | **Eval** | **GPTQ** | **Ours** |
|----------------|----------------|----------------|----------------|
| PubMed | PubMed | 32.48 | 32.56 |
| PubMed | Enron | 50.41 | 45.07 |
| Enron | PubMed | 34.81 | 33.16 |
| Enron | Enron | 45.52 | 44.57 |

### Arrows & Differences

- **GPTQ vs. Ours, PubMed calib, PubMed eval**: `+0.08` (32.48 → 32.56)

- **GPTQ, Enron eval, Enron calib → PubMed calib**: `+4.89` (45.52 → 50.41)

- **Ours, PubMed eval, PubMed calib → Enron calib**: `+0.60` (32.56 → 33.16)

- **Ours, Enron eval, Enron calib → PubMed calib**: `+0.50` (44.57 → 45.07)
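
The differences above can be checked with a few lines of arithmetic. The sketch below is illustrative only: it assumes each table row is a (calibration set, evaluation set) pair, with the first PubMed/Enron pair calibrated on PubMed and the second on Enron, which is the reading under which the `+4.89`, `+0.60`, and `+0.50` differences follow from the table values. The names `PERPLEXITY`, `calibration_gaps`, and `GAPS` are hypothetical and do not come from the source.

```python
# Perplexities transcribed from the table; inner keys are (calibration set, evaluation set).
# ASSUMPTION: the row-to-(calib, eval) mapping is inferred from the figure's differences.
PERPLEXITY = {
    "GPTQ": {
        ("PubMed", "PubMed"): 32.48,
        ("PubMed", "Enron"): 50.41,
        ("Enron", "PubMed"): 34.81,
        ("Enron", "Enron"): 45.52,
    },
    "Ours": {
        ("PubMed", "PubMed"): 32.56,
        ("PubMed", "Enron"): 45.07,
        ("Enron", "PubMed"): 33.16,
        ("Enron", "Enron"): 44.57,
    },
}


def calibration_gaps(table):
    """For each evaluation set, return perplexity with mismatched calibration
    minus perplexity with matched calibration (rounded to 2 decimals)."""
    eval_sets = sorted({eval_set for _, eval_set in table})
    gaps = {}
    for eval_set in eval_sets:
        matched = table[(eval_set, eval_set)]
        # With two datasets, exactly one calibration set differs from the evaluation set.
        mismatched = [
            ppl
            for (calib, ev), ppl in table.items()
            if ev == eval_set and calib != eval_set
        ][0]
        gaps[eval_set] = round(mismatched - matched, 2)
    return gaps


GAPS = {method: calibration_gaps(table) for method, table in PERPLEXITY.items()}

for method, gaps in GAPS.items():
    print(method, gaps)
```

Under this reading, a smaller gap means the method is less sensitive to which dataset was used for calibration.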

### Key Observations

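- "Ours" shows smaller perplexity gaps than GPTQ when the calibration set does not match the evaluation set.

- The largest gap is GPTQ's on Enron evaluation: perplexity rises by `+4.89` when calibrated on PubMed instead of Enron, versus `+0.50` for "Ours".

- "Ours" stays within `+0.60` perplexity of its matched-calibration result on both PubMed and Enron evaluation sets.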