
## Diagram: Recurrent Neural Network Unfolding

### Overview

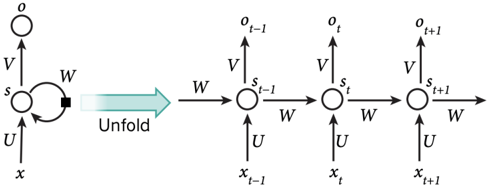

The image depicts the unfolding of a recurrent neural network (RNN) over time. It illustrates how a single RNN cell is expanded into a chain of cells, each representing a time step. The diagram shows the flow of information through the network, highlighting the inputs, outputs, and internal states at each time step.

### Components/Axes

The diagram consists of the following components:

* **RNN Cell (Left):** A single RNN cell with input 'x', hidden state 's', and output 'o'. The cell has connections labeled 'U', 'V', and 'W'.

* **Unfolding Arrow:** A teal arrow indicating the unfolding process. The text "Unfold" is written along the arrow.

* **Unfolded RNN Cells (Right):** A sequence of RNN cells, one per time step (t-1, t, t+1). The cells take inputs 'x<sub>t-1</sub>', 'x<sub>t</sub>', 'x<sub>t+1</sub>', carry hidden states 's<sub>t-1</sub>', 's<sub>t</sub>', 's<sub>t+1</sub>', and produce outputs 'o<sub>t-1</sub>', 'o<sub>t</sub>', 'o<sub>t+1</sub>', respectively. The connections within each cell are labeled 'U', 'V', and 'W'.

### Detailed Analysis / Content Details

The diagram illustrates the following relationships:

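* **Input (x):** Each time step receives its own input. The single input 'x' of the folded cell corresponds to the separate inputs 'x<sub>t-1</sub>', 'x<sub>t</sub>', 'x<sub>t+1</sub>' in the unfolded chain. Inputs are not passed from one cell to the next; only the hidden state is.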

* **Hidden State (s):** The hidden state 's' is updated at each time step from the current input (through 'U') and the previous hidden state (through 'W'), then passed to the next cell. Starting from an initial hidden state 's<sub>0</sub>', it evolves through 's<sub>t-1</sub>', 's<sub>t</sub>', 's<sub>t+1</sub>'.

* **Output (o):** The output 'o' is generated at each time step based on the hidden state. The outputs are 'o<sub>t-1</sub>', 'o<sub>t</sub>', 'o<sub>t+1</sub>'.

* **Weights (U, V, W):** The weights 'U', 'V', and 'W' are shared across all time steps. 'U' represents the input-to-hidden weight, 'V' represents the hidden-to-output weight, and 'W' represents the hidden-to-hidden weight.

The diagram does not provide specific numerical values for the weights or the states. It is a conceptual illustration of the RNN unfolding process.
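
The relationships above can be sketched as a short NumPy loop, with one iteration per unfolded cell. This is a minimal illustration, not something taken from the diagram: the `tanh` nonlinearity, the linear output, the zero initial state, the layer sizes, and the function name `rnn_forward` are all assumptions chosen for the example.

```python
import numpy as np

def rnn_forward(xs, U, W, V, s0=None):
    """Unrolled forward pass: one loop iteration per time step."""
    # Assumption: the initial hidden state is all zeros unless given.
    s = np.zeros(W.shape[0]) if s0 is None else s0
    states, outputs = [], []
    for x_t in xs:
        # U: input-to-hidden, W: hidden-to-hidden (the recurrent connection).
        # tanh is an assumed nonlinearity; the diagram does not name one.
        s = np.tanh(U @ x_t + W @ s)
        # V: hidden-to-output (kept linear here for simplicity).
        o_t = V @ s
        states.append(s)
        outputs.append(o_t)
    return np.stack(states), np.stack(outputs)

# Hypothetical sizes and random weights, for illustration only.
rng = np.random.default_rng(0)
input_dim, hidden_dim, output_dim = 3, 4, 2
U = rng.normal(size=(hidden_dim, input_dim))
W = rng.normal(size=(hidden_dim, hidden_dim))
V = rng.normal(size=(output_dim, hidden_dim))

xs = rng.normal(size=(5, input_dim))  # a sequence of 5 time steps
states, outputs = rnn_forward(xs, U, W, V)
print(states.shape, outputs.shape)  # (5, 4) (5, 2)
```

The same three arrays `U`, `W`, and `V` are reused in every iteration, which mirrors the repeated labels on each unfolded cell.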

### Key Observations

* The unfolding process demonstrates how RNNs can process sequential data by maintaining a hidden state that captures information about past inputs.

* Because the weights 'U', 'V', and 'W' are shared across all time steps, the number of parameters does not grow with sequence length, and the same network can be applied to sequences of different lengths.

* The diagram highlights the temporal dependency in RNNs, where the output at each time step depends on both the current input and the previous hidden state.
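
The observations above can be checked with a small experiment. The sketch below assumes a `tanh` hidden update, a linear output, and a zero initial state (none of which the diagram specifies); the helper `run_rnn` and all sizes are hypothetical.

```python
import numpy as np

def run_rnn(xs, U, W, V):
    """Apply the same U, W, V at every time step and collect the outputs."""
    s = np.zeros(W.shape[0])  # assumed zero initial hidden state
    outputs = []
    for x_t in xs:
        s = np.tanh(U @ x_t + W @ s)  # assumed tanh update
        outputs.append(V @ s)
    return np.stack(outputs)

rng = np.random.default_rng(1)
U = rng.normal(size=(4, 3))
W = rng.normal(size=(4, 4))
V = rng.normal(size=(2, 4))

# Parameter count is fixed by the layer sizes, not by sequence length.
n_params = U.size + W.size + V.size  # 12 + 16 + 8 = 36

# The same weights handle sequences of different lengths.
short_seq = rng.normal(size=(3, 3))
long_seq = rng.normal(size=(7, 3))
out_short = run_rnn(short_seq, U, W, V)  # shape (3, 2)
out_long = run_rnn(long_seq, U, W, V)    # shape (7, 2)

# Temporal dependency: perturbing the input at step 4 leaves earlier
# outputs untouched and changes the outputs from step 4 onward.
perturbed = long_seq.copy()
perturbed[4] += 1.0
out_pert = run_rnn(perturbed, U, W, V)
unchanged_before = np.allclose(out_long[:4], out_pert[:4])
changed_after = not np.allclose(out_long[4:], out_pert[4:])
```

Information flows only forward through the hidden state, so a change at one step can influence later outputs but never earlier ones.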

### Interpretation

The diagram illustrates a fundamental concept in recurrent neural networks: processing sequential data by maintaining a hidden state that acts as the network's memory of past inputs. Unfolding makes explicit how this state evolves over time, which is what lets the network capture temporal dependencies in the data. This matters for tasks such as natural language processing, time series analysis, and speech recognition, where the order of information is important. The core mechanism is the recurrent connection, represented by the weight 'W', which carries information from one time step to the next.