## Diagram: Recurrent Neural Network Unfolding

### Overview

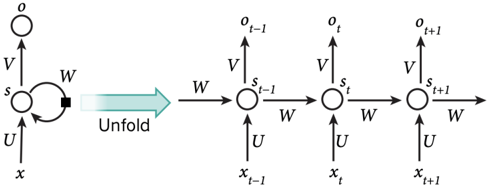

The image illustrates the unfolding of a recurrent neural network (RNN) over time. It shows a single RNN cell and its equivalent representation as a sequence of cells, each processing an input at a specific time step.

### Components/Axes

* **Left Side:** A single RNN cell with the following components:
    * `x`: Input to the cell.
    * `s`: State of the cell.
    * `o`: Output of the cell.
    * `U`: Weight matrix on the input-to-state connection.
    * `V`: Weight matrix on the state-to-output connection.
    * `W`: Weight matrix on the recurrent (state-to-state) connection.

* **Middle:** An arrow labeled "Unfold" indicating the transformation from the single cell to the unfolded network.

* **Right Side:** The unfolded RNN, represented as a sequence of cells, each corresponding to a time step:
    * `x_{t-1}`: Input at time `t-1`.
    * `s_{t-1}`: State at time `t-1`.
    * `o_{t-1}`: Output at time `t-1`.
    * `x_t`: Input at time `t`.
    * `s_t`: State at time `t`.
    * `o_t`: Output at time `t`.
    * `x_{t+1}`: Input at time `t+1`.
    * `s_{t+1}`: State at time `t+1`.
    * `o_{t+1}`: Output at time `t+1`.
    * `U`: Weight matrix for the input (applied at each time step).
    * `V`: Weight matrix for the output (applied at each time step).
    * `W`: Weight matrix for the recurrent connection (applied at each time step).
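
Read as equations, the labels listed here suggest the usual recurrence. This is a sketch rather than something the diagram states: `f` and `g` are assumed placeholders for whatever nonlinearities the network uses, and bias terms are omitted.

$$
s_t = f(U x_t + W s_{t-1}), \qquad o_t = g(V s_t)
$$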

### Detailed Analysis

* **Single RNN Cell:** The left side shows a single cell receiving input `x` and producing output `o`. The state `s` is updated from the current input and the previous state, which is fed back through the recurrent connection (the loop). `U` weights the input-to-state connection, `W` the recurrent state-to-state connection, and `V` the state-to-output connection.

* **Unfolding:** The "Unfold" arrow indicates that the recurrent connection (the loop) is unrolled over time. This means that the single cell is conceptually replicated for each time step in the sequence.

* **Unfolded RNN:** The right side shows the unfolded network. Each cell represents a time step. The input `x_t` at each time step is processed, and the state `s_t` is updated based on the input and the previous state `s_{t-1}`. The output `o_t` is produced at each time step. The weight matrices `U`, `V`, and `W` are shared across all time steps.
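
The unfolded computation can be sketched as a plain loop, one iteration per cell on the right side of the diagram. This is a minimal illustration, not the diagram's specification: the `tanh` nonlinearity, the linear output, the zero initial state, the function name `rnn_forward`, and all dimensions are assumptions.

```python
import numpy as np

def rnn_forward(xs, U, W, V, s0):
    """Run the unfolded network: one loop iteration per time step."""
    s = s0
    states, outputs = [], []
    for x_t in xs:                      # each iteration is one cell of the unfolded diagram
        s = np.tanh(U @ x_t + W @ s)    # s_t from x_t and s_{t-1}; tanh is an assumption
        o_t = V @ s                     # o_t read out from s_t; linear output is an assumption
        states.append(s)
        outputs.append(o_t)
    return np.stack(states), np.stack(outputs)

rng = np.random.default_rng(0)
input_dim, state_dim, output_dim = 3, 4, 2    # hypothetical sizes
U = rng.normal(size=(state_dim, input_dim))   # input-to-state
W = rng.normal(size=(state_dim, state_dim))   # state-to-state (recurrent)
V = rng.normal(size=(output_dim, state_dim))  # state-to-output

xs = rng.normal(size=(5, input_dim))          # a sequence of 5 time steps
states, outputs = rnn_forward(xs, U, W, V, s0=np.zeros(state_dim))
print(states.shape, outputs.shape)            # one state and one output per time step
```

Note that `U`, `W`, and `V` are created once and reused inside the loop, which is the code-level counterpart of the weight sharing shown in the diagram.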

### Key Observations

* The diagram illustrates the core concept of RNNs: processing sequential data by maintaining a state that is updated over time.

* Unfolding the RNN makes the flow of information over time explicit.

* The weight matrices `U`, `V`, and `W` are shared across time steps, a key feature of RNNs: the same transformation is applied at every step, so the number of parameters does not grow with sequence length.
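
The effect of weight sharing can be illustrated with a small sketch: one fixed set of matrices processes a short and a long sequence without any change in parameter count. The helper `run`, the `tanh` nonlinearity, the zero initial state, and the sizes are all assumptions made for illustration.

```python
import numpy as np

def run(xs, U, W, V):
    """Apply the same U, W, V at every time step of xs."""
    s = np.zeros(W.shape[0])            # assumed zero initial state
    outputs = []
    for x_t in xs:
        s = np.tanh(U @ x_t + W @ s)    # tanh is an assumption
        outputs.append(V @ s)
    return np.stack(outputs)

rng = np.random.default_rng(0)
U = rng.normal(size=(4, 3))             # hypothetical sizes: 3 inputs, 4 state units
W = rng.normal(size=(4, 4))
V = rng.normal(size=(2, 4))             # 2 outputs

# The parameter count depends only on the layer sizes, not on sequence length.
n_params = U.size + W.size + V.size     # 12 + 16 + 8

short = run(rng.normal(size=(3, 3)), U, W, V)    # 3 time steps
long = run(rng.normal(size=(50, 3)), U, W, V)    # 50 time steps
print(n_params, short.shape, long.shape)
```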

### Interpretation

The diagram demonstrates how a recurrent neural network processes sequential data. Unfolding makes explicit how the state is updated at each time step, which is what lets the network "remember" information from previous time steps. This memory matters for tasks such as natural language processing, where the meaning of a word depends on its context; in the network shown, that context is the words already processed. Because the same weight matrices are applied at every time step, the network learns patterns that hold regardless of position in the sequence, and the same parameters can process sequences of varying lengths.
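
The "memory" described here can be probed with a small experiment: perturb one input and see which states change. This is a sketch under assumptions not stated by the diagram (a `tanh` nonlinearity, a zero initial state, randomly drawn weights, and the hypothetical helper `all_states`).

```python
import numpy as np

def all_states(xs, U, W):
    """Return the state after every time step."""
    s = np.zeros(W.shape[0])            # assumed zero initial state
    states = []
    for x_t in xs:
        s = np.tanh(U @ x_t + W @ s)    # tanh is an assumption
        states.append(s)
    return np.stack(states)

rng = np.random.default_rng(1)
U = 0.5 * rng.normal(size=(4, 3))       # hypothetical sizes and scales
W = 0.5 * rng.normal(size=(4, 4))
xs = rng.normal(size=(6, 3))

base = all_states(xs, U, W)

changed_first = xs.copy()
changed_first[0] += 1.0                 # perturb the first input only
changed_last = xs.copy()
changed_last[-1] += 1.0                 # perturb the last input only

# A change at the first step propagates through the state to the final step.
early_change_reaches_end = np.abs(base[-1] - all_states(changed_first, U, W)[-1]).max() > 0

# A change at the last step cannot affect any earlier state.
late_change_leaves_past = np.array_equal(base[:-1], all_states(changed_last, U, W)[:-1])

print(early_change_reaches_end, late_change_leaves_past)
```

How strongly an early input still influences a late state depends on the weights and the nonlinearity; the sketch only shows that the influence flows forward through `s` and never backward.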