
## Diagram: Recurrent Neural Network (RNN) Unfolding

### Overview

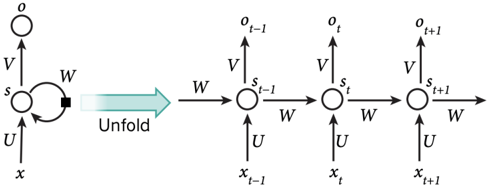

The diagram illustrates the basic architecture of a Recurrent Neural Network (RNN) and shows how the network is "unfolded" (or "unrolled") through time, which is central to understanding how RNNs process sequential data. It has two parts: a compact, recurrent representation on the left and its equivalent unfolded version over discrete time steps on the right.

### Components/Axes

The diagram contains no traditional chart axes or legends. Its components are nodes (circles), directed edges (arrows), and textual labels representing variables and weight matrices.

**Left Side (Compact Form):**

* **Nodes:**

* `x`: Input node at the bottom.

* `s`: Hidden state node in the center.

* `o`: Output node at the top.

* **Weight Matrices (labeled on arrows):**

* `U`: Weight matrix connecting input `x` to hidden state `s`.

* `V`: Weight matrix connecting hidden state `s` to output `o`.

* `W`: Recurrent weight matrix connecting the hidden state `s` back to itself (indicated by a dashed, looping arrow).

* **Label:** The word "Unfold" is placed above a large, light-blue arrow pointing from the compact form to the unfolded form.

**Right Side (Unfolded Form):**

* **Time Steps:** The network is shown across three sequential time steps, labeled with subscripts: `t-1`, `t`, and `t+1`.

* **Nodes (per time step):**

* **Inputs (Bottom Row):** `x_{t-1}`, `x_t`, `x_{t+1}`.

* **Hidden States (Middle Row):** `s_{t-1}`, `s_t`, `s_{t+1}`.

* **Outputs (Top Row):** `o_{t-1}`, `o_t`, `o_{t+1}`.

* **Weight Matrices (labeled on arrows):**

* `U`: Connects each input `x_t` to its corresponding hidden state `s_t`.

* `V`: Connects each hidden state `s_t` to its corresponding output `o_t`.

* `W`: Connects the hidden state from the previous time step (`s_{t-1}`) to the hidden state of the current time step (`s_t`). This is the unfolded representation of the recurrent connection.
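The unfolded form described above can be sketched as a short forward pass. This is a minimal illustration, not something the diagram specifies: the layer sizes, the `tanh` activation, the linear readout, the zero initial state, and the helper name `rnn_step` are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes chosen only for illustration.
input_dim, hidden_dim, output_dim = 4, 3, 2

# One set of weights, reused at every time step.
U = rng.normal(size=(hidden_dim, input_dim))   # input  -> hidden
W = rng.normal(size=(hidden_dim, hidden_dim))  # hidden -> hidden (recurrent)
V = rng.normal(size=(output_dim, hidden_dim))  # hidden -> output


def rnn_step(x_t, s_prev):
    """One column of the unfolded diagram: (x_t, s_{t-1}) -> (s_t, o_t)."""
    s_t = np.tanh(U @ x_t + W @ s_prev)  # tanh is an assumed activation
    o_t = V @ s_t                        # linear readout, also an assumption
    return s_t, o_t


# Three inputs standing in for x_{t-1}, x_t, x_{t+1}.
xs = rng.normal(size=(3, input_dim))
s = np.zeros(hidden_dim)  # assumed initial state before the first step

states, outputs = [], []
for x_t in xs:
    s, o = rnn_step(x_t, s)  # the new state feeds the next step via W
    states.append(s)
    outputs.append(o)

states = np.stack(states)    # shape (3, hidden_dim)
outputs = np.stack(outputs)  # shape (3, output_dim)
```

Each loop iteration corresponds to one column of the unfolded diagram, and the variable `s` carried between iterations plays the role of the horizontal `W` arrows.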

### Detailed Analysis

The diagram explicitly maps the components and information flow.

1. **Spatial Grounding & Flow:**

* The flow is strictly left-to-right for the temporal sequence and bottom-to-top for processing within a single time step.

* In the unfolded form, information flows from the input `x_t`, through the hidden state `s_t` (which also receives information from the previous state `s_{t-1}`), to produce the output `o_t`.

* The weight matrices `U`, `V`, and `W` are **shared** across all time steps. This is a critical feature of RNNs, indicated by the same labels (`U`, `V`, `W`) being used on the arrows for every time step.

2. **Component Isolation:**

* **Header/Label:** The word "Unfold" and the large arrow define the diagram's purpose.

* **Main Diagram (Left):** Shows the abstract, recurrent definition of the network cell.

* **Main Diagram (Right):** Shows the concrete, computational graph over time, making the temporal dependencies explicit. The dashed arrow in the compact form becomes a solid, forward-pointing arrow (`W`) from `s_{t-1}` to `s_t` in the unfolded form.
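To make the equivalence of the two forms and the weight sharing concrete, the sketch below computes the same three outputs twice: once with a single cell applied in a loop (the compact form) and once written out step by step (the unfolded form). The sizes, the `tanh` activation, and the names `cell`, `run_compact`, and `s_init` are assumptions for illustration only.

```python
import numpy as np

rng = np.random.default_rng(1)

# Shared parameters (hypothetical sizes: 4 inputs, 3 hidden units, 2 outputs).
U = rng.normal(size=(3, 4))
W = rng.normal(size=(3, 3))
V = rng.normal(size=(2, 3))


def cell(x, s_prev):
    """The recurrent cell: next hidden state from input and previous state."""
    return np.tanh(U @ x + W @ s_prev)  # tanh is an assumed activation


def run_compact(xs, s0):
    """Compact form: the same cell applied repeatedly in a loop."""
    s, outs = s0, []
    for x in xs:
        s = cell(x, s)
        outs.append(V @ s)
    return np.stack(outs)


x_prev, x_cur, x_next = rng.normal(size=(3, 4))
s_init = np.zeros(3)  # assumed state before the first step

# Unfolded form: each time step written out explicitly.
s_tm1 = cell(x_prev, s_init)
s_t = cell(x_cur, s_tm1)
s_tp1 = cell(x_next, s_t)
unfolded = np.stack([V @ s_tm1, V @ s_t, V @ s_tp1])

compact = run_compact([x_prev, x_cur, x_next], s_init)
same = np.allclose(compact, unfolded)

# The parameter count depends only on the layer sizes, not on sequence length.
n_params = U.size + W.size + V.size
```

Because `U`, `V`, and `W` are shared, `run_compact` accepts a sequence of any length without adding parameters; only the depth of the unfolded graph grows.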

### Key Observations

* The diagram follows the standard depiction of RNN unfolding used in machine learning textbooks and tutorials.

* The compact form draws the recurrent connection (`W`) as a dashed line, marking it as a self-loop that acts across time steps rather than within a single step.

* The unfolding process clarifies that the hidden state `s_t` is a function of both the current input `x_t` and the previous hidden state `s_{t-1}`.

* There are no numerical values or data trends; this is a purely conceptual and structural diagram.

### Interpretation

This diagram is a foundational explanatory tool for understanding sequential modeling with neural networks.

* **What it Demonstrates:** It visually answers the question: "How does an RNN remember past information?" The answer is through the hidden state `s`, which is passed from one time step to the next via the weight matrix `W`. The unfolding makes the "memory" aspect explicit by showing `s_{t-1}` influencing `s_t`.

* **Relationships:** The diagram establishes the core computational relationships:

1. The current output `o_t` depends on the current hidden state `s_t`.

2. The current hidden state `s_t` depends on the current input `x_t` **and** the previous hidden state `s_{t-1}`.

3. The parameters (`U`, `V`, `W`) are shared across time steps, so the same values are applied at every step; this allows the network to generalize patterns across different positions in a sequence.

* **Underlying Concept:** The "Unfold" operation is not a physical process but a conceptual one, used for analysis (e.g., understanding backpropagation through time, or BPTT) and for implementing the network in software. The diagram bridges the abstract mathematical recurrence (`s_t = f(s_{t-1}, x_t)`) with a concrete computational graph that can be differentiated and trained.
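As a rough illustration of why the unfolded graph matters for training, the sketch below runs backpropagation through time by hand on a three-step network and compares the result with a finite-difference estimate. Everything specific here is an assumption made for the example: the `tanh` activation, the squared-output loss, the layer sizes, and the function names `forward`, `bptt_grad_W`, and `numeric_grad_W`.

```python
import numpy as np

rng = np.random.default_rng(2)

# Hypothetical small network: 4 inputs, 3 hidden units, 2 outputs, 3 steps.
U = rng.normal(size=(3, 4)) * 0.5
W = rng.normal(size=(3, 3)) * 0.5
V = rng.normal(size=(2, 3)) * 0.5
xs = rng.normal(size=(3, 4))
s0 = np.zeros(3)  # assumed initial hidden state


def forward(W_):
    """Unfolded forward pass; returns a toy loss and all hidden states."""
    states, loss = [s0], 0.0
    for x in xs:
        s = np.tanh(U @ x + W_ @ states[-1])
        states.append(s)
        o = V @ s
        loss += 0.5 * np.sum(o ** 2)  # assumed loss: 0.5 * ||o_t||^2 per step
    return loss, states


def bptt_grad_W(W_):
    """Gradient of the loss w.r.t. W, walking the unfolded graph backwards."""
    _, states = forward(W_)
    dW = np.zeros_like(W_)
    ds_next = np.zeros(3)  # gradient arriving from the following time step
    for t in range(len(xs), 0, -1):
        s_t, s_prev = states[t], states[t - 1]
        ds = V.T @ (V @ s_t) + ds_next  # from o_t and from s_{t+1}
        da = ds * (1.0 - s_t ** 2)      # back through tanh
        dW += np.outer(da, s_prev)      # shared W: contributions add up
        ds_next = W_.T @ da             # pass gradient back to s_{t-1}
    return dW


def numeric_grad_W(W_, eps=1e-6):
    """Central finite differences, used only as a sanity check."""
    g = np.zeros_like(W_)
    for i in range(W_.shape[0]):
        for j in range(W_.shape[1]):
            Wp, Wm = W_.copy(), W_.copy()
            Wp[i, j] += eps
            Wm[i, j] -= eps
            g[i, j] = (forward(Wp)[0] - forward(Wm)[0]) / (2 * eps)
    return g


analytic = bptt_grad_W(W)
numeric = numeric_grad_W(W)
max_abs_diff = np.max(np.abs(analytic - numeric))
```

The backward loop visits the unfolded time steps in reverse, and because `W` is shared, its gradient is the sum of the contributions from every step. In practice an automatic differentiation library would compute this from the unfolded graph directly.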