## Diagram: Memory Architecture Comparison

### Overview

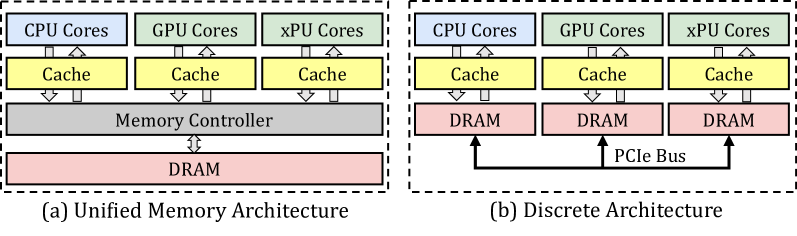

The image compares two memory architectures: **(a) Unified Memory Architecture** and **(b) Discrete Architecture**. Each diagram shows how the processing units (CPU, GPU, and xPU cores) reach DRAM through their caches, and which interconnect, if any, links the memories.

### Components

- **Labels**:

  - **Processing Units**: CPU Cores (blue), GPU Cores (green), xPU Cores (yellow).

  - **Memory Hierarchy**: Cache (yellow), Memory Controller (gray), DRAM (pink).

  - **Interconnect**: PCIe Bus (black).

- **Spatial Grounding**:

  - **Unified Architecture (a)**:

    - CPU/GPU/xPU cores at the top, connected to shared caches.

    - Caches feed into a single Memory Controller.

    - Memory Controller connects to a shared DRAM.

  - **Discrete Architecture (b)**:

    - CPU/GPU/xPU cores at the top, each with dedicated caches.

    - Caches connect directly to individual DRAM modules.

    - DRAM modules linked via a PCIe Bus.

### Detailed Analysis

- **Unified Architecture (a)**:

  - Shared resources: All cores share a single Memory Controller and DRAM.

  - Hierarchical flow: Data moves from cores → caches → Memory Controller → DRAM.

- **Discrete Architecture (b)**:

  - Dedicated resources: Each core type has its own cache and DRAM.

  - Interconnect: The PCIe Bus carries data between the per-core-type DRAM modules.

  - Hierarchical flow: Data moves from cores → caches → DRAM, and continues over the PCIe Bus only when it must reach another core type's DRAM.
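
The two flows described above can be sketched as small directed graphs. This is only an illustration of the structure as described here: the node names (`shared_cache`, `gpu_dram`, `pcie_bus`, and so on) are hypothetical labels, not names taken from the diagram, and the edges assume exactly the connections listed in this section.

```python
from collections import deque

# Hypothetical node names; edges follow the connections listed in this section.
UNIFIED = {
    "cpu_cores": ["shared_cache"],
    "gpu_cores": ["shared_cache"],
    "xpu_cores": ["shared_cache"],
    "shared_cache": ["memory_controller"],
    "memory_controller": ["shared_dram"],
    "shared_dram": [],
}

DISCRETE = {
    "cpu_cores": ["cpu_cache"],
    "gpu_cores": ["gpu_cache"],
    "xpu_cores": ["xpu_cache"],
    "cpu_cache": ["cpu_dram"],
    "gpu_cache": ["gpu_dram"],
    "xpu_cache": ["xpu_dram"],
    "cpu_dram": ["pcie_bus"],
    "gpu_dram": ["pcie_bus"],
    "xpu_dram": ["pcie_bus"],
    "pcie_bus": ["cpu_dram", "gpu_dram", "xpu_dram"],
}


def shortest_path(graph, start, goal):
    """Breadth-first search; returns the node list from start to goal, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


# GPU cores reaching the memory that holds CPU-produced data in each design.
unified_path = shortest_path(UNIFIED, "gpu_cores", "shared_dram")
discrete_path = shortest_path(DISCRETE, "gpu_cores", "cpu_dram")

print(" -> ".join(unified_path))
print(" -> ".join(discrete_path))
```

In this sketch the unified path ends at the shared DRAM after passing the Memory Controller, while the discrete path has to leave the GPU's own DRAM and cross `pcie_bus` before it reaches the CPU's DRAM.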

### Key Observations

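1. **Unified Architecture**:

   - A single memory hierarchy with one point of control, the Memory Controller.

   - Traffic from every core type converges on the Memory Controller and shared DRAM, which makes them a potential bottleneck.

2. **Discrete Architecture**:

   - Each core type accesses its own DRAM, so the core types do not contend with one another for memory bandwidth.

   - Data that must move between DRAM modules crosses the PCIe Bus, which adds latency.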
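
To make the two costs named above concrete, here is a deliberately simplified back-of-the-envelope model. Everything in it is an assumption for illustration: the function names are hypothetical, the even split of shared bandwidth ignores real memory-controller arbitration, and the bandwidth figures are placeholders rather than values from the diagram (which contains no numerical data).

```python
def unified_share_gbps(dram_gbps, active_agents):
    """Per-agent bandwidth if the active core types split one DRAM evenly.

    Idealized assumption: real controllers do not arbitrate this simply.
    """
    return dram_gbps / active_agents


def pcie_copy_seconds(num_bytes, pcie_gbps, latency_s=0.0):
    """Time to move a buffer between two DRAM modules over the bus.

    pcie_gbps is in gigabytes per second; latency_s is a fixed per-transfer cost.
    """
    return latency_s + num_bytes / (pcie_gbps * 1e9)


# Placeholder figures, chosen only to keep the arithmetic easy to follow.
SHARED_DRAM_GBPS = 100.0
PCIE_GBPS = 25.0
BUFFER_BYTES = 1_000_000_000  # 1 GB

per_agent = unified_share_gbps(SHARED_DRAM_GBPS, active_agents=3)
copy_time = pcie_copy_seconds(BUFFER_BYTES, PCIE_GBPS)

print(f"unified: {per_agent:.1f} GB/s per core type when all three are active")
print(f"discrete: {copy_time:.3f} s to copy the buffer across the bus")
```

With these placeholder numbers, three concurrently active core types each see about a third of the shared bandwidth, while the discrete design pays 0.04 s every time the 1 GB buffer has to cross the bus. Which cost dominates depends on how often data is actually shared between core types.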

### Interpretation

- **Unified Architecture** prioritizes resource sharing, which may improve efficiency for workloads with uniform memory access patterns but risks contention during high concurrency.

- **Discrete Architecture** isolates memory resources per core type, which reduces contention and may help the system scale, at the cost of added complexity and of PCIe latency whenever data moves between memories.

- The diagrams emphasize the trade-off between centralized and decentralized memory management, a choice that matters when optimizing performance in heterogeneous computing systems.

*Note: No numerical data or trends are present; the focus is on structural and hierarchical relationships.*