## Heatmap: Classification Accuracies

### Overview

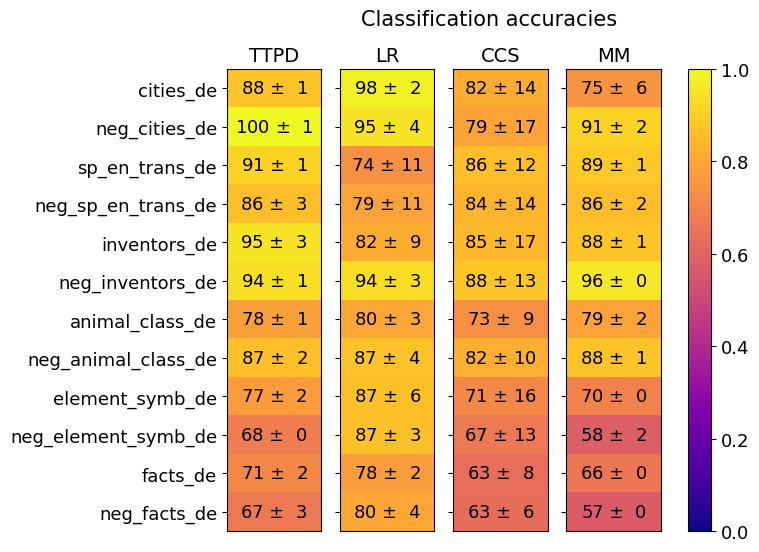

The image is a heatmap titled "Classification accuracies" showing the accuracy (mean ± standard deviation) of four classification methods (TTPD, LR, CCS, MM) on twelve datasets. Each cell is colored by its mean accuracy, with a color bar legend on the right side of the chart.

### Components/Axes

* **Title:** "Classification accuracies" (centered at the top).

* **Y-axis (Left):** Lists 12 dataset names. From top to bottom:
  1. `cities_de`
  2. `neg_cities_de`
  3. `sp_en_trans_de`
  4. `neg_sp_en_trans_de`
  5. `inventors_de`
  6. `neg_inventors_de`
  7. `animal_class_de`
  8. `neg_animal_class_de`
  9. `element_symb_de`
  10. `neg_element_symb_de`
  11. `facts_de`
  12. `neg_facts_de`

* **X-axis (Top):** Lists 4 method names. From left to right:
  1. `TTPD`
  2. `LR`
  3. `CCS`
  4. `MM`

* **Legend (Right):** A vertical color bar labeled from `0.0` (bottom, dark purple) to `1.0` (top, bright yellow). The gradient transitions from purple through red and orange to yellow, indicating increasing accuracy.

* **Data Cells:** A 12 × 4 grid. Each cell contains a value in the format `XX ± Y`: the mean accuracy in percent and its standard deviation. The cell's background color encodes the mean accuracy on the legend's 0.0–1.0 scale (the percentage divided by 100).
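To make the cell format concrete, here is a minimal Python sketch of a parser for a single cell string. It assumes each cell is the plain text `mean ± std` in percent and that a cell's position on the color bar is simply the mean divided by 100; the names `Cell`, `parse_cell`, and `color_position` are hypothetical and do not come from the figure or its plotting code.

```python
import re
from typing import NamedTuple

# Matches cells such as "88 ± 1" or "100 ± 1"; surrounding spaces are optional.
CELL_PATTERN = re.compile(r"^\s*(\d+(?:\.\d+)?)\s*±\s*(\d+(?:\.\d+)?)\s*$")


class Cell(NamedTuple):
    mean_pct: float  # mean accuracy, in percent
    std_pct: float   # standard deviation, in percent

    @property
    def color_position(self) -> float:
        # Assumption: the 0.0-1.0 color bar is the percentage divided by 100.
        return self.mean_pct / 100.0


def parse_cell(text: str) -> Cell:
    """Parse one 'mean ± std' cell string into a Cell."""
    match = CELL_PATTERN.match(text)
    if match is None:
        raise ValueError(f"not a 'mean ± std' cell: {text!r}")
    return Cell(float(match.group(1)), float(match.group(2)))


print(parse_cell("88 ± 1"))  # Cell(mean_pct=88.0, std_pct=1.0)
```

A `NamedTuple` keeps each parsed cell immutable and readable while still unpacking like a plain `(mean, std)` pair.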

### Detailed Analysis

The table below lists the value in each cell, by dataset (row) and method (column). Values are percentages; the color names in parentheses are approximate readings of each cell's shade.

| Dataset (Y-axis) | TTPD (Column 1) | LR (Column 2) | CCS (Column 3) | MM (Column 4) |
| :--- | :--- | :--- | :--- | :--- |
| **cities_de** | 88 ± 1 (Yellow) | 98 ± 2 (Bright Yellow) | 82 ± 14 (Orange) | 75 ± 6 (Orange-Red) |
| **neg_cities_de** | 100 ± 1 (Bright Yellow) | 95 ± 4 (Yellow) | 79 ± 17 (Orange) | 91 ± 2 (Yellow) |
| **sp_en_trans_de** | 91 ± 1 (Yellow) | 74 ± 11 (Orange) | 86 ± 12 (Orange-Yellow) | 89 ± 1 (Yellow) |
| **neg_sp_en_trans_de** | 86 ± 3 (Orange-Yellow) | 79 ± 11 (Orange) | 84 ± 14 (Orange) | 86 ± 2 (Orange-Yellow) |
| **inventors_de** | 95 ± 3 (Yellow) | 82 ± 9 (Orange) | 85 ± 17 (Orange) | 88 ± 1 (Yellow) |
| **neg_inventors_de** | 94 ± 1 (Yellow) | 94 ± 3 (Yellow) | 88 ± 13 (Orange-Yellow) | 96 ± 0 (Bright Yellow) |
| **animal_class_de** | 78 ± 1 (Orange) | 80 ± 3 (Orange) | 73 ± 9 (Orange) | 79 ± 2 (Orange) |
| **neg_animal_class_de** | 87 ± 2 (Orange-Yellow) | 87 ± 4 (Orange-Yellow) | 82 ± 10 (Orange) | 88 ± 1 (Yellow) |
| **element_symb_de** | 77 ± 2 (Orange) | 87 ± 6 (Orange-Yellow) | 71 ± 16 (Orange-Red) | 70 ± 0 (Orange-Red) |
| **neg_element_symb_de** | 68 ± 0 (Orange-Red) | 87 ± 3 (Orange-Yellow) | 67 ± 13 (Red) | 58 ± 2 (Red-Purple) |
| **facts_de** | 71 ± 2 (Orange-Red) | 78 ± 2 (Orange) | 63 ± 8 (Red) | 66 ± 0 (Red) |
| **neg_facts_de** | 67 ± 3 (Red) | 80 ± 4 (Orange) | 63 ± 6 (Red) | 57 ± 0 (Red-Purple) |
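The per-method claims in the observations can be checked directly against this table. The sketch below is a hypothetical helper, not the figure authors' code: it hard-codes the transcribed `(mean, std)` pairs, weights every dataset equally, and computes each method's mean accuracy, mean standard deviation, and accuracy range, plus which method has the largest standard deviation in each row.

```python
from statistics import mean

METHODS = ("TTPD", "LR", "CCS", "MM")

# (mean, std) pairs in percent, transcribed from the table; one tuple per
# dataset, in the column order TTPD, LR, CCS, MM.
TABLE = {
    "cities_de":           ((88, 1), (98, 2), (82, 14), (75, 6)),
    "neg_cities_de":       ((100, 1), (95, 4), (79, 17), (91, 2)),
    "sp_en_trans_de":      ((91, 1), (74, 11), (86, 12), (89, 1)),
    "neg_sp_en_trans_de":  ((86, 3), (79, 11), (84, 14), (86, 2)),
    "inventors_de":        ((95, 3), (82, 9), (85, 17), (88, 1)),
    "neg_inventors_de":    ((94, 1), (94, 3), (88, 13), (96, 0)),
    "animal_class_de":     ((78, 1), (80, 3), (73, 9), (79, 2)),
    "neg_animal_class_de": ((87, 2), (87, 4), (82, 10), (88, 1)),
    "element_symb_de":     ((77, 2), (87, 6), (71, 16), (70, 0)),
    "neg_element_symb_de": ((68, 0), (87, 3), (67, 13), (58, 2)),
    "facts_de":            ((71, 2), (78, 2), (63, 8), (66, 0)),
    "neg_facts_de":        ((67, 3), (80, 4), (63, 6), (57, 0)),
}


def method_summary(table):
    """Per-method unweighted mean accuracy, mean std, and min/max accuracy."""
    summary = {}
    for col, method in enumerate(METHODS):
        accs = [row[col][0] for row in table.values()]
        stds = [row[col][1] for row in table.values()]
        summary[method] = {
            "mean_acc": mean(accs),
            "mean_std": mean(stds),
            "min_acc": min(accs),
            "max_acc": max(accs),
        }
    return summary


def noisiest_method_per_row(table):
    """Map each dataset to the method with the largest std (first column wins ties)."""
    result = {}
    for name, row in table.items():
        col = max(range(len(METHODS)), key=lambda c: row[c][1])
        result[name] = METHODS[col]
    return result
```

On the transcribed values, the mean accuracies come out at roughly 85.1 (LR), 83.5 (TTPD), 78.6 (MM), and 76.9 (CCS); the mean standard deviations at roughly 1.4 (MM), 1.7 (TTPD), 5.2 (LR), and 12.4 (CCS); and CCS has the largest standard deviation in every row. An unweighted mean is a simplification, since the figure gives no dataset sizes to weight by.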

### Key Observations

1. **Method Performance:**
   * **LR** (presumably logistic regression) has the highest mean accuracy across the twelve datasets and the top score on six of them, including `cities_de` (98 ± 2), `animal_class_de` (80 ± 3), and all four of the bottom rows. Its lowest score is 74 ± 11 on `sp_en_trans_de`, and its standard deviations (up to 11) are larger than those of TTPD and MM.
   * **TTPD** has the highest score on three datasets (`neg_cities_de` 100 ± 1, `inventors_de` 95 ± 3, `sp_en_trans_de` 91 ± 1), ties MM on `neg_sp_en_trans_de` (86), and never exceeds a standard deviation of 3. Its accuracy falls to 67–77 on the last four datasets (`element_symb_de` to `neg_facts_de`).
   * **MM** varies widely across datasets, while its standard deviations stay at 6 or below. It excels on some datasets (96 ± 0 on `neg_inventors_de`, the best score in that row; 91 ± 2 on `neg_cities_de`) but has the two lowest scores in the table: 58 ± 2 on `neg_element_symb_de` and 57 ± 0 on `neg_facts_de`.
   * **CCS** has the lowest mean accuracy and the largest standard deviation in every row (6 to 17 points), indicating the least consistent results. Its scores range from 63 to 88.

2. **Dataset Difficulty:**
   * All twelve datasets carry the `_de` suffix (likely German-language versions of the tasks), so the figure alone cannot show whether the German versions are harder than others. Within the figure, the bottom four rows (`element_symb_de`, `neg_element_symb_de`, `facts_de`, `neg_facts_de`) contain the lowest scores for TTPD, CCS, and MM, while LR stays between 78 and 87 there.
   * The `neg_` prefixed datasets (possibly negated or adversarial versions) are not uniformly harder. For example, `neg_cities_de` and `neg_inventors_de` have very high accuracies, while `neg_element_symb_de` and `neg_facts_de` are among the hardest.

3. **Uncertainty (Standard Deviation):**
   * The standard deviations vary greatly. Some cells have very low uncertainty (e.g., `neg_inventors_de`/MM: 96 ± 0, `neg_element_symb_de`/TTPD: 68 ± 0), suggesting highly consistent results.
   * Others have very high uncertainty (e.g., `cities_de`/CCS: 82 ± 14, `inventors_de`/CCS: 85 ± 17), meaning the method's accuracy varied considerably across repetitions; the figure does not state whether the ± is taken over random seeds, runs, or data splits.
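The dataset-level observations can be checked in the same spirit. This is another hypothetical sketch that uses only the transcribed mean accuracies: it ranks datasets by their unweighted mean over the four methods and computes, for each `neg_` dataset, the difference from its non-negated counterpart. Averaging across methods is an assumption of this sketch, not a metric reported in the figure.

```python
from statistics import mean

# Mean accuracies (percent) transcribed from the table; columns: TTPD, LR, CCS, MM.
ACC = {
    "cities_de":           (88, 98, 82, 75),
    "neg_cities_de":       (100, 95, 79, 91),
    "sp_en_trans_de":      (91, 74, 86, 89),
    "neg_sp_en_trans_de":  (86, 79, 84, 86),
    "inventors_de":        (95, 82, 85, 88),
    "neg_inventors_de":    (94, 94, 88, 96),
    "animal_class_de":     (78, 80, 73, 79),
    "neg_animal_class_de": (87, 87, 82, 88),
    "element_symb_de":     (77, 87, 71, 70),
    "neg_element_symb_de": (68, 87, 67, 58),
    "facts_de":            (71, 78, 63, 66),
    "neg_facts_de":        (67, 80, 63, 57),
}


def rank_by_difficulty(acc):
    """Dataset names sorted from hardest to easiest by unweighted mean over methods."""
    return sorted(acc, key=lambda name: mean(acc[name]))


def negation_gaps(acc):
    """Row mean of each neg_ dataset minus the row mean of its base dataset."""
    prefix = "neg_"
    return {
        name: mean(acc[name]) - mean(acc[name[len(prefix):]])
        for name in acc
        if name.startswith(prefix)
    }


print(rank_by_difficulty(ACC)[:4])
# ['neg_facts_de', 'facts_de', 'neg_element_symb_de', 'element_symb_de']
```

On the transcribed values, the four bottom rows are also the four lowest row means (66.75 to 76.25), with `animal_class_de` (77.5) close behind, and the negation gaps have mixed signs, from -6.25 for `neg_element_symb_de` to +8.5 for `neg_animal_class_de`.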

### Interpretation

This heatmap provides a comparative analysis of four classification techniques on a suite of tasks, likely related to natural language processing or knowledge probing, given dataset names like `cities`, `inventors`, `animal_class`, and `element_symb`. The `neg_` prefix suggests tests on negated or counterfactual versions of these concepts.

The data suggest that **LR is the most accurate method overall** on this set of tasks: it has the highest mean accuracy and stays at 74 or above on every dataset, although its run-to-run standard deviations are larger than those of TTPD and MM. **TTPD is a strong and very consistent performer** but drops noticeably on what appear to be more specialized knowledge tasks (element symbols, facts). The lower and more variable results of **CCS** might indicate that it is less suited to these classification problems or requires different tuning. **MM is the least predictable across tasks**: it is consistent from run to run, but its accuracy ranges from 96 on `neg_inventors_de` to 57 on `neg_facts_de`, so its performance on a new task is hard to anticipate.

The drop for TTPD, CCS, and MM on the bottom four datasets suggests that these tasks (`element_symb_de`, `facts_de`, and their negations) are harder, although LR's comparatively steady scores there (78–87) show that the difficulty depends on the method. This could be due to the nature of the knowledge required (scientific symbols, abstract facts), greater ambiguity, or a more challenging data distribution. The high standard deviations for CCS on many tasks further highlight its instability compared to the more consistent LR and TTPD.