EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

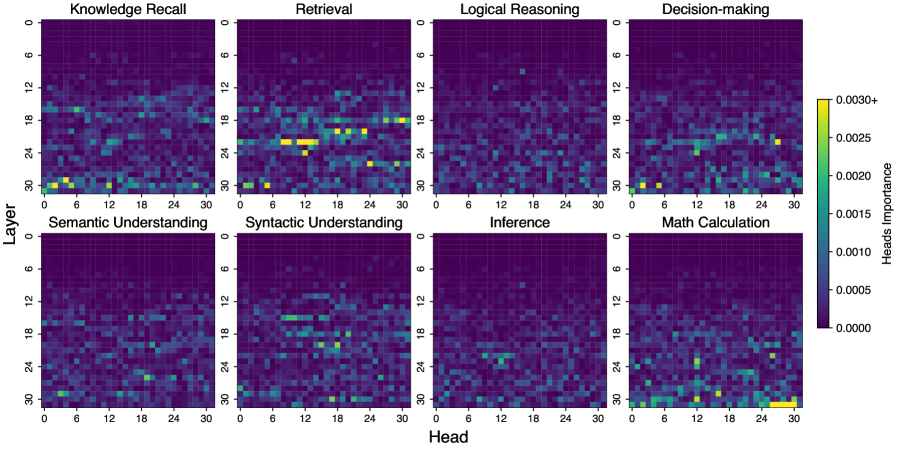

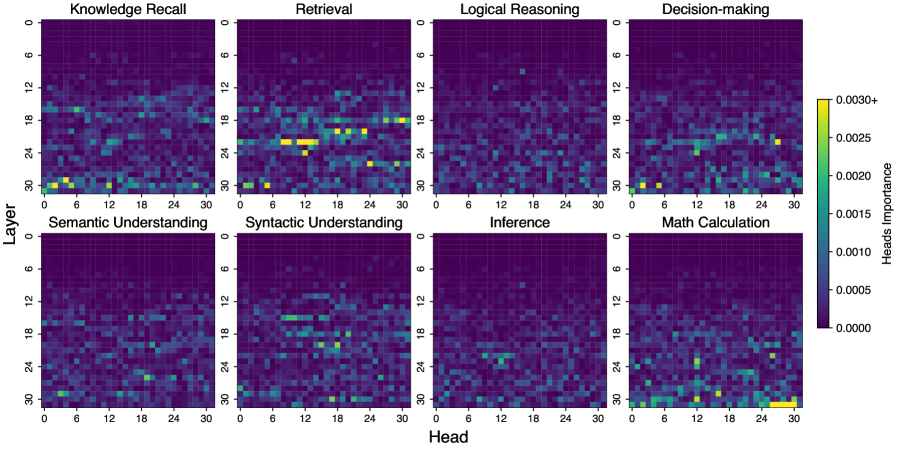

## Heatmap Grid: Head Importance Across Cognitive Tasks

### Overview

The image shows a grid of eight heatmaps arranged in two rows of four. Each heatmap visualizes "Heads Importance" (most likely per-head attention importance scores) across the layers and heads of a neural network model for one cognitive task. The grid makes it possible to compare which heads the model relies on for different kinds of reasoning and understanding.

### Components/Axes

* **Grid Structure:** 2 rows x 4 columns of individual heatmaps.

* **Individual Heatmap Axes:**

    * **Y-axis (Vertical):** Labeled "Layer". Scale runs from 0 at the top to 30 at the bottom, with major ticks at 0, 6, 12, 18, 24, 30.

    * **X-axis (Horizontal):** Labeled "Head". Scale runs from 0 on the left to 30 on the right, with major ticks at 0, 6, 12, 18, 24, 30.

* **Color Bar (Legend):** Located on the far right of the entire grid.

    * **Title:** "Heads Importance".

    * **Scale:** A vertical gradient from dark purple (bottom) to bright yellow (top).

    * **Values:** Labeled ticks at 0.0000, 0.0005, 0.0010, 0.0015, 0.0020, 0.0025, and "0.0030+" at the top.

* **Heatmap Titles (Top Row, Left to Right):**

    1. Knowledge Recall

    2. Retrieval

    3. Logical Reasoning

    4. Decision-making

* **Heatmap Titles (Bottom Row, Left to Right):**

    5. Semantic Understanding

    6. Syntactic Understanding

    7. Inference

    8. Math Calculation

### Detailed Analysis

Each heatmap is read here as a 31x31 grid (layers 0-30, heads 0-30), based on the axis tick labels; the exact dimensions cannot be confirmed from the ticks alone. The color of each cell represents the importance score of one head at one layer for the given task.
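To make the layout concrete, the sketch below models one heatmap as a nested list indexed as `grid[layer][head]` and shows how a color scale capped at "0.0030+" would saturate larger scores. The 31x31 size follows the description above. The names `make_grid`, `clip_for_colorbar`, and `CAP`, and the example score, are illustrative assumptions, not values read from the figure.

```python
# Hypothetical sketch: one task's heatmap as a layer-by-head grid.
N_LAYERS = 31   # rows, layer 0 drawn at the top (per the description)
N_HEADS = 31    # columns, head 0 drawn at the left
CAP = 0.0030    # top tick of the color bar, labeled "0.0030+"


def make_grid(fill=0.0):
    """Return an N_LAYERS x N_HEADS grid of importance scores."""
    return [[fill for _ in range(N_HEADS)] for _ in range(N_LAYERS)]


def clip_for_colorbar(score, cap=CAP):
    """Map a raw score to the displayed value: anything above cap saturates."""
    return max(0.0, min(score, cap))


grid = make_grid()
grid[19][8] = 0.0042          # made-up score for layer 19, head 8
shown = clip_for_colorbar(grid[19][8])
print(shown)                  # 0.003
```

A consequence of the capped scale is that the brightest yellow cells may differ from one another by more than their identical color suggests.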

**1. Knowledge Recall:**

* **Trend:** Diffuse, low-to-moderate importance across most of the grid. No single, dominant cluster.

* **Notable Patterns:** Slightly elevated importance (teal/green) appears scattered in the middle layers (approx. 12-24) across various heads. A few isolated brighter spots (yellow-green) are visible in the deeper layers (24-30, near the bottom of the plot), particularly around heads 0-6.

**2. Retrieval:**

* **Trend:** Shows the most distinct and localized clusters of high importance.

* **Notable Patterns:** A prominent, bright yellow cluster (high importance, >0.0025) is located in the middle layers, approximately layers 18-21, spanning heads 6-12. Another significant cluster of high importance (yellow-green) appears in layers 24-27, heads 18-24. The rest of the map is predominantly dark purple (low importance).

**3. Logical Reasoning:**

* **Trend:** Very diffuse and low importance overall. The darkest heatmap of the set.

* **Notable Patterns:** Almost the entire grid is dark purple (0.0000-0.0005). A very faint, scattered pattern of slightly higher importance (dark blue) is barely visible in the middle layers (12-24).

**4. Decision-making:**

* **Trend:** Moderately diffuse, with some concentration in the middle-to-deep layers.

* **Notable Patterns:** A band of slightly elevated importance (blue-green) runs horizontally across the middle layers (approx. 15-24). A few brighter spots (green-yellow) are present in the deeper layers (24-30), especially around heads 0-6 and 24-30.

**5. Semantic Understanding:**

* **Trend:** Diffuse, similar to Knowledge Recall but with a slightly more defined horizontal band.

* **Notable Patterns:** A consistent, faint horizontal band of moderate importance (blue-green) is visible across most heads in the middle layers (approx. 15-21). The deeper layers (24-30) show scattered low-to-moderate importance.

**6. Syntactic Understanding:**

* **Trend:** Shows a clear, concentrated horizontal band of importance.

* **Notable Patterns:** A distinct band of elevated importance (green-yellow) is located in the middle layers, approximately layers 15-21, stretching across most heads (0-30). This is the most defined horizontal structure among all maps.

**7. Inference:**

* **Trend:** Very diffuse and low importance, similar to Logical Reasoning.

* **Notable Patterns:** The grid is almost entirely dark purple. A very sparse scattering of slightly higher importance (dark blue) is present, with no clear concentration.

**8. Math Calculation:**

* **Trend:** Shows a unique pattern, with high importance concentrated in the deepest layers (plotted at the bottom) and in specific head clusters.

* **Notable Patterns:** The most striking feature is a bright yellow cluster (very high importance) in the bottom-right corner, specifically layers 27-30 and heads 24-30. Another cluster of high importance (yellow-green) is visible in layers 24-27, heads 6-12. The early and middle layers are mostly dark.

### Key Observations

1. **Task-Specific Allocation:** The distribution of head importance differs sharply by task. Retrieval and Math Calculation show highly localized, intense clusters, while Logical Reasoning and Inference show almost no concentrated importance.

2. **Layer Specialization:** High-importance heads are most frequently found in the middle-to-deep layers (approx. 15-30). The early layers (0-12, at the top of each plot) are consistently low-importance across all tasks.

3. **Horizontal vs. Clustered Patterns:** Syntactic Understanding shows a clear horizontal band (importance consistent across heads at specific layers). In contrast, Retrieval and Math Calculation show tight, localized clusters (importance specific to certain head-layer combinations).

4. **Math Calculation Anomaly:** The pattern for Math Calculation is an outlier: its highest importance scores sit at the highest layer and head indices (layers 27-30, heads 24-30), unlike any other task.

5. **Low Importance for Abstract Reasoning:** Tasks like Logical Reasoning and Inference, which involve abstract step-by-step processing, show the least defined head importance patterns, suggesting a more distributed or less head-specific processing mechanism.
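The contrast between diffuse maps, horizontal bands, and localized clusters can be made measurable. The sketch below is one possible approach, not a method taken from the figure's source: `top_share` measures how much of the total importance sits in the top few cells, and `peak_layer_coverage` measures how many heads in the strongest layer exceed a threshold. Both function names, the 5% fraction, the threshold, and the synthetic 8x8 grids are assumptions chosen for illustration.

```python
# Hypothetical sketch: two summary statistics for a layer-by-head importance grid.

def top_share(grid, fraction=0.05):
    """Fraction of total importance held by the top `fraction` of cells."""
    cells = sorted((v for row in grid for v in row), reverse=True)
    total = sum(cells)
    if total == 0:
        return 0.0
    k = max(1, int(len(cells) * fraction))
    return sum(cells[:k]) / total


def peak_layer_coverage(grid, threshold):
    """For the layer with the highest mean score, return (layer, share of heads above threshold)."""
    means = [sum(row) / len(row) for row in grid]
    peak = max(range(len(grid)), key=lambda i: means[i])
    row = grid[peak]
    return peak, sum(1 for v in row if v > threshold) / len(row)


# Synthetic 8x8 examples (made-up numbers, not read from the figure).
band = [[0.002 if layer == 4 else 0.0001 for _ in range(8)] for layer in range(8)]
cluster = [[0.003 if (layer == 6 and head >= 6) else 0.0001 for head in range(8)]
           for layer in range(8)]

print(peak_layer_coverage(band, 0.001))     # (4, 1.0)
print(peak_layer_coverage(cluster, 0.001))  # (6, 0.25)
print(top_share(cluster) > top_share(band)) # True
```

Under these assumptions, a band-like map (such as the one described for Syntactic Understanding) would show high coverage in its peak layer, while a cluster-like map (such as Retrieval or Math Calculation) would show low coverage but a high top share.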

### Interpretation

This visualization provides a "cognitive map" of a neural network's internal processing. It suggests that different capabilities are not uniformly handled but are instead supported by specialized subsystems within the model.

* **Retrieval** relies mainly on a specific set of heads in the middle layers (approx. 18-21), with a second cluster in layers 24-27. These heads plausibly act as a dedicated "memory access" module.

* **Syntactic Understanding** uses a consistent set of heads across a specific layer range, indicating a stable, dedicated circuit for parsing grammatical structure.

* **Math Calculation** uniquely depends on the final processing stages (the deepest layers, plotted at the bottom), possibly because mathematical operations require the most refined, integrated representations before output.

* The diffuse patterns for **Logical Reasoning** and **Inference** might indicate that these tasks are not solved by dedicated "reasoning heads" but emerge from the complex interaction of many heads across the network, or that the importance metric is less sensitive to the heads involved in these processes.

The stark contrast between tasks implies that improving a model's performance on a specific capability (e.g., math) might require targeted intervention on the specific heads and layers identified here, rather than a uniform approach. The absence of importance in the earliest layers (0-12) across all tasks suggests these layers perform more general, low-level feature extraction common to all processing.
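As a rough illustration of what selecting heads for a targeted intervention could look like, the sketch below picks the k highest-scoring (layer, head) pairs from one task's grid and turns them into a boolean mask. The description does not say what the intervention would be (ablation, fine-tuning, or something else), so the sketch stops at selection. The names `top_k_heads` and `build_mask` and all scores are hypothetical.

```python
# Hypothetical sketch: choose the k highest-scoring (layer, head) pairs for one task.
import heapq


def top_k_heads(grid, k):
    """Return [(score, layer, head), ...] for the k largest scores, best first."""
    cells = ((score, layer, head)
             for layer, row in enumerate(grid)
             for head, score in enumerate(row))
    return heapq.nlargest(k, cells)


def build_mask(grid, selected):
    """Boolean grid, True where a head was selected."""
    chosen = {(layer, head) for _, layer, head in selected}
    return [[(layer, head) in chosen for head in range(len(row))]
            for layer, row in enumerate(grid)]


# Made-up 4x4 scores, not values from the figure.
scores = [
    [0.0001, 0.0002, 0.0001, 0.0001],
    [0.0001, 0.0001, 0.0003, 0.0001],
    [0.0001, 0.0020, 0.0001, 0.0001],
    [0.0001, 0.0001, 0.0001, 0.0031],
]
selected = top_k_heads(scores, 2)
print([(layer, head) for _, layer, head in selected])  # [(3, 3), (2, 1)]
mask = build_mask(scores, selected)
```

Because the color bar saturates at "0.0030+", a selection like this would need the underlying scores rather than values estimated from the plot colors.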
