## Bar Chart: Prediction Flip Rate Comparison for Llama-3.2 Models

### Overview

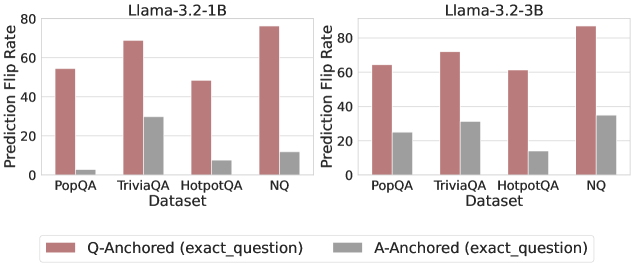

The image displays two side-by-side bar charts comparing the "Prediction Flip Rate" of two language models, Llama-3.2-1B and Llama-3.2-3B, across four question-answering datasets. The charts evaluate the models' sensitivity to two different prompting methods: "Q-Anchored" and "A-Anchored".

### Components/Axes

* **Chart Titles:**
    * Left Chart: `Llama-3.2-1B`
    * Right Chart: `Llama-3.2-3B`

* **Y-Axis (Both Charts):**
    * Label: `Prediction Flip Rate`
    * Scale: starts at 0, with labeled major ticks at 0, 20, 40, 60, and 80; the axis extends slightly above 80, since the tallest bar reaches ~85.

* **X-Axis (Both Charts):**
    * Label: `Dataset`
    * Categories (from left to right): `PopQA`, `TriviaQA`, `HotpotQA`, `NQ`.

* **Legend (Bottom Center):**
    * A reddish-brown bar labeled: `Q-Anchored (exact_question)`
    * A gray bar labeled: `A-Anchored (exact_question)`

### Detailed Analysis

**Llama-3.2-1B (Left Chart):**

* **PopQA:**
    * Q-Anchored: ~55
    * A-Anchored: ~2 (very low, near zero)

* **TriviaQA:**
    * Q-Anchored: ~69
    * A-Anchored: ~30

* **HotpotQA:**
    * Q-Anchored: ~49
    * A-Anchored: ~7

* **NQ:**
    * Q-Anchored: ~78 (highest value in this chart)
    * A-Anchored: ~12

**Llama-3.2-3B (Right Chart):**

* **PopQA:**
    * Q-Anchored: ~64
    * A-Anchored: ~25

* **TriviaQA:**
    * Q-Anchored: ~71
    * A-Anchored: ~31

* **HotpotQA:**
    * Q-Anchored: ~61
    * A-Anchored: ~15

* **NQ:**
    * Q-Anchored: ~85 (highest value across both charts)
    * A-Anchored: ~34

**Trend Verification:**

* In both models, the **Q-Anchored** bars (reddish-brown) are consistently and substantially taller than the **A-Anchored** bars (gray) for every dataset, by roughly 40 to 65 points.

* The **A-Anchored** flip rate is very low for the 1B model on PopQA (~2) and HotpotQA (~7), but rises noticeably in the 3B model for those same datasets (to ~25 and ~15, respectively).

* The **NQ** dataset shows the highest flip rate for the Q-Anchored method in both models.
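The trend checks above can be reproduced with simple arithmetic on the bar heights. The sketch below hard-codes the approximate values listed under Detailed Analysis; they are visual estimates read from the image, not the underlying data, and the names `FLIP_RATES`, `q_minus_a_gaps`, and `top_q_dataset` are illustrative only.

```python
# Approximate bar heights read off the chart (visual estimates, not source data).
FLIP_RATES = {
    "Llama-3.2-1B": {
        "PopQA": {"Q": 55, "A": 2},
        "TriviaQA": {"Q": 69, "A": 30},
        "HotpotQA": {"Q": 49, "A": 7},
        "NQ": {"Q": 78, "A": 12},
    },
    "Llama-3.2-3B": {
        "PopQA": {"Q": 64, "A": 25},
        "TriviaQA": {"Q": 71, "A": 31},
        "HotpotQA": {"Q": 61, "A": 15},
        "NQ": {"Q": 85, "A": 34},
    },
}


def q_minus_a_gaps(rates):
    """Return {model: {dataset: Q-Anchored minus A-Anchored}} for every bar pair."""
    return {
        model: {ds: v["Q"] - v["A"] for ds, v in per_ds.items()}
        for model, per_ds in rates.items()
    }


def top_q_dataset(per_ds):
    """Dataset with the highest Q-Anchored flip rate for one model."""
    return max(per_ds, key=lambda ds: per_ds[ds]["Q"])


if __name__ == "__main__":
    gaps = q_minus_a_gaps(FLIP_RATES)
    for model, per_ds in gaps.items():
        # Trend check: Q-Anchored exceeds A-Anchored on every dataset.
        assert all(g > 0 for g in per_ds.values())
        print(model, per_ds, "top Q-Anchored dataset:", top_q_dataset(FLIP_RATES[model]))
```

With these readings every gap is positive, ranging from about 39 to 66 points, and NQ is the top Q-Anchored dataset for both models.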

### Key Observations

1. **Dominant Trend:** The Q-Anchored prompting method results in a substantially higher Prediction Flip Rate than the A-Anchored method across all datasets and both model sizes.

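2. **Model Size Impact:** The larger Llama-3.2-3B model exhibits higher flip rates than the 1B model for every dataset and both methods. The increase is most dramatic for the A-Anchored method on PopQA (from ~2 to ~25) and NQ (from ~12 to ~34); HotpotQA roughly doubles (from ~7 to ~15), while TriviaQA barely changes (from ~30 to ~31).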

3. **Dataset Sensitivity:** The NQ dataset consistently yields the highest flip rates for the Q-Anchored method. HotpotQA shows the lowest Q-Anchored flip rate in both models, although it rises from ~49 (1B) to ~61 (3B).

4. **A-Anchored Stability:** The A-Anchored method yields consistently lower flip rates than the Q-Anchored method, most markedly in the smaller model (at or below ~12 on three of the four datasets), suggesting it may be a less volatile prompting strategy.
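The model-size comparison can be checked by subtracting the 1B readings from the 3B readings per dataset. This is a minimal sketch using the approximate values from Detailed Analysis (visual estimates only); `RATES_1B`, `RATES_3B`, `size_deltas`, and `ranked_by_a_delta` are hypothetical names chosen for illustration.

```python
# Approximate (Q-Anchored, A-Anchored) flip rates read off the chart; estimates only.
RATES_1B = {"PopQA": (55, 2), "TriviaQA": (69, 30), "HotpotQA": (49, 7), "NQ": (78, 12)}
RATES_3B = {"PopQA": (64, 25), "TriviaQA": (71, 31), "HotpotQA": (61, 15), "NQ": (85, 34)}


def size_deltas(small, large):
    """Per-dataset change from the smaller to the larger model, as (dQ, dA)."""
    return {
        ds: (large[ds][0] - small[ds][0], large[ds][1] - small[ds][1])
        for ds in small
    }


def ranked_by_a_delta(deltas):
    """Datasets sorted by the A-Anchored increase, largest first."""
    return sorted(deltas, key=lambda ds: deltas[ds][1], reverse=True)


if __name__ == "__main__":
    deltas = size_deltas(RATES_1B, RATES_3B)
    for ds, (dq, da) in deltas.items():
        print(f"{ds:9s} dQ={dq:+3d} dA={da:+3d}")
    print("A-Anchored increase, largest first:", ranked_by_a_delta(deltas))
```

Under these readings every delta is positive, and the largest A-Anchored increases are on PopQA (+23) and NQ (+22), followed by HotpotQA (+8) and TriviaQA (+1).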

### Interpretation

These data suggest that both models' predictions are far more sensitive to variations when using a "Q-Anchored" (question-anchored) prompting format than an "A-Anchored" (answer-anchored) format. The "Prediction Flip Rate" likely measures how often a model changes its answer when the prompt is slightly altered.
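If the flip rate is defined as suggested above, it could be computed as the share of items whose answer differs between the original and an altered prompt. This is a sketch under that assumed definition, not the metric used to produce the chart; the function name `prediction_flip_rate`, the case/whitespace normalization, the percentage scaling (inferred from the 0 to 80 axis), and the sample answers are all hypothetical.

```python
from typing import Sequence


def prediction_flip_rate(original: Sequence[str], perturbed: Sequence[str]) -> float:
    """Percentage of items whose prediction changes after the prompt is altered.

    Assumed definition: compare each item's answer under the original prompt with
    its answer under a perturbed prompt.
    """
    if len(original) != len(perturbed):
        raise ValueError("original and perturbed must have the same length")
    if not original:
        raise ValueError("need at least one prediction")
    # Assumed normalization: ignore casing and surrounding whitespace when comparing.
    flips = sum(
        a.strip().lower() != b.strip().lower() for a, b in zip(original, perturbed)
    )
    return 100.0 * flips / len(original)


if __name__ == "__main__":
    # Hypothetical answers before and after a prompt perturbation.
    before = ["Paris", "1969", "Jupiter", "Shakespeare"]
    after = ["paris", "1968", "Saturn", "Shakespeare"]
    print(prediction_flip_rate(before, after))
```

Here only the second and third answers count as flips ("Paris" vs "paris" is treated as unchanged), giving a rate of 50.0.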

The increase in flip rates for the larger 3B model, most pronounced under A-Anchored prompting, suggests that greater model capacity may bring greater sensitivity to prompt phrasing (less robustness) rather than more stability. The high flip rate on the NQ dataset could imply that its questions are more ambiguous, or that the model's knowledge about them is less certain, making its answers more prone to change. The stark contrast between the two anchoring methods highlights a critical design choice in prompt engineering for achieving consistent model outputs.