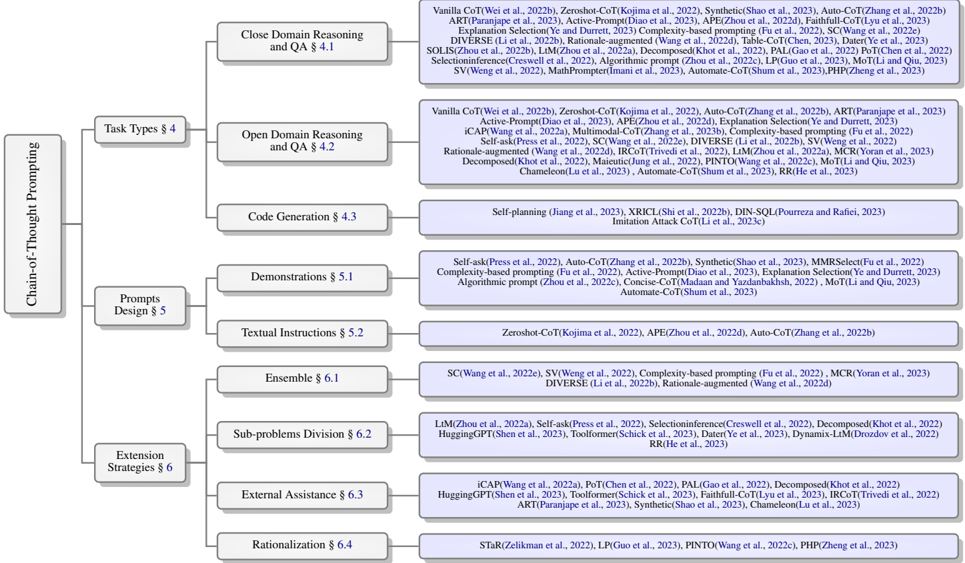

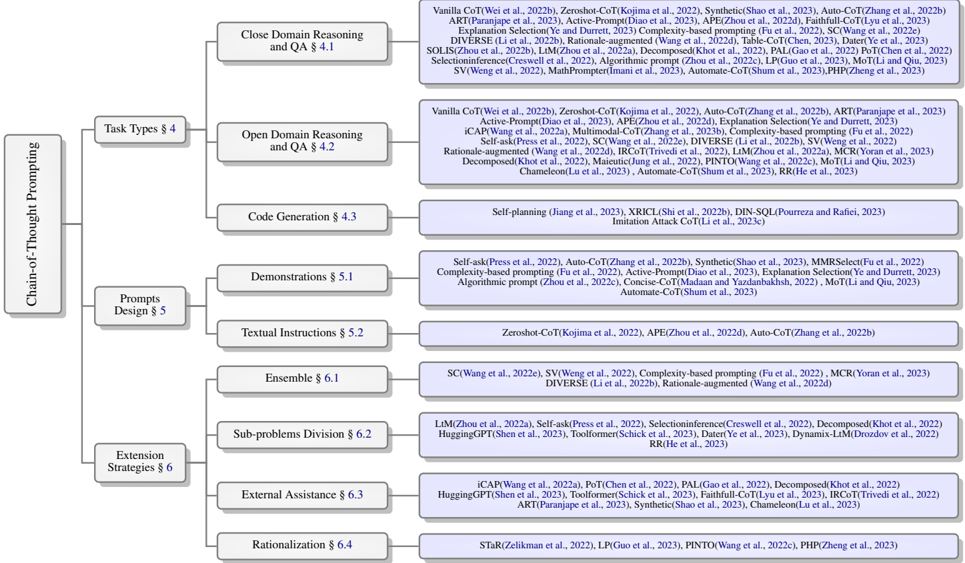

## Diagram: Chain-of-Thought Prompting Taxonomy

### Overview

The image presents a hierarchical diagram outlining a taxonomy of Chain-of-Thought (CoT) prompting techniques. It categorizes these techniques based on task types, prompt design, and extension strategies, providing specific examples and associated publications for each category.

### Components

* **Main Category:** "Chain-of-Thought Prompting" (located on the left side of the diagram)

* **Level 1 Categories:**

  * "Task Types § 4"

  * "Prompts Design § 5"

  * "Extension Strategies § 6"

* **Level 2 Categories (under Task Types):**

  * "Close Domain Reasoning and QA § 4.1"

  * "Open Domain Reasoning and QA § 4.2"

  * "Code Generation § 4.3"

* **Level 2 Categories (under Prompts Design):**

  * "Demonstrations § 5.1"

  * "Textual Instructions § 5.2"

* **Level 2 Categories (under Extension Strategies):**

  * "Ensemble § 6.1"

  * "Sub-problems Division § 6.2"

  * "External Assistance § 6.3"

  * "Rationalization § 6.4"

* **Examples/Techniques:** Each Level 2 category lists specific techniques with their associated publications (e.g., "Vanilla CoT (Wei et al., 2022b)").
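The hierarchy described above can be written down as a small nested structure. The following is a minimal sketch: the category names and section numbers are copied from the diagram, while the dictionary layout and the `render_outline` helper are illustrative assumptions, not something the diagram or its source paper defines.

```python
# Category labels and section numbers are copied from the diagram.
# The dict layout and the helper name `render_outline` are hypothetical.
TAXONOMY = {
    "Task Types § 4": [
        "Close Domain Reasoning and QA § 4.1",
        "Open Domain Reasoning and QA § 4.2",
        "Code Generation § 4.3",
    ],
    "Prompts Design § 5": [
        "Demonstrations § 5.1",
        "Textual Instructions § 5.2",
    ],
    "Extension Strategies § 6": [
        "Ensemble § 6.1",
        "Sub-problems Division § 6.2",
        "External Assistance § 6.3",
        "Rationalization § 6.4",
    ],
}


def render_outline(root, taxonomy):
    """Return the taxonomy as indented text lines, one line per node."""
    lines = [root]
    for level1, level2_list in taxonomy.items():
        lines.append("  " + level1)
        for level2 in level2_list:
            lines.append("    " + level2)
    return lines


outline = render_outline("Chain-of-Thought Prompting", TAXONOMY)
print("\n".join(outline))
```

The outline has one root, three Level 1 nodes, and nine Level 2 nodes, which matches the component list above.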

### Detailed Analysis

**1. Task Types § 4**

* **Close Domain Reasoning and QA § 4.1:**

  * Vanilla CoT (Wei et al., 2022b)

  * Zero-shot-CoT (Kojima et al., 2022)

  * Synthetic (Shao et al., 2023)

  * Auto-CoT (Zhang et al., 2022b)

  * ART (Paranjape et al., 2023)

  * Active-Prompt (Diao et al., 2023)

  * APE (Zhou et al., 2022d)

  * Faithful-CoT (Lyu et al., 2023)

  * Explanation Selection (Ye and Durrett, 2023)

  * Complexity-based prompting (Fu et al., 2022)

  * SC (Wang et al., 2022e)

  * DIVERSE (Li et al., 2022b)

  * Rationale-augmented (Wang et al., 2022d)

  * Table-CoT (Chen, 2023)

  * Dater (Ye et al., 2023)

  * SOLIS (Zhou et al., 2022b)

  * LtM (Zhou et al., 2022a)

  * Decomposed (Khot et al., 2022)

  * PAL (Gao et al., 2022)

  * PoT (Chen et al., 2022)

  * Selection-Inference (Creswell et al., 2022)

  * Algorithmic prompt (Zhou et al., 2022c)

  * LP (Guo et al., 2023)

  * MoT (Li and Qiu, 2023)

  * SV (Weng et al., 2022)

  * MathPrompter (Imani et al., 2023)

  * Automate-CoT (Shum et al., 2023)

  * PHP (Zheng et al., 2023)

* **Open Domain Reasoning and QA § 4.2:**

  * Vanilla CoT (Wei et al., 2022b)

  * Zero-shot-CoT (Kojima et al., 2022)

  * Auto-CoT (Zhang et al., 2022b)

  * ART (Paranjape et al., 2023)

  * Active-Prompt (Diao et al., 2023)

  * APE (Zhou et al., 2022d)

  * Explanation Selection (Ye and Durrett, 2023)

  * iCAP (Wang et al., 2022a)

  * Multimodal-CoT (Zhang et al., 2023b)

  * Complexity-based prompting (Fu et al., 2022)

  * Self-ask (Press et al., 2022)

  * SC (Wang et al., 2022e)

  * DIVERSE (Li et al., 2022b)

  * SV (Weng et al., 2022)

  * Rationale-augmented (Wang et al., 2022d)

  * IRCoT (Trivedi et al., 2022)

  * LtM (Zhou et al., 2022a)

  * MCR (Yoran et al., 2023)

  * Decomposed (Khot et al., 2022)

  * Maieutic (Jung et al., 2022)

  * PINTO (Wang et al., 2022c)

  * MoT (Li and Qiu, 2023)

  * Chameleon (Lu et al., 2023)

  * Automate-CoT (Shum et al., 2023)

  * RR (He et al., 2023)

* **Code Generation § 4.3:**

  * Self-planning (Jiang et al., 2023)

  * XRICL (Shi et al., 2022b)

  * DIN-SQL (Pourreza and Rafiei, 2023)

  * Imitation Attack CoT (Li et al., 2023c)

**2. Prompts Design § 5**

* **Demonstrations § 5.1:**

  * Self-ask (Press et al., 2022)

  * Auto-CoT (Zhang et al., 2022b)

  * Synthetic (Shao et al., 2023)

  * MMRSelect (Fu et al., 2022)

  * Complexity-based prompting (Fu et al., 2022)

  * Active-Prompt (Diao et al., 2023)

  * Explanation Selection (Ye and Durrett, 2023)

  * Algorithmic prompt (Zhou et al., 2022c)

  * Concise-CoT (Madaan and Yazdanbakhsh, 2022)

  * MoT (Li and Qiu, 2023)

  * Automate-CoT (Shum et al., 2023)

* **Textual Instructions § 5.2:**

  * Zero-shot-CoT (Kojima et al., 2022)

  * APE (Zhou et al., 2022d)

  * Auto-CoT (Zhang et al., 2022b)

**3. Extension Strategies § 6**

* **Ensemble § 6.1:**

  * SC (Wang et al., 2022e)

  * SV (Weng et al., 2022)

  * Complexity-based prompting (Fu et al., 2022)

  * MCR (Yoran et al., 2023)

  * DIVERSE (Li et al., 2022b)

  * Rationale-augmented (Wang et al., 2022d)

* **Sub-problems Division § 6.2:**

  * LtM (Zhou et al., 2022a)

  * Self-ask (Press et al., 2022)

  * Selection-Inference (Creswell et al., 2022)

  * Decomposed (Khot et al., 2022)

  * HuggingGPT (Shen et al., 2023)

  * Toolformer (Schick et al., 2023)

  * Dater (Ye et al., 2023)

  * Dynamic-LtM (Drozdov et al., 2022)

  * RR (He et al., 2023)

* **External Assistance § 6.3:**

  * iCAP (Wang et al., 2022a)

  * PoT (Chen et al., 2022)

  * PAL (Gao et al., 2022)

  * Decomposed (Khot et al., 2022)

  * HuggingGPT (Shen et al., 2023)

  * Toolformer (Schick et al., 2023)

  * Faithful-CoT (Lyu et al., 2023)

  * IRCoT (Trivedi et al., 2022)

  * ART (Paranjape et al., 2023)

  * Synthetic (Shao et al., 2023)

  * Chameleon (Lu et al., 2023)

* **Rationalization § 6.4:**

  * STaR (Zelikman et al., 2022)

  * LP (Guo et al., 2023)

  * PINTO (Wang et al., 2022c)

  * PHP (Zheng et al., 2023)

### Key Observations

* The diagram organizes CoT prompting techniques along three dimensions: the task type they target, how the prompt is designed, and how the basic method is extended.

* Each category lists specific techniques, each with a citation to an associated publication.

* Coverage is uneven: the two reasoning-and-QA categories list 28 and 25 techniques, while Code Generation and Rationalization list 4 each and Textual Instructions lists 3.

* Many techniques appear in multiple categories, suggesting that they can be applied in different contexts or combined with other strategies. For example, Auto-CoT appears in Close Domain Reasoning, Open Domain Reasoning, Demonstrations, and Textual Instructions.
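The cross-category overlap noted above can be checked mechanically by inverting the category lists into a technique-to-categories index. The sketch below is illustrative only: it uses a small hand-copied subset of the lists in this description (not the full taxonomy), and the names `CATEGORIES` and `build_index` are hypothetical.

```python
from collections import defaultdict

# A hand-copied SUBSET of the category lists above, for illustration only.
# The variable and function names are assumptions, not from the diagram.
CATEGORIES = {
    "Close Domain Reasoning and QA § 4.1": ["Vanilla CoT", "Auto-CoT", "SC", "LtM", "PAL"],
    "Open Domain Reasoning and QA § 4.2": ["Vanilla CoT", "Auto-CoT", "SC", "LtM", "Self-ask"],
    "Demonstrations § 5.1": ["Auto-CoT", "Self-ask"],
    "Textual Instructions § 5.2": ["Auto-CoT"],
    "Ensemble § 6.1": ["SC"],
    "Sub-problems Division § 6.2": ["LtM", "Self-ask"],
    "External Assistance § 6.3": ["PAL"],
}


def build_index(categories):
    """Map each technique to the list of categories that mention it."""
    index = defaultdict(list)
    for category, techniques in categories.items():
        for technique in techniques:
            index[technique].append(category)
    return dict(index)


index = build_index(CATEGORIES)

# Report techniques that appear in more than one category.
for technique, cats in sorted(index.items()):
    if len(cats) > 1:
        print(f"{technique}: {len(cats)} categories")
```

On this subset, Auto-CoT maps to the same four categories named in the observation above; extending `CATEGORIES` with the remaining lists would give the full overlap picture.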

### Interpretation

The diagram gives researchers and practitioners an organized map of CoT prompting, and its citations point to the publications behind each technique. All cited publications date from 2022 or 2023, which suggests the field is developing quickly. Sparsely populated categories, such as Code Generation, may indicate areas where further research is needed. The overlap of techniques across categories suggests potential synergies between approaches and indicates that CoT prompting can be tailored to different tasks and contexts.
