## Diagram: Chain-of-Thought Prompting - Task Types & Prompts

### Overview

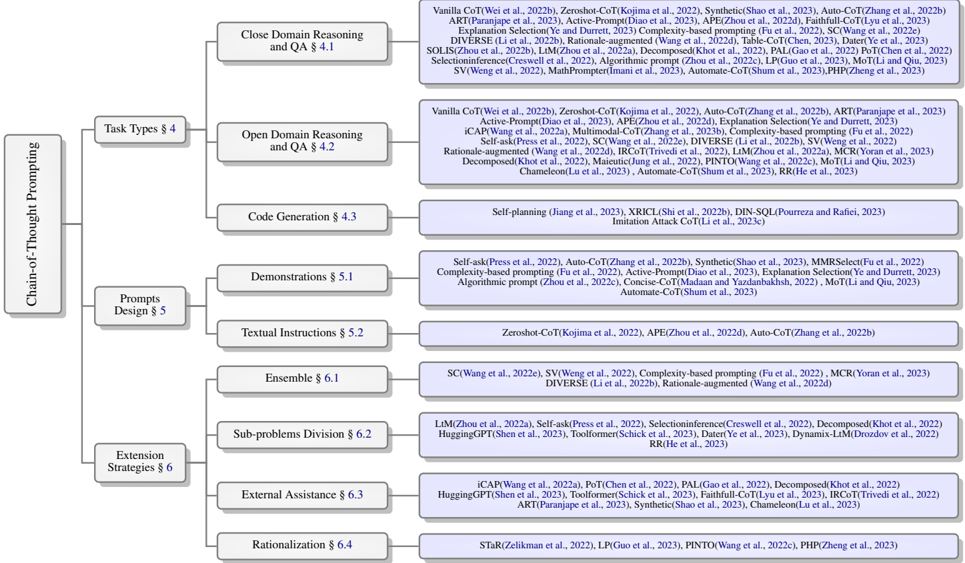

The image presents a diagram of the landscape of Chain-of-Thought (CoT) prompting techniques, organized by Task Type (Close Domain Reasoning and QA, Open Domain Reasoning and QA, Code Generation) and Prompting Strategy (Vanilla, Self-ask, Demonstration, Ensemble). Each CoT method is placed in the cell for its task type and strategy, and a color gradient indicates accuracy.

### Components/Axes

* **Y-axis (Vertical):** Task Types - Close Domain Reasoning and QA, Open Domain Reasoning and QA, Code Generation.

* **X-axis (Horizontal):** Prompting Strategies - Vanilla, Self-ask, Demonstration, Ensemble.

* **Color Scale:** A gradient from light yellow (lower accuracy ~20%) to dark blue (higher accuracy ~80%). The color intensity represents the accuracy of the CoT method for a given task and prompting strategy.

* **Labels:** Each cell in the grid lists the names of one or more CoT methods (e.g., "Auto-CoT", "PINTO"), each followed by a citation (e.g., "Wei et al., 2022").

* **Legend:** Located at the bottom of the diagram, the legend maps the color gradient to accuracy percentages: 20%, 30%, 40%, 50%, 60%, 70%, 80%.
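To read approximate values off the grid, the legend can be treated as a linear scale. The Python sketch below assumes, hypothetically, that the gradient interpolates linearly in RGB between two endpoint colors; the hex values are guesses, not taken from the figure. It maps an accuracy to a color and estimates the accuracy of a cell color by projecting it onto the line between the endpoints.

```python
from typing import Tuple

RGB = Tuple[int, int, int]

# Hypothetical endpoint colors: the figure's exact palette is not stated in the text.
LOW_COLOR: RGB = (255, 255, 204)  # light yellow, read as ~20% accuracy
HIGH_COLOR: RGB = (8, 29, 88)     # dark blue, read as ~80% accuracy
LOW_ACC, HIGH_ACC = 20.0, 80.0    # legend range in percent


def accuracy_to_color(acc: float) -> RGB:
    """Map an accuracy (percent) to an RGB color, clamping to the legend range."""
    clamped = min(max(acc, LOW_ACC), HIGH_ACC)
    t = (clamped - LOW_ACC) / (HIGH_ACC - LOW_ACC)  # 0.0 at 20%, 1.0 at 80%
    r, g, b = (round(lo + t * (hi - lo)) for lo, hi in zip(LOW_COLOR, HIGH_COLOR))
    return (r, g, b)


def color_to_accuracy(color: RGB) -> float:
    """Estimate accuracy by projecting a color onto the low-to-high color segment."""
    direction = [hi - lo for lo, hi in zip(LOW_COLOR, HIGH_COLOR)]
    offset = [c - lo for c, lo in zip(color, LOW_COLOR)]
    t = sum(o * d for o, d in zip(offset, direction)) / sum(d * d for d in direction)
    t = min(max(t, 0.0), 1.0)  # colors off the segment snap to its nearest end
    return LOW_ACC + t * (HIGH_ACC - LOW_ACC)


if __name__ == "__main__":
    mid = accuracy_to_color(50)
    print(mid, round(color_to_accuracy(mid), 1))
```

Because the RGB values are rounded to integers, the round trip recovers an accuracy close to, but not exactly, the input. A real colormap that passes through intermediate hues (for example, green) would need a lookup table rather than a single straight line.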

### Detailed Analysis or Content Details

The diagram is structured as a 3x4 grid: three task types (rows) by four prompting strategies (columns). Each cell represents one combination of task type and prompting strategy, and its color indicates the approximate accuracy of the CoT methods listed in it.

**Close Domain Reasoning and QA § 4.1**

* **Vanilla:** Methods include: Vanilla CoT (Wei et al., 2022b), ZeroShot-CoT (Kojima et al., 2022b), Synthetic (Shao et al., 2023a), Auto-CoT (Zhang et al., 2022b), ART (Paranjape et al., 2023), Active-Prompt (Diao et al., 2023), APEX (Zhou et al., 2023), Faithful-CoT (Liu et al., 2023), Explanation Selection (Ye and Durrett, 2023), Complexity-based prompting (Fu et al., 2022), SC (Wang et al., 2022a), DIVERSE (Li et al., 2023), Rationale-augmented (Wang et al., 2022d), Table-CoT (Chen, 2023), DateTye (Ye et al., 2023), SOLIS (Zhou et al., 2023), LM (Zhou et al., 2022a), Decomposed Knot (Li et al., 2022), PAL (Gao et al., 2022), PoT (Chen et al., 2022), Selection-Inference (Creswell et al., 2022), Algorithmic prompt (Zhou et al., 2023), LP (Gao et al., 2023), MoT (Li and Qiu, 2023), SV (Wang et al., 2022), MathPrompter (Imani et al., 2023), Automate-CoT (Shum et al., 2023), PHP (Zheng et al., 2023). Accuracy ranges from approximately 20% (light yellow) to 60% (medium blue).

* **Self-ask:** Methods include: Vanilla CoT (Wei et al., 2022b), ZeroShot-CoT (Kojima et al., 2022b), Auto-CoT (Zhang et al., 2022b), ART (Paranjape et al., 2023), Active-Prompt (Diao et al., 2023), APEX (Zhou et al., 2023), Explanation Selection (Ye and Durrett, 2023), CAPW (Wang et al., 2022a), Multimodal-CoT (Zhou et al., 2023b), Complexity-based prompting (Fu et al., 2022), Self-ask (Wang et al., 2022), SC (Wang et al., 2022a), DIVERSE (Li et al., 2023), Rationale-augmented (Wang et al., 2022d), IRC (Li et al., 2023), PINTO (Wang et al., 2022a), MORAL (Yuan et al., 2023), Decomposed Knot (Li et al., 2022), Automate-CoT (Shum et al., 2023), RR (He et al., 2023), MoT (Li and Qiu, 2023). Accuracy ranges from approximately 20% to 70%.

* **Demonstration:** Methods include: Self-planning (Jiang et al., 2023), XRCL (Shi et al., 2023), DIN-SQL (Pourreza and Rafiei, 2023), Imitation Attack (Gotlick et al., 2023). Accuracy ranges from approximately 30% to 60%.

* **Ensemble:** Methods include: Auto-CoT (Zhang et al., 2022b), ART (Paranjape et al., 2023), Active-Prompt (Diao et al., 2023), APEX (Zhou et al., 2023), Faithful-CoT (Liu et al., 2023), SC (Wang et al., 2022a), DIVERSE (Li et al., 2023), Rationale-augmented (Wang et al., 2022d), Table-CoT (Chen, 2023), DateTye (Ye et al., 2023), SOLIS (Zhou et al., 2023), PAL (Gao et al., 2022), LP (Gao et al., 2023), MoT (Li and Qiu, 2023). Accuracy ranges from approximately 40% to 80%.
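Several Ensemble entries, such as SC (Wang et al., 2022a), combine multiple sampled reasoning chains into one answer. The Python sketch below shows the simplest form of that idea: a majority vote over the final answers of several chains. It assumes, hypothetically, that every chain ends with "The answer is X."; sampling the chains from a model is out of scope, so they are hard-coded stand-ins.

```python
import re
from collections import Counter
from typing import List, Optional

# Hypothetical output convention: each sampled chain ends with "The answer is <X>."
ANSWER_RE = re.compile(r"The answer is\s*([^\s.]+)", re.IGNORECASE)


def extract_answer(chain: str) -> Optional[str]:
    """Pull the final answer out of one reasoning chain, or None if absent."""
    match = ANSWER_RE.search(chain)
    return match.group(1) if match else None


def majority_vote(chains: List[str]) -> Optional[str]:
    """Return the most frequent final answer across chains (ties: first seen)."""
    answers = [a for a in map(extract_answer, chains) if a is not None]
    if not answers:
        return None
    return Counter(answers).most_common(1)[0][0]


if __name__ == "__main__":
    # Stand-ins for chains sampled from a model at non-zero temperature.
    sampled = [
        "Tom has 3 apples and buys 5 more, so 3 + 5 = 8. The answer is 8.",
        "Start with 3, add 5, giving 8. The answer is 8.",
        "3 plus 5 is 9. The answer is 9.",
    ]
    print(majority_vote(sampled))
```

Voting over final answers rather than over whole chains lets different reasoning paths that reach the same result reinforce each other, which is one plausible reason ensemble-style methods score higher in the diagram.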

**Open Domain Reasoning and QA § 4.2**

* The distribution of methods and accuracy ranges is similar to that of Close Domain Reasoning and QA.

* Methods include: Vanilla CoT, ZeroShot-CoT, Auto-CoT, ART, Active-Prompt, APEX, Faithful-CoT, CAPW, Multimodal-CoT, Complexity-based prompting, Self-ask, SC, DIVERSE, Rationale-augmented, IRC, PINTO, MORAL, Decomposed Knot, Automate-CoT, RR, MoT.

**Code Generation § 4.3**

* Methods include: Self-planning, XRCL, DIN-SQL, Imitation Attack. Accuracy ranges from approximately 20% to 50%.

### Key Observations

* **Ensemble prompting** generally yields the highest accuracy across all task types, often reaching the 70-80% range.

* **Vanilla prompting** consistently shows the lowest accuracy, typically between 20% and 40%.

* **Self-ask prompting** performs better than Vanilla but generally falls short of Ensemble.

* **Code Generation** consistently shows lower accuracy than the reasoning tasks.

* Many methods appear under more than one prompting strategy, suggesting that some methods can be applied in more than one way.
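The overlap can be checked directly against the method lists transcribed for Close Domain Reasoning and QA. The Python sketch below copies those lists (with minor spelling normalized) into sets and computes the shared methods for every pair of strategies. It relies only on the text of this description, not on the figure itself, so any transcription error carries over.

```python
from itertools import combinations
from typing import Dict, List, Set, Tuple

# Method names as transcribed above for Close Domain Reasoning and QA (§ 4.1).
CLOSE_DOMAIN: Dict[str, Set[str]] = {
    "Vanilla": {
        "Vanilla CoT", "ZeroShot-CoT", "Synthetic", "Auto-CoT", "ART",
        "Active-Prompt", "APEX", "Faithful-CoT", "Explanation Selection",
        "Complexity-based prompting", "SC", "DIVERSE", "Rationale-augmented",
        "Table-CoT", "DateTye", "SOLIS", "LM", "Decomposed Knot", "PAL", "PoT",
        "Selection-Inference", "Algorithmic prompt", "LP", "MoT", "SV",
        "MathPrompter", "Automate-CoT", "PHP",
    },
    "Self-ask": {
        "Vanilla CoT", "ZeroShot-CoT", "Auto-CoT", "ART", "Active-Prompt",
        "APEX", "Explanation Selection", "CAPW", "Multimodal-CoT",
        "Complexity-based prompting", "Self-ask", "SC", "DIVERSE",
        "Rationale-augmented", "IRC", "PINTO", "MORAL", "Decomposed Knot",
        "Automate-CoT", "RR", "MoT",
    },
    "Demonstration": {"Self-planning", "XRCL", "DIN-SQL", "Imitation Attack"},
    "Ensemble": {
        "Auto-CoT", "ART", "Active-Prompt", "APEX", "Faithful-CoT", "SC",
        "DIVERSE", "Rationale-augmented", "Table-CoT", "DateTye", "SOLIS",
        "PAL", "LP", "MoT",
    },
}


def pairwise_overlap(cells: Dict[str, Set[str]]) -> Dict[Tuple[str, str], List[str]]:
    """Return the sorted shared method names for every pair of strategies."""
    return {(a, b): sorted(cells[a] & cells[b]) for a, b in combinations(cells, 2)}


if __name__ == "__main__":
    for (a, b), shared in pairwise_overlap(CLOSE_DOMAIN).items():
        print(f"{a} & {b}: {len(shared)} shared")
```

Run on these lists, the sketch shows that every Ensemble method also appears in the Vanilla cell, that Vanilla and Self-ask share 14 methods, and that the Demonstration methods appear in no other column.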

### Interpretation

The diagram gives a comparative overview of Chain-of-Thought prompting techniques and their effectiveness across task types. The color gradient makes the performance differences easy to see and suggests that Ensemble prompting is generally the most effective strategy. The lower accuracy for Code Generation suggests either that this task is harder for CoT methods or that it needs specialized techniques. Because the same methods appear under several strategies, the *way* a method is applied (i.e., the prompting strategy) appears to matter for performance. The citations identify the papers that originally proposed each technique, so readers can follow up on individual methods, and the diagram can help researchers and practitioners choose a CoT prompting strategy for their application. It reflects the field as of the publication dates of the cited papers, and the landscape is likely to evolve as new methods are developed.