
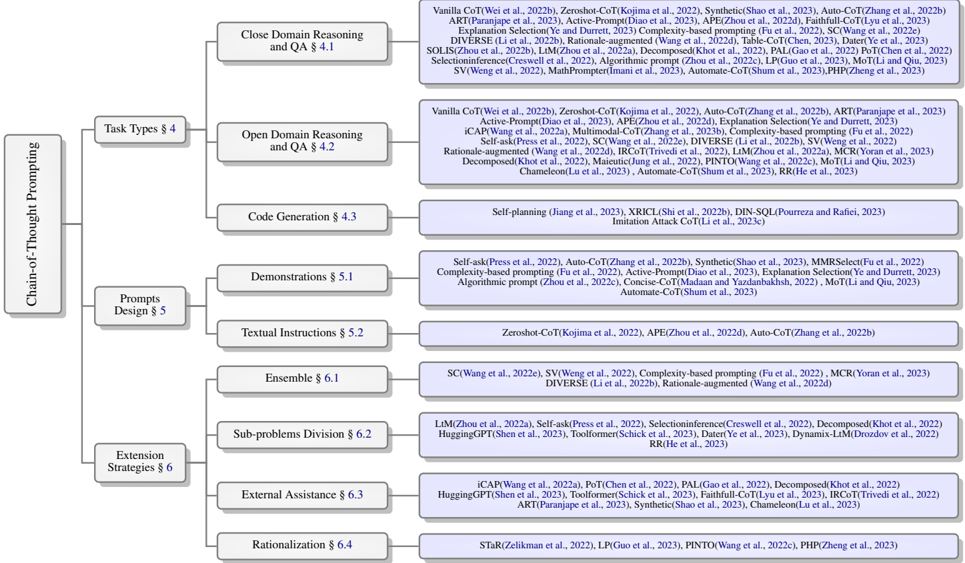

## Diagram: Taxonomy of Chain-of-Thought Prompting Methods

### Overview

The image is a hierarchical taxonomy diagram (a tree) that categorizes research methods related to "Chain-of-Thought Prompting." The root splits into three primary branches, each of which subdivides into more specific categories. Each terminal node lists the relevant methods together with their citations (author and year). The layout flows from left (root) to right (leaves).

### Components/Axes

* **Root Node (Leftmost):** "Chain-of-Thought Prompting"

* **Primary Branches (Level 1):**

1. Task Types § 4

2. Prompts Design § 5

3. Extension Strategies § 6

* **Secondary Branches (Level 2):** Each primary branch splits into more specific categories.

* **Terminal Nodes (Rightmost):** These are light purple boxes containing lists of specific methods, each followed by citations in parentheses (e.g., `(Wei et al., 2022b)`). The citations are in blue text.

* **Spatial Layout:** The root is centered vertically on the far left. The three primary branches extend horizontally to the right. The "Task Types" branch is the topmost, "Prompts Design" is in the middle, and "Extension Strategies" is at the bottom. Each primary branch fans out into its sub-categories, which are stacked vertically. The terminal nodes are aligned to the right of their parent category.

### Detailed Analysis

**1. Task Types § 4**

* **Close Domain Reasoning and QA § 4.1**

* Methods: Vanilla CoT, Zeroshot-CoT, Synthetic, Auto-CoT, ART, Active-Prompt, APE, Faithful-CoT, Selection-Inference, DIVERSE, Rationale-augmented, Table-CoT, DAT, SOLIS, LLM, Decomposed, PAL, SC, Self-ask, SV, MathPrompter, Automate-CoT, PHP.

* **Open Domain Reasoning and QA § 4.2**

* Methods: Vanilla CoT, Zeroshot-CoT, Auto-CoT, ART, Active-Prompt, APE, Explanation Selection, iCAP, Multimodal-CoT, Complexity-based prompting, Self-ask, Selection-Inference, Rationale-augmented, IRCoT, LLM, MCR, Decomposed, Maieutic, PINTO, MoT, Automate-CoT, RR, Chameleon.

* **Code Generation § 4.3**

* Methods: Self-planning, XRICL, DIN-SQL, Imitation Attack CoT.

**2. Prompts Design § 5**

* **Demonstrations § 5.1**

* Methods: Self-ask, Auto-CoT, Synthetic, ART, Complexity-based prompting, Active-Prompt, Explanation Selection, Algorithmic prompt, Concise-CoT, MoT, Automate-CoT.

* **Textual Instructions § 5.2**

* Methods: Zeroshot-CoT, APE, Auto-CoT.
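
The two Prompts Design leaves differ in how the reasoning is elicited: demonstrations supply worked examples that include a rationale, while textual instructions add only a trigger phrase and no examples. The following minimal Python sketch contrasts the two prompt shapes. The `Q:`/`A:` layout, the `Demonstration` class, and both function names are illustrative assumptions rather than any listed method's exact format; "Let's think step by step." is the trigger phrase associated with Zero-shot-CoT.

```python
from dataclasses import dataclass

# Trigger phrase associated with Zero-shot-CoT; other instruction-based
# methods (e.g. APE) search for alternative phrasings instead.
ZERO_SHOT_COT_TRIGGER = "Let's think step by step."


@dataclass
class Demonstration:
    """Hypothetical container for one worked example: question, rationale, answer."""
    question: str
    rationale: str
    answer: str


def few_shot_cot_prompt(demos: list[Demonstration], question: str) -> str:
    """Demonstration-based prompt: worked examples with rationales, then the new question."""
    blocks = [f"Q: {d.question}\nA: {d.rationale} The answer is {d.answer}." for d in demos]
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


def zero_shot_cot_prompt(question: str) -> str:
    """Instruction-based prompt: no examples, only the textual trigger after the question."""
    return f"Q: {question}\nA: {ZERO_SHOT_COT_TRIGGER}"


if __name__ == "__main__":
    demo = Demonstration(
        question="Tom has 3 apples and buys 2 more. How many does he have?",
        rationale="He starts with 3 and adds 2, so 3 + 2 = 5.",
        answer="5",
    )
    target = "Ann has 4 pens and loses 1. How many are left?"
    print(few_shot_cot_prompt([demo], target))
    print()
    print(zero_shot_cot_prompt(target))
```

Methods in the Demonstrations leaf vary mainly in how the `demos` list is chosen or generated, while methods in the Textual Instructions leaf vary the trigger text.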

**3. Extension Strategies § 6**

* **Ensemble § 6.1**

* Methods: SC, SV, Complexity-based prompting, MCR, DIVERSE, Rationale-augmented.

* **Sub-problems Division § 6.2**

* Methods: LLM, Self-ask, Selection-Inference, Decomposed, HuggingGPT, Toolformer, Dyer et al., RR, Dynamis-LM.

* **External Assistance § 6.3**

* Methods: iCAP, PoT, PAL, Decomposed, HuggingGPT, Toolformer, Faithful-CoT, IRCoT, ART, Synthetic, Chameleon.

* **Rationalization § 6.4**

* Methods: STaR, LP, PINTO, PHP.

### Key Observations

* **Density of Research:** The "Close Domain Reasoning and QA" and "Open Domain Reasoning and QA" categories contain the most listed methods (23 each, as transcribed above), suggesting that reasoning and question answering are the most heavily studied application areas for Chain-of-Thought prompting.

* **Method Recurrence:** Several methods appear in multiple categories. For example, "Self-ask" is listed under Close Domain QA, Open Domain QA, Demonstrations, and Sub-problems Division, and "Decomposed" appears under Close Domain QA, Open Domain QA, Sub-problems Division, and External Assistance. This suggests that these techniques are versatile and span several task types and design strategies.

* **Citation Style:** All methods are accompanied by academic citations, typically in the format `(Author et al., Year)`. Some citations include a letter suffix (e.g., `2022b`), indicating multiple publications by the same lead author in a single year.

* **Structural Clarity:** The diagram uses a clean left-to-right tree with clear hierarchical relationships. The section numbers (§ 4.1, § 5.2, etc.) suggest that the taxonomy comes from a survey or review article, in which each numbered section discusses the corresponding category in detail.
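
The counts and recurrences noted above can be checked mechanically by encoding the tree as nested mappings. The following is a minimal Python sketch, assuming the method lists in the Detailed Analysis are a complete and accurate transcription of the figure; the names `TAXONOMY`, `leaf_counts`, and `recurring_methods` are hypothetical and not part of the source.

```python
from collections import defaultdict

# Hypothetical encoding: primary branch -> sub-category -> listed methods.
# Labels are copied verbatim from the transcription, including uncertain ones.
TAXONOMY: dict[str, dict[str, list[str]]] = {
    "Task Types": {
        "Close Domain Reasoning and QA": [
            "Vanilla CoT", "Zeroshot-CoT", "Synthetic", "Auto-CoT", "ART",
            "Active-Prompt", "APE", "Faithful-CoT", "Selection-Inference",
            "DIVERSE", "Rationale-augmented", "Table-CoT", "DAT", "SOLIS",
            "LLM", "Decomposed", "PAL", "SC", "Self-ask", "SV",
            "MathPrompter", "Automate-CoT", "PHP",
        ],
        "Open Domain Reasoning and QA": [
            "Vanilla CoT", "Zeroshot-CoT", "Auto-CoT", "ART", "Active-Prompt",
            "APE", "Explanation Selection", "iCAP", "Multimodal-CoT",
            "Complexity-based prompting", "Self-ask", "Selection-Inference",
            "Rationale-augmented", "IRCoT", "LLM", "MCR", "Decomposed",
            "Maieutic", "PINTO", "MoT", "Automate-CoT", "RR", "Chameleon",
        ],
        "Code Generation": ["Self-planning", "XRICL", "DIN-SQL", "Imitation Attack CoT"],
    },
    "Prompts Design": {
        "Demonstrations": [
            "Self-ask", "Auto-CoT", "Synthetic", "ART", "Complexity-based prompting",
            "Active-Prompt", "Explanation Selection", "Algorithmic prompt",
            "Concise-CoT", "MoT", "Automate-CoT",
        ],
        "Textual Instructions": ["Zeroshot-CoT", "APE", "Auto-CoT"],
    },
    "Extension Strategies": {
        "Ensemble": ["SC", "SV", "Complexity-based prompting", "MCR", "DIVERSE", "Rationale-augmented"],
        "Sub-problems Division": [
            "LLM", "Self-ask", "Selection-Inference", "Decomposed", "HuggingGPT",
            "Toolformer", "Dyer et al.", "RR", "Dynamis-LM",
        ],
        "External Assistance": [
            "iCAP", "PoT", "PAL", "Decomposed", "HuggingGPT", "Toolformer",
            "Faithful-CoT", "IRCoT", "ART", "Synthetic", "Chameleon",
        ],
        "Rationalization": ["STaR", "LP", "PINTO", "PHP"],
    },
}


def leaf_counts(tree: dict[str, dict[str, list[str]]]) -> dict[str, int]:
    """Number of listed methods in each terminal node (sub-category)."""
    return {sub: len(methods) for branch in tree.values() for sub, methods in branch.items()}


def recurring_methods(tree: dict[str, dict[str, list[str]]]) -> dict[str, list[str]]:
    """Map each method listed in more than one sub-category to those sub-categories."""
    locations = defaultdict(list)
    for branch in tree.values():
        for sub, methods in branch.items():
            for method in methods:
                locations[method].append(sub)
    return {m: subs for m, subs in locations.items() if len(subs) > 1}


if __name__ == "__main__":
    counts = leaf_counts(TAXONOMY)
    print(counts["Close Domain Reasoning and QA"], counts["Open Domain Reasoning and QA"])
    print(recurring_methods(TAXONOMY)["Decomposed"])
```

Run as a script, this prints `23 23` followed by the four sub-categories that list "Decomposed". Because the figure may abbreviate some entries, these numbers describe the transcription, not necessarily the original survey.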

### Interpretation

This taxonomy maps the Chain-of-Thought (CoT) prompting research landscape as reflected in the cited papers, most of which date from 2022-2023. It shows that CoT is not a single technique but a broad family of methods.

The three branches can be read as a conceptual progression, from where CoT is applied, to how it is elicited, to how it is extended:

1. **Task Types:** The problem domains to which CoT is applied (close- and open-domain reasoning and QA, and code generation).

2. **Prompts Design:** *How* the chain of thought is elicited, through better demonstrations or textual instructions.

3. **Extension Strategies:** Techniques that aim to make CoT more powerful and reliable, such as ensembling multiple reasoning paths, dividing complex problems into sub-problems, integrating external tools or knowledge, and adding rationalization steps.
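
The ensembling idea in item 3 can be made concrete with majority voting over final answers, which is the aggregation step of self-consistency (SC in the Ensemble leaf). The following Python sketch assumes the reasoning paths have already been sampled from a model (sampling is omitted) and that each path ends with "The answer is <number>."; the regex, the helper names, and the sample completions are hypothetical.

```python
import re
from collections import Counter
from typing import Optional

# Assumed output convention: a completion states "The answer is <number>".
# Real model outputs usually need a sturdier, task-specific parser.
ANSWER_PATTERN = re.compile(r"answer is\s*(-?\d[\d,]*(?:\.\d+)?)", re.IGNORECASE)


def extract_final_answer(completion: str) -> Optional[str]:
    """Return the last 'answer is <number>' value (commas removed), or None."""
    matches = ANSWER_PATTERN.findall(completion)
    return matches[-1].replace(",", "") if matches else None


def majority_vote(answers: list[Optional[str]]) -> Optional[str]:
    """Most frequent non-None answer; ties go to the answer seen first."""
    votes = Counter(a for a in answers if a is not None)
    if not votes:
        return None
    return votes.most_common(1)[0][0]


def self_consistency(completions: list[str]) -> Optional[str]:
    """Aggregate several sampled reasoning paths by voting on their final answers."""
    return majority_vote([extract_final_answer(c) for c in completions])


if __name__ == "__main__":
    # Hypothetical completions standing in for samples drawn from a model.
    paths = [
        "She starts with 16 eggs, eats 3 and bakes with 4, leaving 9. 9 * 2 = 18. The answer is 18.",
        "16 - 3 = 13 eggs remain, and 13 * 2 = 26. The answer is 26.",
        "16 - 3 - 4 = 9 eggs are sold at $2 each. The answer is 18.",
    ]
    print(self_consistency(paths))  # prints 18
```

Voting on the extracted answer rather than on the full text lets paths that reason differently but agree on the result reinforce each other, while a single faulty path (the second one above) is outvoted.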

The recurrence of methods like "Decomposed" and "Self-ask" across categories points to two recurring principles: breaking problems into smaller steps and making intermediate question-answering steps explicit. The large number of methods under both the close-domain and open-domain reasoning and QA categories suggests that these tasks remain the main testbed for CoT research. Overall, the diagram serves as a useful index for navigating the large and rapidly growing literature on making large language models reason more explicitly and reliably.