## Flowchart: Chain-of-Thought Prompting Framework

### Overview

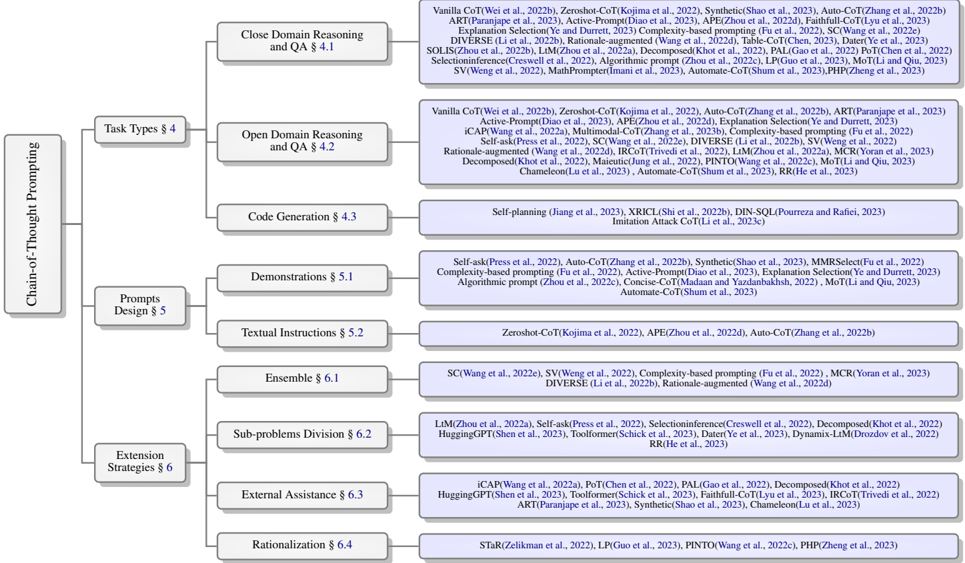

The flowchart illustrates a hierarchical framework for Chain-of-Thought (CoT) prompting, categorizing techniques into three main sections: **Chain-of-Thought Prompting**, **Prompts Design**, and **Extension Strategies**. Each section contains subcategories with specific methods and associated research references.

### Components

- **Main Categories**:

  1. **Chain-of-Thought Prompting**

  2. **Prompts Design**

  3. **Extension Strategies**

- **Subcategories**:

  - **Task Types (§4)**, under Chain-of-Thought Prompting:

    - Close Domain Reasoning and QA (§4.1)

    - Open Domain Reasoning and QA (§4.2)

    - Code Generation (§4.3)

  - **Prompts Design (§5)**:

    - Demonstrations (§5.1)

    - Textual Instructions (§5.2)

  - **Extension Strategies (§6)**:

    - Sub-problems Division (§6.2)

    - External Assistance (§6.3)

    - Rationalization (§6.4)

### Detailed Analysis

#### Chain-of-Thought Prompting

- **Task Types (§4)**:

  - **Close Domain Reasoning and QA (§4.1)**:

    - References: Vanilla CoT (Wei et al., 2022b), Zero-shot-CoT (Kojima et al., 2022), Synthetic (Shao et al., 2023), Auto-CoT (Zhang et al., 2022b), ART (Paranjape et al., 2023), Explanation Selection (Ye et al., 2023), DIVERSE (Li et al., 2022b), Rationale-augmented (Wang et al., 2022d), SOLIS (Zhou et al., 2022b), Selection-Inference (Creswell et al., 2022), Algorithmic prompt (Zhou et al., 2022c), LP (Guo et al., 2023), MoT (Li and Qiu, 2023), SV (Weng et al., 2022), MathPrompter (Imani et al., 2023), Automate-CoT (Shum et al., 2023), PHP (Zheng et al., 2023).

  - **Open Domain Reasoning and QA (§4.2)**:

    - References: Vanilla CoT (Wei et al., 2022b), Zero-shot-CoT (Kojima et al., 2022), Auto-CoT (Zhang et al., 2022b), ART (Paranjape et al., 2023), Active-Prompt (Diao et al., 2023), APE (Zhou et al., 2022d), iCAP (Wang et al., 2022a), Multimodal-CoT (Zhang et al., 2023b), Complexity-based prompting (Fu et al., 2022), Self-ask (Press et al., 2022), SC (Wang et al., 2022e), DIVERSE (Li et al., 2022b), SV (Weng et al., 2022), Rationale-augmented (Wang et al., 2022d), LLM (Zhou et al., 2022d), IRCoT (Trivedi et al., 2022), Decomposed (Khot et al., 2022), Maieutic (Jung et al., 2022), PINTO (Wang et al., 2022c), MCR (Yoran et al., 2023), Chameleon (Lu et al., 2023), Automate-CoT (Shum et al., 2023), RR (He et al., 2023).

  - **Code Generation (§4.3)**:

    - References: Self-planning (Jiang et al., 2023), XRICL (Shi et al., 2022b), DIN-SQL (Pourreza and Rafiei, 2023), Imitation Attack CoT (Li et al., 2023c).
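SC in the open-domain list refers to self-consistency (Wang et al., 2022e): several reasoning chains are sampled for the same question, and the most frequent final answer wins. The following is a minimal Python sketch of the voting step only. It assumes each chain ends with a sentence of the form "The answer is X." (the format of the Vanilla CoT exemplars); sampling from a model is out of scope, so the chains are hard-coded, and `extract_answer` and `self_consistency` are hypothetical names.

```python
import re
from collections import Counter
from typing import Optional

# Assumed answer format: each sampled chain ends with "The answer is X."
ANSWER_PATTERN = re.compile(r"The answer is\s+(.+?)\.?\s*$", re.IGNORECASE)


def extract_answer(chain: str) -> Optional[str]:
    """Return the final answer stated at the end of a reasoning chain, if any."""
    match = ANSWER_PATTERN.search(chain.strip())
    return match.group(1).strip() if match else None


def self_consistency(chains: list[str]) -> Optional[str]:
    """Majority vote over the final answers of independently sampled chains."""
    answers = [a for a in (extract_answer(c) for c in chains) if a is not None]
    if not answers:
        return None
    # Counter.most_common orders equal counts by first occurrence
    return Counter(answers).most_common(1)[0][0]


sampled_chains = [
    "Roger starts with 5 balls. 2 cans of 3 balls is 6 balls. 5 + 6 = 11. The answer is 11.",
    "He buys 2 * 3 = 6 balls, so he has 5 + 6 = 11. The answer is 11.",
    "5 + 3 = 8. The answer is 8.",  # a faulty chain that the vote outweighs
]
print(self_consistency(sampled_chains))  # 11
```

Voting on the extracted answer rather than on the full text lets chains that reason differently but agree on the result reinforce each other.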

#### Prompts Design (§5)

- **Demonstrations (§5.1)**:

  - References: Self-ask (Press et al., 2022), Auto-CoT (Zhang et al., 2022b), Synthetic (Shao et al., 2023), MMRSelect (Fu et al., 2022), Complexity-based prompting (Fu et al., 2022), Active-Prompt (Diao et al., 2023), Explanation Selection (Ye et al., 2023), Algorithmic prompt (Zhou et al., 2022c), Concise-CoT (Madaan and Yazdanbakhsh, 2022), MoT (Li and Qiu, 2023), Automate-CoT (Shum et al., 2023).

- **Textual Instructions (§5.2)**:

  - References: Zero-shot-CoT (Kojima et al., 2022), APE (Zhou et al., 2022d), Auto-CoT (Zhang et al., 2022b).
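The two prompt-design families differ in where the reasoning cue comes from. Demonstrations prepend worked question/rationale/answer exemplars, while a textual instruction such as Zero-shot-CoT appends the trigger "Let's think step by step." and, in Kojima et al.'s two-stage setup, follows it with an answer-extraction prompt. The following minimal Python sketch only builds the prompt strings and calls no model; the `Exemplar` type, the helper names, and the exact "Q:/A:" layout are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Exemplar:
    """One worked demonstration: question, step-by-step rationale, final answer."""
    question: str
    rationale: str
    answer: str


def few_shot_cot_prompt(exemplars: list[Exemplar], question: str) -> str:
    """Demonstration-based prompt: worked exemplars followed by the new question."""
    shots = [
        f"Q: {ex.question}\nA: {ex.rationale} The answer is {ex.answer}."
        for ex in exemplars
    ]
    return "\n\n".join(shots + [f"Q: {question}\nA:"])


ZERO_SHOT_TRIGGER = "Let's think step by step."


def zero_shot_cot_prompt(question: str) -> str:
    """Instruction-based prompt (stage 1): no exemplars, only a reasoning trigger."""
    return f"Q: {question}\nA: {ZERO_SHOT_TRIGGER}"


def answer_extraction_prompt(question: str, generated_reasoning: str) -> str:
    """Stage 2: feed the model's reasoning back and ask for the final answer only."""
    # Extraction phrase for arithmetic questions; exact wording is an assumption here.
    return (
        f"{zero_shot_cot_prompt(question)} {generated_reasoning}\n"
        "Therefore, the answer (arabic numerals) is"
    )


demo = Exemplar(
    question="Roger has 5 tennis balls. He buys 2 cans of 3 balls each. How many does he have now?",
    rationale="He buys 2 * 3 = 6 balls, so he has 5 + 6 = 11.",
    answer="11",
)
print(few_shot_cot_prompt([demo], "A baker has 4 trays of 6 rolls. How many rolls?"))
print(zero_shot_cot_prompt("A baker has 4 trays of 6 rolls. How many rolls?"))
```

Demonstration-selection methods in §5.1 (e.g., Auto-CoT, Complexity-based prompting) change which `Exemplar` objects are passed in, not how the prompt is assembled.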

#### Extension Strategies (§6)

- **Sub-problems Division (§6.2)**:

  - References: LLM (Zhou et al., 2022a), Self-ask (Press et al., 2022), Selection-Inference (Creswell et al., 2022), Decomposed (Khot et al., 2022), HuggingGPT (Shen et al., 2023), Toolformer (Schick et al., 2023), Dynamix-LLM (Drozdov et al., 2022), RR (He et al., 2023).

- **External Assistance (§6.3)**:

  - References: iCAP (Wang et al., 2022a), PoT (Chen et al., 2022), PAL (Gao et al., 2022), Decomposed (Khot et al., 2022), HuggingGPT (Shen et al., 2023), Toolformer (Schick et al., 2023), Faithful-CoT (Lyu et al., 2023), IRCoT (Trivedi et al., 2022), ART (Paranjape et al., 2023), Synthetic (Shao et al., 2023), Chameleon (Lu et al., 2023).

- **Rationalization (§6.4)**:

  - References: STaR (Zelikman et al., 2022), LP (Guo et al., 2023), PINTO (Wang et al., 2022c), PHP (Zheng et al., 2023).
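PoT (Chen et al., 2022) and PAL (Gao et al., 2022), both listed under External Assistance, offload computation to an interpreter: the model writes a short Python program as its reasoning, and running that program produces the answer. The following is a minimal sketch of the execution side only. It assumes the generated program stores its result in a variable named `answer` (a convention chosen for this sketch, not either paper's exact format); the "generated" program is hard-coded, and `run_generated_program` is a hypothetical helper.

```python
def run_generated_program(program: str, result_var: str = "answer") -> object:
    """Execute model-written Python and return the value bound to `result_var`.

    Illustrative only: exec() runs arbitrary code, so real systems would sandbox it.
    """
    # Fresh namespace, so the program does not see this module's globals
    namespace: dict[str, object] = {}
    exec(program, namespace)
    if result_var not in namespace:
        raise KeyError(f"generated program did not define {result_var!r}")
    return namespace[result_var]


# Stand-in for a program a model might emit for:
# "Roger has 5 tennis balls. He buys 2 cans of 3 balls each. How many does he have now?"
generated = """
initial_balls = 5
cans = 2
balls_per_can = 3
answer = initial_balls + cans * balls_per_can
"""
print(run_generated_program(generated))  # 11
```

The arithmetic is done by the interpreter rather than by the model's token predictions, which is the motivation for this family of external-assistance methods.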

### Key Observations

1. **Research Focus**: Most references fall under the two reasoning-and-QA nodes (17 entries for closed-domain and 23 for open-domain), which mix **CoT variants** (e.g., Auto-CoT, Zero-shot-CoT) with **reasoning enhancements** (e.g., DIVERSE, Rationale-augmented).

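2. **Code Generation**: Only four references appear (Self-planning, XRICL, DIN-SQL, Imitation Attack CoT), the fewest of any task type, suggesting that code generation was a comparatively niche application of CoT prompting.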

3. **External Assistance**: Dominated by tool integration (e.g., HuggingGPT, Toolformer, Chameleon) and program execution (e.g., PoT, PAL, Faithful-CoT), with retrieval represented by IRCoT.

4. **Temporal Spread**: References span 2022–2023, indicating rapid development in this area.
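To check how references are distributed across the figure, its leaf nodes can be encoded as a plain mapping from node to method labels. The following is a minimal Python sketch; the dictionary is a hand transcription of the reference lists in this section (citations dropped, hyphenation normalized), and `node_sizes` and `shared_methods` are hypothetical helper names.

```python
from collections import Counter

# Leaf nodes of the figure mapped to the method labels listed under them.
TAXONOMY: dict[str, list[str]] = {
    "4.1 Close Domain Reasoning and QA": [
        "Vanilla CoT", "Zero-shot-CoT", "Synthetic", "Auto-CoT", "ART",
        "Explanation Selection", "DIVERSE", "Rationale-augmented", "SOLIS",
        "Selection-Inference", "Algorithmic prompt", "LP", "MoT", "SV",
        "MathPrompter", "Automate-CoT", "PHP",
    ],
    "4.2 Open Domain Reasoning and QA": [
        "Vanilla CoT", "Zero-shot-CoT", "Auto-CoT", "ART", "Active-Prompt",
        "APE", "iCAP", "Multimodal-CoT", "Complexity-based prompting",
        "Self-ask", "SC", "DIVERSE", "SV", "Rationale-augmented", "LLM",
        "IRCoT", "Decomposed", "Maieutic", "PINTO", "MCR", "Chameleon",
        "Automate-CoT", "RR",
    ],
    "4.3 Code Generation": [
        "Self-planning", "XRICL", "DIN-SQL", "Imitation Attack CoT",
    ],
    "5.1 Demonstrations": [
        "Self-ask", "Auto-CoT", "Synthetic", "MMRSelect",
        "Complexity-based prompting", "Active-Prompt", "Explanation Selection",
        "Algorithmic prompt", "Concise-CoT", "MoT", "Automate-CoT",
    ],
    "5.2 Textual Instructions": ["Zero-shot-CoT", "APE", "Auto-CoT"],
    "6.2 Sub-problems Division": [
        "LLM", "Self-ask", "Selection-Inference", "Decomposed", "HuggingGPT",
        "Toolformer", "Dynamix-LLM", "RR",
    ],
    "6.3 External Assistance": [
        "iCAP", "PoT", "PAL", "Decomposed", "HuggingGPT", "Toolformer",
        "Faithful-CoT", "IRCoT", "ART", "Synthetic", "Chameleon",
    ],
    "6.4 Rationalization": ["STaR", "LP", "PINTO", "PHP"],
}


def node_sizes(taxonomy: dict[str, list[str]]) -> dict[str, int]:
    """Number of referenced methods under each leaf node."""
    return {node: len(methods) for node, methods in taxonomy.items()}


def shared_methods(taxonomy: dict[str, list[str]], min_nodes: int = 2) -> dict[str, int]:
    """Methods that appear under at least `min_nodes` distinct leaf nodes."""
    # set() guards against a label being listed twice within one node
    counts = Counter(m for methods in taxonomy.values() for m in set(methods))
    return {m: c for m, c in counts.items() if c >= min_nodes}


if __name__ == "__main__":
    for node, size in sorted(node_sizes(TAXONOMY).items(), key=lambda kv: -kv[1]):
        print(f"{size:3d}  {node}")
    print(shared_methods(TAXONOMY, min_nodes=3))
```

Sorting by size puts the open-domain node first (23 labels) and the closed-domain node second (17); with `min_nodes=3`, Auto-CoT is the only label shared by four nodes.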

### Interpretation

The flowchart organizes CoT prompting strategies into a taxonomy that covers **task-specific adaptations** (e.g., code generation) and **scalability solutions** (e.g., decomposition, external tools). Reasoning-augmentation methods such as DIVERSE and Rationale-augmented appear under both the closed- and open-domain nodes, reflecting efforts to make model reasoning more robust. The inclusion of **automation frameworks** (e.g., Auto-CoT, APE, Automate-CoT) and **multimodal approaches** (Multimodal-CoT) reflects a trend toward practical deployment. Human effort is reduced rather than eliminated: several methods construct or select prompts automatically, while Active-Prompt still relies on human annotation of the questions it selects. This structure gives researchers a roadmap for identifying gaps (e.g., the sparsely populated Code Generation node) and prioritizing future work.