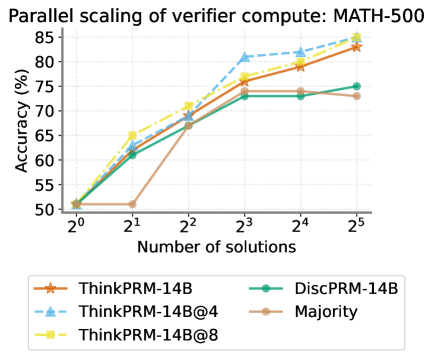

## Line Chart: Parallel scaling of verifier compute: MATH-500

### Overview

This image is a line chart titled "Parallel scaling of verifier compute: MATH-500". It plots the accuracy percentage of five different models or methods against an increasing number of solutions, presented on a logarithmic scale (base 2). The chart demonstrates how the performance of these verifiers scales as more parallel solutions are considered.

### Components/Axes

* **Title:** "Parallel scaling of verifier compute: MATH-500" (Top center).

* **Y-Axis:** Labeled "Accuracy (%)". The scale runs from 50 to 85, with major tick marks every 5 units (50, 55, 60, 65, 70, 75, 80, 85).

* **X-Axis:** Labeled "Number of solutions". The scale is logarithmic with base 2, showing tick marks at 2⁰ (1), 2¹ (2), 2² (4), 2³ (8), 2⁴ (16), and 2⁵ (32).

* **Legend:** Positioned at the bottom of the chart, centered. It contains five entries, each with a colored line, marker symbol, and label:
    1. **ThinkPRM-14B:** Orange line with star (★) markers.
    2. **DiscPRM-14B:** Teal/green line with circle (●) markers.
    3. **ThinkPRM-14B@4:** Light blue dashed line with triangle (▲) markers.
    4. **ThinkPRM-14B@8:** Yellow line with square (■) markers.
    5. **Majority:** Brown/tan line with circle (●) markers.

### Detailed Analysis

The chart tracks five data series. All series begin at the same point (1 solution, 50% accuracy). Below is the approximate data extracted for each series, with trends noted.

**1. ThinkPRM-14B (Orange, ★)**

* **Trend:** Consistent, strong upward slope.

* **Data Points (Approx.):**
    * 2⁰ (1): 50%
    * 2¹ (2): ~61%
    * 2² (4): ~68%
    * 2³ (8): ~75%
    * 2⁴ (16): ~79%
    * 2⁵ (32): ~83%

**2. DiscPRM-14B (Teal, ●)**

* **Trend:** Increases through 8 solutions, stalls at ~73% between 8 and 16, then edges up to ~75% at 32.

* **Data Points (Approx.):**
    * 2⁰ (1): 50%
    * 2¹ (2): ~61%
    * 2² (4): ~67%
    * 2³ (8): ~73%
    * 2⁴ (16): ~73% (plateau)
    * 2⁵ (32): ~75%

**3. ThinkPRM-14B@4 (Light Blue, ▲, Dashed Line)**

* **Trend:** Steep rise through 8 solutions (~81%), then slower gains; one of the top performers.

* **Data Points (Approx.):**
    * 2⁰ (1): 50%
    * 2¹ (2): ~65%
    * 2² (4): ~71%
    * 2³ (8): ~81%
    * 2⁴ (16): ~82%
    * 2⁵ (32): ~84%

**4. ThinkPRM-14B@8 (Yellow, ■)**

* **Trend:** Strong upward slope, ends as the highest-performing series.

* **Data Points (Approx.):**
    * 2⁰ (1): 50%
    * 2¹ (2): ~65%
    * 2² (4): ~71%
    * 2³ (8): ~77%
    * 2⁴ (16): ~80%
    * 2⁵ (32): ~85%

**5. Majority (Brown, ●)**

* **Trend:** No gain from 1 to 2 solutions, rises through 8 solutions, then stays flat at ~73%.

* **Data Points (Approx.):**
    * 2⁰ (1): 50%
    * 2¹ (2): 50% (no initial gain)
    * 2² (4): ~67%
    * 2³ (8): ~73%
    * 2⁴ (16): ~73% (plateau)
    * 2⁵ (32): ~73% (plateau)

### Key Observations

1. **Universal Starting Point:** All methods start at 50% accuracy with a single solution, which is the baseline.

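2. **Clear Performance Tiers:** At the maximum measured point (32 solutions), the methods separate into tiers. The three ThinkPRM variants sit within about 2 points of each other, so their relative order is close to the reading error of the chart, while the gap to the lower tier is large:
    * **Top Tier:** ThinkPRM-14B@8 (~85%) and ThinkPRM-14B@4 (~84%).
    * **Middle Tier:** ThinkPRM-14B (~83%).
    * **Lower Tier:** DiscPRM-14B (~75%) and Majority (~73%).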

3. **Scaling Behavior:** All three "ThinkPRM" variants keep improving across the entire range of solutions. In contrast, "Majority" is flat at ~73% from 8 solutions (2³) onward, and "DiscPRM-14B" stalls between 8 and 16 solutions and gains only ~2 points by 32.

4. **Initial Jump:** The "@4" and "@8" variants of ThinkPRM gain ~15 points from 1 to 2 solutions (50% to ~65%), compared with ~11 points (50% to ~61%) for the base ThinkPRM-14B.

5. **Majority Baseline:** The "Majority" method, likely a simple voting baseline, gains nothing from 1 to 2 solutions, which is expected because two votes can only agree or tie. At 32 solutions it is outperformed by all neural verifier models.
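The figures quoted in these observations can be re-derived from the approximate readings listed under Detailed Analysis. The following is a minimal sketch: the values are visual estimates from the chart rather than exact data, and the names `ACCURACY`, `gain`, and `summary` are illustrative only.

```python
# Approximate accuracy values (%) read off the chart; visual estimates, not exact data.
N_SOLUTIONS = [1, 2, 4, 8, 16, 32]
ACCURACY = {
    "ThinkPRM-14B":   [50, 61, 68, 75, 79, 83],
    "DiscPRM-14B":    [50, 61, 67, 73, 73, 75],
    "ThinkPRM-14B@4": [50, 65, 71, 81, 82, 84],
    "ThinkPRM-14B@8": [50, 65, 71, 77, 80, 85],
    "Majority":       [50, 50, 67, 73, 73, 73],
}


def gain(series, start_n, end_n):
    """Accuracy change in percentage points between two solution counts."""
    return series[N_SOLUTIONS.index(end_n)] - series[N_SOLUTIONS.index(start_n)]


# Per-method summary: jump from 1 to 2 solutions, gain from 8 to 32, final accuracy.
summary = {
    name: {
        "initial_jump": gain(acc, 1, 2),
        "late_gain": gain(acc, 8, 32),
        "final": acc[-1],
    }
    for name, acc in ACCURACY.items()
}

# Methods ordered by accuracy at 32 solutions, best first.
ranking = sorted(summary, key=lambda name: summary[name]["final"], reverse=True)

for name in ranking:
    s = summary[name]
    print(f"{name:15s} final={s['final']}%  1->2: +{s['initial_jump']}  8->32: +{s['late_gain']}")
```

The `late_gain` column makes the plateau visible: it is 0 for Majority and 2 for DiscPRM-14B, against 3 to 8 points for the ThinkPRM variants.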

### Interpretation

This chart provides a performance benchmark for different AI verification strategies on the MATH-500 dataset, specifically measuring how accuracy improves when the system is allowed to generate and evaluate multiple solution candidates in parallel.

The data suggests that the **ThinkPRM architecture scales more effectively with increased parallel compute** than the DiscPRM architecture or a simple majority vote. The continuous upward trend of the ThinkPRM lines indicates that its method for verifying or ranking solutions continues to extract useful signal even from a large pool of 32 candidates.

The plateau of the **Majority and DiscPRM methods** suggests a limit to their approach. For Majority, this is intuitive: once the most frequent answer has stabilized, additional samples from the same generator rarely change the consensus. For DiscPRM, it may indicate that its discrimination capability saturates, so it cannot reliably separate correct from incorrect solutions in a larger, noisier candidate set.
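To make the contrast between voting and verifier-based selection concrete, here is a minimal sketch of the two strategies. It assumes the verifiers are used to pick the single highest-scoring candidate (best-of-N), which the chart itself does not state; the function names, answers, and scores are hypothetical.

```python
from collections import Counter


def majority_vote(answers):
    """Return the most frequent final answer (ties go to the answer seen first)."""
    return Counter(answers).most_common(1)[0][0]


def best_of_n(answers, scores):
    """Return the final answer of the single highest-scoring solution."""
    best_index = max(range(len(answers)), key=lambda i: scores[i])
    return answers[best_index]


# Hypothetical example: final answers of 5 sampled solutions to one problem,
# with made-up verifier scores in [0, 1]. Suppose the correct answer is "12".
answers = ["12", "15", "12", "15", "15"]
scores = [0.91, 0.20, 0.85, 0.35, 0.30]

voted = majority_vote(answers)        # "15": the most common answer wins
selected = best_of_n(answers, scores) # "12": the top-scored solution wins

# With two disagreeing candidates the vote is a tie and falls back to the first one.
two_way = majority_vote(["12", "15"])

print(voted, selected, two_way)
```

In this toy case the vote follows the generator's most common mistake, while the verifier can recover a minority answer if it scores that answer highly. The two-candidate tie is also consistent with the flat Majority segment between 1 and 2 solutions.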

The higher accuracy of **ThinkPRM-14B@8 and @4** relative to the base ThinkPRM-14B suggests that techniques like increased sampling (@8 likely means 8 samples per problem) or other ensemble methods provide a boost. The boost is most visible at small solution counts (~65% versus ~61% at 2 solutions); by 32 solutions the gap narrows to 1 to 2 points. Because the x-axis counts solutions rather than total verifier compute, the chart alone does not show whether these variants are more compute-efficient. Overall, the chart supports investing in verifier models that are designed to leverage parallelism, as the ThinkPRM accuracy gains are substantial and sustained.