## Bar Chart: Ablation study of meta-buffer

### Overview

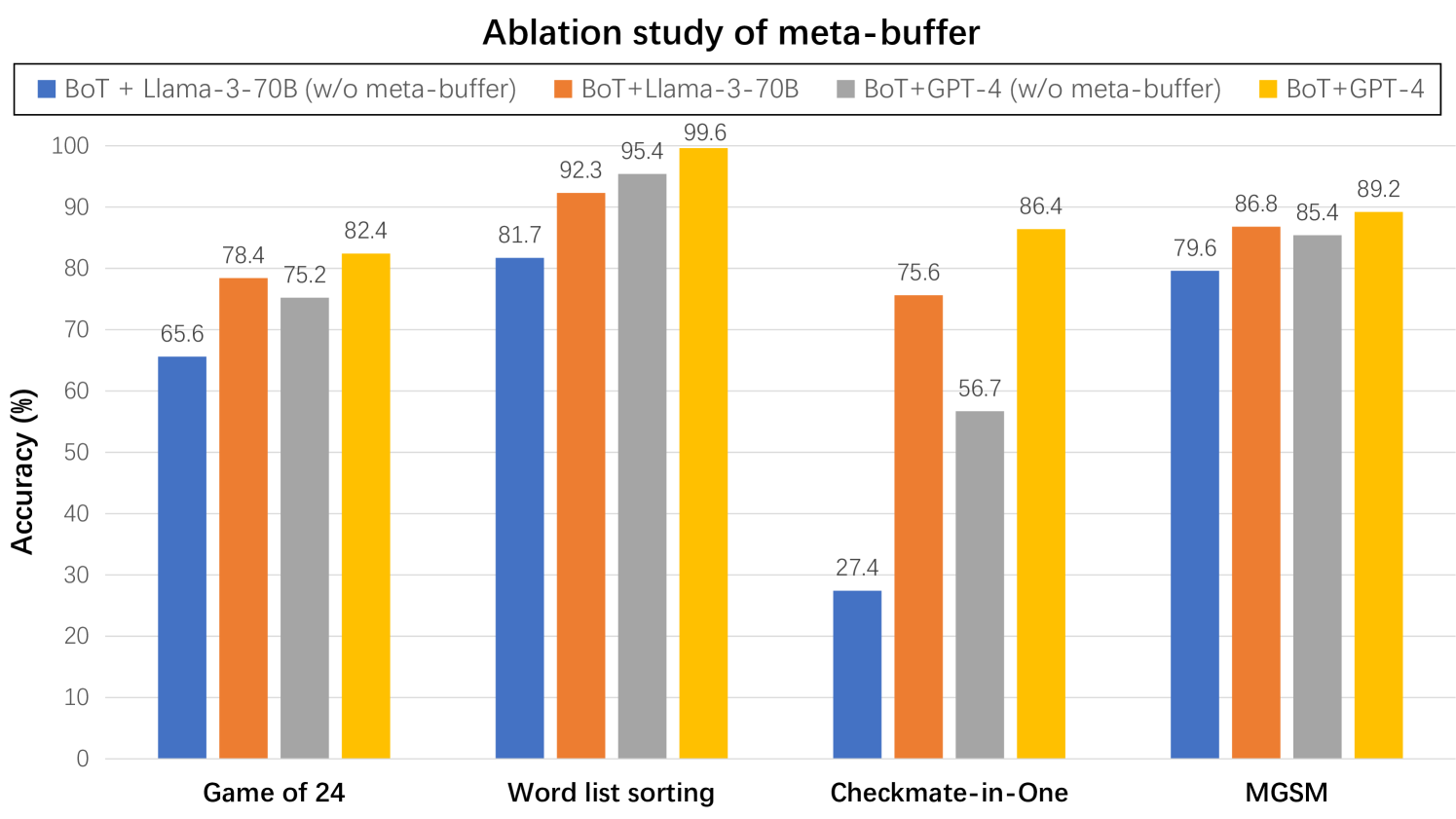

This is a grouped bar chart titled "Ablation study of meta-buffer." It compares the performance (accuracy in %) of four different model configurations across four distinct tasks. The chart is designed to show the impact of including or excluding a "meta-buffer" component when using two base models (Llama-3-70B and GPT-4) within a framework called "BoT".

### Components/Axes

* **Title:** "Ablation study of meta-buffer" (centered at the top).

* **Legend:** Positioned at the top center, below the title. It defines four data series:
    * **Blue Square:** BoT + Llama-3-70B (w/o meta-buffer)
    * **Orange Square:** BoT+Llama-3-70B
    * **Gray Square:** BoT+GPT-4 (w/o meta-buffer)
    * **Yellow Square:** BoT+GPT-4

* **Y-Axis:** Labeled "Accuracy (%)". The scale runs from 0 to 100 with major tick marks every 10 units (0, 10, 20, ..., 100).

* **X-Axis:** Represents four different tasks. The category labels are:
    1. Game of 24
    2. Word list sorting
    3. Checkmate-in-One
    4. MGSM

### Detailed Analysis

The chart presents accuracy percentages for each model configuration on each task. The data is as follows:

| Task | BoT + Llama-3-70B (w/o meta-buffer) [Blue] | BoT+Llama-3-70B [Orange] | BoT+GPT-4 (w/o meta-buffer) [Gray] | BoT+GPT-4 [Yellow] |
| :--- | :---: | :---: | :---: | :---: |
| **Game of 24** | 65.6 | 78.4 | 75.2 | 82.4 |
| **Word list sorting** | 81.7 | 92.3 | 95.4 | 99.6 |
| **Checkmate-in-One** | 27.4 | 75.6 | 56.7 | 86.4 |
| **MGSM** | 79.6 | 86.8 | 85.4 | 89.2 |
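The effect of the meta-buffer can be read off the table by subtracting each "w/o meta-buffer" column from its counterpart. The following is a minimal sketch of that arithmetic; the names `ACCURACY`, `meta_buffer_gains`, and `GAINS` are illustrative and do not come from the chart or its source.

```python
# Accuracy (%) transcribed from the table above.
# Tuple order: (Llama-3-70B w/o meta-buffer, Llama-3-70B,
#               GPT-4 w/o meta-buffer, GPT-4).
ACCURACY = {
    "Game of 24": (65.6, 78.4, 75.2, 82.4),
    "Word list sorting": (81.7, 92.3, 95.4, 99.6),
    "Checkmate-in-One": (27.4, 75.6, 56.7, 86.4),
    "MGSM": (79.6, 86.8, 85.4, 89.2),
}


def meta_buffer_gains(accuracy):
    """Return {task: (llama_gain, gpt4_gain)} in percentage points."""
    gains = {}
    for task, (llama_wo, llama, gpt4_wo, gpt4) in accuracy.items():
        # Round to one decimal to match the precision of the chart labels.
        gains[task] = (round(llama - llama_wo, 1), round(gpt4 - gpt4_wo, 1))
    return gains


GAINS = meta_buffer_gains(ACCURACY)
for task, (llama_gain, gpt4_gain) in GAINS.items():
    print(f"{task}: Llama-3-70B +{llama_gain}, GPT-4 +{gpt4_gain}")
```

The gains are absolute differences in percentage points, not relative improvements.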

**Trend Verification per Data Series:**

* **Blue Bars (BoT + Llama-3-70B w/o meta-buffer):** Performance varies significantly by task. It is lowest on "Checkmate-in-One" (27.4%) and highest on "Word list sorting" (81.7%).

* **Orange Bars (BoT+Llama-3-70B):** Shows higher accuracy than its blue counterpart (without meta-buffer) on all four tasks. The improvement is largest for "Checkmate-in-One" (27.4% to 75.6%).

* **Gray Bars (BoT+GPT-4 w/o meta-buffer):** Scores between 75.2% and 95.4% on three tasks but drops to 56.7% on "Checkmate-in-One".

* **Yellow Bars (BoT+GPT-4):** Achieves the highest accuracy of the four configurations on every task (82.4% to 99.6%). It exceeds the gray bars (its counterpart without meta-buffer) by 3.8 to 29.7 percentage points, depending on the task.
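The per-series trends above can be checked mechanically against the table values. This is a small sketch of such a check; the variable names are hypothetical, and the data is transcribed from the table rather than taken from any released code.

```python
# Accuracy (%) per task, transcribed from the table.
# Column order follows the legend: blue, orange, gray, yellow.
ACCURACY = {
    "Game of 24": (65.6, 78.4, 75.2, 82.4),
    "Word list sorting": (81.7, 92.3, 95.4, 99.6),
    "Checkmate-in-One": (27.4, 75.6, 56.7, 86.4),
    "MGSM": (79.6, 86.8, 85.4, 89.2),
}


def column(index):
    """Collect one data series (one bar color) across all tasks."""
    return [row[index] for row in ACCURACY.values()]


blue, orange, gray, yellow = (column(i) for i in range(4))

# With-meta-buffer bars versus their without-meta-buffer counterparts.
orange_beats_blue = all(o > b for o, b in zip(orange, blue))
yellow_beats_gray = all(y > g for y, g in zip(yellow, gray))

# Yellow is the tallest bar in every task group.
yellow_is_best = all(max(row) == row[3] for row in ACCURACY.values())

# Tasks where the blue series reaches its minimum and maximum.
blue_lowest_task = min(ACCURACY, key=lambda task: ACCURACY[task][0])
blue_highest_task = max(ACCURACY, key=lambda task: ACCURACY[task][0])

print(orange_beats_blue, yellow_beats_gray, yellow_is_best)
print(blue_lowest_task, blue_highest_task)
```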

### Key Observations

1. **Universal Benefit of Meta-Buffer:** For both base models (Llama-3-70B and GPT-4), the configuration *with* the meta-buffer (orange and yellow) always outperforms the configuration *without* it (blue and gray) on the same task.

2. **Task-Dependent Impact:** The performance gain from adding the meta-buffer is not uniform. It is most pronounced on the "Checkmate-in-One" task, where the Llama-3-70B configuration sees a 48.2 percentage point increase (27.4% to 75.6%), and the GPT-4 configuration sees a 29.7 point increase (56.7% to 86.4%).

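3. **Model Comparison:** With the meta-buffer setting held fixed, the GPT-4 based configurations (gray and yellow) outperform the Llama-3-70B based configurations (blue and orange) on every task. Across settings, however, BoT+Llama-3-70B (orange) beats BoT+GPT-4 (w/o meta-buffer) (gray) on three of the four tasks: "Game of 24" (78.4% vs. 75.2%), "Checkmate-in-One" (75.6% vs. 56.7%), and "MGSM" (86.8% vs. 85.4%). "Word list sorting" is the only task where the gray bar stays ahead (95.4% vs. 92.3%).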

4. **Highest and Lowest Scores:** The highest accuracy recorded is 99.6% (BoT+GPT-4 on Word list sorting). The lowest is 27.4% (BoT + Llama-3-70B w/o meta-buffer on Checkmate-in-One).
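The model comparison and the extreme values in the list above can be derived from the table in a few lines. The sketch below uses illustrative names (`SERIES`, `best`, `worst`, and so on) that are assumptions of this example, not identifiers from the chart's source.

```python
# Series labels from the legend, in column order.
SERIES = (
    "BoT + Llama-3-70B (w/o meta-buffer)",
    "BoT+Llama-3-70B",
    "BoT+GPT-4 (w/o meta-buffer)",
    "BoT+GPT-4",
)

# Accuracy (%) transcribed from the table, one tuple per task.
ACCURACY = {
    "Game of 24": (65.6, 78.4, 75.2, 82.4),
    "Word list sorting": (81.7, 92.3, 95.4, 99.6),
    "Checkmate-in-One": (27.4, 75.6, 56.7, 86.4),
    "MGSM": (79.6, 86.8, 85.4, 89.2),
}

# Like-for-like: GPT-4 versus Llama-3-70B under the same meta-buffer setting.
gpt4_wins_like_for_like = all(
    gpt4_wo > llama_wo and gpt4 > llama
    for llama_wo, llama, gpt4_wo, gpt4 in ACCURACY.values()
)

# Cross-setting: tasks where Llama-3-70B with the meta-buffer
# beats GPT-4 without it.
llama_with_beats_gpt4_without = [
    task
    for task, (_, llama, gpt4_wo, _) in ACCURACY.items()
    if llama > gpt4_wo
]

# Flatten to (accuracy, task, series) records to find the extremes.
records = [
    (value, task, SERIES[i])
    for task, row in ACCURACY.items()
    for i, value in enumerate(row)
]
best = max(records, key=lambda record: record[0])
worst = min(records, key=lambda record: record[0])

print(gpt4_wins_like_for_like)
print(llama_with_beats_gpt4_without)
print(best)
print(worst)
```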

### Interpretation

This ablation study supports the efficacy of the "meta-buffer" component within the BoT framework: removing it lowers accuracy in all eight model-task pairs. The gain is largest on "Checkmate-in-One" (a chess puzzle), followed by "Game of 24" (a mathematical puzzle) at 12.8 points for Llama-3-70B and 7.2 points for GPT-4. This pattern suggests that the meta-buffer helps most on tasks that likely require complex reasoning or multi-step planning.

The consistent superiority of the yellow bars (BoT+GPT-4) indicates that combining the stronger base model (GPT-4) with the meta-buffer yields the best results. The larger gains in the orange bars (BoT+Llama-3-70B) show that the meta-buffer can substantially lift the weaker base model, to the point that it overtakes GPT-4 without the meta-buffer on three of the four tasks. The near-ceiling performance on "Word list sorting" (99.6%) suggests this task may be less challenging for these models or that the meta-buffer is well suited to it. Since the chart covers only two base models and four tasks, it shows the meta-buffer to be an important component of BoT on these benchmarks rather than establishing its value in general.