
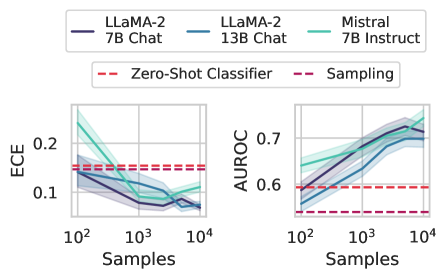

## Line Charts: Model Calibration (ECE) and Classification Performance (AUROC) vs. Training Samples

### Overview

The image displays two side-by-side line charts comparing the performance of three large language models (LLMs) as a function of the number of training samples used. The left chart measures Expected Calibration Error (ECE), and the right chart measures Area Under the Receiver Operating Characteristic curve (AUROC). Both charts include baseline performance lines for a "Zero-Shot Classifier" and a "Sampling" method.
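For readers unfamiliar with the two metrics, the following is a minimal sketch of how ECE and AUROC are commonly computed for a binary correct/incorrect task. The function names, the choice of 10 equal-width bins, and the pairwise AUROC formulation are assumptions made for illustration; the figure does not state the binning scheme or evaluation code actually used.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Binned ECE: sample-weighted mean of |accuracy - mean confidence| per bin.

    confidences: predicted probabilities in [0, 1] that the answer is correct.
    correct:     1 if the answer was actually correct, else 0.
    """
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(confidences, correct):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls in the last bin
        bins[idx].append((p, y))

    ece = 0.0
    for members in bins:
        if not members:
            continue  # empty bins contribute nothing
        mean_conf = sum(p for p, _ in members) / len(members)
        accuracy = sum(y for _, y in members) / len(members)
        ece += (len(members) / n) * abs(accuracy - mean_conf)
    return ece


def auroc(scores, labels):
    """Probability that a random positive outscores a random negative.

    Ties count as half a win. This O(P * N) pairwise form is chosen for
    clarity, not speed.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy inputs (hypothetical, not taken from the figure).
toy_conf = [0.95, 0.85, 0.75, 0.65]
toy_correct = [1, 1, 0, 1]
print("ECE:", expected_calibration_error(toy_conf, toy_correct))
print("AUROC:", auroc(toy_conf, toy_correct))
```

Lower ECE means the stated confidences track the observed accuracy more closely, while higher AUROC means the scores separate correct from incorrect answers better; the two can move independently.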

### Components/Axes

* **Legend (Top Center):** A shared legend identifies three model series:

    * **LLaMA-2 7B Chat:** Dark purple solid line.

    * **LLaMA-2 13B Chat:** Blue solid line.

    * **Mistral 7B Instruct:** Teal solid line.

* **Baseline Legend (Below Main Legend):** Identifies two horizontal dashed lines:

    * **Zero-Shot Classifier:** Red dashed line.

    * **Sampling:** Purple dashed line.

* **Left Chart (ECE):**

    * **Y-axis:** Label "ECE". Scale ranges from approximately 0.05 to 0.25. Major ticks at 0.1 and 0.2.

    * **X-axis:** Label "Samples". Logarithmic scale with major ticks at 10², 10³, and 10⁴.

* **Right Chart (AUROC):**

    * **Y-axis:** Label "AUROC". Scale ranges from approximately 0.55 to 0.75. Major ticks at 0.6 and 0.7.

    * **X-axis:** Label "Samples". Identical logarithmic scale to the left chart (10², 10³, 10⁴).

* **Data Series:** Each model series is plotted with a shaded region around the central line, indicating confidence intervals or variance.

### Detailed Analysis

**Left Chart - ECE (Lower is Better):**

* **Trend Verification:** All three model lines show a clear downward trend as the number of samples increases, indicating improved calibration (lower error).

* **Data Points (Approximate):**

    * **LLaMA-2 7B Chat (Dark Purple):** Starts at ~0.15 (10² samples), decreases to ~0.10 (10³ samples), and ends at ~0.08 (10⁴ samples).

    * **LLaMA-2 13B Chat (Blue):** Starts at ~0.14 (10² samples), decreases to ~0.09 (10³ samples), and ends at ~0.07 (10⁴ samples).

    * **Mistral 7B Instruct (Teal):** Starts highest at ~0.22 (10² samples), decreases sharply to ~0.12 (10³ samples), and ends at ~0.09 (10⁴ samples). It shows a slight upward bump between 10³ and 10⁴ samples.

* **Baselines (Horizontal Dashed Lines):**

    * **Zero-Shot Classifier (Red):** Constant at ~0.15.

    * **Sampling (Purple):** Constant at ~0.14.

* **Spatial Grounding:** The baselines are positioned in the upper half of the chart. All model lines start near or above these baselines at 10² samples and fall significantly below them by 10⁴ samples.

**Right Chart - AUROC (Higher is Better):**

* **Trend Verification:** All three model lines show a clear upward trend as the number of samples increases, indicating improved classification performance.

* **Data Points (Approximate):**

    * **LLaMA-2 7B Chat (Dark Purple):** Starts at ~0.60 (10² samples), increases to ~0.68 (10³ samples), and ends at ~0.72 (10⁴ samples).

    * **LLaMA-2 13B Chat (Blue):** Starts at ~0.58 (10² samples), increases to ~0.66 (10³ samples), and ends at ~0.70 (10⁴ samples).

    * **Mistral 7B Instruct (Teal):** Starts at ~0.64 (10² samples), increases to ~0.70 (10³ samples), and ends highest at ~0.74 (10⁴ samples).

* **Baselines (Horizontal Dashed Lines):**

    * **Zero-Shot Classifier (Red):** Constant at ~0.60.

    * **Sampling (Purple):** Constant at ~0.56.

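* **Spatial Grounding:** The baselines are positioned in the lower half of the chart. At 10² samples, LLaMA-2 7B Chat sits on the Zero-Shot baseline (~0.60), LLaMA-2 13B Chat starts slightly below it (~0.58), and Mistral 7B Instruct starts above it (~0.64). All three start above the Sampling baseline (~0.56) and end well above both baselines.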
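Because the x-axis is logarithmic, a rough estimate of where a series crosses a baseline can be obtained by interpolating linearly in log10(samples) between two of the approximate readings above. The sketch below does this; the helper name `crossing_samples` is hypothetical, the inputs are the approximate values listed in this description rather than exact data, and the straight-line shape between readings is an assumption.

```python
import math


def crossing_samples(x0, y0, x1, y1, baseline):
    """Estimate the sample count at which a segment crosses a baseline.

    The segment runs from (x0, y0) to (x1, y1) and is assumed to be a
    straight line on a log-x plot. Returns None when the segment is flat
    or the baseline does not lie between y0 and y1.
    """
    if y0 == y1 or not (min(y0, y1) <= baseline <= max(y0, y1)):
        return None
    frac = (y0 - baseline) / (y0 - y1)  # fraction of the way from x0 to x1
    log_x = math.log10(x0) + frac * (math.log10(x1) - math.log10(x0))
    return 10 ** log_x


# Mistral 7B Instruct ECE: ~0.22 at 10^2 and ~0.12 at 10^3 (approximate readings),
# compared with the Zero-Shot Classifier ECE baseline of ~0.15.
mistral_ece_cross = crossing_samples(1e2, 0.22, 1e3, 0.12, 0.15)

# LLaMA-2 13B Chat AUROC: ~0.58 at 10^2 and ~0.66 at 10^3 (approximate readings),
# compared with the Zero-Shot Classifier AUROC baseline of ~0.60.
llama13_auroc_cross = crossing_samples(1e2, 0.58, 1e3, 0.66, 0.60)

print(f"Mistral ECE crosses Zero-Shot near {mistral_ece_cross:.0f} samples")
print(f"LLaMA-2 13B AUROC crosses Zero-Shot near {llama13_auroc_cross:.0f} samples")
```

Under these assumptions, the Mistral 7B Instruct ECE curve would drop below the Zero-Shot baseline at roughly 500 samples. Since the inputs are eyeballed from the chart, such estimates are indicative at best.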

### Key Observations

1. **Inverse Relationship:** There is a clear inverse relationship between ECE and AUROC for all models; as performance (AUROC) improves with more data, calibration error (ECE) decreases.

2. **Model Comparison:** Mistral 7B Instruct starts with the worst calibration (highest ECE) but best initial performance (highest AUROC) at low samples (10²). By 10⁴ samples, LLaMA-2 13B Chat achieves the best calibration (lowest ECE), while Mistral achieves the best performance (highest AUROC).

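3. **Data Efficiency:** By 10³ samples, all three models beat both baselines on both metrics. At 10² samples the picture is mixed: Mistral 7B Instruct has a much higher ECE (~0.22) than either baseline, LLaMA-2 7B Chat roughly ties the "Zero-Shot Classifier" on both metrics, and LLaMA-2 13B Chat sits slightly below the Zero-Shot AUROC (~0.58 vs. ~0.60). All three models already exceed the "Sampling" AUROC (~0.56) at 10² samples.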

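4. **Convergence:** The gap between models narrows as the number of samples increases, particularly for ECE, where the spread shrinks from ~0.08 at 10² samples to ~0.02 at 10⁴ samples. For AUROC it shrinks only from ~0.06 to ~0.04.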
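These observations can be checked mechanically against the approximate readings in the Detailed Analysis. The sketch below hard-codes those readings (values read off the chart, not exact data) and reports, for each sample count, which models are strictly better than both baselines and how wide the spread between models is. The dictionary layout and function names are assumptions made for this illustration.

```python
SAMPLES = [100, 1_000, 10_000]

# Approximate readings from the chart at 10^2, 10^3, and 10^4 samples.
ECE = {
    "LLaMA-2 7B Chat": [0.15, 0.10, 0.08],
    "LLaMA-2 13B Chat": [0.14, 0.09, 0.07],
    "Mistral 7B Instruct": [0.22, 0.12, 0.09],
}
AUROC = {
    "LLaMA-2 7B Chat": [0.60, 0.68, 0.72],
    "LLaMA-2 13B Chat": [0.58, 0.66, 0.70],
    "Mistral 7B Instruct": [0.64, 0.70, 0.74],
}
ECE_BASELINES = {"Zero-Shot Classifier": 0.15, "Sampling": 0.14}
AUROC_BASELINES = {"Zero-Shot Classifier": 0.60, "Sampling": 0.56}


def beats_all_baselines(series, baselines, lower_is_better):
    """For each sample count, list the models strictly better than every baseline."""
    result = []
    for i in range(len(SAMPLES)):
        winners = []
        for model, values in series.items():
            if lower_is_better:
                ok = all(values[i] < b for b in baselines.values())
            else:
                ok = all(values[i] > b for b in baselines.values())
            if ok:
                winners.append(model)
        result.append(winners)
    return result


def spread(series):
    """Gap between the highest and lowest model value at each sample count."""
    columns = zip(*series.values())
    return [round(max(col) - min(col), 2) for col in columns]


ece_winners = beats_all_baselines(ECE, ECE_BASELINES, lower_is_better=True)
auroc_winners = beats_all_baselines(AUROC, AUROC_BASELINES, lower_is_better=False)

for n, e, a in zip(SAMPLES, ece_winners, auroc_winners):
    print(f"{n:>6} samples | ECE better than both baselines:   {e}")
    print(f"{'':>6}         | AUROC better than both baselines: {a}")

print("ECE spread per sample count:  ", spread(ECE))
print("AUROC spread per sample count:", spread(AUROC))
```

Strict inequalities are used, so a model that merely ties a baseline (as LLaMA-2 7B Chat does with the Zero-Shot ECE at 10² samples in these readings) is not counted as beating it.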

### Interpretation

The data indicate that the number of training samples strongly affects both the reliability (calibration) and the effectiveness (discriminative power) of these LLMs on classification tasks.

* **Calibration vs. Performance:** The charts show that calibration (ECE) and raw performance (AUROC) are related but distinct axes of model quality. A model can perform well yet be poorly calibrated, or vice versa, especially in low-data regimes: at 10² samples, Mistral 7B Instruct has the highest AUROC (~0.64) but also the highest ECE (~0.22).

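* **Value of Data:** Increasing the training set from 100 to 10,000 samples improves both metrics for every model tested: ECE falls by roughly 0.07 to 0.13 and AUROC rises by roughly 0.10 to 0.12. The trend is broadly monotonic, apart from the slight bump in Mistral 7B Instruct's ECE between 10³ and 10⁴ samples. Most of the gain arrives by 10³ samples, but the curves are still improving at 10⁴, which suggests the models may not yet be saturated.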

* **Model Selection Implications:** The choice between LLaMA-2 and Mistral may depend on the application's priority. If calibration is paramount (e.g., for risk assessment), LLaMA-2 13B Chat appears superior with sufficient data. If maximizing discriminative power is the sole goal, Mistral 7B Instruct shows a slight edge at high sample counts.

* **Baseline Context:** The "Zero-Shot Classifier" and "Sampling" baselines provide a reference point. At 100 samples the trained models are only roughly on par with them, and Mistral 7B Instruct's ECE is noticeably worse; by 1,000 samples every model clearly outperforms both baselines on both metrics. The baselines are flat because these methods do not use the training data supplied to the other models.