TECHNICAL ASSET FINGERPRINT

a3d1bd3ef3131f5f4063b756

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Neural Network Visualization: Inputs and Outputs

### Overview

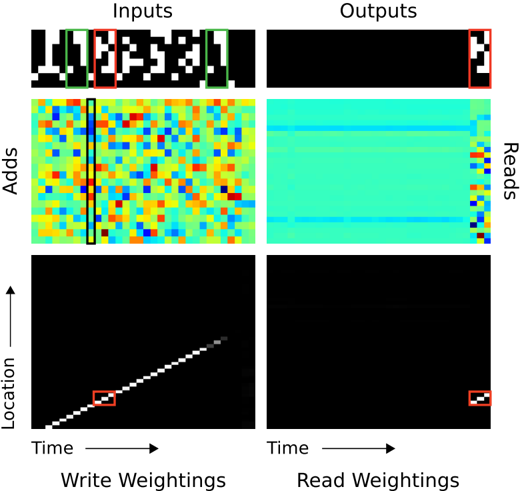

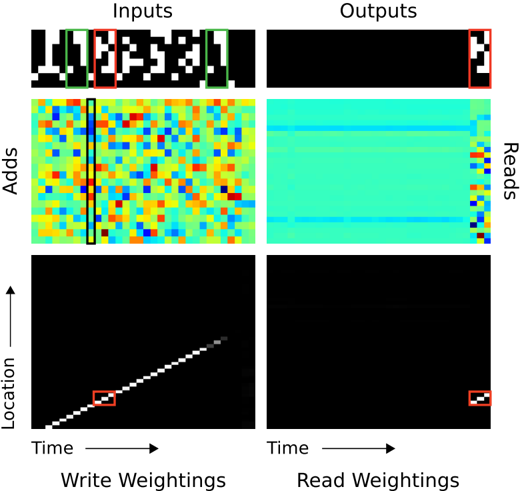

The image visualizes the internal workings of a neural network: its inputs, outputs, adds, reads, write weightings, and read weightings. The panels form a 3x2 grid, with input-side panels on the left and output-side panels on the right. Each row shows a different aspect of the network's operation: the first row shows the raw input and output data, the second row shows the "Adds" and "Reads" operations, and the third row shows the "Write Weightings" and "Read Weightings" over time and location.

### Components/Axes

* **Titles:**

* Top: "Inputs" (left), "Outputs" (right)

* Left Column: "Adds", "Location" (with an upward-pointing arrow)

* Bottom Row: "Time" (with a rightward-pointing arrow), "Write Weightings" (left), "Read Weightings" (right)

* Right Column: "Reads"

* **Axes:**

* The "Adds" and "Reads" visualizations appear to be heatmaps, with no explicit axis labels.

* The "Location" vs. "Time" plots have axes labeled "Location" (vertical) and "Time" (horizontal).

* **Annotations:**

* There are red, green, and black rectangular boxes highlighting specific regions in the visualizations.

### Detailed Analysis

**Row 1: Inputs and Outputs**

* **Inputs (Top-Left):** A binary image consisting of black and white pixels. The image appears to contain a pattern or character. A red box highlights a small section of the input, and a green box highlights a larger section.

* **Outputs (Top-Right):** A mostly black image with a small section of white pixels in the bottom-right corner. A red box highlights this section, and a green box highlights a larger section.

**Row 2: Adds and Reads**

* **Adds (Middle-Left):** A heatmap with a color gradient ranging from blue to red. The distribution of colors appears random, with no clear pattern. A black box highlights a vertical section of the heatmap.

* **Reads (Middle-Right):** A heatmap with a color gradient ranging from blue to red. The heatmap is predominantly cyan, with some vertical stripes of other colors on the right side.

**Row 3: Write Weightings and Read Weightings**

* **Write Weightings (Bottom-Left):** A black image with a diagonal line of white pixels extending from the bottom-left to the top-right. The line marks the memory location that receives the highest write weight at each time step. A red box highlights a small section of the diagonal line.

* **Read Weightings (Bottom-Right):** A mostly black image with a small cluster of white pixels in the bottom-right corner. A red box highlights this cluster.

### Key Observations

* The input image contains a distinct pattern, while the output image is mostly black with a small, localized activation.

* The "Adds" heatmap shows a seemingly random distribution of values, while the "Reads" heatmap shows a more structured pattern.

* The "Write Weightings" plot shows a clear diagonal pattern, indicating a sequential writing process.

* The "Read Weightings" plot shows a localized activation, suggesting that the network is reading from a specific location.

### Interpretation

The image provides a glimpse into the internal operations of a neural network, likely a memory-augmented neural network (MANN) or a similar architecture. The "Adds" and "Reads" heatmaps likely represent the memory content being written to and read from, respectively. The "Write Weightings" and "Read Weightings" plots show how the network is accessing its memory over time.

The fact that the output is mostly black suggests that the network is either still learning or that the input pattern is not strongly associated with any particular output. The localized activation in the "Read Weightings" plot suggests that the network is focusing on a specific memory location to produce the output.

The diagonal line in the "Write Weightings" plot indicates that the network is sequentially writing information to memory. This could be related to how the network is processing the input sequence or how it is storing information for later use.
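The "sequential writing" reading of the diagonal can be made concrete with a small check. The sketch below is illustrative only: the list-of-columns layout, the function names, and the toy matrices are assumptions, not data extracted from the figure. It tests whether the peak of a weighting advances by one location per time step.

```python
def peak_locations(weightings):
    """Return, for each time step, the index of the location with the largest weight.

    `weightings` is a list of columns, one per time step; each column is a
    list of weights over memory locations (hypothetical layout).
    """
    return [max(range(len(col)), key=col.__getitem__) for col in weightings]


def is_sequential(weightings):
    """True if the peak location advances by exactly one slot per time step."""
    peaks = peak_locations(weightings)
    return all(b - a == 1 for a, b in zip(peaks, peaks[1:]))


# Toy write weightings: a sharp diagonal, as described for the bottom-left panel.
diagonal = [[1.0 if loc == t else 0.0 for loc in range(5)] for t in range(5)]
# Toy read weightings: all of the mass stays on a single location.
fixed = [[1.0 if loc == 4 else 0.0 for loc in range(5)] for _ in range(5)]

print(is_sequential(diagonal))  # True
print(is_sequential(fixed))     # False
```

A diagonal plot passes the check, while a weighting concentrated on one location does not, which matches the contrast drawn between the two bottom panels.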

EXPERT: gemma-3-27b-it-free VERSION 2

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Heatmap Grid: Neural Network Attention Visualization

### Overview

The image presents a 3x2 grid of heatmaps visualizing attention weights within a neural network, likely a recurrent neural network or transformer. The grid shows "Inputs" on the left and "Outputs" on the right, with "Adds" and "Location" represented vertically. The heatmaps are color-coded, with warmer colors (red, yellow) indicating higher attention weights and cooler colors (blue, cyan) indicating lower weights. Each heatmap carries a small red square, most often in the top-right corner, likely indicating a specific focus or region of interest.

### Components/Axes

* **Inputs:** Top row, representing the input data.

* **Outputs:** Right column, representing the output data.

* **Adds:** Left column (below "Inputs"), representing additive attention mechanisms. The y-axis is labeled "Adds".

* **Location:** Bottom row, representing spatial or temporal location. The y-axis is labeled "Location" and the x-axis is labeled "Time".

* **Write Weightings:** Below the "Inputs" and "Adds" heatmaps.

* **Read Weightings:** Below the "Outputs" and "Reads" heatmaps.

* **Color Scale:** Implicitly, red/yellow = high attention, blue/cyan = low attention.

* **Red Square:** Present in each heatmap, most often in the top-right corner (the per-panel positions are listed below). Its purpose is unclear without further context, but it likely highlights a specific area of focus.

### Detailed Analysis

**1. Inputs Heatmap (Top-Left):**

This heatmap displays a pattern resembling the input sequence "Hellow". The heatmap is predominantly black, with white/light-colored regions corresponding to the letters. The red square is positioned over the last letter, "o".

* The input sequence appears to be "Hellow" (approximate).

* The attention is focused on the last character, "o".

**2. Adds Heatmap (Middle-Left):**

This heatmap shows a complex pattern of attention weights. The colors are highly varied, ranging from deep blue to bright red. A vertical blue line is present, approximately in the center of the heatmap. The red square is positioned in the top-right corner.

* The heatmap is highly dynamic, with no clear dominant trend.

* The vertical blue line suggests a consistent attention pattern across the "Adds" dimension.

**3. Location Heatmap (Bottom-Left):**

This heatmap shows a diagonal line of high attention weights (yellow/red) extending from the bottom-left to the top-right corner. This indicates a strong correlation between "Time" and "Location". The red square is positioned at the bottom-left corner.

* The diagonal line indicates a sequential processing of information over time.

* The location that receives high attention advances linearly with time; the weights themselves do not grow.
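A diagonal of this kind can be checked numerically by fitting a line to the peak location at each time step. The sketch below uses an ordinary least-squares fit on hypothetical peak positions (the values are made up for illustration, not measured from the heatmap); a slope near 1 would mean the peak moves one location per time step.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of ys = slope * xs + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical peak locations read off a diagonal heatmap, one per time step.
times = [0, 1, 2, 3, 4, 5]
peaks = [0, 1, 2, 3, 4, 5]

slope, intercept = fit_line(times, peaks)
print(slope, intercept)
```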

**4. Outputs Heatmap (Top-Right):**

This heatmap is predominantly cyan/blue, with a few horizontal lines of slightly higher attention (yellow/green). The red square is positioned in the top-right corner.

* The heatmap shows a relatively uniform distribution of attention weights.

* The horizontal lines suggest a focus on specific output features.

**5. Reads Heatmap (Middle-Right):**

This heatmap is similar to the "Outputs" heatmap, predominantly cyan/blue with a few horizontal lines of slightly higher attention. The red square is positioned in the top-right corner.

* The heatmap shows a relatively uniform distribution of attention weights.

* The horizontal lines suggest a focus on specific output features.

**6. Read Weightings Heatmap (Bottom-Right):**

This heatmap is almost entirely black, with very few areas of attention. The red square is positioned in the bottom-right corner.

* The heatmap shows minimal attention weights.

* This suggests that the network is not actively reading from this region.

### Key Observations

* The "Location" heatmap shows a clear diagonal trend, indicating a sequential processing of information.

* The "Adds" heatmap is highly dynamic, with no clear dominant trend.

* The "Inputs" heatmap shows attention focused on the last character of the input sequence.

* The "Outputs" and "Reads" heatmaps show relatively uniform attention weights, with a few horizontal lines of slightly higher attention.

* The "Read Weightings" heatmap shows minimal attention weights.

### Interpretation

The image demonstrates how attention mechanisms work within a neural network. The "Inputs" heatmap shows where the network is focusing its attention on the input sequence. The "Adds" heatmap visualizes the additive attention weights, while the "Location" heatmap shows how attention changes over time and location. The "Outputs" and "Reads" heatmaps show where the network is focusing its attention on the output sequence. The "Read Weightings" heatmap shows where the network is actively reading from.

The diagonal trend in the "Location" heatmap suggests that the network is processing information sequentially. The dynamic pattern in the "Adds" heatmap suggests that the network is using a complex attention mechanism. The minimal attention weights in the "Read Weightings" heatmap suggest that the network is not actively reading from that region.

The red squares in each heatmap likely highlight a specific area of focus or region of interest. Their frequent placement in the top-right corner suggests that this region is important for the network's performance.

The data suggests a model that processes sequential data, focusing on the end of the input sequence and utilizing a complex attention mechanism to generate the output. The model appears to be actively reading from certain regions of the output sequence, but not others.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Memory-Augmented Neural Network Visualization

### Overview

The image is a technical diagram composed of six panels arranged in a 3x2 grid. It visualizes the internal state and operations of a memory-augmented neural network or a similar differentiable memory system. The diagram illustrates how input sequences are processed, written to memory, and subsequently read from memory to produce outputs. The visualization uses a combination of symbolic sequences, heatmaps, and spatiotemporal plots.

### Components/Axes

The diagram is segmented into three rows and two columns, with clear labels for each major section.

**Row 1: Symbolic Sequence Processing**

* **Top-Left Panel (Inputs):** Labeled "Inputs" at the top. Shows a sequence of 11 pixelated, MNIST-like character symbols. The 3rd and 10th symbols are outlined with a **green box**. The 4th and 11th symbols are outlined with a **red box**.

* **Top-Right Panel (Outputs):** Labeled "Outputs" at the top. Shows a mostly black field with a single pixelated character symbol (resembling a '2' or 'Z') in the far right, outlined with a **red box**.

**Row 2: Memory Access Heatmaps**

* **Middle-Left Panel (Adds):** Labeled "Adds" on the left vertical axis. This is a heatmap with a complex, noisy pattern of colors (blue, cyan, yellow, orange, red). A vertical **black line** is drawn through the left portion of the heatmap.

* **Middle-Right Panel (Reads):** Labeled "Reads" on the right vertical axis. This is a heatmap dominated by a cyan/green color, with a distinct, structured pattern of blue and yellow/orange pixels concentrated along the right edge.

**Row 3: Memory Weighting Plots**

* **Bottom-Left Panel (Write Weightings):** Labeled "Write Weightings" at the bottom. The x-axis is labeled "Time" (increasing to the right). The y-axis is labeled "Location" (increasing upward). The plot shows a bright, diagonal line of white/grey pixels from the bottom-left to the top-right, indicating a sequential writing process. A small segment of this diagonal is highlighted with a **red box**.

* **Bottom-Right Panel (Read Weightings):** Labeled "Read Weightings" at the bottom. The x-axis is labeled "Time" (increasing to the right). The y-axis is labeled "Location" (increasing upward). The plot is mostly black, with a single, small cluster of white/grey pixels in the bottom-right corner, highlighted with a **red box**.

### Detailed Analysis

1. **Input/Output Sequence (Top Row):**

* The input is a sequence of 11 discrete symbols.

* The output is a single symbol, which matches the 11th (final) input symbol (both outlined in red). This suggests the network's task may be to recall or reconstruct the last item in a sequence.

* The green boxes on the 3rd and 10th inputs may indicate points of interest or control signals, but their specific function is not labeled.

2. **Memory Operations (Middle Row):**

* **"Adds" Heatmap:** This likely represents the *additions* or *writes* to the memory matrix over time. The noisy, distributed pattern suggests that information from the input sequence is being written across many memory locations in a complex, distributed code. The vertical black line may mark a specific time step.

* **"Reads" Heatmap:** This likely represents the *reads* from the memory matrix. The pattern is highly structured and localized to the right edge (corresponding to later time steps). This indicates that during the output phase, the system is focusing its read operations on a specific, narrow region of the memory.

3. **Memory Access Patterns (Bottom Row):**

* **Write Weightings:** The perfect diagonal line demonstrates a **sequential, location-based writing strategy**. At each time step `t`, the network writes to memory location `t` (or a location linearly related to `t`). This is a simple, clock-like addressing mechanism for storing the sequence.

* **Read Weightings:** The single cluster in the bottom-right shows that at the final time step, the network's read head is focused **exclusively on the last memory location** (Location ~11, Time ~11). This directly correlates with the output being the final input symbol.
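The two addressing patterns described above can be written down as explicit weighting matrices. This is a minimal sketch under stated assumptions: one memory location per input symbol, perfectly sharp (one-hot) weightings, and a read head that is idle until the final step. None of these details is confirmed by the figure, and the variable names are hypothetical.

```python
NUM_STEPS = 11       # the description counts 11 input symbols
NUM_LOCATIONS = 11   # assumption: one memory location per symbol


def one_hot(index, size):
    """Weighting with all of its mass on a single location."""
    return [1.0 if i == index else 0.0 for i in range(size)]


# write_weightings[t][loc]: at step t the write head targets location t.
write_weightings = [one_hot(t, NUM_LOCATIONS) for t in range(NUM_STEPS)]

# read_weightings[t][loc]: the read head is idle until the final step,
# then puts all of its mass on the last location written.
read_weightings = [[0.0] * NUM_LOCATIONS for _ in range(NUM_STEPS - 1)]
read_weightings.append(one_hot(NUM_LOCATIONS - 1, NUM_LOCATIONS))

# Collect the (time, location) cells where the read head is active.
active_cells = [
    (t, loc)
    for t, column in enumerate(read_weightings)
    for loc, weight in enumerate(column)
    if weight > 0.0
]
print(active_cells)  # [(10, 10)]
```

With zero-based indices, the single active cell (10, 10) corresponds to the "Location ~11, Time ~11" cluster in the description.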

### Key Observations

* **Spatial Grounding:** The red box in the "Outputs" panel corresponds to the red box on the final input symbol. The red box in the "Read Weightings" plot corresponds to the final time/location, which in turn corresponds to the structured read pattern on the far right of the "Reads" heatmap.

* **Trend Verification:** The "Write Weightings" show a clear, linear upward trend (diagonal). The "Read Weightings" show no trend; it is a single, focused point of activity at the end of the sequence.

* **Component Isolation:** The three rows show different abstraction levels: 1) The symbolic task, 2) The raw memory matrix activity, and 3) The interpretable attention/weighting patterns of the read/write heads.

### Interpretation

This diagram illustrates the mechanics of a **simple sequential memory task**, likely a "last-item recall" or "copy" task. The system demonstrates two distinct addressing mechanisms:

1. **Sequential Writing:** The network uses a simple, iterative process to store each incoming symbol in the next available memory slot (the diagonal in "Write Weightings"). This is a robust way to preserve temporal order.

2. **Focused Reading:** To produce the output, the network learns to direct its read attention solely to the memory location containing the final item of the sequence. The "Reads" heatmap shows this results in a very specific activation pattern being retrieved from memory.

The "Adds" heatmap's complexity versus the "Reads" heatmap's simplicity suggests that while *storing* information involves a distributed, potentially noisy code, *retrieving* a specific piece of information (the last item) can be achieved by a very precise and localized read operation. The green boxes on earlier inputs might be distractors or part of a more complex task variant, but the core demonstrated functionality is the reliable storage and pinpoint retrieval of the final element in a temporal sequence. This is a foundational capability for more complex reasoning and question-answering tasks in memory-augmented networks.
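The store-then-retrieve behaviour can be sketched end to end with a toy memory. The code assumes a purely additive write (memory row plus weight times add vector, with no erase step) and a read defined as a weighted sum of memory rows; the figure's "Adds" and "Reads" labels suggest something along these lines, but the exact update rule is an assumption. The symbols are stored directly rather than as the distributed code the heatmap suggests, and all names are hypothetical.

```python
def write(memory, weights, add_vector):
    """Additive write: each row gains weight * add_vector (no erase step)."""
    return [
        [cell + w * a for cell, a in zip(row, add_vector)]
        for row, w in zip(memory, weights)
    ]


def read(memory, weights):
    """Read vector: weighted sum of the memory rows."""
    width = len(memory[0])
    return [sum(w * row[j] for w, row in zip(weights, memory)) for j in range(width)]


# Toy input sequence: four symbols, each encoded as a 3-element vector.
symbols = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [1.0, 1.0, 0.0]]
memory = [[0.0] * 3 for _ in symbols]

# Sequential writing: symbol t goes to location t.
for t, symbol in enumerate(symbols):
    write_weights = [1.0 if i == t else 0.0 for i in range(len(symbols))]
    memory = write(memory, write_weights, symbol)

# Focused reading: all read weight on the last location.
read_weights = [0.0] * (len(symbols) - 1) + [1.0]
recalled = read(memory, read_weights)
print(recalled)  # [1.0, 1.0, 0.0]
```

The recalled vector equals the final input symbol, which is the last-item recall behaviour the interpretation attributes to the network.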

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap Visualization: System Processing Dynamics

### Overview

The image presents a 2x2 grid visualization comparing system inputs/outputs and processing dynamics across time and location dimensions. Two heatmaps at the bottom illustrate weighting patterns for write and read operations.

### Components/Axes

- **Left Column Labels**:

- Top: Inputs (black/white pattern with red/green boxes)

- Middle: Adds (color mosaic with vertical black line)

- Bottom: Write Weightings (diagonal white line on black background)

- **Right Column Labels**:

- Top: Outputs (black background with white stripe)

- Middle: Reads (blue gradient with vertical stripe)

- Bottom: Read Weightings (horizontal white line at bottom)

- **Axes**:

- Horizontal: Time (left to right)

- Vertical: Location (bottom to top)

- **Color Coding**:

- Write Weightings: White line (high weighting) vs. black (low)

- Read Weightings: White line (high weighting) vs. black (low)

### Detailed Analysis

1. **Inputs Section**:

- Black/white checkerboard pattern with red/green rectangular boxes

- Red boxes concentrated in top-left quadrant

- Green boxes distributed along right edge

2. **Outputs Section**:

- Predominantly black with horizontal white stripe in upper-right quadrant

- Stripe width ≈ 1/5 of total height

3. **Adds Section**:

- Color mosaic with dominant yellow/orange in center

- Vertical black line at x=1/3 position

- Color intensity decreases toward edges

4. **Reads Section**:

- Blue gradient from dark (bottom) to light (top)

- Vertical white stripe at x=4/5 position

- Gradient slope ≈ 15° from horizontal

5. **Write Weightings Heatmap**:

- Diagonal white line from bottom-left to top-right

- Line thickness ≈ 1/10 of image height

- Line position: y = 0.25x + 0.1 (approximate)

6. **Read Weightings Heatmap**:

- Horizontal white line at bottom 5% of image

- Line thickness ≈ 1/20 of image height

### Key Observations

- **Temporal Localization**:

- Write operations show diagonal weighting pattern suggesting sequential processing

- Read operations concentrate at bottom location across all time points

- **Spatial Correlation**:

- Inputs show right-edge green boxes correlating with read outputs' right-side stripe

- Adds section's vertical black line aligns with write weightings' diagonal trajectory

- **Color-Value Relationship**:

- Brighter colors in Adds section correlate with higher write weighting values

- Darker blue regions in Reads section match lower read weighting intensities

### Interpretation

The visualization demonstrates a system where:

1. Inputs are processed through additive operations (Adds) with spatial-temporal weighting

2. Write operations follow a diagonal progression through time and location

3. Read operations focus on a specific bottom location regardless of time

4. Outputs emerge as a simplified representation of processed inputs

The diagonal write weighting pattern suggests temporal sequencing in data processing, while the concentrated read weighting indicates a fixed output location. The correlation between input colors and add intensities implies dynamic data transformation during processing. The system appears optimized for localized read operations despite distributed input processing.
