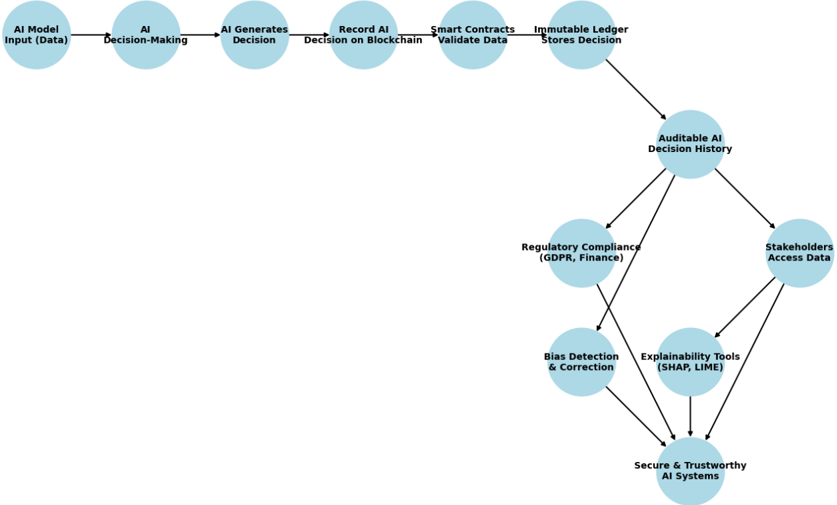

## Flowchart: AI-Blockchain Integration for Trustworthy Systems

### Overview

The flowchart illustrates a multi-stage process integrating AI decision-making with blockchain technology and compliance frameworks. It emphasizes transparency, auditability, and regulatory adherence in AI systems.

### Components

- **Nodes (Circles)**: Represent process stages or components.

- **Arrows**: Indicate directional flow between stages.

- **Key Labels**:

    - **Left-to-Right Flow**:

        1. AI Model Input (Data)

        2. AI Decision-Making

        3. AI-Generated Decision

        4. Record AI Decision on Blockchain

        5. Smart Contracts Validate Data

        6. Immutable Ledger Stores Decision

    - **Branching Paths** (from "Immutable Ledger Stores Decision"):

        - **Auditable AI Decision History**

            - Regulatory Compliance (GDPR, Finance)

            - Stakeholders Access Data

        - **Bias Detection & Correction**

        - **Explainability Tools (SHAP, LIME)**

    - **Final Convergence**: Secure & Trustworthy AI Systems

### Detailed Analysis

- **Primary Flow**:

    - Starts with **AI Model Input (Data)**.

    - Progresses through **AI Decision-Making** to generate decisions.

    - Decisions are recorded on a **blockchain** and validated via **smart contracts**.

    - Finalized decisions are stored in an **immutable ledger**.

- **Branching Paths**:

    - **Auditable AI Decision History**: Enables traceability for regulators and stakeholders.

    - **Regulatory Compliance**: Explicitly references GDPR and finance sectors.

    - **Stakeholders Access Data**: Ensures transparency for end-users.

    - **Bias Detection & Correction**: Addresses fairness concerns.

    - **Explainability Tools (SHAP, LIME)**: Provide interpretability for AI decisions.

- **Final Node**: All paths converge into **Secure & Trustworthy AI Systems**, emphasizing holistic accountability.
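The primary flow above (record a decision, validate it, store it in an append-only ledger) can be sketched in a few lines of Python. This is a minimal illustration, not the system the diagram depicts: the in-memory `Ledger` class stands in for a real blockchain, `validate_decision` stands in for an on-chain smart contract, and the field names (`model_id`, `input_hash`, `decision`) and the allowed outcomes are assumptions made for the example.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first block


def _digest(payload: dict) -> str:
    """Deterministic SHA-256 hex digest of a JSON-serialisable dict."""
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()


def validate_decision(record: dict) -> bool:
    """Stand-in for the smart-contract check (hypothetical rules)."""
    required = {"model_id", "input_hash", "decision"}
    return required.issubset(record) and record["decision"] in {"approve", "deny"}


@dataclass
class Ledger:
    """Append-only, hash-chained list standing in for the immutable ledger."""
    blocks: list = field(default_factory=list)

    def append(self, record: dict) -> dict:
        if not validate_decision(record):
            raise ValueError("record rejected by validation rules")
        prev_hash = self.blocks[-1]["hash"] if self.blocks else GENESIS_HASH
        body = {"index": len(self.blocks), "prev_hash": prev_hash, "record": record}
        block = {**body, "hash": _digest(body)}
        self.blocks.append(block)
        return block

    def verify(self) -> bool:
        """Recompute every hash and check each link to the previous block."""
        prev_hash = GENESIS_HASH
        for block in self.blocks:
            body = {k: block[k] for k in ("index", "prev_hash", "record")}
            if block["prev_hash"] != prev_hash or block["hash"] != _digest(body):
                return False
            prev_hash = block["hash"]
        return True


def record_decision(ledger: Ledger, model_id: str, features: dict, decision: str) -> dict:
    """Store a hash of the model input, not the raw input, next to the decision."""
    record = {"model_id": model_id, "input_hash": _digest(features), "decision": decision}
    return ledger.append(record)
```

Because each block's hash covers the previous block's hash, editing any stored decision causes `verify()` to fail from that block onward, which is the property the "immutable ledger" node relies on.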

### Key Observations

- **Immutable Ledger as Central Hub**: Every branching path originates from the ledger, which acts as the single source of truth for recorded decisions and protects their integrity.

- **Regulatory Focus**: GDPR compliance highlights data privacy and accountability requirements.

- **Explainability Tools**: SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) are named, indicating technical specificity.

- **Bias Mitigation**: Explicitly addressed as a critical step post-decision storage.
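The diagram does not say how bias is detected. One common check that could run over the stored decision history is the demographic parity gap, the difference in approval rates between groups. The sketch below assumes each stored decision can be paired with a group label; the `(group, outcome)` record shape, the outcome strings, and the 0.2 threshold are illustrative assumptions, not taken from the flowchart.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Approval rate per group from (group, outcome) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, outcome in decisions:
        totals[group] += 1
        approved[group] += outcome == "approve"  # bool counts as 0 or 1
    return {group: approved[group] / totals[group] for group in totals}


def demographic_parity_gap(decisions):
    """Largest difference in approval rate between any two groups."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())


def flag_bias(decisions, threshold=0.2):
    """True when the gap exceeds a (hypothetical) tolerance."""
    return demographic_parity_gap(decisions) > threshold
```

A flagged gap is only a signal for review; the "correction" half of the node (retraining, reweighting, threshold adjustment) depends on the model and is not sketched here.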

### Interpretation

The diagram underscores a systemic approach to ethical AI deployment:

1. **Transparency**: Recording decisions on a blockchain makes them tamper-evident and auditable: any later change to a stored decision is detectable.

2. **Accountability**: Stakeholders and regulators can access decision histories, which supports what is often called GDPR’s "right to explanation" (the provisions on automated decision-making in Article 22 and Recital 71).

3. **Bias Mitigation**: Proactive detection and correction mechanisms are integrated into the workflow.

4. **Explainability**: Tools like SHAP and LIME help open up the "black box" of AI models by attributing each decision to its input features, fostering trust.

5. **Holistic Design**: The convergence of compliance, bias correction, and explainability into "Secure & Trustworthy AI Systems" reflects a commitment to ethical AI governance.
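To make the explainability item concrete, the sketch below computes exact Shapley values, the quantity that SHAP approximates, for a toy scoring function. It does not use the `shap` library; it applies the Shapley formula directly by enumerating feature coalitions, which is only feasible for a handful of features. The model `toy_score`, its weights, and the feature names are invented for illustration.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley attribution of predict(instance) - predict(baseline).

    Features outside a coalition take their baseline value.
    Cost grows as 2**n in the number of features n.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        mixed = {f: (instance[f] if f in coalition else baseline[f]) for f in names}
        return predict(mixed)

    phi = {}
    for f in names:
        others = [g for g in names if g != f]
        total = 0.0
        for k in range(n):
            # Shapley weight for coalitions of size k that exclude f
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = set(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


def toy_score(x):
    """Hypothetical linear credit score used only for this example."""
    return 0.5 * x["income"] + 2.0 * x["years_employed"] - 1.0 * x["open_loans"]
```

For a linear model, each attribution reduces to the weight times the feature's distance from the baseline, and the attributions sum to the difference between the prediction and the baseline prediction. Stored alongside a decision, such attributions give auditors a per-feature account of why the model decided as it did.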

This architecture is most relevant to industries like finance, where regulatory scrutiny and fairness requirements are strict. The flowchart positions blockchain not just as a data storage layer but as an enabler of systemic trust in AI-driven decisions.