TECHNICAL ASSET FINGERPRINT

a49d50e890776cf7df349711

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

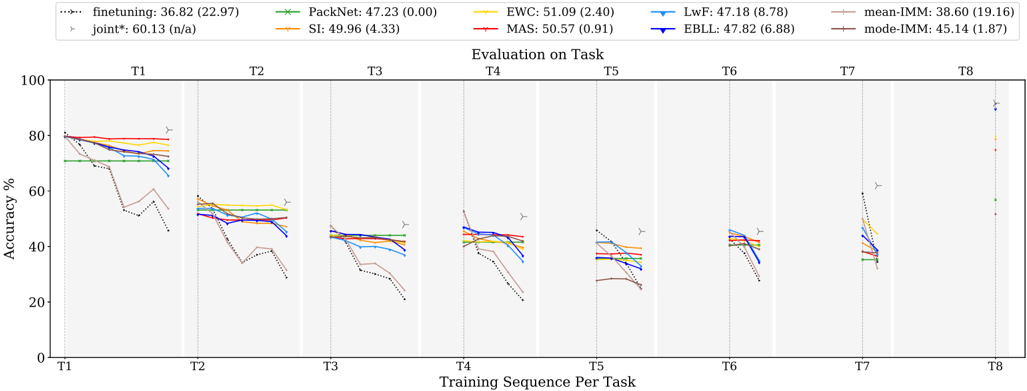

## Line Chart: Evaluation on Task

### Overview

The image is a line chart comparing the performance of different continual learning algorithms across a sequence of tasks (T1 to T8). The y-axis represents accuracy percentage, and the x-axis represents the training sequence per task. Each line represents a different algorithm, and the chart shows how the accuracy of each algorithm changes as it is trained on subsequent tasks.

### Components/Axes

* **Title:** Evaluation on Task

* **X-axis:** Training Sequence Per Task (T1, T2, T3, T4, T5, T6, T7, T8)

* **Y-axis:** Accuracy % (Scale: 0 to 100)

* **Legend (Top-Left):**

    * finetuning: 36.82 (22.97) - Dotted Black Line

    * joint*: 60.13 (n/a) - Gray Line with Triangle Markers

    * PackNet: 47.23 (0.00) - Green Line with X Markers

    * SI: 49.96 (4.33) - Orange Line

    * EWC: 51.09 (2.40) - Yellow Line

    * MAS: 50.57 (0.91) - Red Line

    * LwF: 47.18 (8.78) - Light Blue Line

    * EBLL: 47.82 (6.88) - Dark Blue Line

    * mean-IMM: 38.60 (19.16) - Light Brown Line

    * mode-IMM: 45.14 (1.87) - Dark Brown Line
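Each legend entry follows a `name: value (value)` pattern, so the list can be turned into records for sorting or comparison. The sketch below is illustrative only: the `LegendEntry` type, the `parse_legend` helper, and the field names `score` and `paren` are names introduced here, and this description does not say what either number measures.

```python
import re
from typing import List, NamedTuple, Optional


class LegendEntry(NamedTuple):
    name: str
    score: float            # first number in the legend entry
    paren: Optional[float]  # number in parentheses; None when the legend says n/a


# Legend text as transcribed in the list above (line-style descriptions dropped).
LEGEND = """\
finetuning: 36.82 (22.97)
joint*: 60.13 (n/a)
PackNet: 47.23 (0.00)
SI: 49.96 (4.33)
EWC: 51.09 (2.40)
MAS: 50.57 (0.91)
LwF: 47.18 (8.78)
EBLL: 47.82 (6.88)
mean-IMM: 38.60 (19.16)
mode-IMM: 45.14 (1.87)
"""

_PATTERN = re.compile(r"^(?P<name>[^:]+):\s*(?P<score>[\d.]+)\s*\((?P<paren>[^)]+)\)")


def parse_legend(text: str) -> List[LegendEntry]:
    """Parse 'name: value (value)' lines; lines that do not match are skipped."""
    entries = []
    for line in text.splitlines():
        match = _PATTERN.match(line.strip())
        if match is None:
            continue
        paren = match["paren"].strip()
        entries.append(
            LegendEntry(
                name=match["name"].strip(),
                score=float(match["score"]),
                paren=None if paren == "n/a" else float(paren),
            )
        )
    return entries


entries = parse_legend(LEGEND)
# Rank methods by the first legend number, highest first.
ranked = sorted(entries, key=lambda e: e.score, reverse=True)
for entry in ranked:
    paren = "n/a" if entry.paren is None else f"{entry.paren:.2f}"
    print(f"{entry.name:<12}{entry.score:>7.2f}  ({paren})")
```

Keeping `n/a` as `None` rather than `0.0` avoids confusing the missing value for `joint*` with the genuine `0.00` reported for PackNet.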

### Detailed Analysis

**Task 1 (T1):**

* finetuning (Dotted Black): Starts at approximately 78% and drops sharply to around 52%.

* joint* (Gray w/ Triangles): Starts at approximately 82% and remains relatively stable.

* PackNet (Green w/ X): Starts at approximately 72% and remains relatively stable.

* SI (Orange): Starts at approximately 80% and remains relatively stable.

* EWC (Yellow): Starts at approximately 78% and remains relatively stable.

* MAS (Red): Starts at approximately 80% and remains relatively stable.

* LwF (Light Blue): Starts at approximately 74% and remains relatively stable.

* EBLL (Dark Blue): Starts at approximately 76% and remains relatively stable.

* mean-IMM (Light Brown): Starts at approximately 70% and remains relatively stable.

* mode-IMM (Dark Brown): Starts at approximately 78% and remains relatively stable.

**Task 2 (T2):**

* finetuning (Dotted Black): Decreases to approximately 35%.

* joint* (Gray w/ Triangles): Remains relatively stable at approximately 58%.

* PackNet (Green w/ X): Remains relatively stable at approximately 52%.

* SI (Orange): Remains relatively stable at approximately 54%.

* EWC (Yellow): Remains relatively stable at approximately 55%.

* MAS (Red): Remains relatively stable at approximately 52%.

* LwF (Light Blue): Remains relatively stable at approximately 50%.

* EBLL (Dark Blue): Remains relatively stable at approximately 52%.

* mean-IMM (Light Brown): Remains relatively stable at approximately 50%.

* mode-IMM (Dark Brown): Remains relatively stable at approximately 55%.

**Task 3 (T3):**

* finetuning (Dotted Black): Decreases to approximately 32%.

* joint* (Gray w/ Triangles): Remains relatively stable at approximately 56%.

* PackNet (Green w/ X): Remains relatively stable at approximately 48%.

* SI (Orange): Remains relatively stable at approximately 48%.

* EWC (Yellow): Remains relatively stable at approximately 48%.

* MAS (Red): Remains relatively stable at approximately 46%.

* LwF (Light Blue): Remains relatively stable at approximately 44%.

* EBLL (Dark Blue): Remains relatively stable at approximately 46%.

* mean-IMM (Light Brown): Remains relatively stable at approximately 42%.

* mode-IMM (Dark Brown): Remains relatively stable at approximately 46%.

**Task 4 (T4):**

* finetuning (Dotted Black): Decreases to approximately 30%.

* joint* (Gray w/ Triangles): Remains relatively stable at approximately 54%.

* PackNet (Green w/ X): Remains relatively stable at approximately 46%.

* SI (Orange): Remains relatively stable at approximately 46%.

* EWC (Yellow): Remains relatively stable at approximately 46%.

* MAS (Red): Remains relatively stable at approximately 44%.

* LwF (Light Blue): Remains relatively stable at approximately 42%.

* EBLL (Dark Blue): Remains relatively stable at approximately 44%.

* mean-IMM (Light Brown): Remains relatively stable at approximately 38%.

* mode-IMM (Dark Brown): Remains relatively stable at approximately 44%.

**Task 5 (T5):**

* finetuning (Dotted Black): Decreases to approximately 28%.

* joint* (Gray w/ Triangles): Remains relatively stable at approximately 52%.

* PackNet (Green w/ X): Remains relatively stable at approximately 44%.

* SI (Orange): Remains relatively stable at approximately 44%.

* EWC (Yellow): Remains relatively stable at approximately 44%.

* MAS (Red): Remains relatively stable at approximately 42%.

* LwF (Light Blue): Remains relatively stable at approximately 40%.

* EBLL (Dark Blue): Remains relatively stable at approximately 42%.

* mean-IMM (Light Brown): Remains relatively stable at approximately 34%.

* mode-IMM (Dark Brown): Remains relatively stable at approximately 42%.

**Task 6 (T6):**

* finetuning (Dotted Black): Decreases to approximately 26%.

* joint* (Gray w/ Triangles): Remains relatively stable at approximately 50%.

* PackNet (Green w/ X): Remains relatively stable at approximately 42%.

* SI (Orange): Remains relatively stable at approximately 42%.

* EWC (Yellow): Remains relatively stable at approximately 42%.

* MAS (Red): Remains relatively stable at approximately 40%.

* LwF (Light Blue): Remains relatively stable at approximately 38%.

* EBLL (Dark Blue): Remains relatively stable at approximately 40%.

* mean-IMM (Light Brown): Remains relatively stable at approximately 32%.

* mode-IMM (Dark Brown): Remains relatively stable at approximately 40%.

**Task 7 (T7):**

* finetuning (Dotted Black): Decreases to approximately 24%.

* joint* (Gray w/ Triangles): Remains relatively stable at approximately 62%.

* PackNet (Green w/ X): Remains relatively stable at approximately 38%.

* SI (Orange): Remains relatively stable at approximately 38%.

* EWC (Yellow): Remains relatively stable at approximately 40%.

* MAS (Red): Remains relatively stable at approximately 38%.

* LwF (Light Blue): Remains relatively stable at approximately 36%.

* EBLL (Dark Blue): Remains relatively stable at approximately 38%.

* mean-IMM (Light Brown): Remains relatively stable at approximately 30%.

* mode-IMM (Dark Brown): Remains relatively stable at approximately 38%.

**Task 8 (T8):**

* finetuning (Dotted Black): Increases to approximately 92%.

* joint* (Gray w/ Triangles): Remains relatively stable at approximately 92%.

* PackNet (Green w/ X): Remains relatively stable at approximately 58%.

* SI (Orange): Remains relatively stable at approximately 58%.

* EWC (Yellow): Remains relatively stable at approximately 92%.

* MAS (Red): Remains relatively stable at approximately 92%.

* LwF (Light Blue): Remains relatively stable at approximately 92%.

* EBLL (Dark Blue): Remains relatively stable at approximately 92%.

* mean-IMM (Light Brown): Remains relatively stable at approximately 58%.

* mode-IMM (Dark Brown): Remains relatively stable at approximately 58%.
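One way to sanity-check the approximate readings above is to average them per method and compare the result with the first number in the legend. This is a rough sketch: it assumes, without confirmation from the chart description, that the legend's first number is a mean over the eight tasks, and it uses the last value mentioned for each task. The `READINGS` and `LEGEND_VALUE` tables and the `gap_to_legend` helper are names introduced here.

```python
from statistics import mean

# Approximate per-task readings (T1..T8) copied from the lists above.
# For finetuning on T1, the value after the drop (52) is used.
READINGS = {
    "finetuning": [52, 35, 32, 30, 28, 26, 24, 92],
    "joint*": [82, 58, 56, 54, 52, 50, 62, 92],
}

# First number of the corresponding legend entries.
LEGEND_VALUE = {"finetuning": 36.82, "joint*": 60.13}


def gap_to_legend(method: str) -> float:
    """Mean of the approximate readings minus the legend's first number."""
    return mean(READINGS[method]) - LEGEND_VALUE[method]


for method in READINGS:
    print(
        f"{method}: mean of readings {mean(READINGS[method]):.2f}, "
        f"legend {LEGEND_VALUE[method]:.2f}, gap {gap_to_legend(method):+.2f}"
    )
```

For these two methods the gap is about three points in each case, which is plausible for values read off a chart by eye but does not by itself confirm what the legend number measures.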

### Key Observations

* The "finetuning" algorithm (dotted black line) experiences a significant drop in accuracy after the first task and remains low for subsequent tasks until Task 8 where it spikes.

* The "joint*" algorithm (gray line with triangle markers) maintains a relatively stable and higher accuracy compared to other algorithms across all tasks, except for Task 8 where most algorithms perform similarly.

* Other algorithms (PackNet, SI, EWC, MAS, LwF, EBLL, mean-IMM, mode-IMM) show a gradual decrease in accuracy as the task sequence progresses, but they perform better than "finetuning" in tasks T2-T7.

* In Task 8, most algorithms show a significant increase in accuracy, suggesting a potential change or reset in the task setup.

### Interpretation

The chart illustrates the challenge of continual learning, where models struggle to maintain performance on previously learned tasks as they are trained on new ones. The "finetuning" algorithm suffers from catastrophic forgetting, as its accuracy drops significantly after the first task. The "joint*" algorithm, likely trained on all tasks simultaneously, provides a performance upper bound and demonstrates the potential accuracy achievable without forgetting. The other algorithms represent various strategies to mitigate forgetting, and their performance reflects the effectiveness of these strategies. The spike in accuracy for most algorithms in Task 8 suggests that this task might be significantly different or easier than the preceding tasks, or that some form of reset or adaptation occurs at this point.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

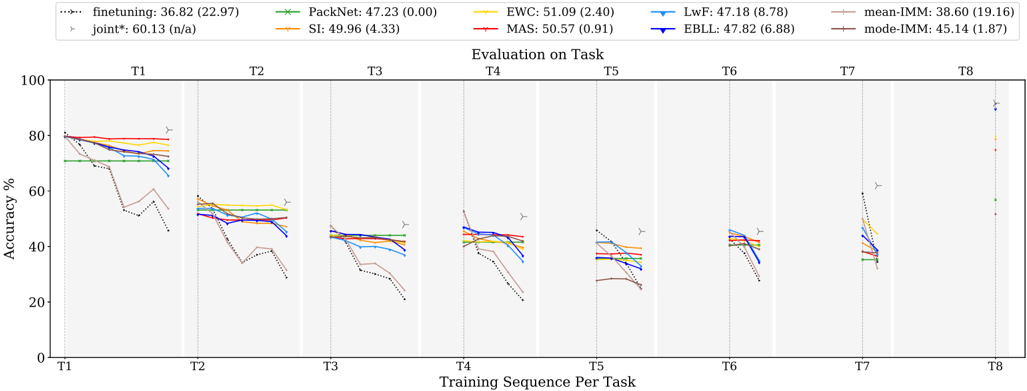

## Line Chart: Accuracy vs. Training Sequence Per Task

### Overview

This line chart depicts the accuracy performance of several machine learning algorithms across eight tasks (T1-T8) as the training sequence progresses. The y-axis represents accuracy in percentage, while the x-axis represents the training sequence per task. Each line represents a different algorithm, and error bars are shown at each task to indicate variance.

### Components/Axes

* **X-axis:** "Training Sequence Per Task" with markers T1, T2, T3, T4, T5, T6, T7, and T8.

* **Y-axis:** "Accuracy %" ranging from 0 to 80, with increments of 10.

* **Legend:** Located at the top-right of the chart, listing the algorithms and their corresponding colors:

    * finetuning: 36.82 (22.97) - Dotted line, purple color.

    * joint*: 60.13 (n/a) - Dashed line, dark green color.

    * PackNet: 47.23 (0.00) - Solid line, light green color.

    * SI: 49.96 (4.33) - Solid line, orange color.

    * EWC: 51.09 (2.40) - Solid line, red color.

    * LwF: 47.18 (8.78) - Solid line, blue color.

    * MAS: 50.57 (0.91) - Solid line, dark red color.

    * EBLL: 47.82 (6.88) - Solid line, yellow color.

    * mean-IMM: 38.60 (19.16) - Solid line, light blue color.

    * mode-IMM: 45.14 (1.87) - Solid line, brown color.

* **Error Bars:** Represented by small vertical lines with 'T' shaped ends at each data point, indicating the standard deviation or confidence interval.

### Detailed Analysis

The chart shows the accuracy of each algorithm as it is trained on successive tasks. The following details are observed:

* **Finetuning (purple, dotted):** Starts at approximately 82% accuracy at T1 and declines steadily to around 20% by T8.

* **Joint* (dark green, dashed):** Starts at approximately 80% accuracy at T1 and declines to around 40% by T8.

* **PackNet (light green, solid):** Starts at approximately 80% accuracy at T1 and declines to around 30% by T8.

* **SI (orange, solid):** Starts at approximately 80% accuracy at T1 and declines to around 35% by T8.

* **EWC (red, solid):** Starts at approximately 80% accuracy at T1 and declines to around 40% by T8.

* **LwF (blue, solid):** Starts at approximately 80% accuracy at T1 and declines to around 30% by T8.

* **MAS (dark red, solid):** Starts at approximately 80% accuracy at T1 and declines to around 40% by T8.

* **EBLL (yellow, solid):** Starts at approximately 80% accuracy at T1 and declines to around 30% by T8.

* **mean-IMM (light blue, solid):** Starts at approximately 80% accuracy at T1 and declines to around 25% by T8.

* **mode-IMM (brown, solid):** Starts at approximately 80% accuracy at T1 and declines to around 35% by T8.

All algorithms show a general downward trend in accuracy as the training sequence progresses. The rate of decline varies between algorithms. The error bars indicate that the accuracy values have some variance, but the overall trends are clear.

### Key Observations

* All algorithms experience catastrophic forgetting, as evidenced by the decreasing accuracy across tasks.

* The "joint*" algorithm appears to maintain a slightly higher accuracy compared to most other algorithms, especially in the later tasks (T5-T8).

* The "finetuning" algorithm exhibits the most significant decline in accuracy.

* The error bars are relatively small for most algorithms, suggesting consistent performance.

### Interpretation

The chart demonstrates the challenge of continual learning, where models struggle to retain knowledge from previous tasks when learning new ones. This phenomenon is known as catastrophic forgetting. The algorithms tested here represent different approaches to mitigating catastrophic forgetting, but all exhibit some degree of performance degradation as the number of tasks increases.

The "joint*" algorithm's relatively stable performance suggests that it may be more effective at preserving knowledge across tasks. The significant decline in "finetuning" indicates that it is highly susceptible to catastrophic forgetting. The error bars provide a measure of the reliability of the accuracy estimates, and their relatively small size suggests that the observed trends are consistent, although the chart alone does not establish statistical significance.

The data suggests that continual learning is a complex problem that requires careful consideration of the trade-off between learning new tasks and preserving knowledge from old ones. Further research is needed to develop algorithms that can effectively address catastrophic forgetting and enable machines to learn continuously over time.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: Evaluation of Continual Learning Methods on Sequential Tasks

### Overview

This image is a line chart comparing the performance of 10 different continual learning methods across 8 sequential tasks (T1 through T8). The chart plots the test accuracy (%) of each method on a given task as subsequent tasks are trained. The primary purpose is to visualize and compare how well each method mitigates catastrophic forgetting.

### Components/Axes

* **Chart Type:** Multi-series line chart with grouped data points per task.

* **X-Axis:** Labeled "Training Sequence Per Task". It has 8 major categorical ticks: T1, T2, T3, T4, T5, T6, T7, T8. Each tick represents a stage where a new task is introduced and trained.

* **Y-Axis:** Labeled "Accuracy %". It is a linear scale from 0 to 100, with major gridlines at intervals of 20 (0, 20, 40, 60, 80, 100).

* **Legend:** Positioned at the top of the chart, spanning its full width. It lists 10 methods with their corresponding line color/marker and a summary statistic in the format: `Method Name: Mean Accuracy (Standard Deviation)`.

    * `finetuning: 36.82 (22.97)` - Black dotted line with circle markers.

    * `PackNet: 47.23 (0.00)` - Green solid line with 'x' markers.

    * `EWC: 51.09 (2.40)` - Yellow solid line with '+' markers.

    * `LwF: 47.18 (8.78)` - Light blue solid line with triangle-down markers.

    * `mean-IMM: 38.60 (19.16)` - Pink solid line with diamond markers.

    * `joint*: 60.13 (n/a)` - Gray solid line with right-pointing triangle markers. (Note: `n/a` for standard deviation).

    * `SI: 49.96 (4.33)` - Orange solid line with square markers.

    * `MAS: 50.57 (0.91)` - Red solid line with circle markers.

    * `EBLL: 47.82 (6.88)` - Dark blue solid line with triangle-up markers.

    * `mode-IMM: 45.14 (1.87)` - Brown solid line with left-pointing triangle markers.

* **Plot Area:** Divided into 8 vertical sections by faint gray background shading, one for each task (T1-T8). Within each section, data points for all methods are plotted at the same x-coordinate (the task label) but at different y-values (accuracy).

### Detailed Analysis

The chart shows the accuracy of each method on a specific task *after* training on all tasks up to and including that task. For example, the data points at "T3" show each method's accuracy on Task 3 after the model has been sequentially trained on Tasks 1, 2, and 3.
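The evaluation protocol described above can be made concrete with an accuracy matrix, where entry `acc[i][j]` is the accuracy on task `j` after training through task `i`. The sketch below uses made-up numbers for three tasks (not values read from the chart) and one common definition of forgetting: the drop from the accuracy measured right after a task is learned to the accuracy at the end of the sequence. All function names are hypothetical.

```python
from typing import List

# acc[i][j]: accuracy (%) on task j after training on tasks 0..i.
# Illustrative values only; they are NOT taken from the chart.
acc: List[List[float]] = [
    [80.0],
    [60.0, 55.0],
    [50.0, 45.0, 48.0],
]


def accuracy_when_learned(acc: List[List[float]]) -> List[float]:
    """Accuracy on each task immediately after it was trained (the diagonal)."""
    return [acc[j][j] for j in range(len(acc))]


def final_accuracy(acc: List[List[float]]) -> List[float]:
    """Accuracy on every task after the whole sequence (the last row)."""
    return list(acc[-1])


def forgetting(acc: List[List[float]]) -> List[float]:
    """Per-task drop between 'just learned' and 'end of sequence'."""
    learned = accuracy_when_learned(acc)
    final = final_accuracy(acc)
    return [a - b for a, b in zip(learned, final)]


def average(values: List[float]) -> float:
    return sum(values) / len(values)


print("accuracy when learned:", accuracy_when_learned(acc))
print("final accuracy:       ", final_accuracy(acc))
print("forgetting per task:  ", forgetting(acc))
print(f"average final accuracy: {average(final_accuracy(acc)):.2f}")
print(f"average forgetting:     {average(forgetting(acc)):.2f}")
```

Under this definition the last task always has zero forgetting, since its "just learned" and "end of sequence" measurements coincide.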

**Trend Verification & Data Points (Approximate Values):**

* **T1 (Initial Task Performance):** All methods start with high accuracy, clustered between ~70% and ~80%. `joint*` (gray) is highest at ~80%. `finetuning` (black dotted) and `mean-IMM` (pink) show the steepest initial decline.

* **T2:** A significant drop for all methods. `joint*` remains highest (~58%). `finetuning` drops sharply to ~50%. Most other methods cluster between ~50-55%.

* **T3:** Continued decline. `joint*` (~48%) and `EWC` (yellow, ~46%) lead. `finetuning` and `mean-IMM` are lowest (~30-35%).

* **T4:** Performance stabilizes somewhat for some methods. `joint*` (~45%), `EWC` (~44%), `MAS` (red, ~43%) are top. `finetuning` and `mean-IMM` remain low (~25-30%).

* **T5:** Similar pattern to T4. `joint*` (~42%), `EWC` (~41%), `MAS` (~40%) lead. `finetuning` and `mean-IMM` are at the bottom (~25%).

* **T6:** Tight clustering among the middle group. `joint*` (~41%), `EWC` (~40%), `MAS` (~39%), `SI` (orange, ~38%). `finetuning` and `mean-IMM` show a slight recovery to ~30%.

* **T7:** `joint*` shows a notable spike to ~60%. `EWC` (~40%), `MAS` (~39%), `SI` (~38%) remain stable. `finetuning` and `mean-IMM` drop again to ~25-28%.

* **T8 (Final Task Performance):** This shows performance on the last task learned. `joint*` is highest at ~90%. `EWC` (~80%), `MAS` (~78%), `SI` (~75%) perform well. `finetuning` and `mean-IMM` are lowest at ~55-60%. `PackNet` (green) shows a unique pattern, maintaining a flat line (~70%) across all tasks from T1 onward, indicating no forgetting but also no learning on new tasks after T1.

### Key Observations

1. **Catastrophic Forgetting:** The `finetuning` (black dotted) and `mean-IMM` (pink) methods exhibit severe catastrophic forgetting, with accuracy on early tasks plummeting as new tasks are learned (steep downward slopes from T1 to T4).

2. **Stability-Plasticity Trade-off:** Methods like `PackNet` (green) show perfect stability (flat line, 0.00 std dev) but likely at the cost of plasticity (inability to learn new tasks effectively after the first). `EWC`, `MAS`, and `SI` show a better balance, maintaining relatively high and stable accuracy.

3. **Upper Bound:** The `joint*` method (gray) consistently performs best, serving as an approximate upper bound. This method likely represents joint training on all data simultaneously, which is not a continual learning scenario but a performance benchmark.

4. **Performance Clustering:** After T3, the methods (excluding `finetuning`, `mean-IMM`, and `joint*`) form a tight performance cluster, with `EWC` and `MAS` often at the top of this group.

5. **Task-Specific Anomaly:** The spike for `joint*` at T7 is unusual and may indicate that Task 7 is particularly easy or similar to previous tasks when all data is available.

### Interpretation

This chart is a classic evaluation of continual (or lifelong) learning algorithms. It demonstrates the core challenge: a model's ability to retain performance on old tasks while learning new ones.

* **What the data suggests:** The data strongly suggests that specialized continual learning methods (`EWC`, `MAS`, `SI`, `LwF`, etc.) are effective at reducing catastrophic forgetting compared to naive `finetuning`. However, they still suffer a significant performance drop compared to the `joint*` upper bound, indicating the problem is not fully solved.

* **How elements relate:** The x-axis represents time/sequence in a learning process. The downward slope of most lines from left to right visually encodes the "forgetting" phenomenon. The vertical spread between lines at any task point (e.g., T4) quantifies the relative effectiveness of each algorithm at that stage.

* **Notable outliers/trends:**

    * **Outlier:** `PackNet`'s perfectly flat line is a major outlier, suggesting a method that partitions network parameters for each task, preventing interference but also preventing knowledge transfer or incremental capacity use.

    * **Trend:** The general trend for all methods (except `PackNet`) is a sharp initial decline followed by a gradual leveling off. This suggests the most significant forgetting happens early in the sequence.

    * **Anomaly:** The `joint*` method's spike at T7 and very high performance at T8 highlight that the tasks themselves may have varying difficulty or relatedness, which affects evaluation. The `n/a` for its standard deviation implies it was only run once, as a single benchmark.

**In summary, the chart provides a comparative snapshot of algorithmic resilience to forgetting, showing that while progress has been made, matching the performance of joint training remains a significant challenge in continual learning.**

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Evaluation on Task Accuracy Across Training Sequences

### Overview

The image is a multi-line graph comparing the accuracy performance of various machine learning methods across eight training tasks (T1-T8). The y-axis represents accuracy percentage (0-100%), while the x-axis shows sequential training tasks. The graph includes eight distinct data series representing different model configurations, with a legend at the top providing method names, colors, and performance metrics.

### Components/Axes

- **X-axis**: "Training Sequence Per Task" labeled with tasks T1 through T8

- **Y-axis**: "Accuracy %" scaled from 0 to 100

- **Legend**: Located at the top with 8 entries:

    1. finetuning: 36.82 (22.97) - black dotted line

    2. PackNet: 47.23 (0.00) - green star line

    3. EWC: 51.09 (2.40) - yellow line

    4. MAS: 50.57 (0.91) - red line

    5. LwF: 47.18 (8.78) - blue line

    6. EBLL: 47.82 (6.88) - dark blue line

    7. mean-IMM: 38.60 (19.16) - light brown dashed line

    8. mode-IMM: 45.14 (1.87) - dark brown dashed line

- **Additional Elements**:

    - Vertical gray lines separating task boundaries

    - Yellow triangle markers at task boundaries

    - Vertical dashed lines at task boundaries

    - "joint*": 60.13 (n/a) - gray triangle marker

### Detailed Analysis

1. **Finetuning** (black dotted line):

    - Starts at ~80% on T1

    - Sharp decline to ~40% by T3

    - Fluctuates between 30-50% through T8

    - Legend value: 36.82 (22.97 uncertainty)

2. **PackNet** (green star line):

    - Maintains ~47% accuracy across all tasks

    - Minimal variation (0.00 uncertainty)

    - Consistent performance with slight dip at T7

3. **EWC** (yellow line):

    - Peaks at ~51% on T1

    - Gradual decline to ~40% by T8

    - Moderate stability with 2.40 uncertainty

4. **MAS** (red line):

    - Starts at ~50% on T1

    - Slight decline to ~45% by T8

    - Very stable with 0.91 uncertainty

5. **LwF** (blue line):

    - Begins at ~47% on T1

    - Sharp drop to ~30% by T3

    - Recovers to ~40% by T8

    - High variability (8.78 uncertainty)

6. **EBLL** (dark blue line):

    - Starts at ~48% on T1

    - Gradual decline to ~40% by T8

    - Moderate stability (6.88 uncertainty)

7. **mean-IMM** (light brown dashed line):

    - Starts at ~38% on T1

    - Sharp decline to ~20% by T3

    - Recovers to ~30% by T8

    - High variability (19.16 uncertainty)

8. **mode-IMM** (dark brown dashed line):

    - Starts at ~45% on T1

    - Gradual decline to ~35% by T8

    - Low variability (1.87 uncertainty)

### Key Observations

1. **Performance Variance**:

    - EWC has the highest legend value of the eight listed methods (51.09) but declines over time

    - PackNet maintains most consistent performance (47.23% ±0.00)

    - Finetuning has highest initial accuracy (~80% on T1) but largest drop-off

2. **Task-Specific Patterns**:

    - T1 shows highest overall performance across methods

    - T8 has most significant performance drops for most methods

    - Joint* method (gray triangle) shows highest performance at T8; its legend value (60.13) is also the highest overall

3. **Uncertainty Patterns**:

    - Finetuning has highest uncertainty (22.97)

    - PackNet has perfect certainty (0.00)

    - MAS shows the lowest nonzero uncertainty (0.91)
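The ordering claims in the list above can be checked directly against the legend by sorting the parenthesized values, which this description calls "uncertainty". A minimal sketch, with the `UNCERTAINTY` table transcribed from the eight-entry legend above and variable names chosen here for illustration:

```python
# Parenthesized legend values for the eight listed methods
# (joint* is omitted because its value is n/a).
UNCERTAINTY = {
    "finetuning": 22.97,
    "PackNet": 0.00,
    "EWC": 2.40,
    "MAS": 0.91,
    "LwF": 8.78,
    "EBLL": 6.88,
    "mean-IMM": 19.16,
    "mode-IMM": 1.87,
}

# Method names ordered from smallest to largest parenthesized value.
by_uncertainty = sorted(UNCERTAINTY, key=UNCERTAINTY.get)

lowest = by_uncertainty[0]
highest = by_uncertainty[-1]
# Smallest value that is strictly greater than zero.
lowest_nonzero = min(
    (name for name, value in UNCERTAINTY.items() if value > 0),
    key=UNCERTAINTY.get,
)

for name in by_uncertainty:
    print(f"{name:<12}{UNCERTAINTY[name]:>6.2f}")
```

Sorting makes it easy to see where each method falls relative to the others, including the gap between the three smallest values and the rest.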

### Interpretation

The data demonstrates significant variability in model performance across training tasks. PackNet's perfect certainty and consistent performance suggest superior stability, while finetuning's high initial accuracy but large uncertainty indicates potential overfitting. The joint* method's superior T8 performance (60.13%) suggests effective long-term adaptation, though its "n/a" uncertainty makes this less reliable. The mean-IMM line's high variability (19.16) indicates inconsistent performance across tasks, while mode-IMM's stability (1.87) suggests common failure modes. The sharp declines observed in most methods after T1 highlight challenges in maintaining performance across sequential tasks, with EWC and MAS showing better long-term retention than LwF and finetuning.
