## Line Chart: Per-Period Regret Over Time

### Overview

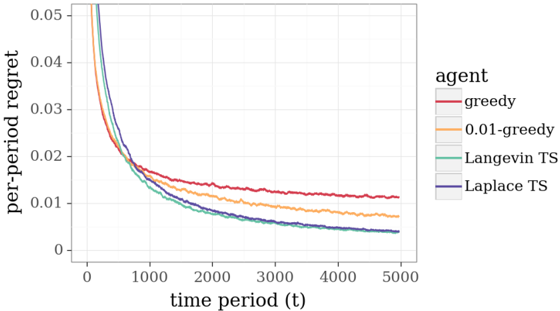

The image is a line chart comparing the performance of four different algorithmic agents over time. The performance metric is "per-period regret," which decreases for all agents as the time period increases, indicating learning or optimization. The chart is rendered with a clean, academic style, featuring a white background, light gray grid lines, and distinct colored lines for each agent.

### Components/Axes

* **Y-Axis (Vertical):**

* **Label:** "per-period regret"

* **Scale:** Linear scale from 0 to 0.05.

* **Major Ticks:** 0, 0.01, 0.02, 0.03, 0.04, 0.05.

* **X-Axis (Horizontal):**

* **Label:** "time period (t)"

* **Scale:** Linear scale from 0 to 5000.

* **Major Ticks:** 0, 1000, 2000, 3000, 4000, 5000.

* **Legend:**

* **Position:** Centered on the right side of the chart area.

* **Title:** "agent"

* **Entries (from top to bottom):**

1. **greedy** - Represented by a red line.

2. **0.01-greedy** - Represented by an orange line.

3. **Langevin TS** - Represented by a green line.

4. **Laplace TS** - Represented by a blue line.

### Detailed Analysis

The chart plots four data series, each showing a decreasing trend in per-period regret as time progresses.

1. **greedy (Red Line):**

* **Trend:** Starts at the highest regret value (off the chart, >0.05 at t=0). It decreases rapidly initially but then flattens out, maintaining the highest regret among all agents for the entire duration.

* **Approximate Data Points:**

* t=0: >0.05

* t=1000: ~0.018

* t=2000: ~0.014

* t=3000: ~0.012

* t=5000: ~0.011

2. **0.01-greedy (Orange Line):**

* **Trend:** Starts very high (off chart, >0.05 at t=0). It decreases sharply, crossing below the greedy line before t=1000. It continues to decrease but remains above the two Thompson Sampling (TS) lines.

* **Approximate Data Points:**

* t=0: >0.05

* t=1000: ~0.016

* t=2000: ~0.011

* t=3000: ~0.009

* t=5000: ~0.007

3. **Langevin TS (Green Line):**

* **Trend:** Starts high (off chart, >0.05 at t=0). It decreases very rapidly, converging closely with the Laplace TS line after t=2000. It performs better (lower regret) than both greedy variants.

* **Approximate Data Points:**

* t=0: >0.05

* t=1000: ~0.015

* t=2000: ~0.008

* t=3000: ~0.006

* t=5000: ~0.004

4. **Laplace TS (Blue Line):**

* **Trend:** Starts high (off chart, >0.05 at t=0). It shows the steepest initial decline and maintains the lowest regret for most of the timeline, though it is nearly identical to Langevin TS from t=2000 onward.

* **Approximate Data Points:**

* t=0: >0.05

* t=1000: ~0.015

* t=2000: ~0.008

* t=3000: ~0.006

* t=5000: ~0.004

### Key Observations

* **Performance Hierarchy:** There is a clear and consistent ordering of performance from worst to best: greedy < 0.01-greedy < Langevin TS ≈ Laplace TS.

* **Convergence:** All agents show diminishing returns in regret reduction over time. The rate of improvement slows significantly after t=2000.

* **Similarity of TS Methods:** The two Thompson Sampling variants (Langevin and Laplace) exhibit nearly identical performance after the initial learning phase (t>2000), suggesting their regret-minimization properties converge in this scenario.

* **Impact of Exploration:** The pure greedy agent performs worst. Introducing a small exploration rate (0.01-greedy) yields a significant improvement. The probabilistic exploration inherent in Thompson Sampling methods yields the best performance.

### Interpretation

This chart demonstrates the classic exploration-exploitation trade-off in multi-armed bandit or reinforcement learning problems. The "regret" metric quantifies the cost of not choosing the optimal action at each time step.

* **What the data suggests:** The data strongly suggests that for this specific problem, algorithms incorporating sophisticated probabilistic exploration (Thompson Sampling) are more efficient at minimizing long-term regret than simple epsilon-greedy strategies. The near-identical performance of Langevin and Laplace TS indicates that the specific approximation method for the posterior distribution may be less critical than the fundamental decision-making framework of Thompson Sampling itself.

* **Relationship between elements:** The x-axis (time) represents the learning process. As the agents gather more data (t increases), their models improve, leading to better decisions and lower regret (y-axis). The separation between the lines illustrates the relative efficiency of each agent's learning algorithm.

* **Notable trends/anomalies:** The most notable trend is the rapid initial descent of all curves, followed by a long tail of slow improvement. This is characteristic of learning curves where easy gains are made early, and further optimization becomes progressively harder. There are no apparent anomalies; the curves behave as expected for well-understood algorithms. The fact that all regret values are positive and decreasing confirms that all agents are learning, but at different rates.