## System Architecture Diagram: APTPU Generation Framework

### Overview

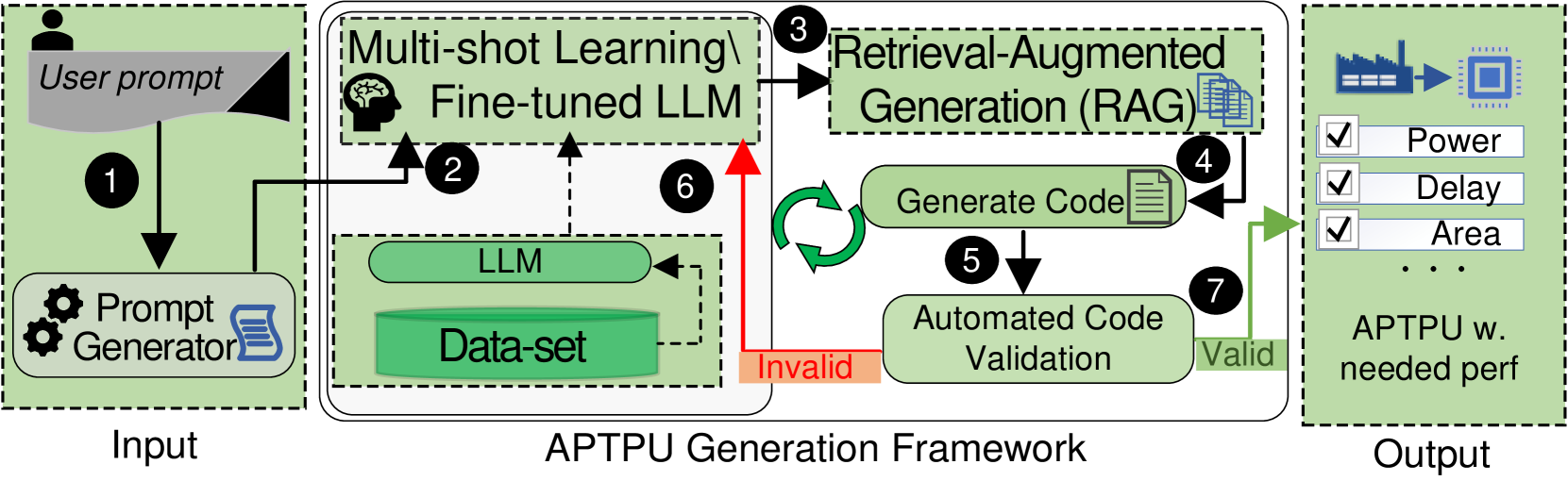

The image is a technical flowchart illustrating the architecture and workflow of the "APTPU Generation Framework." It depicts an automated system that takes a user prompt as input and generates a specialized processing unit (APTPU) with specified performance characteristics. The process involves multiple stages of prompt engineering, large language model (LLM) processing, retrieval-augmented generation, code generation, and validation.

### Components/Flow

The diagram is organized into three primary vertical sections, from left to right:

1. **Input Section (Left, light green background):** Contains the starting point of the workflow.

2. **APTPU Generation Framework (Center, white background):** The core processing engine, containing multiple interconnected modules.

3. **Output Section (Right, light green background):** Shows the final deliverable.

**Key Components and Their Spatial Placement:**

* **User prompt:** Top-left corner, represented by a document icon with a user silhouette.

* **Prompt Generator:** Below the User prompt, represented by a gear icon and a document icon.

* **Multi-shot Learning / Fine-tuned LLM:** Top-center of the framework section, represented by a brain icon.

* **LLM & Data-set:** Center-left of the framework, shown as a cylinder (Data-set) connected to a rounded rectangle (LLM).

* **Retrieval-Augmented Generation (RAG):** Top-right of the framework, represented by a document stack icon.

* **Generate Code:** Center-right of the framework, represented by a document icon.

* **Automated Code Validation:** Below "Generate Code," represented by a rounded rectangle.

* **Output Block:** Far right, showing a factory icon leading to a chip icon, followed by a checklist and the final label "APTPU w. needed perf".

**Process Flow (Numbered Steps):**

The flow is indicated by black arrows and numbered circles (1-7).

1. The **User prompt** feeds into the **Prompt Generator**.

2. The output of the Prompt Generator is sent to the **Multi-shot Learning / Fine-tuned LLM**.

3. The LLM's output is sent to the **Retrieval-Augmented Generation (RAG)** module.

4. The RAG module's output is sent to the **Generate Code** module.

5. Generated code is sent to **Automated Code Validation**.

6. **Feedback Loop:** If validation fails (marked by a red arrow labeled "Invalid"), the process loops back to the **Multi-shot Learning / Fine-tuned LLM** for refinement.

7. If validation succeeds (marked by a green arrow labeled "Valid"), the process proceeds to the **Output**.
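The numbered flow above can be sketched as a bounded generate-and-validate loop. This is a minimal illustration, not the framework's actual implementation: every stage is passed in as a plain callable, and the names `run_pipeline`, `ValidationResult`, and `max_attempts` are hypothetical. The diagram does not state a retry limit, so the bound here is an assumption.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ValidationResult:
    """Outcome of the (hypothetical) automated code validation stage."""
    valid: bool
    feedback: str = ""


def run_pipeline(
    user_prompt: str,
    build_prompt: Callable[[str], str],
    query_llm: Callable[[str, str], str],
    retrieve: Callable[[str], str],
    generate_code: Callable[[str], str],
    validate: Callable[[str], ValidationResult],
    max_attempts: int = 3,  # assumption: the diagram shows no retry limit
) -> Optional[str]:
    """Return validated code, or None if every attempt fails validation."""
    prompt = build_prompt(user_prompt)        # steps 1-2: Prompt Generator
    feedback = ""
    for _ in range(max_attempts):
        draft = query_llm(prompt, feedback)   # step 2: fine-tuned LLM
        context = retrieve(draft)             # step 3: RAG
        code = generate_code(context)         # step 4: Generate Code
        result = validate(code)               # step 5: validation
        if result.valid:
            return code                       # step 7: "Valid"
        feedback = result.feedback            # step 6: "Invalid", retry
    return None
```

Passing the stages as callables keeps the sketch independent of any particular LLM client or validator; each one can be replaced by a stub when testing the control flow.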

**Internal Data Flow within the Framework:**

* A dashed arrow connects the **Data-set** to the **LLM**, indicating training or reference data.

* A dashed arrow connects the **LLM** to the **Multi-shot Learning / Fine-tuned LLM**, suggesting the base model is used to create the fine-tuned version.

* A circular green arrow between "Generate Code" and "Automated Code Validation" indicates an iterative refinement loop.

### Detailed Analysis

**Textual Content Transcription:**

* **Input Section:** "User prompt", "Prompt Generator"

* **APTPU Generation Framework:**

  * "Multi-shot Learning \ Fine-tuned LLM"

  * "Retrieval-Augmented Generation (RAG)"

  * "LLM"

  * "Data-set"

  * "Generate Code"

  * "Automated Code Validation"

  * Flow labels: "Invalid" (on red arrow), "Valid" (on green arrow)

* **Output Section:**

  * Checklist items: "Power", "Delay", "Area", "..." (ellipsis indicating more items)

  * Final label: "APTPU w. needed perf"

**Component Relationships:**

The system is a sequential pipeline with a feedback loop. The **Prompt Generator** acts as an initial translator of user intent. The core intelligence resides in the **Fine-tuned LLM**, which draws on a **Data-set** (via the base LLM) and on a **RAG** module that retrieves relevant information during generation. The **Generate Code** module produces hardware description code, which is then checked by **Automated Code Validation**. The "Invalid" feedback path (Step 6) returns failed code to the LLM stage for another attempt. The diagram does not show whether the model itself is updated, so the loop is best read as iterative regeneration rather than retraining. The "Valid" path (Step 7) leads to the final product.
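To illustrate how a prompt generator might combine a user request with multi-shot examples, here is a minimal sketch. The function name `build_few_shot_prompt`, the section markers, and the instruction text are all hypothetical; the diagram does not specify a prompt format.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    user_request: str,
    examples: List[Tuple[str, str]],
) -> str:
    """Assemble a multi-shot prompt from (specification, code) pairs.

    The layout below is an assumed convention, not one taken from the diagram.
    """
    # Hypothetical system instruction placed before the worked examples.
    parts = ["Generate hardware description code for the specification."]
    for spec, code in examples:
        # Each worked example shows the model one specification/code pair.
        parts.append(f"### Specification\n{spec}\n### Code\n{code}")
    # The user's request goes last, with the code section left open
    # for the model to complete.
    parts.append(f"### Specification\n{user_request}\n### Code\n")
    return "\n\n".join(parts)
```

Calling it with a list of example pairs and a new request yields a single string whose final section is left open for the model to fill in.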

### Key Observations

1. **Hybrid AI Architecture:** The framework combines several AI techniques: prompt engineering, multi-shot learning, fine-tuning, and Retrieval-Augmented Generation (RAG). Layering these techniques suggests the design targets higher accuracy and adaptability than a single unassisted LLM call would provide.

2. **Closed-Loop Validation:** The presence of the "Automated Code Validation" step with a feedback loop to the LLM is a key feature. It implies the system doesn't just generate code once but iteratively improves it until it meets functional or performance criteria.

3. **Performance-Driven Output:** The output is explicitly defined by a checklist of hardware metrics ("Power", "Delay", "Area"), indicating the framework's goal is to generate hardware (an APTPU) optimized for specific, quantifiable performance targets.

4. **Modular Design:** Each major function (prompt generation, LLM processing, retrieval, code generation, validation) is a distinct module, suggesting a flexible and maintainable system architecture.
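The performance checklist in observation 3 can be read as a set of upper bounds that a generated design must satisfy. The sketch below shows one way such a check might look; the `Metrics` fields, their units, and the `violations` helper are assumptions made for illustration, since the diagram lists only the metric names.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Metrics:
    """Hardware metrics; the units are assumed, not taken from the diagram."""
    power_mw: float
    delay_ns: float
    area_um2: float


def violations(measured: Metrics, target: Metrics) -> List[str]:
    """Return the checklist items whose measured value exceeds the target."""
    failed = []
    if measured.power_mw > target.power_mw:
        failed.append("Power")
    if measured.delay_ns > target.delay_ns:
        failed.append("Delay")
    if measured.area_um2 > target.area_um2:
        failed.append("Area")
    return failed
```

An empty list means every listed target is met; a non-empty list names the metrics that would send the design back through the "Invalid" path.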

### Interpretation

This diagram illustrates an **AI-driven hardware design automation tool**. The diagram does not expand the acronym "APTPU"; the "TPU" suffix suggests a variant of a tensor processing unit, possibly an approximate TPU, but this cannot be confirmed from the image alone. The framework's purpose is to bridge the gap between high-level design specifications, possibly in natural language ("User prompt"), and low-level, synthesizable hardware code.

The inclusion of RAG is particularly significant. It suggests the system doesn't rely solely on the LLM's parametric knowledge but can actively retrieve and incorporate up-to-date or specialized design rules, component libraries, or architectural templates from an external knowledge base during the generation process. This would greatly enhance the relevance and correctness of the generated hardware designs.
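As a rough illustration of the retrieval step, the sketch below ranks knowledge-base entries by word overlap with the query and prepends the best matches to the request. This is a deliberately simple stand-in: production RAG systems typically use embedding-based similarity, and the diagram does not say which method the framework uses. The function names and the prompt layout are hypothetical.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return up to k documents sharing at least one token with the query."""
    query_tokens = tokenize(query)
    scored = [
        (len(query_tokens & tokenize(doc)), index, doc)
        for index, doc in enumerate(documents)
    ]
    # Highest overlap first; ties keep the original document order.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [doc for score, _, doc in scored[:k] if score > 0]


def augment(query: str, documents: List[str], k: int = 2) -> str:
    """Prepend the retrieved entries to the request (assumed prompt layout)."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Context:\n{context}\n\nRequest:\n{query}"
```

For example, given a small made-up knowledge base of design notes, `augment("low power systolic array", notes)` would place the notes that mention those terms ahead of the request before it reaches the code generator.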

The feedback loop turns the system from a one-shot code generator into a **generate-and-validate engine**. This is not correct-by-construction synthesis: correctness is established by checking each candidate after generation, and failures are fed back to the LLM stage for another attempt. Whether the model also learns from those failures over time is not shown in the diagram. The final output is therefore a hardware block that has passed the validator's checks against the specified power, delay, and area targets (commonly grouped as power, performance, and area, or PPA), which are the fundamental metrics in chip design.

In essence, the image depicts a pipeline that automates the specialized hardware design process using a suite of modern AI techniques, aiming to reduce design time, lower the expertise barrier, and reliably produce optimized hardware accelerators.