## Diagram: Paradigms for Integrating Large Language Models (LLMs) and Knowledge Graphs (KGs)

### Overview

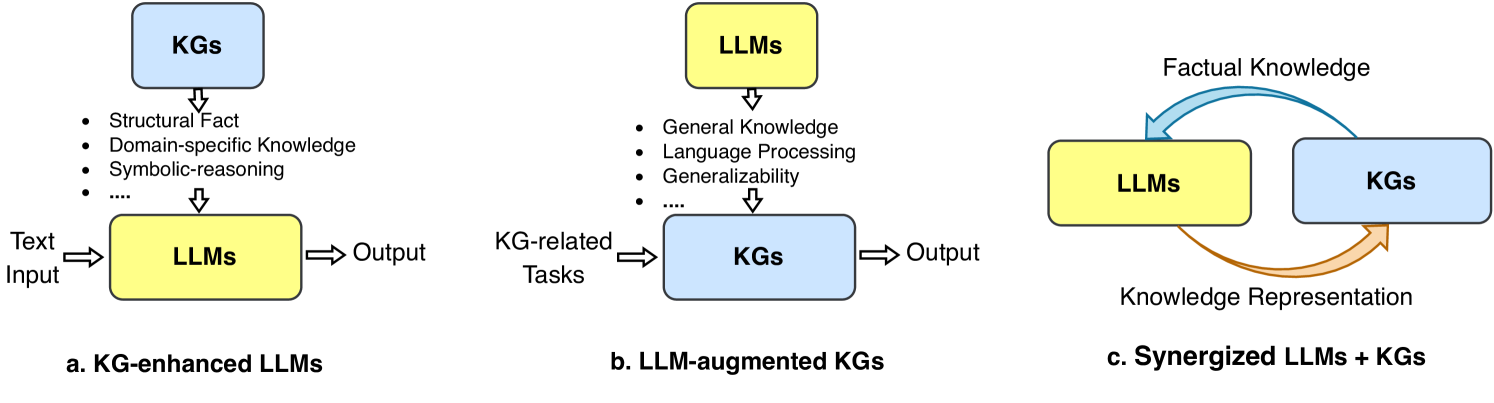

The image is a technical diagram illustrating three distinct paradigms for the interaction and integration between Large Language Models (LLMs) and Knowledge Graphs (KGs). It is divided into three horizontally arranged sections, labeled a, b, and c, each depicting a different architectural relationship and flow of information.

### Components/Axes

The diagram uses consistent visual elements:

* **Boxes:** Two types of rounded rectangles represent the core components.
    * **Blue Boxes:** Labeled "KGs" (Knowledge Graphs).
    * **Yellow Boxes:** Labeled "LLMs" (Large Language Models).
* **Arrows:** Indicate the direction of information flow or influence.
    * **Straight, Downward Arrows:** Show the flow of capabilities or knowledge from one component to another.
    * **Straight, Horizontal Arrows:** Indicate input and output for a process.
    * **Curved Arrows (in section c):** Represent a bidirectional, synergistic exchange.
* **Text Labels:** Provide titles, component names, and descriptive bullet points.

* **Text Labels:** Provide titles, component names, and descriptive bullet points.

### Detailed Analysis

The diagram is segmented into three independent paradigms:

**a. KG-enhanced LLMs (Left Section)**

* **Layout:** A blue "KGs" box is positioned at the top. A downward arrow points to a yellow "LLMs" box below it.
* **Flow:** Text enters the LLM box from the left ("Text Input"), and an output exits to the right ("Output").
* **Capabilities from KGs:** The arrow from KGs to LLMs is annotated with a bulleted list describing the knowledge transferred:
    * Structural Fact
    * Domain-specific Knowledge
    * Symbolic-reasoning
    * ... (ellipsis indicating additional items)
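One possible way to realize paradigm (a) at inference time is to retrieve KG facts relevant to the text input and inject them into the LLM's prompt. The sketch below is a minimal illustration under stated assumptions: a toy in-memory KG of `(head, relation, tail)` triples, naive substring matching in place of real entity linking, and a hypothetical `llm` callable standing in for any model. None of these names come from the diagram.

```python
from typing import Callable

# A triple is (head entity, relation, tail entity).
Triple = tuple[str, str, str]

# Toy in-memory KG; these facts are illustrative placeholders.
KG: list[Triple] = [
    ("Paris", "capital_of", "France"),
    ("France", "located_in", "Europe"),
    ("Berlin", "capital_of", "Germany"),
]


def retrieve_facts(text: str, kg: list[Triple]) -> list[Triple]:
    """Return triples whose head or tail entity appears in the text.

    Naive substring matching stands in for real entity linking (assumption).
    """
    return [t for t in kg if t[0] in text or t[2] in text]


def build_prompt(text: str, facts: list[Triple]) -> str:
    """Prepend the retrieved facts to the input as grounding context."""
    fact_lines = "\n".join(f"- {h} {r} {t}" for h, r, t in facts)
    return f"Known facts:\n{fact_lines}\n\nQuestion: {text}"


def kg_enhanced_answer(
    text: str, kg: list[Triple], llm: Callable[[str], str]
) -> str:
    """Text Input -> KG facts injected into the prompt -> LLM -> Output.

    `llm` is a hypothetical stand-in for any text-in, text-out model.
    """
    return llm(build_prompt(text, retrieve_facts(text, kg)))
```

With an identity stub (`lambda p: p`) in place of a model, `kg_enhanced_answer("What is the capital of France?", KG, lambda p: p)` returns the assembled prompt, which lists the two France-related triples but not the Berlin one. Prompt-time injection is only one option; KG knowledge can also be introduced during training.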

**b. LLM-augmented KGs (Center Section)**

* **Layout:** A yellow "LLMs" box is positioned at the top. A downward arrow points to a blue "KGs" box below it.
* **Flow:** "KG-related Tasks" enter the KG box from the left, and an "Output" exits to the right.
* **Capabilities from LLMs:** The arrow from LLMs to KGs is annotated with a bulleted list describing the capabilities provided:
    * General Knowledge
    * Language Processing
    * Generalizability
    * ... (ellipsis indicating additional items)
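For paradigm (b), an LLM can be applied to a KG-related task such as relation extraction: it reads text, proposes triples, and the KG absorbs them. This minimal sketch assumes a hypothetical `llm` callable and a made-up `head | relation | tail` output format; a real pipeline would need much stronger validation than the line parser shown here.

```python
from typing import Callable

# A triple is (head entity, relation, tail entity).
Triple = tuple[str, str, str]


def extraction_prompt(sentence: str) -> str:
    """Ask the model for facts as 'head | relation | tail' lines.

    This output format is an assumption made for the sketch.
    """
    return (
        "Extract facts from the sentence as lines of the form "
        "'head | relation | tail'.\n"
        f"Sentence: {sentence}"
    )


def parse_triples(llm_output: str) -> list[Triple]:
    """Keep only lines with exactly three non-empty '|'-separated fields."""
    triples: list[Triple] = []
    for line in llm_output.splitlines():
        parts = [p.strip() for p in line.split("|")]
        if len(parts) == 3 and all(parts):
            triples.append((parts[0], parts[1], parts[2]))
    return triples


def augment_kg(
    kg: set[Triple], sentence: str, llm: Callable[[str], str]
) -> set[Triple]:
    """KG-related task (relation extraction) -> LLM -> triples merged into the KG.

    `llm` is a hypothetical stand-in for any text-in, text-out model.
    Returns a new set; the input KG is left unchanged.
    """
    return kg | set(parse_triples(llm(extraction_prompt(sentence))))
```

Passing a stub such as `lambda prompt: "Marie Curie | born_in | Warsaw"` is enough to exercise the parsing and merge steps without a real model; malformed lines in the model output are silently dropped rather than added to the KG.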

**c. Synergized LLMs + KGs (Right Section)**

* **Layout:** A yellow "LLMs" box and a blue "KGs" box are placed side-by-side at the same horizontal level.
* **Flow:** Two curved arrows create a closed loop between them.
    * A **blue, curved arrow** flows from the top of the "KGs" box to the top of the "LLMs" box. It is labeled **"Factual Knowledge"**.
    * An **orange, curved arrow** flows from the bottom of the "LLMs" box to the bottom of the "KGs" box. It is labeled **"Knowledge Representation"**.
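The closed loop in section (c) can be sketched as a single round that passes KG facts to the LLM ("Factual Knowledge") and merges the triples the LLM proposes back into the KG ("Knowledge Representation"). The `llm` callable, the function names, and the rule that rejects a proposed triple whose `(head, relation)` pair already maps to a different tail are all illustrative assumptions, not part of the diagram; that rule treats every relation as single-valued, which real KGs do not guarantee.

```python
from typing import Callable

# A triple is (head entity, relation, tail entity).
Triple = tuple[str, str, str]

# Hypothetical LLM interface: given grounding facts and a document,
# return the triples the model proposes.
ProposeFn = Callable[[list[Triple], str], list[Triple]]


def facts_for(entity: str, kg: set[Triple]) -> list[Triple]:
    """KG -> LLM ("Factual Knowledge"): facts mentioning the entity, sorted."""
    return sorted(t for t in kg if entity in (t[0], t[2]))


def synergy_round(
    kg: set[Triple], entity: str, document: str, llm: ProposeFn
) -> set[Triple]:
    """One turn of the loop: ground the LLM with KG facts, then merge the
    triples it proposes ("Knowledge Representation") back into the KG."""
    grounding = facts_for(entity, kg)
    proposed = llm(grounding, document)
    # Assumed consistency rule: treat every relation as single-valued and
    # reject a proposal whose (head, relation) already maps to another tail.
    known = {(h, r): t for h, r, t in kg}
    accepted = {t for t in proposed if known.get((t[0], t[1]), t[2]) == t[2]}
    return kg | accepted
```

Feeding each returned KG into the next call repeats the cycle that the curved arrows depict. The rejection rule is the point where the KG's precision constrains what the LLM may add, while the proposals are where the LLM's flexibility extends the KG.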

### Key Observations

1. **Directional Dependency:** Sections (a) and (b) depict a unidirectional, hierarchical relationship where one system enhances the other. Section (c) depicts a bidirectional, peer-to-peer relationship.

2. **Role Specialization:** The bullet points define the complementary strengths each system contributes: KGs supply structural facts, domain-specific knowledge, and symbolic reasoning, while LLMs supply general knowledge, language processing, and generalizability.

3. **Visual Consistency:** The color coding (blue for KGs, yellow for LLMs) is maintained across all three panels, allowing for easy comparison of the structural differences between paradigms.

4. **Process Context:** Sections (a) and (b) include explicit input/output labels ("Text Input", "KG-related Tasks", "Output"), grounding the abstract models in a practical processing context. Section (c) abstracts this into a continuous cycle of knowledge exchange.

### Interpretation

This diagram serves as a conceptual framework for understanding the evolving field of neuro-symbolic AI, specifically the integration of neural networks (LLMs) and symbolic knowledge bases (KGs).

* **Paradigm (a) - KG-enhanced LLMs:** This represents a "knowledge injection" approach. The goal is to ground the vast but potentially unstructured and hallucination-prone knowledge of an LLM with the precise, structured facts from a KG to improve accuracy and reliability, especially for domain-specific or factual question-answering.

* **Paradigm (b) - LLM-augmented KGs:** This represents an "automation and enrichment" approach. Here, the LLM's powerful language understanding and generation capabilities are used to automate labor-intensive KG tasks like entity linking, relation extraction, schema induction, and query generation, making KG construction and maintenance more scalable.

* **Paradigm (c) - Synergized LLMs + KGs:** This is the most ambitious of the three visions, proposing a continuous, co-evolutionary loop. The KG provides verified **Factual Knowledge** to constrain and inform the LLM's outputs. In return, the LLM helps structure unstructured data into formal **Knowledge Representation** (e.g., generating RDF triples or updating ontologies) to expand and refine the KG. The intended result is a self-improving system in which each component addresses the other's weaknesses: the KG provides precision and explainability, while the LLM provides flexibility and scalability.

The progression from (a) to (c) illustrates a shift from using one system as a tool for the other towards a deeply integrated partnership, aiming to combine the reasoning strengths of symbolic AI with the generative and adaptive strengths of modern deep learning.