## Diagram: Integration of LLMs and KGs in AI Systems

### Overview

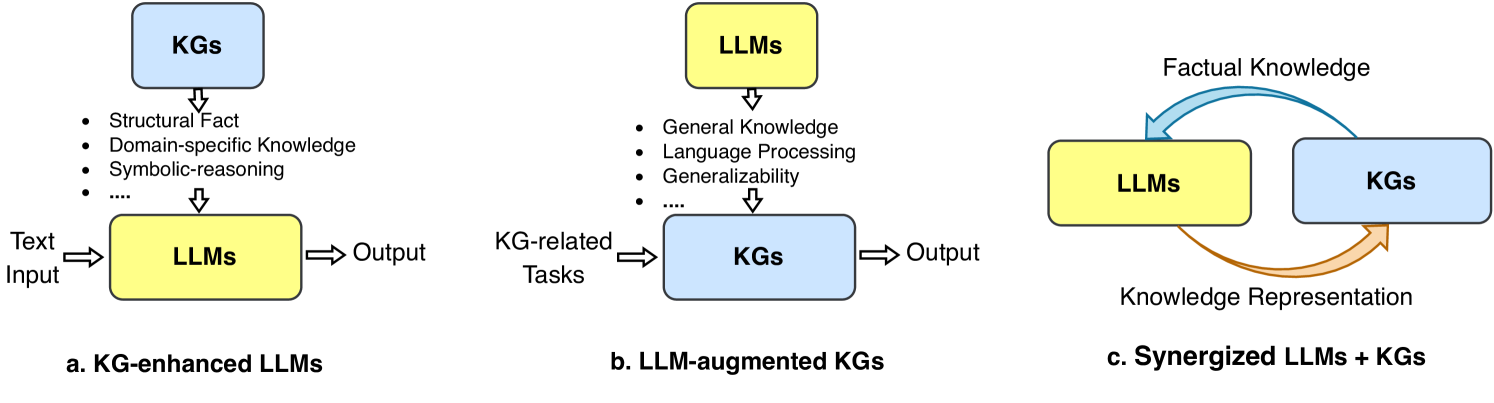

The diagram illustrates three conceptual frameworks for integrating Large Language Models (LLMs) and Knowledge Graphs (KGs): KG-enhanced LLMs, LLM-augmented KGs, and synergized LLMs + KGs. KGs supply structured, domain-specific knowledge, and LLMs supply language processing and generalization; only the third framework exchanges knowledge in both directions.

### Components/Axes

1. **Color Coding**:
   - **Blue**: Represents KGs (Knowledge Graphs).
   - **Yellow**: Represents LLMs (Large Language Models).
   - **Orange Arrows**: Denote "Knowledge Representation" or "Factual Knowledge" flow.

2. **Key Labels**:
   - **Section a (KG-enhanced LLMs)**:
     - Input: Text Input → LLMs → Output.
     - KGs provide: Structural Fact, Domain-specific Knowledge, Symbolic-reasoning.
   - **Section b (LLM-augmented KGs)**:
     - Input: KG-related Tasks → KGs → Output.
     - LLMs contribute: General Knowledge, Language Processing, Generalizability.
   - **Section c (Synergized LLMs + KGs)**:
     - Bidirectional flow: LLMs ↔ KGs via "Factual Knowledge" and "Knowledge Representation."

3. **Flow Direction**:
   - Arrows indicate unidirectional or bidirectional knowledge transfer.
   - Orange arrows in section c highlight cyclical knowledge refinement.
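
The flow directions listed above can be written down as a small directed graph. This is only a sketch for reading the figure: the `PANELS` dictionary and the `is_bidirectional` helper are hypothetical names, and the edges encode nothing beyond the directions described in this section.

```python
# Each panel as a list of (source, target) knowledge-flow edges.
# The names "KG" and "LLM" and this encoding are illustrative assumptions.
PANELS = {
    "a": [("KG", "LLM")],                 # KG-enhanced LLMs
    "b": [("LLM", "KG")],                 # LLM-augmented KGs
    "c": [("KG", "LLM"), ("LLM", "KG")],  # synergized LLMs + KGs
}


def is_bidirectional(edges):
    """Return True if some edge also appears in the reverse direction."""
    pairs = set(edges)
    return any((target, source) in pairs for source, target in pairs)


for name, edges in PANELS.items():
    kind = "bidirectional" if is_bidirectional(edges) else "unidirectional"
    print(f"section {name}: {kind}")
```

Under this encoding, only section c contains an edge together with its reverse, which is what the cyclical orange arrows depict.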

### Detailed Analysis

- **Section a**:
  - KGs act as a knowledge reservoir, injecting structured facts and domain expertise into LLMs to improve output quality.
  - Example: A medical LLM enhanced with a KG of clinical guidelines.

- **Section b**:
  - LLMs enrich KGs by adding general knowledge and language understanding, enabling KGs to handle dynamic, real-world tasks.
  - Example: A KG for customer service augmented with an LLM’s ability to interpret natural language queries.

- **Section c**:
  - Synergy: LLMs and KGs mutually refine each other’s outputs. LLMs process unstructured text, while KGs validate and structure the knowledge.
  - Example: A legal document analysis system where LLMs extract clauses and KGs ensure compliance with regulations.
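
Sections a and b can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a method shown in the diagram: the `Triple` type, the toy facts, and the helpers `facts_about`, `build_prompt`, `extract_triples`, and `augment_kg` are all hypothetical, and `extract_triples` is a rule-based stand-in for what would be an LLM call in a real system.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    subject: str
    relation: str
    obj: str


# Toy in-memory KG; the facts are illustrative placeholders.
KG: set[Triple] = {
    Triple("aspirin", "treats", "headache"),
    Triple("aspirin", "interacts_with", "warfarin"),
}


def facts_about(entity: str, kg: set[Triple]) -> list[Triple]:
    """Return all triples that mention the entity, in a stable sorted order."""
    return sorted(t for t in kg if entity in (t.subject, t.obj))


def build_prompt(question: str, entity: str, kg: set[Triple]) -> str:
    """Section a: prepend KG facts to the text input before it reaches the LLM."""
    lines = [f"- {t.subject} {t.relation} {t.obj}" for t in facts_about(entity, kg)]
    return "Known facts:\n" + "\n".join(lines) + f"\n\nQuestion: {question}"


def extract_triples(text: str) -> list[Triple]:
    """Stand-in for an LLM extractor: accepts only three-word 'X relation Y' sentences."""
    triples = []
    for sentence in text.split("."):
        words = sentence.split()
        if len(words) == 3:
            triples.append(Triple(*(w.lower() for w in words)))
    return triples


def augment_kg(text: str, kg: set[Triple]) -> set[Triple]:
    """Section b: return a new KG extended with facts extracted from unstructured text."""
    return kg | set(extract_triples(text))


print(build_prompt("Can I take aspirin with warfarin?", "aspirin", KG))
print(augment_kg("Ibuprofen treats fever.", KG))
```

In section a the KG is read-only and the LLM consumes its facts; in section b the direction reverses and the language component writes into the KG. Section c would chain the two, feeding the augmented KG back into later prompts.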

### Key Observations

1. **Bidirectional Dependency**:
   - KGs provide LLMs with factual grounding, reducing hallucinations.
   - LLMs enable KGs to process and adapt to unstructured data.

2. **Knowledge Representation**:
   - The orange arrows in section c emphasize iterative knowledge refinement, suggesting a feedback loop for continuous improvement.

3. **Task Specialization**:
   - KGs excel in structured, domain-specific tasks (e.g., medical diagnoses).
   - LLMs excel in language understanding and generalization (e.g., chatbots).
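
The factual-grounding idea in the first observation can be illustrated by checking claimed facts against a KG. The sketch below assumes claims have already been reduced to `(subject, relation, object)` tuples; the `check_claims` helper and the toy facts are hypothetical. Note that a claim missing from the KG is only unsupported, not necessarily false, because a KG is rarely complete.

```python
# Toy KG as a set of (subject, relation, object) tuples; facts are placeholders.
KG = {
    ("aspirin", "treats", "headache"),
    ("aspirin", "interacts_with", "warfarin"),
}


def check_claims(claims, kg):
    """Split claimed triples into those found in the KG and those not found."""
    supported = [c for c in claims if c in kg]
    unsupported = [c for c in claims if c not in kg]
    return supported, unsupported


# Hypothetical claims, as if parsed from a model's answer.
claims = [
    ("aspirin", "treats", "headache"),
    ("aspirin", "treats", "insomnia"),
]
supported, unsupported = check_claims(claims, KG)
print("supported:", supported)
print("needs review:", unsupported)
```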

### Interpretation

The diagram presents LLMs and KGs as complementary, each compensating for a weakness of the other:

- **For LLMs**: KGs mitigate limitations like factual inaccuracies by anchoring outputs in verified knowledge.

- **For KGs**: LLMs enhance flexibility, allowing KGs to evolve with new, unstructured data (e.g., social media trends).

- **Synergy**: The cyclical relationship in section c points toward hybrid AI systems that balance structured reasoning (KGs) with adaptive learning (LLMs).

**Notable Insight**: The diagram contains no numerical data. It is a conceptual framework that describes architectural relationships and design principles, not performance benchmarks.