## Neural Network Architecture Diagram: Dueling DQN with Auxiliary Tasks

### Overview

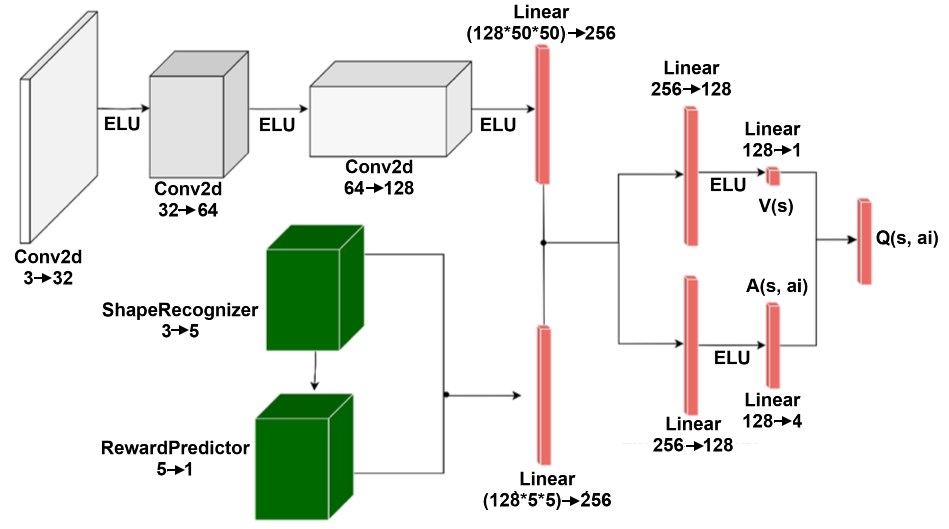

The image displays a detailed architectural diagram of a deep neural network designed for reinforcement learning. It combines a convolutional neural network (CNN) feature extractor with auxiliary modules for shape recognition and reward prediction, culminating in a Dueling Deep Q-Network (DQN) structure that outputs action-values Q(s, aᵢ). The flow proceeds from left to right, with visual input processed by the CNN and auxiliary tasks processed in parallel before their features are integrated.

### Components

The diagram is composed of interconnected blocks representing layers and modules, with arrows indicating data flow. Key components are color-coded:

* **Gray 3D Blocks**: Convolutional layers (Conv2d).

* **Green 3D Blocks**: Auxiliary task modules (ShapeRecognizer, RewardPredictor).

* **Red Vertical Bars**: Fully connected (Linear) layers.

* **Text Labels**: Layer names, input/output dimensions, and activation functions (ELU) are placed adjacent to their respective components.

**Spatial Layout:**

* **Top-Left to Top-Center**: The main CNN feature extraction branch.

* **Bottom-Left**: The auxiliary task branch (ShapeRecognizer and RewardPredictor).

* **Center-Right**: The integration point and subsequent Dueling network architecture (Value and Advantage streams).

* **Far-Right**: The final output node.

### Detailed Analysis

**1. Main CNN Branch (Top, Gray Blocks):**

* **Input**: Implicitly an image (3 channels, e.g., RGB).

* **Layer 1**: `Conv2d 3→32` followed by `ELU` activation. Output is a 32-channel feature map.

* **Layer 2**: `Conv2d 32→64` followed by `ELU` activation. Output is a 64-channel feature map.

* **Layer 3**: `Conv2d 64→128` followed by `ELU` activation. Output is a 128-channel feature map.

* **Flatten & Linear**: The output is flattened and passed to a `Linear (128*50*50)→256` layer. This suggests the spatial dimensions of the final convolutional feature map are 50x50. The output is a 256-dimensional vector.
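To make the size of this projection concrete, the arithmetic behind the `Linear (128*50*50)→256` label can be worked out directly. This is a minimal sketch: the helper name `linear_param_count` is illustrative, and the bias term is an assumption, since the diagram does not say whether the layer has one.

```python
def linear_param_count(in_features: int, out_features: int, bias: bool = True) -> int:
    """Number of trainable parameters in a fully connected layer."""
    return in_features * out_features + (out_features if bias else 0)


# Values read from the diagram label `Linear (128*50*50)→256`.
channels, height, width = 128, 50, 50
flat_features = channels * height * width

# Assumption: the layer includes a bias vector.
projection_params = linear_param_count(flat_features, 256)

print(flat_features)      # 320000
print(projection_params)  # 81920256
```

Under these assumptions, this single layer holds roughly 82 million parameters, so it would likely dominate the model's size.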

**2. Auxiliary Task Branch (Bottom, Green Blocks):**

* **ShapeRecognizer**: `3→5`. This module takes a 3-dimensional input (possibly shape descriptors or a small feature vector) and outputs a 5-dimensional representation.

* **RewardPredictor**: `5→1`. This module takes the 5-dimensional output from the ShapeRecognizer and predicts a scalar reward (1-dimensional output).

* **Feature Integration**: The auxiliary outputs are not used directly as final predictions in this diagram. Instead, a feature vector (implied to be derived from these modules) is passed to a `Linear (128*5*5)→256` layer. The input size, 128*5*5 = 3200, matches neither the 5-dimensional ShapeRecognizer output nor the scalar RewardPredictor output, which suggests the vector is a flattened 128-channel, 5x5 feature map that is then projected to 256 dimensions.
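The size labels in this branch can be checked for consistency with a few lines of code. The sketch below treats each labeled module as a hypothetical `(name, input size, output size)` triple and reports the first adjacent pair whose sizes do not chain. The helper `first_mismatch` is illustrative and says nothing about how the modules are built internally.

```python
from typing import List, Optional, Tuple

Layer = Tuple[str, int, int]  # (name, input size, output size)


def first_mismatch(layers: List[Layer]) -> Optional[Tuple[str, str]]:
    """Return the first adjacent pair whose sizes do not chain, or None."""
    for (name_a, _, out_a), (name_b, in_b, _) in zip(layers, layers[1:]):
        if out_a != in_b:
            return (name_a, name_b)
    return None


# Sizes as labeled in the diagram.
aux_modules = [("ShapeRecognizer", 3, 5), ("RewardPredictor", 5, 1)]
aux_with_projection = aux_modules + [("Linear", 128 * 5 * 5, 256)]

print(first_mismatch(aux_modules))          # None
print(first_mismatch(aux_with_projection))  # ('RewardPredictor', 'Linear')
```

The two auxiliary modules chain cleanly (3→5→1), but the scalar output cannot feed a 3200-input layer directly, so the projection presumably draws on some other intermediate feature map.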

**3. Feature Fusion & Dueling Architecture (Right, Red Bars):**

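* The 256-dimensional outputs from the **main CNN branch** and the **auxiliary branch** merge at a single point to form a combined feature vector. The diagram does not label the operation, but because the next layer expects 256 inputs, an element-wise sum is more consistent with the labels than concatenation, which would yield 512 dimensions.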

* This combined vector is passed through a shared `Linear 256→128` layer.

* The output then splits into two streams:

    * **Value Stream V(s)**: `Linear 128→1` followed by `ELU`. Outputs a scalar state-value V(s).

    * **Advantage Stream A(s, aᵢ)**: `Linear 128→4` followed by `ELU`. Outputs a 4-dimensional advantage vector, suggesting there are 4 possible actions (aᵢ).

* **Final Output Q(s, aᵢ)**: The value and advantage streams are combined (typically as Q(s,a) = V(s) + A(s,a) - mean(A(s,a'))) to produce the final action-value output `Q(s, aᵢ)`, represented by a single red bar.
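The fusion and aggregation steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions: the merge is taken to be an element-wise sum (the diagram does not label it), the aggregation is the mean-subtracted dueling formula quoted above, and the function names `fuse_sum` and `dueling_q` are hypothetical.

```python
from typing import List


def fuse_sum(a: List[float], b: List[float]) -> List[float]:
    """Element-wise sum; keeps the dimensionality of the inputs."""
    if len(a) != len(b):
        raise ValueError("feature vectors must have the same length")
    return [x + y for x, y in zip(a, b)]


def dueling_q(value: float, advantages: List[float]) -> List[float]:
    """Q(s, a) = V(s) + A(s, a) - mean over a' of A(s, a')."""
    mean_adv = sum(advantages) / len(advantages)
    return [value + adv - mean_adv for adv in advantages]


# Placeholder 256-dimensional features from the two branches.
cnn_features = [0.0] * 256
aux_features = [0.0] * 256
print(len(fuse_sum(cnn_features, aux_features)))  # 256, fits `Linear 256→128`
print(len(cnn_features + aux_features))           # 512, list concatenation

# Example values for V(s) and the 4 advantages.
q_values = dueling_q(2.0, [1.0, 0.0, -1.0, 0.0])
print(q_values)  # [3.0, 2.0, 1.0, 2.0]
```

Subtracting the mean advantage makes the Q-values average to V(s), so the value and advantage streams are separately identifiable rather than interchangeable offsets.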

### Key Observations

1. **Hybrid Architecture**: The model integrates pure visual feature learning (CNN) with explicit auxiliary tasks (shape recognition, reward prediction). This is a form of multi-task or auxiliary-task learning, often used to improve representation learning and sample efficiency in reinforcement learning.

2. **Dueling DQN Structure**: The clear separation into Value V(s) and Advantage A(s, aᵢ) streams is the hallmark of a Dueling DQN architecture, which can lead to more stable learning by separately estimating state values and action advantages.

3. **Dimensionality Flow**: The diagram labels the input and output size of each layer (e.g., `3→32`, `128*50*50→256`), so tensor shapes can be traced from input to output.

4. **Activation Function**: The Exponential Linear Unit (`ELU`) is the only activation function labeled in the diagram. It follows each convolutional layer and the `Linear 128→1` and `Linear 128→4` layers of the Value and Advantage streams.

5. **Action Space**: The advantage stream outputs 4 values (`Linear 128→4`), indicating the environment has a discrete action space of size 4.

### Interpretation

This diagram describes the network of a reinforcement learning agent. The deep CNN suggests an environment where visual perception is central. Including **ShapeRecognizer** and **RewardPredictor** as auxiliary tasks means the network is trained to recognize shapes and predict rewards alongside its main objective, which likely yields more robust and generalizable internal representations. This can speed up learning and improve performance, especially in environments with sparse rewards or visual complexity.
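Joint training with auxiliary tasks is commonly expressed as a weighted sum of losses. The sketch below is an assumption rather than something the diagram specifies: it treats the 5 ShapeRecognizer outputs as class logits trained with cross-entropy, trains the RewardPredictor with squared error, and uses hypothetical weights `shape_weight` and `reward_weight`. The TD loss of the Q-network is passed in as a plain number.

```python
import math
from typing import List


def cross_entropy(logits: List[float], target: int) -> float:
    """Softmax cross-entropy for one example, computed stably."""
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum_exp - logits[target]


def squared_error(prediction: float, target: float) -> float:
    """Squared difference between a prediction and its target."""
    return (prediction - target) ** 2


def total_loss(
    td_loss: float,
    shape_logits: List[float],
    shape_label: int,
    reward_pred: float,
    reward_target: float,
    shape_weight: float = 0.1,   # hypothetical weighting
    reward_weight: float = 0.1,  # hypothetical weighting
) -> float:
    """Main TD loss plus weighted auxiliary losses."""
    shape_loss = cross_entropy(shape_logits, shape_label)
    reward_loss = squared_error(reward_pred, reward_target)
    return td_loss + shape_weight * shape_loss + reward_weight * reward_loss


# Uniform logits over 5 assumed shape classes; reward off by 0.5.
loss = total_loss(1.0, [0.0] * 5, 2, 0.5, 1.0)
print(round(loss, 4))
```

Small auxiliary weights keep the Q-learning objective dominant while still letting the auxiliary gradients shape the shared features. Suitable values would have to be tuned for the task.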

The **Dueling DQN** head is a proven technique for improving value estimation. By learning which states are valuable regardless of the action (V(s)) and which actions offer the most advantage in a given state (A(s, aᵢ)), the agent can make more nuanced decisions. The final output `Q(s, aᵢ)` provides the estimated long-term return for taking each of the 4 possible actions in a given state, which the agent would use to select the best action.
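Selecting the best action from the four Q-values amounts to taking an argmax. A minimal sketch, with the function name `greedy_action` and the example values chosen purely for illustration:

```python
from typing import Sequence


def greedy_action(q_values: Sequence[float]) -> int:
    """Index of the largest Q-value; ties resolve to the lowest index."""
    return max(range(len(q_values)), key=lambda i: q_values[i])


print(greedy_action([0.2, 1.5, -0.3, 1.5]))  # 1
```

During training, an agent would typically mix this greedy choice with some exploration strategy, which the diagram does not cover.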

In summary, this is a purpose-built architecture for a visual reinforcement learning task that combines auxiliary tasks with dueling streams to improve learning efficiency and decision quality. The dimension labels cover most of what an implementation needs, although details not listed above, such as convolution kernel sizes and strides, would still have to be chosen.