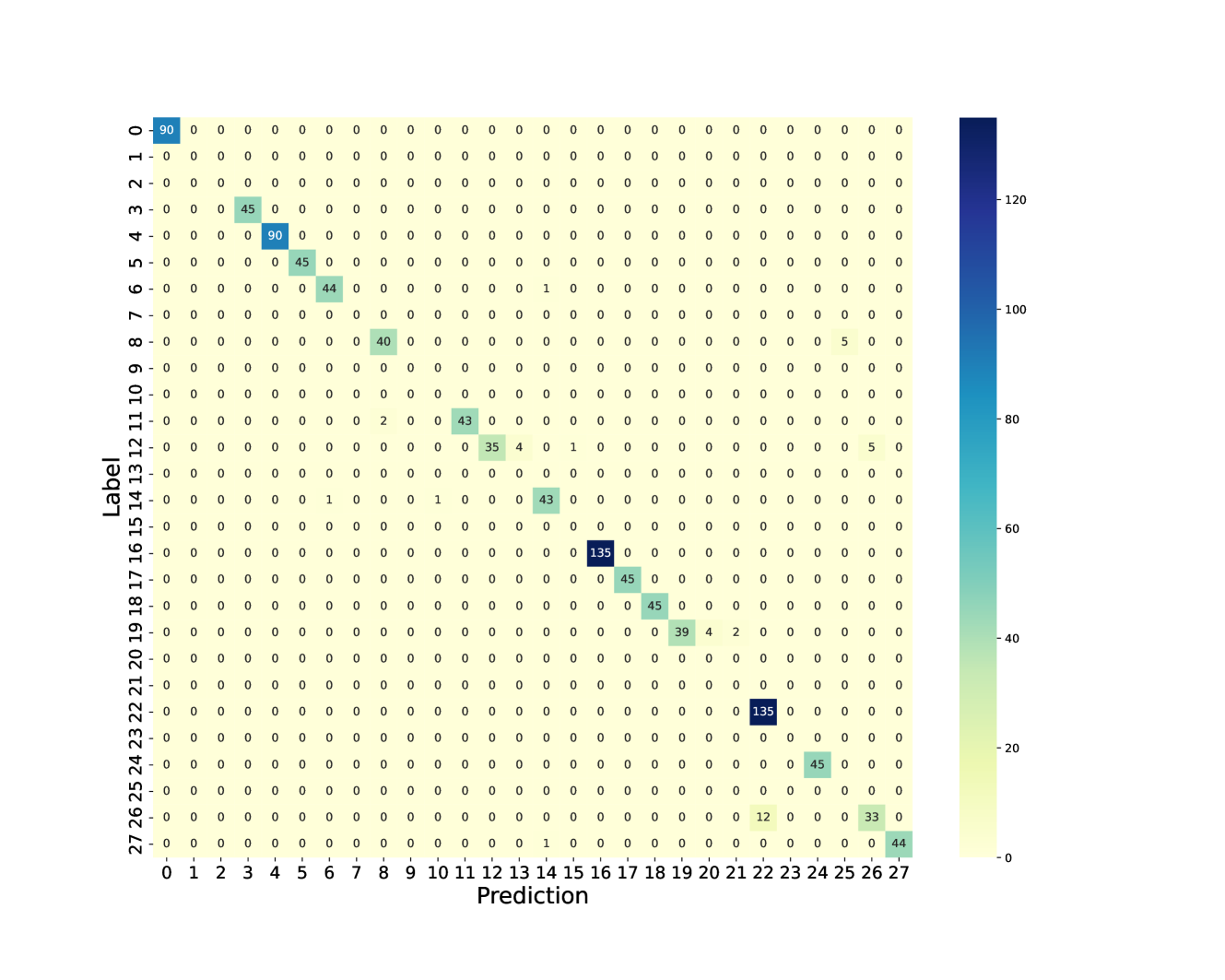

## Heatmap: Confusion Matrix for 28-Class Classification

### Overview

The image shows a confusion matrix rendered as a heatmap, a standard tool for evaluating the performance of a classification model. The matrix compares the true "Label" (ground truth) against the model's "Prediction" for 28 distinct classes, numbered 0 through 27. All text in the image is in English.

### Components/Axes

* **Y-Axis (Vertical):** Labeled "Label". It lists the true class identifiers from 0 at the top to 27 at the bottom.

* **X-Axis (Horizontal):** Labeled "Prediction". It lists the predicted class identifiers from 0 on the left to 27 on the right.

* **Color Scale/Legend:** A vertical color bar is positioned on the right side of the chart. It maps numerical values within the matrix cells to a color gradient.

* **Scale Range:** 0 to roughly 135. The largest cell value (135) lies above the top labeled tick of 120.

* **Color Gradient:** Transitions from a pale yellow (low values, ~0) through teal and cyan to a deep blue (high values, ~135).

* **Tick Marks:** The bar has labeled tick marks at 0, 20, 40, 60, 80, 100, and 120.

* **Matrix Grid:** A 28x28 grid of cells. Each cell's color corresponds to the count of samples where the true class was the row label and the predicted class was the column label. The numerical count is also printed inside each cell.
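The row/column convention described above (row = true label, column = prediction) can be illustrated with a minimal sketch. The function name `confusion_matrix` and the three-class toy data are assumptions made for illustration; they are not taken from the figure or from any particular library.

```python
def confusion_matrix(labels, predictions, num_classes):
    """Count samples for every (true label, predicted label) pair."""
    matrix = [[0] * num_classes for _ in range(num_classes)]
    for true_cls, pred_cls in zip(labels, predictions):
        # Row index = true label, column index = prediction.
        matrix[true_cls][pred_cls] += 1
    return matrix


# Hypothetical toy data with 3 classes (not from the figure).
labels = [0, 0, 1, 1, 2, 2, 2]
predictions = [0, 0, 1, 2, 2, 2, 0]

cm = confusion_matrix(labels, predictions, num_classes=3)
for row in cm:
    print(row)
# [2, 0, 0]
# [0, 1, 1]
# [1, 0, 2]
```

Diagonal cells (`cm[i][i]`) count correct predictions; every other cell counts one specific kind of error.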

### Detailed Analysis

The matrix is predominantly filled with zeros (pale yellow cells): of the 784 cells, only 29 hold a non-zero count, so for most class pairs there were no samples. The non-zero values are concentrated along the main diagonal (from top-left to bottom-right), which represents correct classifications (where Label = Prediction).

**Non-Zero Data Points (True Label, Prediction, Count):**

* **Diagonal (Correct Predictions):**

* (0, 0): 90

* (3, 3): 45

* (4, 4): 90

* (5, 5): 45

* (6, 6): 44

* (8, 8): 40

* (11, 11): 43

* (12, 12): 35

* (14, 14): 43

* (16, 16): 135 (Highest value on the diagonal)

* (17, 17): 45

* (18, 18): 45

* (19, 19): 39

* (22, 22): 135 (Tied for highest value)

* (24, 24): 45

* (26, 26): 33

* (27, 27): 44

* **Off-Diagonal (Misclassifications):**

* (6, 13): 1

* (8, 25): 5

* (11, 9): 2

* (12, 13): 4

* (12, 15): 1

* (12, 25): 5

* (14, 8): 1

* (14, 10): 1

* (19, 20): 4

* (19, 21): 2

* (26, 22): 12

* (27, 14): 1
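The counts listed above can be cross-checked with a short script. This is a sketch that assumes the list is a complete and accurate transcription of the figure; the variable names are illustrative. It sums each row to get the number of true samples per class (the support), then derives the overall accuracy and the classes that never appear as a true label.

```python
from collections import defaultdict

# Correct predictions: class -> count, transcribed from the list above.
DIAGONAL = {0: 90, 3: 45, 4: 90, 5: 45, 6: 44, 8: 40, 11: 43, 12: 35, 14: 43,
            16: 135, 17: 45, 18: 45, 19: 39, 22: 135, 24: 45, 26: 33, 27: 44}

# Misclassifications: (true label, prediction) -> count.
OFF_DIAGONAL = {(6, 13): 1, (8, 25): 5, (11, 9): 2, (12, 13): 4, (12, 15): 1,
                (12, 25): 5, (14, 8): 1, (14, 10): 1, (19, 20): 4, (19, 21): 2,
                (26, 22): 12, (27, 14): 1}

# Support = row total = correct + misclassified samples of each true class.
support = defaultdict(int)
for cls, count in DIAGONAL.items():
    support[cls] += count
for (true_cls, _pred_cls), count in OFF_DIAGONAL.items():
    support[true_cls] += count

total = sum(support.values())
correct = sum(DIAGONAL.values())
accuracy = correct / total
classes_without_samples = [c for c in range(28) if c not in support]

print(f"samples: {total}, correct: {correct}, accuracy: {accuracy:.4f}")
print("distinct row totals:", sorted(set(support.values())))
print("classes with no true samples:", classes_without_samples)
```

Under that assumption, the row totals take only three values (45, 90, and 135), and eleven of the 28 classes have no true samples at all.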

### Key Observations

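1. **Strong Diagonal Dominance:** Based on the counts listed above, 996 of 1,035 samples (about 96.2%) lie on the main diagonal, indicating high accuracy for most classes.

2. **Largest Diagonal Counts:** Classes 16 and 22 have the highest correct prediction counts (135 each), followed by classes 0 and 4 (90 each). These counts reflect class size as much as model quality. What marks these four classes as well classified is that their rows contain no off-diagonal entries, so every one of their samples is predicted correctly.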

3. **Specific Misclassification Patterns:**

* Class 12 is misclassified most often as class 25 (5 instances), then as class 13 (4 instances) and class 15 (1 instance), for 10 errors in total.

* Class 26 is misclassified as class 22 in 12 instances, the largest single off-diagonal count.

* Class 8 is misclassified as class 25 (5 instances).

* Class 19 shows minor confusion with class 20 (4 instances) and class 21 (2 instances).

4. **Sparse Errors:** Misclassifications are generally low-count (mostly 1-5 instances), except for the (26, 22) pair.

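5. **Class Imbalance:** The number of samples per class varies. Row totals (correct plus misclassified samples) take three values: 45, 90, and 135. The diagonal alone understates class size where errors occur; class 26, for example, has 33 correct predictions out of 45 samples. Eleven classes (1, 2, 7, 9, 10, 13, 15, 20, 21, 23, 25) have no true samples in the matrix.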
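The error patterns above are easier to compare as per-class recall (correct predictions divided by the class's row total). The sketch below assumes the listed counts are accurate and covers only the eight classes with at least one misclassification; the dictionary layout and names are illustrative.

```python
# true class -> (correct count, misclassified count), transcribed from the
# listed matrix entries. Classes without errors are omitted for brevity.
ROWS_WITH_ERRORS = {
    6: (44, 1), 8: (40, 5), 11: (43, 2), 12: (35, 10),
    14: (43, 2), 19: (39, 6), 26: (33, 12), 27: (44, 1),
}


def recall(correct, wrong):
    """Fraction of a class's true samples that were predicted correctly."""
    return correct / (correct + wrong)


per_class_recall = {cls: recall(*counts) for cls, counts in ROWS_WITH_ERRORS.items()}

# Lowest recall first: the classes the model handles worst.
ranked = sorted(per_class_recall.items(), key=lambda item: item[1])
for cls, value in ranked:
    print(f"class {cls:2d}: recall = {value:.3f}")
```

Classes 26 and 12 come out lowest (about 0.733 and 0.778), which matches the two largest error rows in the matrix.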

### Interpretation

This confusion matrix provides a detailed diagnostic view of a multi-class classifier's performance. The strong diagonal indicates the model has learned the primary features of most classes effectively. The off-diagonal elements reveal specific, systematic errors.

The most significant finding is that **class 26 is misclassified as class 22** in 12 of its 45 samples. The error is one-directional, since no class 22 sample is predicted as class 26. This suggests class 26 shares feature-space similarities with class 22 that the model cannot separate, and the larger class 22 may be absorbing the ambiguous cases. Similarly, the misclassification of **class 12 as classes 13 and 25** points to potential ambiguity in the defining characteristics of these groups. Classes 13 and 25 have no true samples in the matrix, so any prediction of them is necessarily an error.

The row totals (45, 90, or 135 samples per class) show that the evaluated set is imbalanced. If the training data follows a similar distribution, the imbalance may contribute to uneven per-class accuracy, but the matrix alone cannot confirm this: several 45-sample classes (3, 5, 17, 18, 24) are classified without error.

This analysis highlights which class pairs require further investigation, whether through collecting more distinct training data, feature engineering, or adjusting the model's loss function to penalize these specific errors more heavily. The matrix serves as a roadmap for targeted model improvement.
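One way to penalize specific errors more heavily is to add a pair-specific cost term to the usual cross-entropy loss. The following is a minimal sketch of that idea, not the method used to train the model behind the figure. The function `pair_penalized_loss`, the `PENALTIES` values, and the `weight` parameter are all hypothetical choices made for illustration.

```python
import math

# Hypothetical extra costs for the most frequent confusions in the matrix:
# (true class, wrongly predicted class) -> cost. The values are arbitrary.
PENALTIES = {(26, 22): 2.0, (12, 25): 1.0, (12, 13): 1.0}


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    norm = sum(exps)
    return [e / norm for e in exps]


def pair_penalized_loss(logits, true_cls, penalties, weight=1.0):
    """Cross-entropy plus a cost on probability mass assigned to flagged classes.

    For a sample of class `true_cls`, each flagged pair (true_cls, j) adds
    cost * p[j], so the model is pushed harder away from that confusion.
    """
    probs = softmax(logits)
    cross_entropy = -math.log(probs[true_cls])
    extra = sum(cost * probs[pred_cls]
                for (t, pred_cls), cost in penalties.items()
                if t == true_cls)
    return cross_entropy + weight * extra


# With uniform logits over 28 classes, a class 26 sample incurs a higher loss
# than a class 0 sample, because mass on class 22 is penalized for class 26.
uniform_logits = [0.0] * 28
loss_class_26 = pair_penalized_loss(uniform_logits, 26, PENALTIES)
loss_class_0 = pair_penalized_loss(uniform_logits, 0, PENALTIES)
print(loss_class_26 > loss_class_0)  # True
```

In practice the cost values would need tuning on a validation set, since overly large penalties can shift errors onto other class pairs.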