TECHNICAL ASSET FINGERPRINT

a5e894f97f77d0fe24c0e252


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


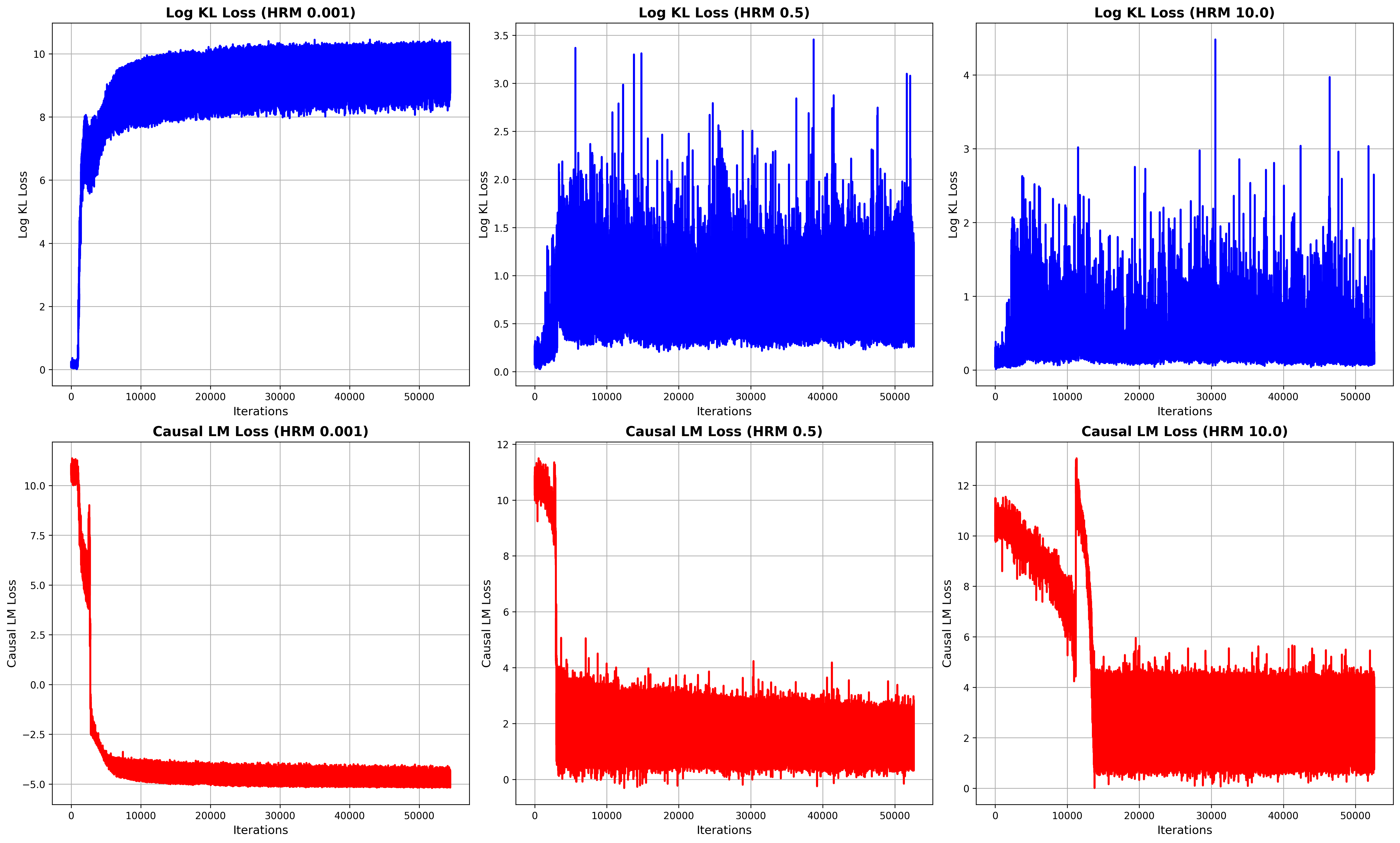

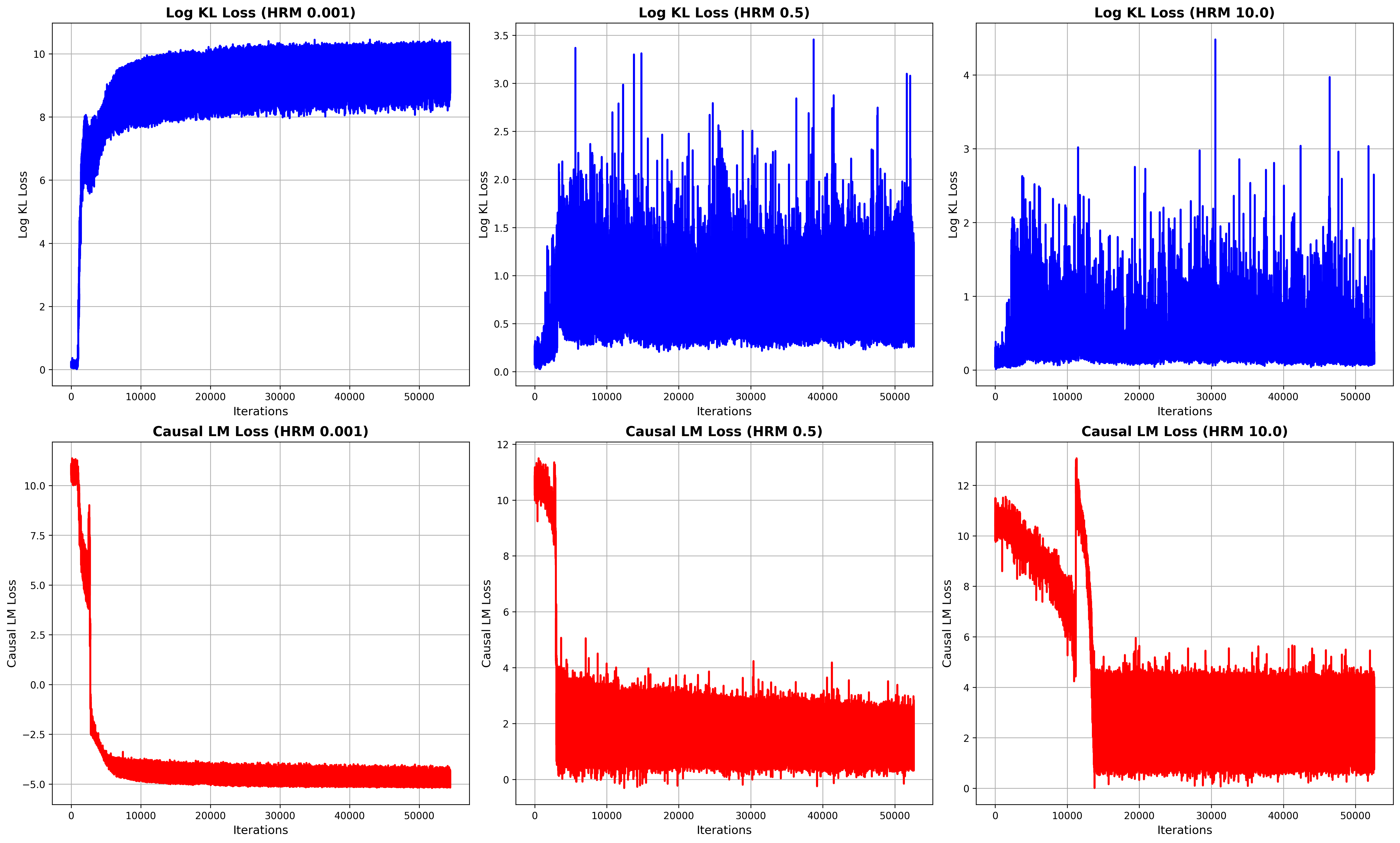

## Loss Curves for Log KL and Causal LM with Varying HRM Values

### Overview

The image presents six line charts arranged in a 2x3 grid. The top row plots "Log KL Loss" against "Iterations" for three HRM values: 0.001, 0.5, and 10.0. (This description expands HRM as "Hyperparameter Ratio Metric," but the chart titles give only the acronym.) The bottom row mirrors this structure for "Causal LM Loss." The x-axis, "Iterations," is the same in all charts and runs from 0 to 50000. The y-axis scale varies with the loss type and HRM value.

### Components/Axes

* **Titles:**

* Top-left: "Log KL Loss (HRM 0.001)"

* Top-middle: "Log KL Loss (HRM 0.5)"

* Top-right: "Log KL Loss (HRM 10.0)"

* Bottom-left: "Causal LM Loss (HRM 0.001)"

* Bottom-middle: "Causal LM Loss (HRM 0.5)"

* Bottom-right: "Causal LM Loss (HRM 10.0)"

* **X-axis:**

* Label: "Iterations"

* Range: 0 to 50000, with gridlines roughly every 10000 iterations.

* **Y-axis (Log KL Loss):**

* Label: "Log KL Loss"

* Top-left: Range 0 to 10, with gridlines at intervals of 2.

* Top-middle: Range 0.0 to 3.5, with gridlines at intervals of 0.5.

* Top-right: Range 0 to 4, with gridlines at intervals of 1.

* **Y-axis (Causal LM Loss):**

* Label: "Causal LM Loss"

* Bottom-left: Range -5.0 to 10.0, with gridlines at intervals of 2.5.

* Bottom-middle: Range 0 to 12, with gridlines at intervals of 2.

* Bottom-right: Range 0 to 12, with gridlines at intervals of 2.

* **Data Series:**

* Log KL Loss: Blue line

* Causal LM Loss: Red line

### Detailed Analysis

**Top Row: Log KL Loss**

* **Log KL Loss (HRM 0.001):**

* Trend: The blue line starts near 0, rapidly increases to approximately 8 by 10000 iterations, and then plateaus with minor fluctuations around 8-10 for the remaining iterations.

* Data Points: Initial value ~0, peak value ~10, plateau value ~8.

* **Log KL Loss (HRM 0.5):**

* Trend: The blue line starts near 0, increases to approximately 1.5 by 5000 iterations, and then fluctuates significantly between 0 and 3.5 for the remaining iterations.

* Data Points: Initial value ~0, peak value ~3.5, average value ~1.

* **Log KL Loss (HRM 10.0):**

* Trend: The blue line starts near 0, increases to approximately 1 by 5000 iterations, and then fluctuates significantly between 0 and 4 for the remaining iterations.

* Data Points: Initial value ~0, peak value ~4, average value ~1.

**Bottom Row: Causal LM Loss**

* **Causal LM Loss (HRM 0.001):**

* Trend: The red line starts at approximately 11, rapidly decreases to approximately -4 by 10000 iterations, and then plateaus with minor fluctuations around -4 to -5 for the remaining iterations.

* Data Points: Initial value ~11, minimum value ~-5, plateau value ~-4.

* **Causal LM Loss (HRM 0.5):**

* Trend: The red line starts at approximately 12, rapidly decreases to approximately 4 by 5000 iterations, and then fluctuates significantly between 0 and 6 for the remaining iterations.

* Data Points: Initial value ~12, average value ~3.

* **Causal LM Loss (HRM 10.0):**

* Trend: The red line starts at approximately 12, rapidly decreases to approximately 2 by 15000 iterations, and then fluctuates significantly between 0 and 4 for the remaining iterations.

* Data Points: Initial value ~12, average value ~2.
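The per-curve figures above (initial value, peak, plateau level, fluctuation range) were read by eye. Given the raw logged values, the same summary could be computed as in the minimal sketch below. It assumes a plain list of per-iteration losses and treats the last half of training as the plateau; both choices are illustrative and do not come from the charts. The name `summarize_curve` is hypothetical.

```python
from statistics import mean


def summarize_curve(losses, tail_fraction=0.5):
    """Summarize a logged loss curve by its start, extremes, and plateau.

    losses: per-iteration loss values (assumed input format).
    tail_fraction: share of the final values treated as the plateau
        (an illustrative choice, not taken from the charts).
    """
    if not losses:
        raise ValueError("empty loss curve")
    if not 0.0 < tail_fraction <= 1.0:
        raise ValueError("tail_fraction must be in (0, 1]")
    n_tail = max(1, int(len(losses) * tail_fraction))
    tail = losses[-n_tail:]  # the last n_tail logged values
    return {
        "initial": losses[0],
        "peak": max(losses),
        "minimum": min(losses),
        "plateau_mean": mean(tail),
        "plateau_range": (min(tail), max(tail)),
    }
```

For example, `summarize_curve([0.0, 4.0, 8.0, 9.0, 8.0, 10.0, 8.0, 9.0])` uses made-up toy values, not readings from the figure. It reports an initial value of 0.0, a peak of 10.0, and a plateau mean of 8.75 over the range 8.0 to 10.0.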

### Key Observations

* For Log KL Loss, a lower HRM value (0.001) results in a more stable and higher loss value after the initial increase. Higher HRM values (0.5 and 10.0) lead to more volatile loss curves.

* For Causal LM Loss, a lower HRM value (0.001) results in a more stable and lower loss value after the initial decrease. Higher HRM values (0.5 and 10.0) lead to more volatile loss curves.

* The "Iterations" axis is consistent across all plots, allowing for direct comparison of convergence speed and stability.

* The scales of the Y-axes differ, reflecting the different magnitudes of the loss values for each loss type and HRM value.
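The observations above call the curves "stable" or "volatile" without attaching a number. One common way to quantify this is the average standard deviation over a sliding window. This is an assumed measure, not the method behind these charts, and `rolling_std` and `volatility` are hypothetical helper names.

```python
from statistics import pstdev


def rolling_std(values, window):
    """Population standard deviation of each full sliding window."""
    if window < 2 or window > len(values):
        raise ValueError("window must be between 2 and len(values)")
    return [pstdev(values[i:i + window]) for i in range(len(values) - window + 1)]


def volatility(values, window):
    """Average rolling standard deviation; larger means a noisier curve."""
    stds = rolling_std(values, window)
    return sum(stds) / len(stds)
```

A rolling window measures local fluctuation. A single standard deviation over the whole run would instead be dominated by the large initial rise or drop. In practice, you would apply it to the post-transient part of the run, for example iterations 10000 onward.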

### Interpretation

The charts illustrate the impact of the HRM hyperparameter on the training dynamics of two loss functions: Log KL Loss and Causal LM Loss. A low HRM value (0.001) leads to more stable convergence for both losses, albeit to very different final values. Higher HRM values (0.5 and 10.0) make training more volatile, which may indicate instability or slower convergence. The choice of HRM therefore appears to be a critical factor in achieving stable, efficient training. If stability is the main criterion, HRM 0.001 looks preferable for this setup. However, it also produces the highest Log KL Loss and a negative Causal LM Loss, so stability alone may not make it the best choice.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Charts: Loss Curves for Different HRM Values

### Overview

The image presents six line charts of loss during training. Each chart shows one of two loss functions (Log KL Loss or Causal LM Loss) at one of three HRM values: 0.001, 0.5, and 10.0. (This description expands HRM as "Hidden Representation Masking," but the chart titles give only the acronym.) The x-axis shows the number of iterations, from 0 to 50000. The y-axis shows the loss value, with a different scale in each chart.

### Components/Axes

* **X-axis (all charts):** Iterations (0 to 50000)

* **Y-axis (Top Row):** Log KL Loss (scales vary)

* **Y-axis (Bottom Row):** Causal LM Loss (scales vary)

* **Titles:** Each chart has a title indicating the loss function and HRM value.

* Log KL Loss (HRM 0.001)

* Log KL Loss (HRM 0.5)

* Log KL Loss (HRM 10.0)

* Causal LM Loss (HRM 0.001)

* Causal LM Loss (HRM 0.5)

* Causal LM Loss (HRM 10.0)

* **Data Series:** Each chart contains a single blue (for Log KL Loss) or red (for Causal LM Loss) line representing the loss value over iterations.

### Detailed Analysis

**1. Log KL Loss (HRM 0.001):**

* Trend: The line starts at approximately 8.5 and decreases rapidly to around 1.0 within the first 1000 iterations. After that, it fluctuates around a value of approximately 1.0, with minor oscillations.

* Data Points (approximate):

* Iteration 0: Loss ≈ 8.5

* Iteration 1000: Loss ≈ 1.0

* Iteration 50000: Loss ≈ 1.0

**2. Log KL Loss (HRM 0.5):**

* Trend: The line starts at approximately 3.0 and decreases to around 0.5 within the first 1000 iterations. It then exhibits significant fluctuations, oscillating between approximately 0.5 and 3.0 throughout the remaining iterations.

* Data Points (approximate):

* Iteration 0: Loss ≈ 3.0

* Iteration 1000: Loss ≈ 0.5

* Iteration 50000: Loss ≈ 2.0

**3. Log KL Loss (HRM 10.0):**

* Trend: The line starts at approximately 4.0 and decreases to around 1.0 within the first 1000 iterations. It then fluctuates significantly, oscillating between approximately 1.0 and 4.0 throughout the remaining iterations.

* Data Points (approximate):

* Iteration 0: Loss ≈ 4.0

* Iteration 1000: Loss ≈ 1.0

* Iteration 50000: Loss ≈ 3.0

**4. Causal LM Loss (HRM 0.001):**

* Trend: The line starts at approximately 10.0 and decreases rapidly. It passes about -1.0 by iteration 1000, then settles around -5.0 and remains relatively stable there with minor fluctuations.

* Data Points (approximate):

* Iteration 0: Loss ≈ 10.0

* Iteration 1000: Loss ≈ -1.0

* Iteration 50000: Loss ≈ -5.0

**5. Causal LM Loss (HRM 0.5):**

* Trend: The line starts at approximately 12.0 and decreases to around 2.0 within the first 1000 iterations. It then fluctuates significantly, oscillating between approximately 2.0 and 10.0 throughout the remaining iterations.

* Data Points (approximate):

* Iteration 0: Loss ≈ 12.0

* Iteration 1000: Loss ≈ 2.0

* Iteration 50000: Loss ≈ 4.0

**6. Causal LM Loss (HRM 10.0):**

* Trend: The line starts at approximately 12.0 and decreases to around 2.0 within the first 1000 iterations. It then fluctuates significantly, oscillating between approximately 2.0 and 10.0 throughout the remaining iterations.

* Data Points (approximate):

* Iteration 0: Loss ≈ 12.0

* Iteration 1000: Loss ≈ 2.0

* Iteration 50000: Loss ≈ 6.0

### Key Observations

* Lower HRM values (0.001) generally lead to more stable loss curves, especially for Causal LM Loss.

* Higher HRM values (0.5 and 10.0) result in more volatile loss curves, indicating potentially unstable training dynamics.

* Log KL Loss appears to converge to a relatively stable value for HRM 0.001, while it continues to fluctuate for higher HRM values.

* Causal LM Loss exhibits a strong decreasing trend initially for all HRM values, but the stability varies significantly.
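Whether a curve "converges to a relatively stable value" can be made concrete. One way is to find the iteration after which the windowed mean stays within a tolerance of its final level. The sketch below assumes evenly spaced logged values. `settling_index`, the window size, and the tolerance are illustrative and do not come from the source.

```python
from statistics import mean


def settling_index(values, window, tolerance):
    """First start index from which every windowed mean stays within
    `tolerance` of the final windowed mean.

    A result close to len(values) - window means the curve never really
    settled at this tolerance.
    """
    if window < 1 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    if tolerance < 0:
        raise ValueError("tolerance must be non-negative")
    means = [mean(values[i:i + window]) for i in range(len(values) - window + 1)]
    target = means[-1]
    settled_from = len(means) - 1  # the final window is trivially settled
    # Walk backwards while the windowed mean stays near the final level.
    for i in range(len(means) - 2, -1, -1):
        if abs(means[i] - target) > tolerance:
            break
        settled_from = i
    return settled_from
```

On the toy sequence `[10.0, 6.0, 2.0, -4.0, -5.0, -4.0, -5.0, -4.0, -5.0]` with `window=2` and `tolerance=0.5`, it returns 3. A volatile trace, such as those described for HRM 0.5 or 10.0, would settle only near the very end, or only at a tolerance too loose to be meaningful.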

### Interpretation

The charts show how the HRM value affects the model's training dynamics. A very low HRM (0.001) appears to promote stable training, particularly for the Causal LM Loss, which converges. Increasing HRM introduces instability, as the fluctuating loss curves show. If HRM does control how much of the hidden representation is masked (the chart titles do not say), this would suggest that masking a larger portion makes optimization noisier and may hinder convergence. The differing behavior of Log KL Loss and Causal LM Loss suggests that the two losses respond differently to this setting. The rapid initial drop in Causal LM Loss at every HRM value indicates that the model quickly learns to predict the next token. The later fluctuations suggest that holding that accuracy becomes harder at higher HRM values. There may be an intermediate HRM value that balances the benefits of a higher HRM against training stability; further experiments would be needed to find it.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Line Charts: Training Loss Curves (2x3 Grid)

### Overview

The image displays a 2x3 grid of six line charts, visualizing two types of loss metrics over training iterations for three different values of a parameter labeled "HRM". The top row shows "Log KL Loss" (blue lines), and the bottom row shows "Causal LM Loss" (red lines). Each column corresponds to a specific HRM value: 0.001 (left), 0.5 (center), and 10.0 (right). All plots share the same x-axis representing training iterations.

### Components/Axes

**Common Elements:**

* **X-Axis (All Plots):** Labeled "Iterations". The scale runs from 0 to 50,000, with major tick marks at 0, 10000, 20000, 30000, 40000, and 50000.

* **Plot Titles:** Each subplot has a title indicating the loss type and HRM value.

* **Grid:** All plots have a light gray grid in the background.

**Top Row - Log KL Loss (Blue Lines):**

* **Y-Axis Label:** "Log KL Loss".

* **Plot 1 (Top-Left):** Title: "Log KL Loss (HRM 0.001)". Y-axis scale: 0 to 10, with ticks at 0, 2, 4, 6, 8, 10.

* **Plot 2 (Top-Center):** Title: "Log KL Loss (HRM 0.5)". Y-axis scale: 0.0 to 3.5, with ticks at 0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5.

* **Plot 3 (Top-Right):** Title: "Log KL Loss (HRM 10.0)". Y-axis scale: 0 to 4, with ticks at 0, 1, 2, 3, 4.

**Bottom Row - Causal LM Loss (Red Lines):**

* **Y-Axis Label:** "Causal LM Loss".

* **Plot 4 (Bottom-Left):** Title: "Causal LM Loss (HRM 0.001)". Y-axis scale: -5.0 to 10.0, with ticks at -5.0, -2.5, 0.0, 2.5, 5.0, 7.5, 10.0.

* **Plot 5 (Bottom-Center):** Title: "Causal LM Loss (HRM 0.5)". Y-axis scale: 0 to 12, with ticks at 0, 2, 4, 6, 8, 10, 12.

* **Plot 6 (Bottom-Right):** Title: "Causal LM Loss (HRM 10.0)". Y-axis scale: 0 to 12, with ticks at 0, 2, 4, 6, 8, 10, 12.

### Detailed Analysis

**Trends and Data Points:**

1. **Log KL Loss (HRM 0.001):**

* **Trend:** The blue line shows a very rapid, near-vertical increase from near 0 at iteration 0 to approximately 8 by iteration ~2000. It then continues to rise more gradually, reaching a plateau between ~8 and ~10.5 from iteration ~10,000 onward. The line exhibits high-frequency noise/variance throughout the plateau.

* **Key Values:** Starts ~0. Rapid rise to ~8 (iter ~2k). Plateau range: ~8 to ~10.5 (iter 10k-50k).

2. **Log KL Loss (HRM 0.5):**

* **Trend:** The blue line starts near 0, rises quickly to a range of ~0.3 to ~1.5 by iteration ~5000. It then enters a highly volatile phase with frequent, large upward spikes. The baseline of the signal appears to slowly increase from ~0.3 to ~0.5 over the course of training, while spikes regularly reach between 2.0 and 3.5.

* **Key Values:** Initial rise to ~0.3-1.5 (iter ~5k). Volatile baseline: ~0.3 to ~0.5. Prominent spikes: multiple instances reaching 2.5-3.5.

3. **Log KL Loss (HRM 10.0):**

* **Trend:** Similar volatile pattern to HRM 0.5, but with a different scale. The blue line starts near 0, rises to a noisy band between ~0.1 and ~1.0 by iteration ~5000. It continues with extreme volatility, featuring sharp spikes. The baseline appears lower than the HRM 0.5 case, but the spikes are very pronounced.

* **Key Values:** Initial rise to ~0.1-1.0 (iter ~5k). Volatile baseline: ~0.1 to ~0.5. Major spikes: several reaching 3.0-4.0.

4. **Causal LM Loss (HRM 0.001):**

* **Trend:** The red line starts at a high value (~10-11) and undergoes a dramatic, steep decline within the first ~5000 iterations, dropping below 0. It continues to decrease, stabilizing in a negative range. From iteration ~10,000 onward, it forms a dense, noisy band centered around approximately -4.5 to -5.0.

* **Key Values:** Starts ~10-11. Sharp drop to <0 by iter ~5k. Stable negative plateau: dense band from ~-4.0 to ~-5.5 (iter 10k-50k).

5. **Causal LM Loss (HRM 0.5):**

* **Trend:** The red line starts high (~10-11), drops sharply within the first ~5000 iterations to a range of ~0 to ~4. After this initial drop, it stabilizes into a noisy, horizontal band. The band's center appears to be around 2.0, with fluctuations mostly between 0 and 4.

* **Key Values:** Starts ~10-11. Sharp drop to ~0-4 by iter ~5k. Stable noisy band: ~0 to ~4, centered near 2.0 (iter 5k-50k).

6. **Causal LM Loss (HRM 10.0):**

* **Trend:** The red line starts high (~10-11). It shows an initial decline, but then exhibits a significant upward spike around iteration ~12,000, reaching near 13. Following this spike, it drops again and stabilizes into a noisy band. This final band is positioned higher than the HRM 0.5 case, centered around 4.0, with fluctuations mostly between 0 and 6.

* **Key Values:** Starts ~10-11. Spike to ~13 (iter ~12k). Stable noisy band after spike: ~0 to ~6, centered near 4.0 (iter ~15k-50k).
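The descriptions above distinguish a noisy baseline from occasional large spikes. A simple, robust way to separate the two is to flag points that sit far above the median, measured in units of the median absolute deviation (MAD). The sketch below is an assumed approach. It uses a hypothetical `find_spikes` helper and an arbitrary threshold of `k=5.0`.

```python
from statistics import median


def find_spikes(values, k=5.0):
    """Indices of points more than k MADs above the median.

    The median tracks the noisy baseline, and the median absolute deviation
    (MAD) measures its spread without being inflated by the spikes.
    """
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # Degenerate case: most points are identical, so anything above counts.
        return [i for i, v in enumerate(values) if v > med]
    return [i for i, v in enumerate(values) if (v - med) / mad > k]
```

Take the toy series `[0.4, 0.5, 0.3, 0.5, 3.2, 0.4, 0.5, 3.5, 0.4]`, whose illustrative values are loosely shaped like the HRM 0.5 Log KL trace but not read from it. For this series, `find_spikes` returns `[4, 7]`. The median and MAD replace the mean and standard deviation because the spikes would inflate the latter and could hide smaller spikes.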

### Key Observations

1. **HRM Parameter Impact:** The HRM value dramatically affects the behavior and final value of both loss types.

2. **Log KL Loss Behavior:** Lower HRM (0.001) leads to a high, stable (though noisy) Log KL Loss. Higher HRM values (0.5, 10.0) result in lower baseline loss but introduce extreme volatility and large spikes.

3. **Causal LM Loss Behavior:** Lower HRM (0.001) drives the Causal LM Loss to a negative value, which is unusual for a standard loss function. Higher HRM values keep the loss positive, with the final stable value increasing as HRM increases (from ~2.0 at HRM 0.5 to ~4.0 at HRM 10.0).

4. **Inverse Relationship:** There appears to be an inverse relationship between the two losses across the HRM spectrum. The configuration that minimizes Causal LM Loss (HRM 0.001) maximizes Log KL Loss, and vice versa.

5. **Training Dynamics:** All configurations show rapid change in the first 5,000-10,000 iterations before entering a more stable (though noisy) phase. The HRM 10.0 Causal LM Loss plot shows a notable instability (spike) later in training.

### Interpretation

This grid of charts likely visualizes the training dynamics of a machine learning model, possibly a variational autoencoder (VAE) or a similar model that optimizes a combined loss function containing both a Kullback-Leibler (KL) divergence term (Log KL Loss) and a language modeling (LM) term (Causal LM Loss). The "HRM" parameter appears to be a weighting coefficient that balances these two objectives.
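Suppose, as the paragraph above suggests, that the model is a VAE with a diagonal Gaussian posterior and a standard normal prior. This is an assumption; the charts do not say. In that case the KL term has a well-known closed form, `0.5 * sum(mu**2 + sigma**2 - 1 - log(sigma**2))`. A minimal sketch follows; the function name is hypothetical.

```python
import math


def gaussian_kl_to_standard_normal(mu, log_var):
    """KL(N(mu, diag(sigma^2)) || N(0, I)) in nats, summed over dimensions.

    mu, log_var: per-dimension posterior means and log-variances, as a
    VAE encoder would typically output them (assumed input format).
    """
    if len(mu) != len(log_var):
        raise ValueError("mu and log_var must have the same length")
    return sum(
        0.5 * (m * m + math.exp(lv) - 1.0 - lv) for m, lv in zip(mu, log_var)
    )
```

The term is zero exactly when every dimension has mean 0 and variance 1, that is, when the posterior equals the prior. This is the posterior-collapse regime. Large KL values mean the posterior has moved far from the prior.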

The data demonstrates a classic **trade-off** controlled by HRM:

* **Low HRM (0.001):** The model heavily prioritizes minimizing the Causal LM Loss (achieving very low, even negative values), likely at the expense of latent space regularization, so the KL divergence (Log KL Loss) grows large. This points to a poorly regularized latent space. It is the opposite of posterior collapse, in which the KL term shrinks toward zero because the posterior matches the prior.

* **High HRM (10.0):** The model prioritizes keeping the KL divergence low (better regularization), but this comes at the cost of a higher Causal LM Loss, meaning the model's primary generative or predictive performance may be worse. The high volatility in KL loss suggests instability in the regularization process.

* **Intermediate HRM (0.5):** Represents a middle ground, with moderate values for both losses.
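A toy objective can illustrate this trade-off. It assumes, as the interpretation above does, that HRM multiplies the KL term: total = lm + HRM * kl. The quadratic losses below are invented for illustration. With lm(t) = (t - 2)**2 and kl(t) = t**2, the minimizer is t* = 2 / (1 + HRM).

```python
def toy_optimum(hrm):
    """Minimize lm(t) + hrm * kl(t) for the invented losses
    lm(t) = (t - 2)**2 and kl(t) = t**2.

    Setting the derivative 2*(t - 2) + 2*hrm*t to zero gives t* = 2 / (1 + hrm).
    """
    if hrm < 0:
        raise ValueError("hrm must be non-negative")
    t = 2.0 / (1.0 + hrm)
    return {"t": t, "lm": (t - 2.0) ** 2, "kl": t ** 2}


for hrm in (0.001, 0.5, 10.0):
    result = toy_optimum(hrm)
    print(f"HRM {hrm}: lm={result['lm']:.2f}, kl={result['kl']:.2f}")
```

At HRM = 0.001, 0.5, and 10.0, the KL term at the optimum falls (about 3.99, 1.78, 0.03) while the LM term rises (about 0.00, 0.44, 3.31). This is the same direction as the trade-off described above. Real training losses are non-convex, so the toy shows only the direction of the effect, not its size.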

The negative Causal LM Loss for HRM 0.001 is a critical anomaly. The standard LM loss, token-level cross-entropy, is the negative log of a probability between 0 and 1, so it can never be negative. A negative value therefore points to one of three causes:

* a non-standard loss formulation;
* a logging error, such as plotting the negative of the loss or subtracting an offset;
* a loss reported relative to a reference model (e.g., a log-likelihood ratio), which goes negative whenever the model assigns the data a higher likelihood than the reference does.

Pinning this down requires inspecting the specific loss implementation.
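You can check the non-negativity argument directly, along with one way a logged LM loss could still go negative. The sketch below assumes a single token with a categorical model distribution. The reference-model comparison is one hypothetical explanation for a negative value, and both function names are illustrative.

```python
import math


def token_cross_entropy(probs, target):
    """Negative log-probability the model assigns to the target token.

    probs: a categorical distribution over the vocabulary (sums to 1).
    Because 0 < probs[target] <= 1, the result is always >= 0.
    """
    p = probs[target]
    if not 0.0 < p <= 1.0:
        raise ValueError("target probability must be in (0, 1]")
    return -math.log(p)


def log_ratio_to_reference(model_probs, ref_probs, target):
    """Model NLL minus reference NLL for the same token (hypothetical metric).

    Negative whenever the model gives the target a higher probability
    than the reference model does.
    """
    return token_cross_entropy(model_probs, target) - token_cross_entropy(ref_probs, target)
```

Take `model = [0.7, 0.2, 0.1]` and `ref = [0.4, 0.3, 0.3]` (made-up distributions) with target token 0. The cross-entropy is about 0.36 nats, while the log-ratio to the reference is about -0.56. The ratio is negative because the model gives the target a higher probability than the reference does.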

In summary, the charts provide a clear empirical map of how the HRM hyperparameter navigates the tension between model fit (Causal LM Loss) and latent space regularization (Log KL Loss), highlighting the instability and trade-offs inherent in training such models.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Graphs: Log KL Loss and Causal LM Loss Across HRM Values

### Overview

The image contains six line graphs comparing **Log KL Loss** (top row) and **Causal LM Loss** (bottom row) across three values of a hyperparameter labeled HRM: **0.001**, **0.5**, and **10.0**. Each graph tracks loss over **50,000 iterations**, and the trends differ clearly between HRM settings.

---

### Components/Axes

1. **X-Axis**: Labeled "Iterations" (0 to 50,000 in increments of 10,000).

2. **Y-Axis**:

- Top row: "Log KL Loss" (scale varies by panel, reaching about 10 in the HRM 0.001 panel).

- Bottom row: "Causal LM Loss" (linear scale, up to about 12; the HRM 0.001 panel extends below 0, to about -5).

3. **Legends**:

- Blue lines represent **Log KL Loss**.

- Red lines represent **Causal LM Loss**.

4. **Graph Titles**:

- Top row: "Log KL Loss (HRM X.X)".

- Bottom row: "Causal LM Loss (HRM X.X)".
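The label "Log KL Loss" is ambiguous. It could be the logarithm of a KL divergence plotted on a linear axis, or a KL value plotted on a logarithmic axis. Under the first reading (an assumption), the plotted quantity for two discrete distributions would be computed roughly as below. `categorical_kl` and `log_kl` are hypothetical helper names.

```python
import math


def categorical_kl(p, q):
    """KL(p || q) in nats for two discrete distributions over the same support."""
    if len(p) != len(q):
        raise ValueError("p and q must have the same length")
    total = 0.0
    for pi, qi in zip(p, q):
        if pi > 0.0:
            if qi == 0.0:
                return math.inf  # p puts mass where q has none
            total += pi * math.log(pi / qi)
    return total


def log_kl(p, q):
    """Natural log of KL(p || q); negative when KL < 1, undefined at KL = 0."""
    kl = categorical_kl(p, q)
    if kl == 0.0:
        raise ValueError("log KL is undefined when the distributions match")
    return math.log(kl)
```

Under this reading, log KL is negative whenever KL < 1 and undefined when KL = 0. For example, KL([0.6, 0.4] || [0.5, 0.5]) is about 0.020 nats, and its natural log is about -3.9.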

---

### Detailed Analysis

#### Log KL Loss (Top Row)

1. **HRM 0.001**:

- Line starts at ~10, drops sharply to ~8 within 1,000 iterations, then stabilizes near **8.5**.

- Minimal fluctuation after initial drop.

2. **HRM 0.5**:

- Line oscillates between **1.5** and **3.5** with frequent spikes.

- No clear convergence; high volatility.

3. **HRM 10.0**:

- Line starts at ~2, spikes to ~4, then fluctuates between **1.5** and **3.5**.

- Persistent instability with sharp peaks.

#### Causal LM Loss (Bottom Row)

1. **HRM 0.001**:

- Line starts at ~10, drops sharply to **-5** within 1,000 iterations, then stabilizes near **-5**.

- Sharp initial decline followed by flatline.

2. **HRM 0.5**:

- Line starts at ~10, drops to ~2 within 1,000 iterations, then fluctuates between **1.5** and **4**.

- Moderate volatility after initial drop.

3. **HRM 10.0**:

- Line starts at ~10, drops to ~4 within 1,000 iterations, then stabilizes near **4**.

- Sharp initial drop but higher baseline than lower HRM values.

---

### Key Observations

1. **Log KL Loss**:

- Lower HRM (0.001) shows rapid convergence and stability.

- Higher HRM (10.0) exhibits persistent instability with frequent spikes.

- HRM 0.5 balances between volatility and partial convergence.

2. **Causal LM Loss**:

- All HRM values show sharp initial declines, but lower HRM achieves deeper minima.

- Higher HRM (10.0) stabilizes at a higher loss value than lower HRM settings.

3. **Inverse Relationship**:

- Lower HRM correlates with higher final Log KL Loss but lower final Causal LM Loss.

- Higher HRM correlates with lower final Log KL Loss but higher final Causal LM Loss.
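A correlation coefficient can check the direction of the relationship between the two final losses across HRM settings. The sketch below uses the approximate final values from this description. For the volatile curves it takes the midpoint of the quoted range: 2.5 for Log KL at HRM 0.5 and 10.0, and 2.75 for Causal LM at HRM 0.5. These midpoints are an assumption, and `pearson` is a hypothetical helper.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (sx * sy)


# Approximate final values at HRM 0.001, 0.5, 10.0 (rough readings/midpoints).
final_log_kl = [8.5, 2.5, 2.5]
final_causal_lm = [-5.0, 2.75, 4.0]
r = pearson(final_log_kl, final_causal_lm)
print(f"r = {r:.2f}")
```

The result is strongly negative (about -0.99): the setting with the highest Log KL Loss has the lowest Causal LM Loss. With only three settings, this shows direction only and carries no statistical weight.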

---

### Interpretation

1. **HRM Impact on Training Dynamics**:

- **Low HRM (0.001)**: Yields a high but stable Log KL Loss and the lowest Causal LM Loss.

- **High HRM (10.0)**: Keeps Log KL Loss lower but spiky, at the cost of a higher Causal LM Loss.

- **Intermediate HRM (0.5)**: Falls between the two, with moderate values for both losses but no clear convergence.

2. **Loss Trade-offs**:

- The inverse relationship between Log KL and Causal LM Loss suggests a tension between staying close to a reference distribution (KL divergence) and predictive performance (Causal LM).

- Lower HRM values improve predictive fit (lower Causal LM Loss) at the cost of drifting further from the reference distribution (higher KL). Higher HRM values do the opposite.

3. **Anomalies**:

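- HRM 10.0 in Log KL Loss shows extreme spikes, indicating possible optimization instability.

- Causal LM Loss for HRM 0.001 reaches the lowest value (-5). A standard cross-entropy loss cannot be negative, so this value more likely reflects a non-standard loss formulation or logging convention. If the loss is non-standard, a low value may instead indicate over-specialization to the training objective.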

---

### Spatial Grounding

- **Legends**: Positioned in the top-right corner of each graph, matching line colors (blue for Log KL, red for Causal LM).

- **Axis Labels**: Centered on respective axes, with clear numerical increments.

- **Graph Titles**: Centered above each plot, explicitly stating HRM values.

---

### Conclusion

The data demonstrates that HRM values critically influence training stability and convergence behavior. Lower HRM settings give a stable but high Log KL Loss and the lowest Causal LM Loss. Higher HRM values lower the Log KL baseline but introduce volatility and raise Causal LM Loss. These trade-offs highlight the need for careful HRM tuning to balance alignment with the reference distribution against predictive performance.
