## Line Chart: LM Trailing Loss vs. Number of Hybrid Full Layers

### Overview

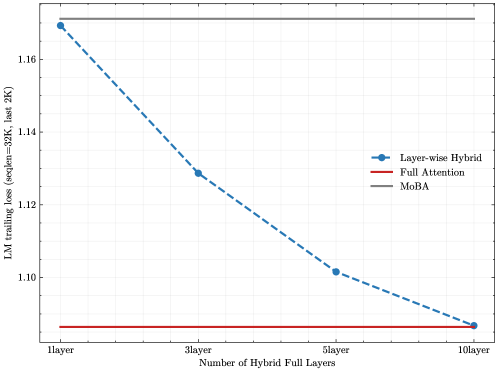

This chart displays the relationship between the number of Hybrid Full Layers and the LM trailing loss (seqlen=32K, last 2K). It compares the performance of "Layer-wise Hybrid", "Full Attention", and "MoBA" models. The chart shows a decreasing trend for the Layer-wise Hybrid model as the number of layers increases, while the other two models maintain relatively constant loss values.

### Components/Axes

* **X-axis:** Number of Hybrid Full Layers, with ticks at 1, 3, 5, and 10 layers.

* **Y-axis:** LM trailing loss (seqlen=32K, last 2K). Scale ranges from approximately 1.10 to 1.18.

* **Legend:** Located in the top-right corner.

    * Layer-wise Hybrid (Blue)

    * Full Attention (Red)

    * MoBA (Brown)

### Detailed Analysis

* **Layer-wise Hybrid (Blue Line):** The blue line slopes downward, indicating a decrease in loss as the number of Hybrid Full Layers increases.

    * At 1 layer: Approximately 1.175.

    * At 3 layers: Approximately 1.135.

    * At 5 layers: Approximately 1.105.

    * At 10 layers: Approximately 1.08.

* **Full Attention (Red Line):** The red line is nearly horizontal, indicating a relatively constant loss value.

    * Across all layer counts (1, 3, 5, 10): Approximately 1.07.

* **MoBA (Brown Line):** The brown line is also nearly horizontal, indicating a relatively constant loss value.

    * Across all layer counts (1, 3, 5, 10): Approximately 1.07.
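The readings above can be tabulated to make the trend easier to compare. The following is a minimal sketch, assuming the approximate values listed in this section; the names `hybrid_loss`, `baseline_loss`, `gap_to_baseline`, and `loss_drop_per_layer` are illustrative and not part of the original figure.

```python
# Approximate values read off the chart; these are estimates, not exact measurements.
hybrid_loss = {1: 1.175, 3: 1.135, 5: 1.105, 10: 1.08}
baseline_loss = 1.07  # Full Attention and MoBA both sit near this value


def gap_to_baseline(losses, baseline):
    """Return how far each Layer-wise Hybrid point sits above the baseline loss."""
    return {n: round(loss - baseline, 3) for n, loss in losses.items()}


def loss_drop_per_layer(losses):
    """Return the average loss reduction per added full layer between adjacent points."""
    points = sorted(losses.items())
    return {
        (n0, n1): round((l0 - l1) / (n1 - n0), 4)
        for (n0, l0), (n1, l1) in zip(points, points[1:])
    }


gaps = gap_to_baseline(hybrid_loss, baseline_loss)
drops = loss_drop_per_layer(hybrid_loss)

for n, gap in gaps.items():
    print(f"{n:>2} full layers: gap to baseline ~ {gap}")
for (n0, n1), drop in drops.items():
    print(f"{n0} -> {n1} layers: ~ {drop} loss reduction per added layer")
```

With these estimates, the gap to the two flat lines shrinks from about 0.105 at 1 layer to about 0.01 at 10 layers, and the reduction per added layer gets smaller at each step.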

### Key Observations

* The Layer-wise Hybrid model's loss falls from approximately 1.175 at 1 layer to approximately 1.08 at 10 layers, a reduction of roughly 0.095, suggesting improved performance with more Hybrid Full Layers.

* Both the Full Attention and MoBA models exhibit stable loss values, independent of the number of Hybrid Full Layers.

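* The Layer-wise Hybrid model starts with a higher loss than the other two models and approaches them as the number of Hybrid Full Layers increases. At 10 layers it sits at approximately 1.08, still slightly above their approximate 1.07.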

### Interpretation

The data suggests that increasing the number of Hybrid Full Layers in the Layer-wise Hybrid model substantially lowers LM trailing loss, from approximately 1.175 at 1 layer to approximately 1.08 at 10 layers, indicating better language modeling on the last 2K tokens of a 32K sequence. This implies that the hybrid configuration benefits from having more full layers. The Full Attention and MoBA lines stay near 1.07 at every x-axis position, which suggests they serve as reference levels that do not depend on the number of Hybrid Full Layers. The Layer-wise Hybrid model's higher loss at 1 layer shrinks steadily as full layers are added, with smaller gains at each step. Based on the approximate values read from the chart, the hybrid model does not drop below the other two within the tested range: at 10 layers it is still about 0.01 above them, so the trend is one of convergence toward the Full Attention and MoBA levels rather than outperforming them. The chart holds the sequence length fixed at 32K, so it supports no conclusion about sensitivity to sequence length.